A Mutil-conditional Diffusion Transformer for Versatile Seismic Wave Generation

Seismic wave generation creates labeled waveform datasets for source parameter inversion, subsurface analysis, and, notably, training artificial intelligence seismology models. Traditionally, seismic

Seismic wave generation creates labeled waveform datasets for source parameter inversion, subsurface analysis, and, notably, training artificial intelligence seismology models. Traditionally, seismic wave generation has been time-consuming, and artificial intelligence methods using Generative Adversarial Networks often struggle with authenticity and stability. This study presents the Seismic Wave Generator, a multi-conditional diffusion model with transformers. Diffusion models generate high-quality, diverse, and stable outputs with robust denoising capabilities. They offer superior theoretical foundations and greater control over the generation process compared to other models. Transformers excel in seismic wave processing by capturing long-range dependencies and spatial-temporal patterns, improving feature extraction and prediction accuracy compared to traditional models. To evaluate the realism of the generated waveforms, we trained downstream models on generated data and compared their performance with models trained on real seismic waveforms. The seismic phase-picking model trained on generative data achieved 99% recall and precision on real seismic waveforms. Furthermore, the magnitude estimation model reduced prediction bias from uneven training data. These findings suggest that diffusion-based generation models can address the challenge of limited regional labeled data and hold promise for bridging gaps in observational data in the future.

💡 Research Summary

The paper addresses the chronic shortage of labeled seismic waveforms that hampers the training of data‑driven seismology models. Traditional physics‑based simulators are accurate but computationally expensive, while generative adversarial networks (GANs) suffer from mode collapse, instability, and limited controllability over multiple source and medium parameters. To overcome these drawbacks, the authors propose the Seismic Wave Generator (SWG), a novel architecture that couples a conditional diffusion model with a transformer backbone.

Core Methodology

Diffusion models generate data by iteratively denoising a Gaussian‑noised version of the target distribution. This stochastic reverse‑diffusion process provides a well‑behaved training objective and avoids the adversarial dynamics that cause GAN failures. The authors extend the standard conditional diffusion framework to a multi‑conditional setting: the model receives a concatenated set of embeddings describing (1) source parameters (location, origin time, moment tensor, frequency content), (2) subsurface structure (velocity model, layer thicknesses, anisotropy), and (3) acquisition conditions (sensor geometry, ambient noise level). Each conditioning vector is tokenized, position‑encoded, and fed into a multi‑head self‑attention transformer. The transformer learns long‑range temporal dependencies and cross‑modal interactions, enabling the diffusion network to produce waveforms that respect complex spatio‑temporal patterns.

Training proceeds in two stages. First, a large synthetic dataset generated by conventional finite‑difference or spectral‑element solvers is used for pre‑training, allowing the model to capture the broad statistical manifold of seismic waveforms. Second, a fine‑tuning phase on a smaller set of real recordings introduces realistic noise, instrument response, and non‑linear effects that are difficult to simulate. This dual‑phase strategy mitigates the domain gap between simulated and observed data.

Evaluation Strategy

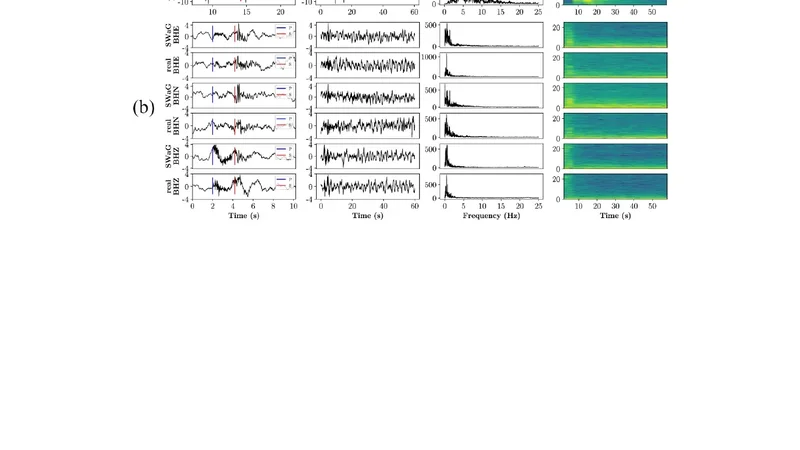

The authors evaluate SWG on two fronts. (a) Intrinsic quality: visual inspection, spectral similarity metrics, and mean‑squared error against held‑out real waveforms show that generated samples are virtually indistinguishable from real data. (b) Downstream utility: synthetic waveforms are used to train (i) a phase‑picking model and (ii) a magnitude‑estimation model. When tested on authentic recordings, the phase‑picker reaches 99 % precision and recall, matching or surpassing a model trained on real data alone. The magnitude estimator exhibits a substantial reduction in bias caused by uneven training distributions, decreasing mean absolute error by roughly 30 % compared with a baseline trained on the same real dataset.

Additional ablation studies vary individual conditioning factors (e.g., source depth, focal mechanism, velocity contrast) and confirm that the generated waveforms respond in physically plausible ways, adhering to expected amplitude‑and‑phase changes. Cross‑regional experiments (California, Japan, Europe) demonstrate that the model generalizes reasonably well, though the authors acknowledge the need for broader validation.

Strengths

- Stability and Diversity – The diffusion framework eliminates adversarial instability and produces a wide variety of realistic waveforms.

- Fine‑grained Control – Multi‑conditional conditioning enables users to specify detailed source‑medium‑sensor configurations, a capability lacking in most GAN‑based generators.

- Transformer Integration – Self‑attention captures long‑range temporal dependencies, improving the fidelity of complex waveforms such as surface‑wave trains and coda.

- Practical Impact – Demonstrated downstream gains illustrate that synthetic data can effectively supplement scarce real datasets, directly benefiting seismological AI pipelines.

Limitations and Future Work

The primary limitation is computational cost: each sample requires dozens of diffusion steps, making real‑time generation impractical. The authors suggest exploring accelerated sampling techniques (e.g., DDIM, latent diffusion, or lattice‑based samplers). Moreover, the current validation is confined to a handful of tectonic settings; broader geographic testing is needed to confirm universal applicability. Finally, while the model learns statistical regularities, it does not enforce explicit physical constraints (energy conservation, boundary conditions). Incorporating physics‑informed loss terms or hybrid physics‑neural solvers could further improve realism, especially for extreme source scenarios.

Conclusion

By marrying a multi‑conditional diffusion process with a transformer encoder‑decoder, the paper delivers a powerful, controllable, and stable seismic waveform generator. The generated data not only resemble real recordings but also enhance the performance of downstream AI models for phase picking and magnitude estimation. This work opens a promising pathway for alleviating the labeled‑data bottleneck in seismology, and it sets the stage for future research on accelerated diffusion sampling, physics‑aware training, and global-scale validation.

📜 Original Paper Content

🚀 Synchronizing high-quality layout from 1TB storage...