AS-ASR: A Lightweight Framework for Aphasia-Specific Automatic Speech Recognition

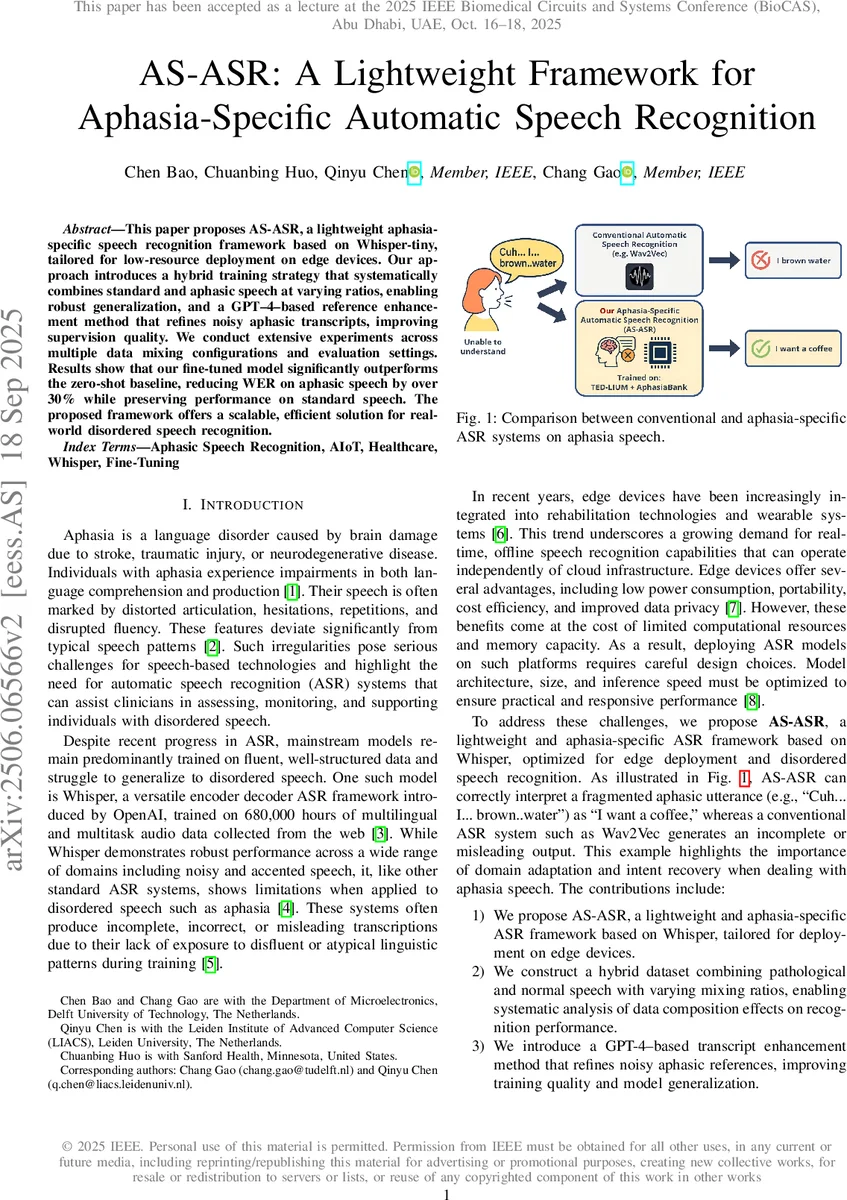

This paper proposes AS-ASR, a lightweight aphasia-specific speech recognition framework based on Whisper-tiny, tailored for low-resource deployment on edge devices. Our approach introduces a hybrid training strategy that systematically combines standard and aphasic speech at varying ratios, enabling robust generalization, and a GPT-4-based reference enhancement method that refines noisy aphasic transcripts, improving supervision quality. We conduct extensive experiments across multiple data mixing configurations and evaluation settings. Results show that our fine-tuned model significantly outperforms the zero-shot baseline, reducing WER on aphasic speech by over 30% while preserving performance on standard speech. The proposed framework offers a scalable, efficient solution for real-world disordered speech recognition.

💡 Research Summary

**

The paper introduces AS‑ASR, a lightweight, aphasia‑specific automatic speech recognition framework built on the Whisper‑tiny model, designed for deployment on resource‑constrained edge devices. Recognizing that mainstream ASR systems are trained on fluent, well‑structured speech and therefore struggle with the disfluent, fragmented, and hesitant patterns characteristic of aphasic speech, the authors propose a three‑pronged approach: (1) selecting Whisper‑tiny‑en (≈39 M parameters, ~1 GB VRAM) as the base architecture to ensure low latency and low power consumption on platforms such as the Jetson Nano; (2) constructing a hybrid training corpus that mixes pathological speech from AphasiaBank with standard speech from TED‑LIUM v2 in systematic ratios (10 %:90 % up to 90 %:10 %) while keeping the total number of segments constant (≈14 000) and using a consistent 80 %/10 %/10 % train/validation/test split; and (3) enhancing the quality of the aphasic transcripts using GPT‑4. The GPT‑4 enhancement pipeline removes non‑linguistic markup, then rewrites each utterance into fluent standard English without altering the intended meaning, thereby providing cleaner supervision for fine‑tuning while acknowledging the risk of over‑correction that could erase diagnostically relevant features.

Training is performed on a high‑performance GPU cluster (8 × NVIDIA RTX A6000) with mixed‑precision (fp16) and gradient checkpointing, using the HuggingFace Trainer, AdamW optimizer (lr = 5e‑5), and a linear learning‑rate scheduler for 10 epochs, batch size 16 per device. Evaluation relies on word error rate (WER). Two baselines are defined: Baseline‑1 (zero‑shot Whisper‑tiny‑en) and Baseline‑2 (average WER of Whisper‑tiny, Base, Small, Medium, and Large on the pure aphasia test set). Baseline‑2 shows that larger Whisper models achieve lower WER (Large = 0.622) but require prohibitive memory (≥10 GB) and are unsuitable for edge deployment. Whisper‑tiny‑en, despite a higher baseline WER (0.787), offers a practical trade‑off between size and performance.

Fine‑tuning Whisper‑tiny‑en on the hybrid dataset yields substantial gains. On the aphasia‑only test set, WER drops from 0.808/0.755 (dev/test) to 0.430/0.454, a reduction of more than 30 %. On the standard TED‑LIUM test set, performance remains essentially unchanged (0.119 → 0.116), demonstrating that the model does not sacrifice fluency recognition. On a merged test set combining both domains, WER improves from 0.515/0.498 to 0.162/0.170, indicating enhanced robustness across mixed acoustic conditions.

A systematic analysis of data mixing ratios reveals a clear trade‑off: increasing the proportion of aphasic data consistently lowers aphasia WER but gradually raises TED‑LIUM WER. Balanced configurations (e.g., 50 % aphasia / 50 % TED‑LIUM or 70 % aphasia / 30 % TED‑LIUM) achieve a practical compromise, maintaining acceptable performance on both pathological and fluent speech. This insight is crucial for real‑world clinical deployments where a system must handle a spectrum of speech qualities.

The authors conclude that AS‑ASR delivers a scalable, efficient solution for disordered speech recognition on edge hardware. Future work includes clinical validation of GPT‑4‑enhanced transcripts, design of specialized AIoT accelerators, and integration of real‑time LLM‑based correction interfaces, aiming to embed the framework into wearable rehabilitation and assistive devices for post‑stroke patients.

Comments & Academic Discussion

Loading comments...

Leave a Comment