DAVIS: Planning Agent with Knowledge Graph-Powered Inner Monologue

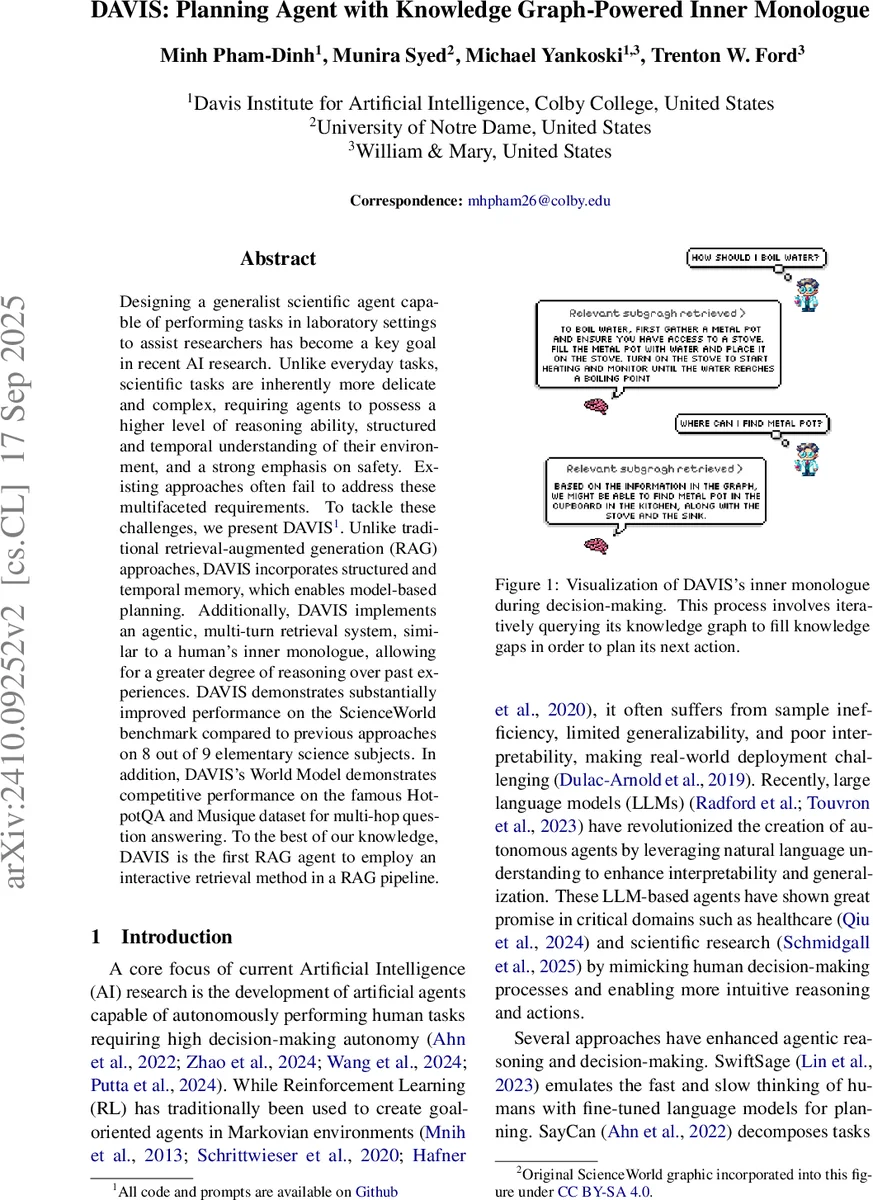

Designing a generalist scientific agent capable of performing tasks in laboratory settings to assist researchers has become a key goal in recent Artificial Intelligence (AI) research. Unlike everyday tasks, scientific tasks are inherently more delicate and complex, requiring agents to possess a higher level of reasoning ability, structured and temporal understanding of their environment, and a strong emphasis on safety. Existing approaches often fail to address these multifaceted requirements. To tackle these challenges, we present DAVIS. Unlike traditional retrieval-augmented generation (RAG) approaches, DAVIS incorporates structured and temporal memory, which enables model-based planning. Additionally, DAVIS implements an agentic, multi-turn retrieval system, similar to a human’s inner monologue, allowing for a greater degree of reasoning over past experiences. DAVIS demonstrates substantially improved performance on the ScienceWorld benchmark comparing to previous approaches on 8 out of 9 elementary science subjects. In addition, DAVIS’s World Model demonstrates competitive performance on the famous HotpotQA and MusiqueQA dataset for multi-hop question answering. To the best of our knowledge, DAVIS is the first RAG agent to employ an interactive retrieval method in a RAG pipeline.

💡 Research Summary

The paper introduces DAVIS, a novel planning agent designed for text‑based scientific environments such as the ScienceWorld laboratory simulator. Unlike conventional Retrieval‑Augmented Generation (RAG) agents that rely on a single, unstructured retrieval pass, DAVIS integrates a Temporal Knowledge Graph (TKG) as its internal World Model (WM) and employs a multi‑turn “inner monologue” that iteratively queries this graph to fill knowledge gaps before committing to an action.

Core Architecture

-

World Model (Temporal Knowledge Graph) – Every interaction (observation‑action‑next‑observation) is parsed using a large language model (LLM) together with Stanford CoreNLP coreference resolution. The parsed text is transformed into quadruple facts (subject, relation, object, timestamp) and incrementally added to a TKG. This graph preserves temporal ordering, enabling causal reasoning about how entities evolve over time—a crucial capability for scientific tasks where actions have delayed or compound effects.

-

Inner Monologue (Multi‑turn Retrieval‑Dialogue) – The agent maintains a running dialogue log (M_t) with its WM. At each planning step it summarizes its belief state (\hat b_t) via an LLM, then issues a series of targeted queries to the TKG. Retrieved sub‑graphs are fed back into the belief estimator and policy (\pi), allowing the agent to dynamically refine its understanding of the environment. This process mimics human reflective thinking: the agent asks itself “what do I still not know?” and searches the graph repeatedly until the knowledge gap is resolved.

-

Actor‑Critic Decision Loop – DAVIS frames the problem as a Partially Observable Markov Decision Process (POMDP). The actor translates high‑level plans generated by the WM into concrete textual actions for the environment. Simultaneously, the critic continuously compares the observed next state (o_{t+1}) with the WM‑predicted transition (\hat T), checking safety constraints and flagging discrepancies. If a violation is detected, an optional supervisor alert can be triggered.

Learning and Approximation

The belief state (\hat b_t) is approximated by a prompting function (f(\tau_{t’:t})) that extracts salient information from the trajectory history. Transition and reward models are also approximated using the TKG and the inner monologue context, yielding three learned functions: policy (\pi(a_t|\hat b_t,M_t)), transition (\hat T(o_{t+1}|\hat b_t,a_t,M_t)), and reward (\hat R(r_t|\hat b_t,a_t,M_t)). This enables model‑based planning without explicit environment dynamics, leveraging the LLM’s pattern‑recognition abilities to extrapolate future events from past graph patterns.

Experimental Evaluation

- ScienceWorld Benchmark: DAVIS was tested on nine elementary‑science subjects (physics, chemistry, biology, etc.). It outperformed four strong baselines—SwiftSage, ReAct, Reflexion, and RAP—on eight subjects, achieving 7–12 percentage‑point gains in task success rate. The advantage was most pronounced on tasks requiring multi‑step procedural reasoning and temporal dependencies.

- Multi‑hop QA Datasets: On HotpotQA and MusiqueQA, DAVIS’s TKG‑based graph QA combined with LLM reasoning matched or exceeded state‑of‑the‑art RAG systems, demonstrating that the same graph‑driven inner monologue can serve both planning and open‑domain question answering.

Contributions

- Introduction of a structured, temporal memory (TKG) that supports causal, multi‑hop reasoning in scientific domains.

- A novel inner‑monologue retrieval mechanism that allows the agent to iteratively self‑question and refine its world model, moving beyond static retrieval.

- An actor‑critic planning loop that integrates real‑time safety monitoring with model‑based predictions.

Limitations & Future Work

The construction of the TKG depends on LLM‑driven parsing and coreference resolution, which can propagate errors into the graph. The current implementation is limited to text‑only simulators; extending to multimodal or physical robotic platforms will require richer perception pipelines and possibly formal verification of safety constraints. Future research directions include improving automated triple extraction, scaling the TKG to multimodal knowledge sources, and integrating formal methods for safety guarantees.

In summary, DAVIS represents the first RAG‑based agent that couples a temporal knowledge graph with an interactive inner monologue, achieving superior planning, interpretability, and safety in complex scientific environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment