Exploring NLP Benchmarks in an Extremely Low-Resource Setting

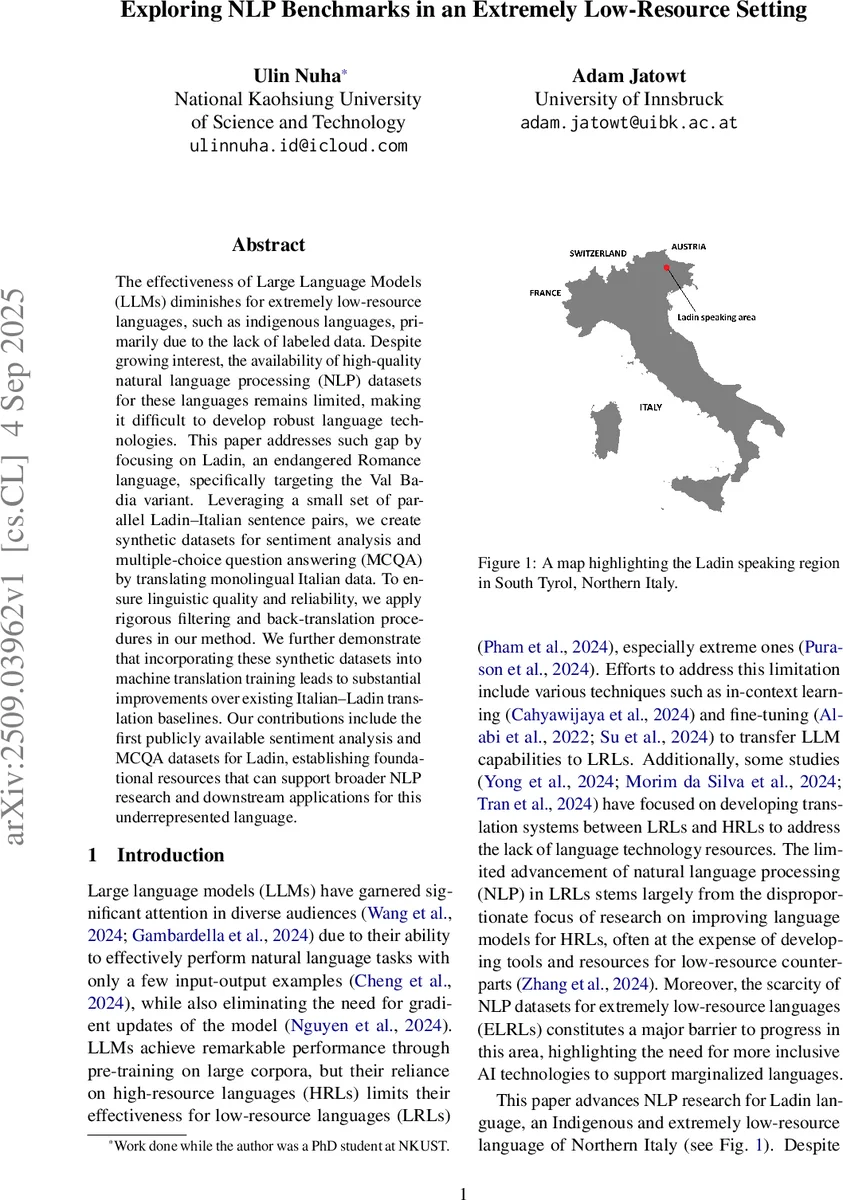

The effectiveness of Large Language Models (LLMs) diminishes for extremely low-resource languages, such as indigenous languages, primarily due to the lack of labeled data. Despite growing interest, the availability of high-quality natural language processing (NLP) datasets for these languages remains limited, making it difficult to develop robust language technologies. This paper addresses this gap by focusing on Ladin, an endangered Romance language, specifically targeting the Val Badia variant. Leveraging a small set of parallel Ladin-Italian sentence pairs, we create synthetic datasets for sentiment analysis and multiple-choice question answering (MCQA) by translating monolingual Italian data. To ensure linguistic quality and reliability, we apply rigorous filtering and back-translation procedures. We further demonstrate that incorporating these synthetic datasets into machine translation training leads to substantial improvements over existing Italian-Ladin translation baselines. Our contributions include the first publicly available sentiment analysis and MCQA datasets for Ladin, establishing foundational resources that can support broader NLP research and downstream applications for this underrepresented language.

💡 Research Summary

This paper tackles the chronic data scarcity that hampers natural language processing (NLP) research for extremely low‑resource languages (ELRLs), using Ladin, an endangered Romance language, as a case study and focusing on its Val Badia variant, spoken in South Tyrol. The authors begin by using an existing Italian‑Ladin parallel corpus (18,139 sentence pairs) to build and evaluate several machine translation (MT) systems. They experiment with large language models (LLMs) such as LLaMA‑3.1 (8B and 70B parameters) and GPT‑4o, applying both few‑shot prompting and LoRA‑based fine‑tuning, and with the multilingual seq2seq models NLLB‑200‑1.3B and mBART‑large‑50. To adapt the seq2seq models to Ladin, a language‑specific tag token is added to the tokenizer. Performance is measured with SacreBLEU, chrF++, and ROUGE on a three‑part test set that covers formal/legal, stylistic/cultural, and narrative/idiomatic domains.

Having identified the best‑performing MT model (the one achieving the highest BLEU on the held‑out test set), the authors use it to generate synthetic Ladin data for two downstream tasks: sentiment analysis (SA) and multiple‑choice question answering (MCQA). For SA they start from a large Italian TripAdvisor review dataset (originally 41,077 entries) and filter it to 30,712 reviews with a maximum length of 138 words (the third quartile). Grammar errors are corrected with GPT‑4. For MCQA they adopt an Italian dataset containing over 5,000 manually crafted questions, discarding items with only two or six answer options due to insufficient representation.
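The two dataset-preparation rules described above (capping review length at the third quartile, and dropping MCQA items with two or six options) can be sketched as follows. This is a minimal illustration, not the authors' code: the function names are hypothetical, whitespace splitting is assumed as the word-count method, and the "inclusive" quartile method is an arbitrary choice, since the summary does not say how the quartile was computed.

```python
import statistics


def word_count(text: str) -> int:
    """Count words by whitespace splitting (an assumed tokenization)."""
    return len(text.split())


def filter_by_third_quartile(reviews: list[str]) -> tuple[list[str], float]:
    """Keep reviews whose word count does not exceed the third quartile."""
    counts = [word_count(r) for r in reviews]
    # quantiles(..., n=4) returns the three quartile cut points; index 2 is Q3.
    q3 = statistics.quantiles(counts, n=4, method="inclusive")[2]
    kept = [r for r, c in zip(reviews, counts) if c <= q3]
    return kept, q3


def has_supported_option_count(options: list[str], excluded=(2, 6)) -> bool:
    """Return False for MCQA items with an under-represented option count."""
    return len(options) not in excluded


if __name__ == "__main__":
    toy_reviews = [" ".join(["parola"] * n) for n in range(1, 9)]
    kept, q3 = filter_by_third_quartile(toy_reviews)
    print(f"Q3 = {q3}, kept {len(kept)} of {len(toy_reviews)} reviews")
```

By construction, a cut at the third quartile keeps roughly three quarters of the data, which is consistent with the reported reduction from 41,077 to 30,712 reviews.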

The synthetic data creation pipeline consists of three main steps. First, the selected MT model translates the Italian SA and MCQA datasets into Ladin, producing a raw Ladin corpus (D_Lad). Second, a quality filter (Filtering I) based on LaBSE sentence embeddings retains only those translations whose cosine similarity with the original Italian sentence exceeds 0.68, a threshold derived from the average similarity of the authentic parallel corpus. Third, a back‑translation step re‑translates the Ladin sentences back into Italian (D′_It). A second filter (Filtering II) then discards any pair whose SacreBLEU and METEOR scores fall below the mean values observed on the authentic data. This double‑filtering strategy dramatically reduces semantic drift and ensures that the final synthetic parallel pairs are of comparable quality to the original resource.
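The two filtering stages can be sketched in plain Python as shown here. This is a hedged illustration under several assumptions: embeddings and back-translation scores are taken as precomputed inputs (in the paper they would come from LaBSE, SacreBLEU, and METEOR), the `Candidate` class and function names are hypothetical, and Filtering II is read as "keep a pair only if both scores reach the reference means", which is one possible interpretation of the description above.

```python
import math
from dataclasses import dataclass
from statistics import mean


@dataclass
class Candidate:
    italian: str           # original Italian sentence
    ladin: str             # machine-translated Ladin sentence
    it_vec: list[float]    # sentence embedding of the Italian text (precomputed)
    lad_vec: list[float]   # sentence embedding of the Ladin text (precomputed)
    bleu: float            # back-translation score against the original Italian
    meteor: float          # back-translation score against the original Italian


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def filtering_one(cands: list[Candidate], threshold: float = 0.68) -> list[Candidate]:
    """Filtering I: keep pairs whose embedding similarity exceeds the threshold."""
    return [c for c in cands if cosine(c.it_vec, c.lad_vec) > threshold]


def reference_means(authentic: list[tuple[float, float]]) -> tuple[float, float]:
    """Mean (BLEU, METEOR) over the authentic parallel data."""
    return mean(b for b, _ in authentic), mean(m for _, m in authentic)


def filtering_two(cands: list[Candidate], min_bleu: float, min_meteor: float) -> list[Candidate]:
    """Filtering II: keep pairs whose scores both reach the reference means (assumed reading)."""
    return [c for c in cands if c.bleu >= min_bleu and c.meteor >= min_meteor]


if __name__ == "__main__":
    toy = [
        Candidate("it1", "lad1", [1.0, 0.0], [1.0, 0.0], bleu=40.0, meteor=0.6),
        Candidate("it2", "lad2", [1.0, 0.0], [0.0, 1.0], bleu=45.0, meteor=0.7),
        Candidate("it3", "lad3", [1.0, 0.0], [1.0, 1.0], bleu=10.0, meteor=0.2),
    ]
    min_bleu, min_meteor = reference_means([(30.0, 0.5), (40.0, 0.6)])
    survivors = filtering_two(filtering_one(toy), min_bleu, min_meteor)
    print([c.ladin for c in survivors])
```

Running the filters in this order means the more expensive back-translation step only needs to be applied to pairs that already passed the embedding check.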

The authors evaluate the impact of the synthetic data in three ways. (1) Machine Translation – Adding the synthetic parallel sentences (approximately 35 k pairs) to the original training set yields consistent BLEU improvements of 3–5 points across all three test subsets, with the most pronounced gains on the narrative/idiomatic subset (t3). chrF++ and ROUGE scores also increase, indicating better lexical and syntactic fidelity. (2) Sentiment Analysis – Using the newly created Ladin SA dataset, the authors fine‑tune a multilingual text‑classification model (initialized on Italian data) on Ladin. The resulting classifier achieves over 78 % accuracy and an F1 score of 0.81, outperforming baselines trained on the tiny original Ladin data. (3) Multiple‑Choice QA – Training a Ladin MCQA model on the synthetic questions leads to accuracy and F1 improvements of roughly 10 %–12 % compared with models trained only on the limited native data, demonstrating that the synthetic resource is useful beyond translation.
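For readers who want to reproduce the classification metrics mentioned above, accuracy and F1 can be computed as in the following sketch. The summary does not state which F1 averaging was used, so macro-averaging is an assumption here, and the labels and predictions are toy values rather than results from the paper.

```python
def accuracy(gold: list[str], pred: list[str]) -> float:
    """Fraction of predictions that match the gold labels."""
    return sum(g == p for g, p in zip(gold, pred)) / len(gold)


def macro_f1(gold: list[str], pred: list[str]) -> float:
    """Unweighted mean of per-class F1 scores (macro-averaging is assumed)."""
    labels = sorted(set(gold) | set(pred))
    f1_scores = []
    for label in labels:
        tp = sum(g == label and p == label for g, p in zip(gold, pred))
        fp = sum(g != label and p == label for g, p in zip(gold, pred))
        fn = sum(g == label and p != label for g, p in zip(gold, pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        f1_scores.append(f1)
    return sum(f1_scores) / len(f1_scores)


if __name__ == "__main__":
    gold = ["pos", "pos", "neg", "neg"]
    pred = ["pos", "neg", "neg", "neg"]
    print(f"accuracy = {accuracy(gold, pred):.2f}, macro-F1 = {macro_f1(gold, pred):.2f}")
```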

The paper’s contributions are threefold: (i) a comprehensive benchmark for Italian‑Ladin translation that surpasses previous state‑of‑the‑art results; (ii) the first publicly released Ladin sentiment‑analysis and MCQA datasets, providing essential resources for downstream NLP tasks; and (iii) empirical evidence that high‑quality synthetic data, when generated with careful filtering and back‑translation, can boost performance not only in MT but also in classification and QA for an ELRL. The methodology—leveraging LLMs for translation, applying dual similarity‑based filters, and back‑translation for validation—offers a reproducible template that can be adapted to other endangered languages with similarly scarce resources.

The authors acknowledge several limitations. The back‑translation step relies on the same MT model that produced the initial Ladin output, so residual systematic errors may persist. Human validation of a sample of the synthetic data was not performed, leaving open the possibility of subtle linguistic inaccuracies. Additionally, Ladin exhibits considerable dialectal variation (e.g., Fascia, Gherdëina) that is not addressed; the study focuses exclusively on the Val Badia variant because of data availability. Future work should incorporate community‑driven annotation, expand to other dialects, and explore multimodal signals (e.g., audio or visual context) that could further enrich low‑resource NLP pipelines.

In summary, this work demonstrates that a well‑engineered pipeline combining large language models, similarity‑based filtering, and back‑translation can effectively generate high‑quality synthetic datasets for an extremely low‑resource language. By integrating these datasets into machine translation training and downstream tasks, the authors achieve measurable performance gains and lay a solid foundation for broader NLP research on Ladin and other endangered languages.
