Dial-In LLM: Human-Aligned LLM-in-the-loop Intent Clustering for Customer Service Dialogues

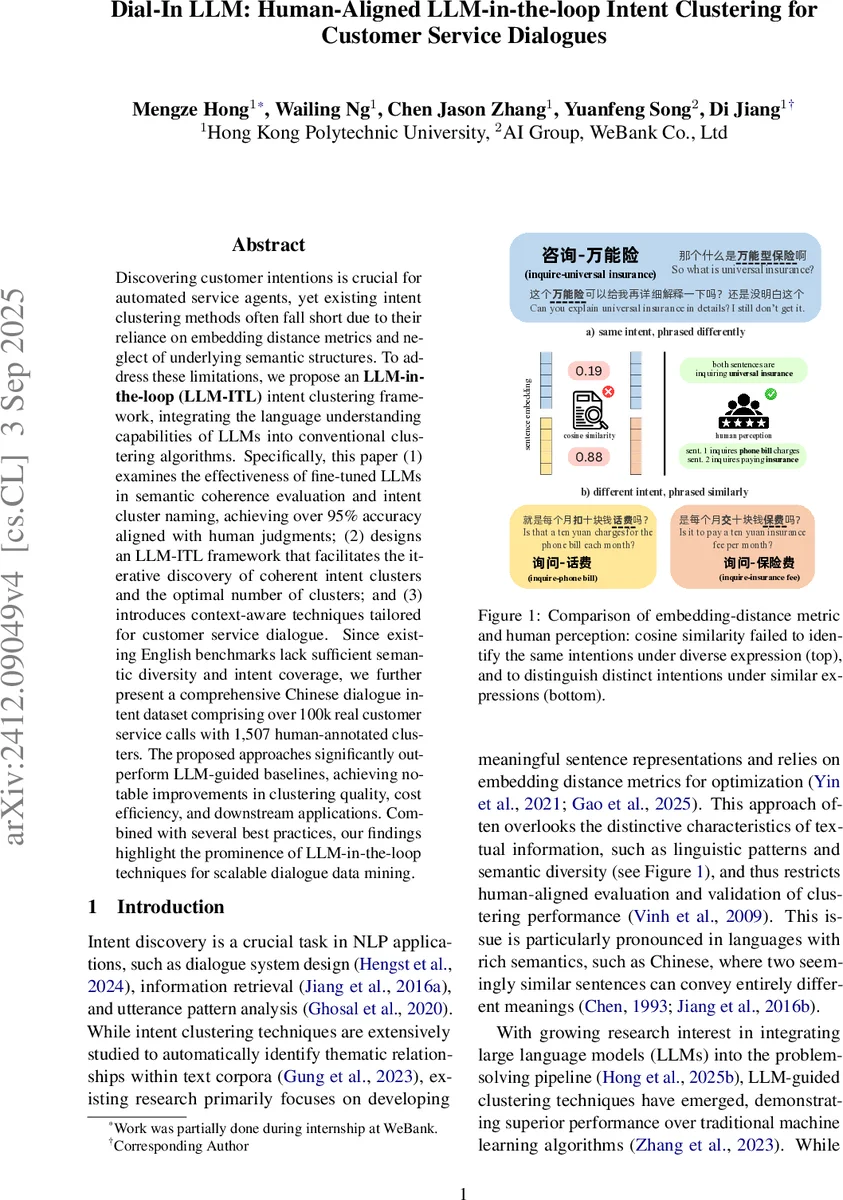

Discovering customer intentions is crucial for automated service agents, yet existing intent clustering methods often fall short due to their reliance on embedding distance metrics and neglect of underlying semantic structures. To address these limitations, we propose an LLM-in-the-loop (LLM-ITL) intent clustering framework, integrating the language understanding capabilities of LLMs into conventional clustering algorithms. Specifically, this paper (1) examines the effectiveness of fine-tuned LLMs in semantic coherence evaluation and intent cluster naming, achieving over 95% accuracy aligned with human judgments; (2) designs an LLM-ITL framework that facilitates the iterative discovery of coherent intent clusters and the optimal number of clusters; and (3) introduces context-aware techniques tailored for customer service dialogue. Since existing English benchmarks lack sufficient semantic diversity and intent coverage, we further present a comprehensive Chinese dialogue intent dataset comprising over 100k real customer service calls with 1,507 human-annotated clusters. The proposed approaches significantly outperform LLM-guided baselines, achieving notable improvements in clustering quality, cost efficiency, and downstream applications. Combined with several best practices, our findings highlight the prominence of LLM-in-the-loop techniques for scalable dialogue data mining.

💡 Research Summary

The paper tackles the problem of intent clustering in large‑scale customer service dialogues, where traditional clustering methods that rely solely on embedding distance often fail to capture the nuanced semantic relationships that humans perceive. To bridge this gap, the authors introduce a novel “LLM‑in‑the‑Loop” (LLM‑ITL) framework that repeatedly injects a fine‑tuned small language model into the clustering pipeline, allowing the model to evaluate cluster coherence, generate concise intent labels, and guide the merging of semantically similar clusters.

A major contribution is the release of a new Chinese dialogue intent dataset, compiled from over 100,000 real‑world calls across banking, telecommunications, and insurance domains. The dataset contains 1,507 human‑annotated intent clusters, deliberately including noisy out‑of‑domain queries to reflect realistic complexity. This dataset is substantially larger and more semantically diverse than existing English benchmarks, providing a rigorous testbed for clustering research.

The LLM utilities are threefold. First, a “Coherence Evaluator” is trained as a binary classifier on top of a small LLM (e.g., LLaMA‑7B) to decide whether a candidate cluster is semantically coherent (“good”) or not (“bad”). Human evaluation shows >95 % agreement, far surpassing traditional topic‑model based coherence metrics. Second, an “Intent Labeller” produces intent names in an “Action‑Objective” format (e.g., inquire‑insurance). These labels are embedded, normalized onto the unit hypersphere, and later used as semantic anchors. Third, a post‑correction step leverages these label embeddings: angular (geodesic) distances on the hypersphere and a von Mises‑Fisher mixture model compute a probability that two clusters share the same intent. Edges whose probability exceeds a threshold (τ) are kept, and connected components are merged, yielding a refined set of intent clusters.
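The merging step described above can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: it computes geodesic distances between unit-normalized label embeddings, keeps edges whose score exceeds τ, and merges connected components with a union-find. The von Mises‑Fisher mixture is deliberately swapped out for a simple exponential kernel on the angle, and the names `merge_clusters`, `tau`, and `kappa` are assumptions introduced for this example.

```python
import math


def normalize(v):
    """Project a vector onto the unit hypersphere."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def geodesic_distance(u, v):
    """Angle (in radians) between two unit vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return math.acos(max(-1.0, min(1.0, dot)))  # clamp against rounding error


def merge_clusters(label_embeddings, tau=0.8, kappa=5.0):
    """Group cluster indices whose label embeddings are close on the sphere.

    Assumption: exp(-kappa * angle) is a stand-in score in (0, 1];
    it is NOT the vMF mixture probability described in the paper.
    """
    unit = [normalize(v) for v in label_embeddings]
    n = len(unit)
    parent = list(range(n))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    for i in range(n):
        for j in range(i + 1, n):
            score = math.exp(-kappa * geodesic_distance(unit[i], unit[j]))
            if score > tau:  # keep the edge, so the two clusters are merged
                parent[find(i)] = find(j)

    groups = {}
    for i in range(n):
        groups.setdefault(find(i), []).append(i)
    return sorted(groups.values())


# Toy 2-D "label embeddings": the first two point in nearly the same direction.
merged = merge_clusters([[1.0, 0.0], [0.999, 0.01], [0.0, 1.0]])
```

In this toy input the first two labels end up in one component and the third stays on its own. With real label embeddings, both `tau` and the scoring function would need to be calibrated against human merge judgments.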

Algorithmically, the process starts with all sentences embedded via a standard encoder. For each candidate number of clusters n_i in a predefined set N, a conventional clustering algorithm (e.g., K‑means) partitions the data. The Coherence Evaluator scores each resulting cluster, and the algorithm selects the n* that maximizes the ratio of good to bad clusters. Good clusters are fixed, and the sentences left in bad clusters are reclustered in the next iteration. The loop stops once the proportion of still‑unassigned sentences falls to the threshold ϵ or the maximum iteration count T_max is reached. After the loop terminates, the Intent Labeller assigns labels, and the hyperspherical merging step consolidates overlapping clusters.
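The iterative loop can be sketched as below, under stated assumptions. The clustering algorithm and the Coherence Evaluator are passed in as plain callables (`cluster_fn`, `is_coherent`), so the toy stand-ins at the bottom replace K‑means and the fine-tuned LLM. The `+ 1` smoothing in the good-to-bad ratio and the default values of `epsilon` and `t_max` are choices made for this example, not details taken from the paper.

```python
def llm_itl_clustering(items, candidate_ns, cluster_fn, is_coherent,
                       epsilon=0.05, t_max=10):
    """Iteratively fix coherent clusters and recluster the rest.

    cluster_fn(items, n) -> list of clusters (stand-in for e.g. K-means)
    is_coherent(cluster) -> bool (stand-in for the Coherence Evaluator)
    """
    total = len(items)
    remaining = list(items)
    accepted = []
    for _ in range(t_max):
        # Stop when few enough sentences are left unassigned.
        if not remaining or len(remaining) / total <= epsilon:
            break
        best = None
        for n in candidate_ns:
            if n > len(remaining):
                continue
            clusters = cluster_fn(remaining, n)
            verdicts = [(c, is_coherent(c)) for c in clusters]  # one call each
            good = [c for c, ok in verdicts if ok]
            bad = [c for c, ok in verdicts if not ok]
            # Assumption: +1 in the denominator avoids division by zero.
            ratio = len(good) / (len(bad) + 1)
            if best is None or ratio > best[0]:
                best = (ratio, good, bad)
        if best is None or not best[1]:
            break  # no candidate n produced a coherent cluster
        accepted.extend(best[1])
        remaining = [x for c in best[2] for x in c]
    return accepted, remaining


# Toy stand-ins so the sketch runs end to end (both are hypothetical).
def chunk_cluster(items, n):
    """Split the sorted items into at most n contiguous chunks."""
    items = sorted(items)
    size = -(-len(items) // n)  # ceiling division
    return [items[i:i + size] for i in range(0, len(items), size)]


def same_prefix(cluster):
    """Call a cluster coherent if all items share their first character."""
    return len({s[0] for s in cluster}) == 1


accepted, leftover = llm_itl_clustering(
    ["a1", "b1", "a2", "b2", "a3", "b3"], [2, 3], chunk_cluster, same_prefix
)
```

Passing the evaluator as a callable keeps the loop independent of the model behind it, which makes it straightforward to compare a fine-tuned small LLM against a larger hosted one.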

The authors also exploit the generated intent labels for role separation. Labels beginning with “inquire‑” are heuristically assigned to the customer, while those with “answer‑” belong to the service agent, enabling downstream analyses such as dialogue flow modeling.
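A minimal sketch of this prefix heuristic is shown below. The function name `assign_role`, the `"unknown"` fallback, and the example labels other than `inquire-insurance` are assumptions for illustration; the code also assumes labels use a plain ASCII hyphen between action and objective.

```python
def assign_role(label):
    """Map an "Action-Objective" intent label to a speaker role."""
    action = label.split("-", 1)[0].strip().lower()
    if action == "inquire":
        return "customer"
    if action == "answer":
        return "agent"
    return "unknown"  # fallback for actions the heuristic does not cover


roles = [assign_role(x) for x in
         ["inquire-insurance", "answer-insurance", "greet-customer"]]
```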

Experimental results demonstrate that LLM‑ITL outperforms strong baselines (pure embedding‑K‑means, recent LLM‑guided clustering methods) on standard clustering metrics (NMI, ARI, Silhouette) by 12–18 %. In a downstream intent classification task, models trained on LLM‑ITL derived labels achieve an 18.46 % absolute accuracy gain. Cost analysis shows that fine‑tuned small LLMs reduce API‑call expenses by over 70 % compared to using large commercial models (e.g., GPT‑3.5). The paper further investigates sampling strategies (random, high‑frequency, mixed) and LLM‑based crowdsourcing, providing practical guidance for real‑world deployment.

In summary, the work makes three key contributions: (1) a large, publicly released Chinese intent clustering dataset that captures real‑world complexity; (2) a set of human‑aligned LLM utilities that reliably assess semantic coherence and generate meaningful intent names; and (3) the LLM‑ITL framework, which iteratively discovers high‑quality clusters, automatically determines the optimal number of clusters, and refines them through geometry‑aware merging. The authors argue that integrating LLMs at the intermediate stage, rather than only as preprocessing or post‑processing tools, yields substantial gains in both performance and interpretability. Future directions include extending the framework to multimodal (speech‑text) streams, real‑time clustering, and systematic trade‑off studies between model size, cost, and clustering quality.
