Fast and Accurate SVD-Type Updating in Streaming Data

💡 Research Summary

The paper addresses the problem of efficiently updating a singular value decomposition (SVD) for a matrix that receives frequent low‑rank modifications in a streaming setting. Computing a fresh optimal SVD after each update is prohibitive for large‑scale data, so the authors propose a family of algorithms that update the bidiagonal factorization directly, thereby avoiding a full recomputation while preserving the accuracy of SVD‑based methods.

The authors first formalize the common low‑rank update model A⁺ = A + B Cᵀ, where B ∈ ℝ^{m×r} and C ∈ ℝ^{n×r} (r ≪ min(m,n)). Given an existing bidiagonal decomposition A = Q B Pᵀ with orthogonal Q, P and upper bidiagonal B (the symbol B is reused here: in the update term it is the m×r low‑rank factor, in the decomposition it is the bidiagonal factor), the goal is to obtain Q⁺, B⁺, P⁺ such that A⁺ = Q⁺ B⁺ P⁺ᵀ, using only the previously computed factors and the low‑rank perturbation.
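The structure that the update algorithms exploit can be seen in a small numerical sketch: in the basis of the old factors, the updated matrix is the old structured factor plus a rank‑r term. The sketch below is illustrative only. It uses a full SVD as a stand‑in for the bidiagonal factorization (a diagonal matrix is a special case of an upper bidiagonal one), and the names `B_lr`, `C_lr`, and `B_mid` are assumptions introduced here to keep the two meanings of B apart.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n, r = 8, 6, 2

A = rng.standard_normal((m, n))
# Low-rank update factors (the B and C of A+ = A + B C^T), renamed to avoid
# clashing with the bidiagonal factor.
B_lr = rng.standard_normal((m, r))
C_lr = rng.standard_normal((n, r))

# Stand-in for the bidiagonal factorization: a full SVD gives orthogonal Q, P
# and a diagonal middle factor, which is a special case of upper bidiagonal.
Q, s, Pt = np.linalg.svd(A, full_matrices=True)
P = Pt.T
B_mid = np.zeros((m, n))
B_mid[:n, :n] = np.diag(s)

A_plus = A + B_lr @ C_lr.T

# In the old basis, the updated matrix is "structured factor + rank-r term".
core = Q.T @ A_plus @ P
low_rank_part = (Q.T @ B_lr) @ (P.T @ C_lr).T
residual = np.linalg.norm(core - (B_mid + low_rank_part))
rank_of_perturbation = np.linalg.matrix_rank(core - B_mid)
```

An update scheme therefore only has to restore bidiagonal form for a matrix of the shape "bidiagonal plus rank r", rather than factor a general dense matrix from scratch.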

Three main contributions are presented:

-

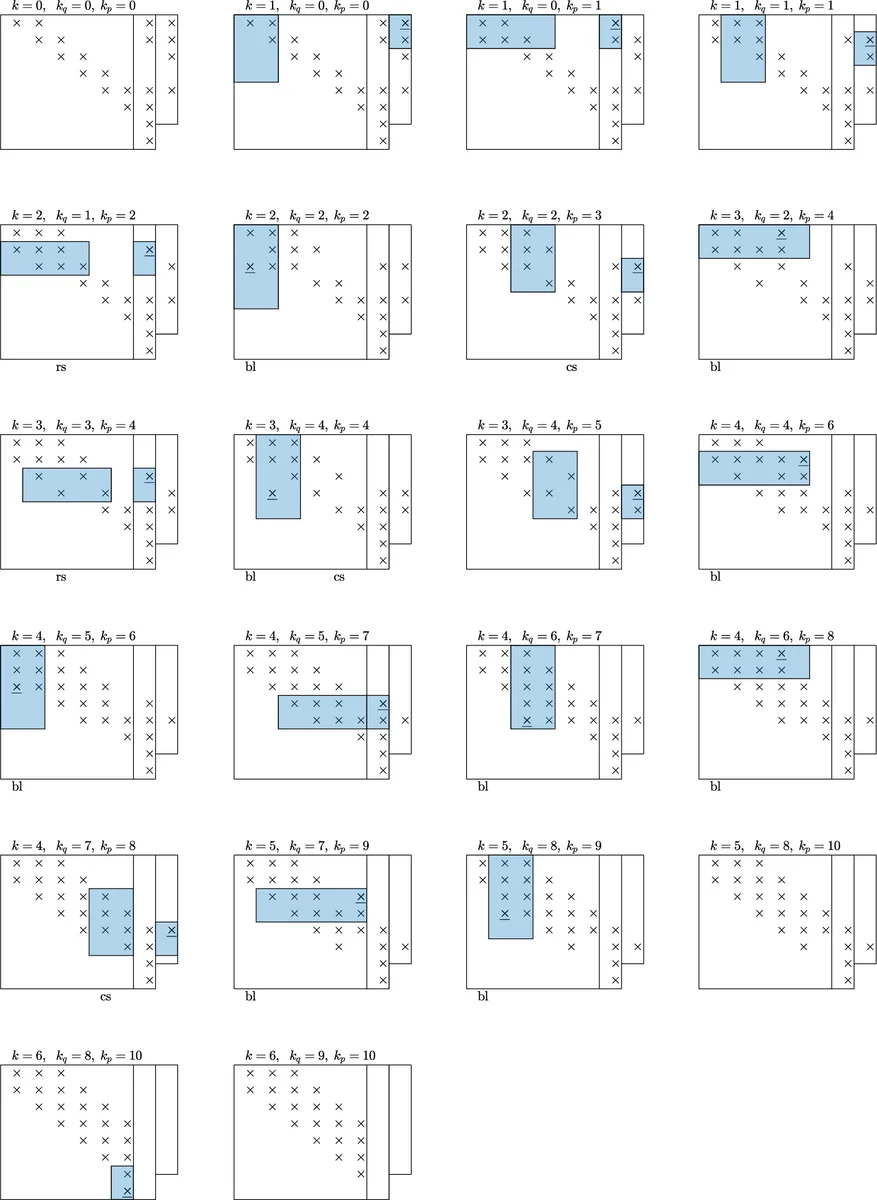

Compact Householder Low‑Rank Update – Traditional Householder bidiagonalization stores a dense sequence of reflectors, leading to high memory usage and fill‑in when applied to an updated matrix. The authors adopt a compact WY representation, storing only the essential reflector vectors in thin matrices Yₖ (m×k) and Wₖ (n×k). Using the associated upper‑triangular matrices Tₖ and Rₖ, the orthogonal factors are expressed as Qₖ = I – 2 Yₖ Tₖ⁻¹ Yₖᵀ and Pₖ = I – 2 Wₖ Rₖ⁻¹ Wₖᵀ. When these are applied to the perturbed bidiagonal matrix B + b cᵀ, the bidiagonal structure is preserved: no new off‑bidiagonal entries appear, and the update can be performed with roughly half the memory of LAPACK’s dgebrd/zgebrd implementations. The computational cost scales as O(m n t) with t = min(m,n), a substantial reduction compared with the O(m n t) of a full re‑bidiagonalization.

- Givens‑Rotation Low‑Rank Update – The authors develop a rotation‑based scheme that annihilates the extra off‑bidiagonal elements introduced by the low‑rank term. Each Givens rotation requires only about ten floating‑point operations, and the total number of rotations grows quadratically with the problem size, yielding an overall O(n²) complexity instead of the cubic cost of conventional approaches. Orthogonal factors Q and P are updated explicitly, allowing the subsequent diagonalization step (the second phase of the SVD) to proceed without additional overhead.

- Randomized Bidiagonal Decomposition (RBD) – Inspired by the randomized SVD, the authors sketch the column space of A with a random matrix S ∈ ℝ^{n×r}, compute Y = A S, obtain an orthonormal basis Q_Y via a thin QR, then sketch the row space with Z = Q_Yᵀ A and again QR to get Q_Z. The resulting small matrix B_r = Q_Zᵀ Z can be either directly used as a low‑rank approximation or further factorized with a conventional SVD. This approach offers a succinct pipeline that is especially advantageous when the singular values decay rapidly, since a rank‑r sketch then captures most of the matrix and the full bidiagonal matrix never has to be formed.
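The compact representation Q = I – 2 Y T⁻¹ Yᵀ from the first contribution can be checked numerically. The sketch below is a minimal illustration, not the paper's implementation. It assumes unnormalized reflectors Hᵢ = I – 2 yᵢ yᵢᵀ / (yᵢᵀ yᵢ), for which T = diag(YᵀY) + 2·striu(YᵀY) reproduces the product H₁⋯Hₖ. The normalization used by the authors may differ, and the helper name `compact_wy_T` is hypothetical.

```python
import numpy as np

def compact_wy_T(Y):
    """Upper-triangular T with H_1 ... H_k = I - 2 Y T^{-1} Y^T, where
    H_i = I - 2 y_i y_i^T / (y_i^T y_i) and y_i is column i of Y."""
    G = Y.T @ Y
    return np.diag(np.diag(G)) + 2.0 * np.triu(G, 1)

rng = np.random.default_rng(1)
m, k = 7, 3
Y = rng.standard_normal((m, k))

# Reference: multiply the k reflectors explicitly (dense m x m products).
Q_ref = np.eye(m)
for i in range(k):
    y = Y[:, i]
    Q_ref = Q_ref @ (np.eye(m) - 2.0 * np.outer(y, y) / (y @ y))

# Compact form: only Y (m x k) and T (k x k) need to be stored.
T = compact_wy_T(Y)
Q_compact = np.eye(m) - 2.0 * Y @ np.linalg.solve(T, Y.T)

wy_error = np.linalg.norm(Q_ref - Q_compact)
orth_error = np.linalg.norm(Q_compact.T @ Q_compact - np.eye(m))

# Applying Q to a vector never requires the m x m matrix.
x = rng.standard_normal(m)
Qx = x - 2.0 * Y @ np.linalg.solve(T, Y.T @ x)
apply_error = np.linalg.norm(Qx - Q_ref @ x)
```

Storing Y and T takes m·k + k² numbers instead of m², which is where the memory saving of a compact representation comes from.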

The paper provides theoretical error bounds linking the bidiagonal approximation error to the optimal truncated SVD error, showing that the proposed updates retain near‑optimal accuracy (the error is within a small multiple of the sum of discarded singular values). Complexity analyses confirm that the Householder method reduces memory by roughly 50 % and the Givens method reduces arithmetic work from O(n³) to O(n²).
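The rotation‑based update relies on one primitive: a plane rotation that zeroes a chosen entry while leaving the singular values unchanged. The following sketch shows that primitive on a small upper bidiagonal matrix with one unwanted subdiagonal entry. It is a generic textbook Givens rotation, not the authors' update algorithm, and the helper names `givens` and `rotate_rows` are assumptions made for this illustration.

```python
import numpy as np

def givens(a, b):
    """Return (c, s) such that [[c, s], [-s, c]] @ [a, b] = [r, 0]."""
    r = np.hypot(a, b)
    if r == 0.0:
        return 1.0, 0.0
    return a / r, b / r

def rotate_rows(M, i, j, c, s):
    """Apply the rotation in place to rows i and j of M."""
    Mi, Mj = M[i].copy(), M[j].copy()
    M[i] = c * Mi + s * Mj
    M[j] = -s * Mi + c * Mj

# Upper bidiagonal 4 x 4 matrix with one unwanted entry below the diagonal.
M = np.diag([4.0, 3.0, 2.0, 1.0]) + np.diag([1.0, 1.0, 1.0], 1)
M[1, 0] = 0.5
sv_before = np.linalg.svd(M, compute_uv=False)

# Zero M[1, 0] by rotating rows 0 and 1.
c, s = givens(M[0, 0], M[1, 0])
rotate_rows(M, 0, 1, c, s)
sv_after = np.linalg.svd(M, compute_uv=False)
```

The rotation removes `M[1, 0]` but creates a new nonzero at `M[0, 2]`. A complete update has to chase such fill‑in with further rotations from the left and right, and the number of those rotations is what determines the overall cost.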

Extensive experiments are conducted on a large movie‑rating dataset (hundreds of thousands of users, thousands of items) and on a high‑throughput network subspace‑tracking task. The new algorithms are compared against LAPACK’s bidiagonalization routines, Brand’s incremental thin‑SVD, and other state‑of‑the‑art incremental SVD techniques. Results demonstrate 2–5× speedups, memory savings of 40–50 %, and approximation errors on the order of 10⁻⁴, comparable to the accuracy of full SVD recomputation. The RBD method shows comparable accuracy with a much smaller computational footprint when the rank r is modest.
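The sketching steps behind a randomized decomposition of this kind can be illustrated as follows. This is a minimal sketch of the standard randomized range finder, not the paper's RBD: the steps S, Y = A S, Q_Y, and Z = Q_Yᵀ A follow the description above, but the final small factorization is a conventional SVD of Z rather than a bidiagonalization. The test matrix has exact rank r, an assumption chosen so that the sketch captures it fully.

```python
import numpy as np

rng = np.random.default_rng(2)
m, n, r = 60, 40, 5

# Test matrix of exact rank r, so a rank-r sketch can capture it fully.
A = rng.standard_normal((m, r)) @ rng.standard_normal((r, n))

S = rng.standard_normal((n, r))   # random sketching matrix
Y = A @ S                         # sample of the column space, m x r
Q_Y, _ = np.linalg.qr(Y)          # thin QR: orthonormal basis, m x r
Z = Q_Y.T @ A                     # projected matrix, r x n

# Small factorization of Z; a conventional SVD is used here in place of
# a bidiagonalization.
U_small, sigma, Vt = np.linalg.svd(Z, full_matrices=False)
U = Q_Y @ U_small

A_approx = (U * sigma) @ Vt
rel_error = np.linalg.norm(A - A_approx) / np.linalg.norm(A)
```

Only the m×r and r×n factors are ever stored or factorized, which is why the footprint stays small when r is modest. For matrices that are not exactly low rank, the error depends on how quickly the singular values beyond r decay.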

In conclusion, the authors argue that maintaining the bidiagonal structure during low‑rank updates is the key to scalable streaming SVD. Their three algorithms—compact Householder, Givens‑rotation, and randomized bidiagonal decomposition—offer complementary trade‑offs between memory, speed, and implementation simplicity, making them suitable for real‑time recommendation systems, online subspace tracking, and other large‑scale streaming applications. Future work is suggested on handling simultaneous multi‑rank updates, distributed parallel implementations, and extensions to kernel or non‑linear settings.
