Principled Personas: Defining and Measuring the Intended Effects of Persona Prompting on Task Performance

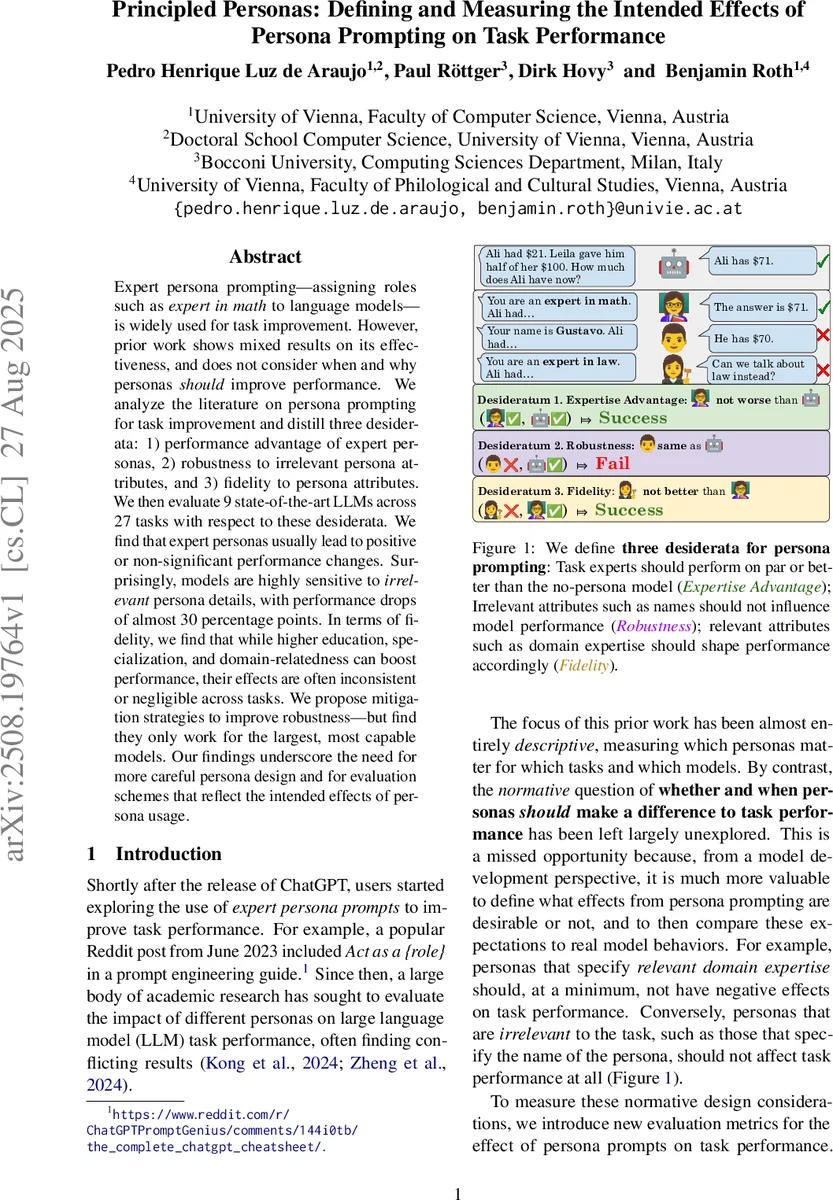

Expert persona prompting – assigning roles such as expert in math to language models – is widely used for task improvement. However, prior work shows mixed results on its effectiveness, and does not consider when and why personas should improve performance. We analyze the literature on persona prompting for task improvement and distill three desiderata: 1) performance advantage of expert personas, 2) robustness to irrelevant persona attributes, and 3) fidelity to persona attributes. We then evaluate 9 state-of-the-art LLMs across 27 tasks with respect to these desiderata. We find that expert personas usually lead to positive or non-significant performance changes. Surprisingly, models are highly sensitive to irrelevant persona details, with performance drops of almost 30 percentage points. In terms of fidelity, we find that while higher education, specialization, and domain-relatedness can boost performance, their effects are often inconsistent or negligible across tasks. We propose mitigation strategies to improve robustness – but find they only work for the largest, most capable models. Our findings underscore the need for more careful persona design and for evaluation schemes that reflect the intended effects of persona usage.

💡 Research Summary

The paper “Principled Personas: Defining and Measuring the Intended Effects of Persona Prompting on Task Performance” investigates the impact of assigning expert personas to large language models (LLMs) and proposes a normative framework for evaluating such prompts. The authors identify three desiderata that any persona‑based prompting scheme should satisfy: (1) Expertise Advantage – an expert persona should not degrade performance compared to a no‑persona baseline; (2) Robustness – irrelevant persona attributes (e.g., name, favorite color) should have no effect on task outcomes; (3) Fidelity – relevant attributes such as domain expertise, specialization level, or education should shape performance in a predictable, monotonic way.

To operationalize these desiderata, the authors define quantitative metrics: a simple performance gap for Expertise Advantage, a worst‑group accuracy drop for Robustness, and a Kendall‑τ rank correlation between expected and observed ordering of personas for Fidelity.

The experimental study evaluates nine open‑weight, instruction‑tuned LLMs from three families (Gemma‑2, Llama‑3.1, Qwen2.5) across three size scales (≈2 B to 70 B parameters) on 27 tasks drawn from five benchmark datasets (TruthfulQA, GSM8K, MMLU‑Pro, BIG‑Bench, MA TH). The tasks cover factual QA, multiple‑choice reasoning, and open‑ended mathematical problem solving, all with objective ground truth.

Key findings:

- Expertise Advantage is generally satisfied. Most models, especially the largest (70 B), show modest gains (≈2–4 percentage points) when prompted as domain experts. Smaller models sometimes exhibit slight declines, indicating that the benefit scales with model capacity.

- Robustness is severely lacking. Introducing a name or a favorite‑color statement can reduce accuracy by an average of 12 pp (name) and 18 pp (color), with worst‑case drops approaching 30 pp. The effect persists across model families, though the largest models mitigate it slightly.

- Fidelity shows mixed results. Higher education levels modestly improve performance on some knowledge‑heavy tasks (τ≈0.3), but the correlation is weak overall (τ≈0.1–0.2). Specialization hierarchy (broad → focused → niche) rarely aligns with the expected ordering, and domain‑match effects (in‑domain vs. out‑of‑domain experts) yield only small advantages (≈4 pp).

The authors also test three mitigation strategies: (a) stripping irrelevant attributes from prompts, (b) fine‑tuning models with persona‑aware data, and (c) proposing role‑conditioned attention mechanisms (the latter not implemented). Only (a) fully restores robustness by eliminating the offending tokens, while (b) yields modest improvements (≈5 pp) but only for the 70 B models; smaller models gain little.

The paper concludes that while expert personas can be beneficial, current LLMs are overly sensitive to non‑task‑related prompt content, undermining the reliability of persona‑based prompting. Designers should therefore (i) avoid embedding irrelevant attributes, (ii) prioritize large‑scale models when robustness is required, and (iii) explore architectural changes that treat persona tokens separately from content tokens. The work provides a clear evaluation framework that can guide future research on controlled prompting and model alignment.

Comments & Academic Discussion

Loading comments...

Leave a Comment