Towards Robust and Controllable Text-to-Motion via Masked Autoregressive Diffusion

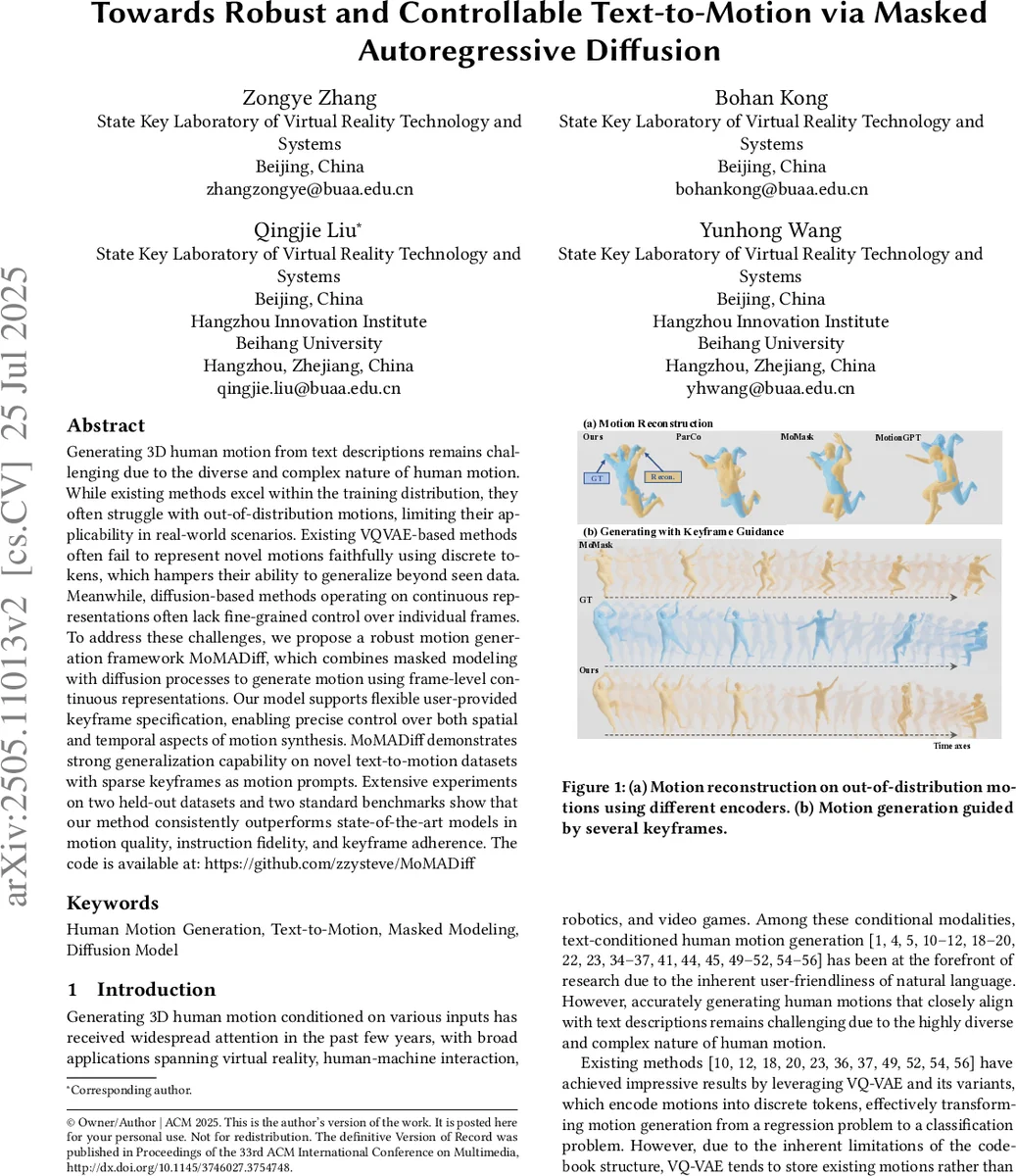

Generating 3D human motion from text descriptions remains challenging due to the diverse and complex nature of human motion. While existing methods excel within the training distribution, they often struggle with out-of-distribution motions, limiting their applicability in real-world scenarios. VQ-VAE-based methods often fail to represent novel motions faithfully with discrete tokens, which hampers their ability to generalize beyond seen data. Meanwhile, diffusion-based methods operating on continuous representations often lack fine-grained control over individual frames. To address these challenges, we propose MoMADiff, a robust motion generation framework that combines masked modeling with diffusion processes to generate motion from frame-level continuous representations. Our model supports flexible, user-provided keyframe specification, enabling precise control over both the spatial and temporal aspects of motion synthesis. MoMADiff demonstrates strong generalization on novel text-to-motion datasets when sparse keyframes are given as motion prompts. Extensive experiments on two held-out datasets and two standard benchmarks show that our method consistently outperforms state-of-the-art models in motion quality, instruction fidelity, and keyframe adherence. The code is available at: https://github.com/zzysteve/MoMADiff
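One way to picture keyframes acting as a motion prompt inside a masked model: user-supplied frames are simply never masked, and the remaining frames are revealed over several iterations. The sketch below is a hypothetical illustration, not the MoMADiff code. The function name `reveal_schedule` is invented here, and the cosine masking schedule and random generation order are assumptions borrowed from MaskGIT-style decoding; the abstract does not state which schedule MoMADiff uses.

```python
import math
import random


def reveal_schedule(num_frames, keyframes, num_steps, seed=0):
    """Hypothetical sketch: decide which masked frames are generated at each step.

    Keyframes are user-provided and never masked. The other frames start masked
    and are revealed over `num_steps` iterations; the fraction still masked after
    step s is cos(pi/2 * s / num_steps) (an assumed MaskGIT-style schedule).
    """
    known = set(keyframes)
    masked = [f for f in range(num_frames) if f not in known]
    total = len(masked)
    rng = random.Random(seed)  # fixed seed so the generation order is reproducible
    rng.shuffle(masked)        # random order over the masked frames
    steps = []
    for s in range(1, num_steps + 1):
        # Number of originally masked frames that remain masked after step s.
        still_masked = math.floor(total * math.cos(math.pi / 2 * s / num_steps))
        n_reveal = len(masked) - still_masked
        steps.append(sorted(masked[:n_reveal]))
        masked = masked[n_reveal:]
    return steps


# 10-frame clip whose first and last frames are fixed by the user.
for step, frames in enumerate(reveal_schedule(10, keyframes=[0, 9], num_steps=4), 1):
    print(step, frames)
```

With 8 masked frames and 4 steps, this schedule reveals 1, 2, 2 and then 3 frames, so early steps commit to few frames and later steps, which see more context, fill in the rest.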

💡 Research Summary

The paper tackles the challenging problem of generating 3‑D human motion from natural language descriptions, a task that suffers from limited generalization to out‑of‑distribution (OOD) motions and a lack of fine‑grained temporal control. Existing approaches fall into two camps: discrete token‑based methods that rely on VQ‑VAE codebooks, and continuous diffusion models that generate whole sequences at once. The former struggle to faithfully represent motions not seen during training, while the latter cannot easily incorporate user‑specified constraints on individual frames.
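The representational limit of discrete tokens can be seen with a toy nearest-neighbour quantizer. This is an illustrative assumption, not an experiment from the paper: a hypothetical codebook covers only poses seen during training, so a novel pose must be snapped to the closest existing code, while a continuous latent would pass it through with no snapping error (any reconstruction error of the decoder is a separate matter).

```python
def nearest_code(vec, codebook):
    """Return the codebook entry closest to `vec` in squared Euclidean distance."""
    return min(codebook, key=lambda c: sum((a - b) ** 2 for a, b in zip(vec, c)))


def quantization_error(vec, codebook):
    """Squared error introduced by replacing `vec` with its nearest code."""
    code = nearest_code(vec, codebook)
    return sum((a - b) ** 2 for a, b in zip(vec, code))


# Hypothetical 2-D "pose features"; the codebook only covers training poses.
codebook = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
seen_pose = (0.9, 0.1)   # close to a training code
novel_pose = (3.0, 3.0)  # far outside the training distribution

print(quantization_error(seen_pose, codebook))   # small, about 0.02
print(quantization_error(novel_pose, codebook))  # large: 13.0
```

The error for the in-distribution pose is tiny, but the novel pose is distorted by a large amount no matter which code is chosen, which is the failure mode the summary attributes to VQ-VAE tokenization.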

MoMADiff (Masked Autoregressive Diffusion for Motion) proposes a unified framework that combines a frame‑wise continuous latent space with a masked autoregressive diffusion model. First, a lightweight CNN‑based motion VAE encodes a raw motion sequence X =
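The combination of frame-wise continuous latents with masked autoregressive diffusion can be sketched in miniature: masked frames are filled in a few at a time, and each new frame is drawn by a small diffusion sampler conditioned on the frames already known. This is a heavily simplified, hypothetical sketch under stated assumptions, not the authors' implementation. Each frame latent is a single scalar; `context_condition` (linear interpolation between known neighbours) stands in for the masked transformer; `toy_eps_predictor` stands in for a learned diffusion head and is the exact noise predictor for the assumed case where a frame latent is Gaussian around its condition; the linear DDPM schedule and all names are assumptions.

```python
import math
import random


def make_schedule(T=100, beta_start=1e-4, beta_end=0.1):
    """Linear DDPM noise schedule (an assumed choice for this sketch)."""
    betas = [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]
    alpha_bars, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        alpha_bars.append(prod)
    return betas, alpha_bars


def toy_eps_predictor(x_t, t, cond, alpha_bars, data_std=0.1):
    """Stand-in for a learned diffusion head.

    Assumes the clean latent is N(cond, data_std**2), for which the optimal
    noise prediction E[eps | x_t] has this closed form.
    """
    ab = alpha_bars[t]
    var_t = ab * data_std ** 2 + (1.0 - ab)
    return math.sqrt(1.0 - ab) * (x_t - math.sqrt(ab) * cond) / var_t


def sample_frame(cond, betas, alpha_bars, rng):
    """Ancestral DDPM sampling of one scalar frame latent given `cond`."""
    x = rng.gauss(0.0, 1.0)
    for t in reversed(range(len(betas))):
        eps = toy_eps_predictor(x, t, cond, alpha_bars)
        x = (x - betas[t] / math.sqrt(1.0 - alpha_bars[t]) * eps) / math.sqrt(1.0 - betas[t])
        if t > 0:
            x += math.sqrt(betas[t]) * rng.gauss(0.0, 1.0)  # sigma_t^2 = beta_t
    return x


def context_condition(frame, known):
    """Hypothetical stand-in for the masked transformer: interpolate known neighbours."""
    if not known:
        return 0.0
    left = max((f for f in known if f < frame), default=None)
    right = min((f for f in known if f > frame), default=None)
    if left is None:
        return known[right]
    if right is None:
        return known[left]
    w = (frame - left) / (right - left)
    return (1 - w) * known[left] + w * known[right]


def generate(num_frames, keyframes, frames_per_step=2, seed=0):
    """Masked autoregressive generation; `keyframes` maps frame index -> latent."""
    rng = random.Random(seed)
    betas, alpha_bars = make_schedule()
    known = dict(keyframes)  # keyframes are never overwritten
    masked = [f for f in range(num_frames) if f not in known]
    rng.shuffle(masked)
    while masked:
        batch, masked = masked[:frames_per_step], masked[frames_per_step:]
        # Condition every frame in this batch on the same snapshot of known frames.
        conds = {f: context_condition(f, known) for f in batch}
        for f in batch:
            known[f] = sample_frame(conds[f], betas, alpha_bars, rng)
    return [known[f] for f in range(num_frames)]


latents = generate(10, keyframes={0: 0.0, 9: 1.8})
print([round(x, 2) for x in latents])
```

Because keyframes enter only as already-known positions, the same loop handles unconstrained generation (an empty `keyframes` dict) and sparse keyframe prompts; a real model would use vector latents, a text-conditioned transformer, and a trained denoising network.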
