Enhancing Scene Transition Awareness in Video Generation via Post-Training

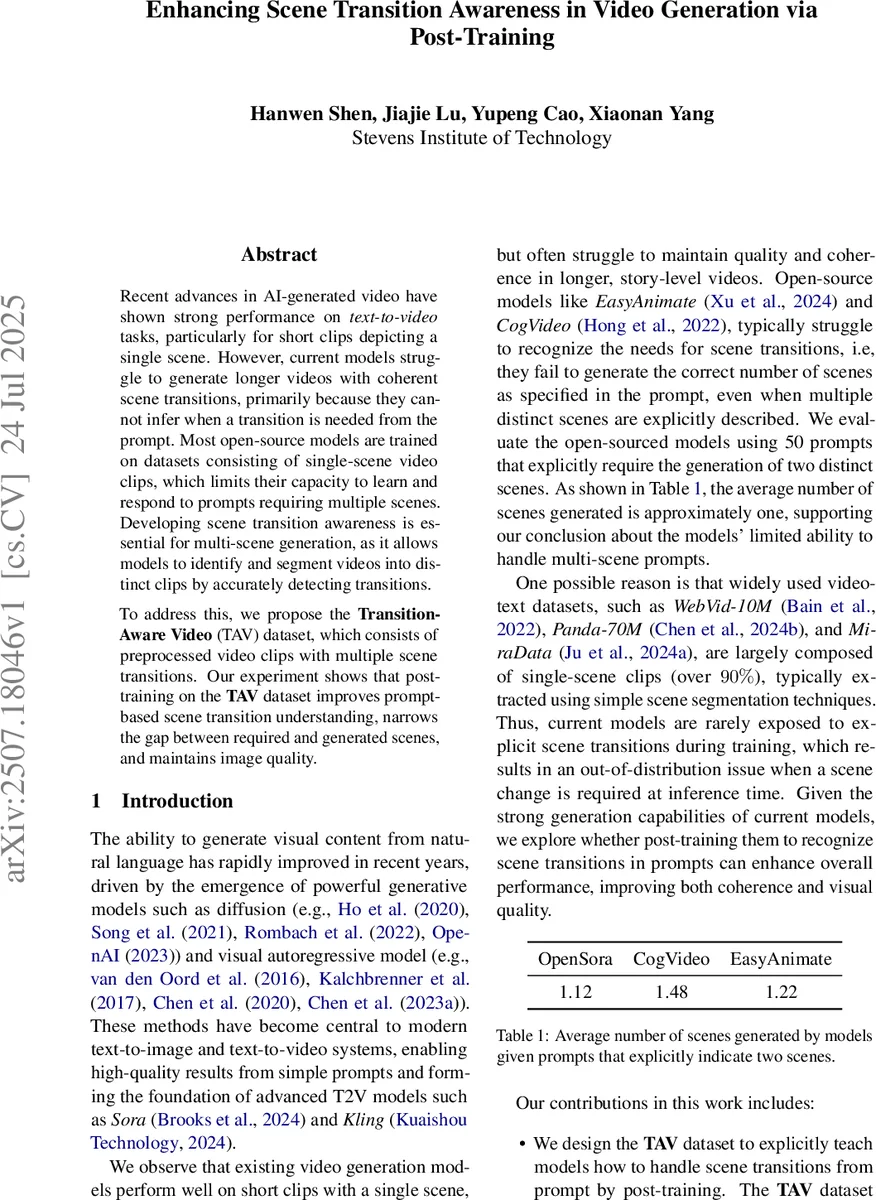

Recent advances in AI-generated video have shown strong performance on text-to-video tasks, particularly for short clips depicting a single scene. However, current models struggle to generate longer videos with coherent scene transitions, primarily because they cannot infer when a transition is needed from the prompt. Most open-source models are trained on datasets consisting of single-scene video clips, which limits their capacity to learn and respond to prompts requiring multiple scenes. Developing scene transition awareness is essential for multi-scene generation, as it allows models to identify and segment videos into distinct clips by accurately detecting transitions. To address this, we propose the Transition-Aware Video (TAV) dataset, which consists of preprocessed video clips with multiple scene transitions. Our experiment shows that post-training on the TAV dataset improves prompt-based scene transition understanding, narrows the gap between required and generated scenes, and maintains image quality.

💡 Research Summary

The paper addresses a fundamental limitation of current text‑to‑video (T2V) models: they are trained almost exclusively on single‑scene video clips, which makes them unable to recognize when a prompt calls for multiple scenes or a transition between scenes. The authors first demonstrate this problem empirically by evaluating three open‑source models (OpenSora, CogVideo, EasyAnimate) on 50 prompts that explicitly require two distinct scenes. The average number of scenes generated by these models hovers around one, confirming that they do not infer the need for a transition from the textual description.

To remedy this, the authors introduce the Transition‑Aware Video (TAV) dataset. They sample 500 videos from the validation split of the large‑scale Panda‑70M dataset, ensuring the subset mirrors the overall category distribution. Using a modified version of PySceneDetect, they compute a weighted average pixel difference in HSV space between consecutive frames; when this value exceeds a manually set threshold, a scene cut is declared. For each video they keep only the first detected cut and extract a 10‑second segment centered on the cut (5 seconds before and after). If the video does not contain enough frames on either side, the available footage is used.
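The cut rule and the clip window described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' modified PySceneDetect code: the frame representation (a flat sequence of HSV pixel tuples), the equal channel weights, the threshold value passed by the caller, and the function names `frame_score`, `first_cut`, and `clip_window` are all hypothetical.

```python
from typing import Optional, Sequence, Tuple

# Hypothetical frame representation: a flat sequence of (h, s, v) pixel tuples.
Pixel = Tuple[float, float, float]
Frame = Sequence[Pixel]


def frame_score(prev: Frame, curr: Frame,
                weights: Tuple[float, float, float] = (1.0, 1.0, 1.0)) -> float:
    """Weighted average of the per-channel mean absolute differences."""
    n = len(prev)
    channel_means = [
        sum(abs(p[c] - q[c]) for p, q in zip(prev, curr)) / n
        for c in range(3)
    ]
    return sum(w * m for w, m in zip(weights, channel_means)) / sum(weights)


def first_cut(frames: Sequence[Frame], threshold: float,
              weights: Tuple[float, float, float] = (1.0, 1.0, 1.0)) -> Optional[int]:
    """Index of the first frame whose score against its predecessor exceeds
    the threshold, or None if no cut is found."""
    for i in range(1, len(frames)):
        if frame_score(frames[i - 1], frames[i], weights) > threshold:
            return i
    return None


def clip_window(cut_frame: int, total_frames: int, fps: float,
                half_seconds: float = 5.0) -> Tuple[int, int]:
    """Half-open frame range [start, end) centered on the cut.

    The range is clamped to the video, so a cut near either end simply
    yields a shorter clip, as the summary describes.
    """
    half = int(round(half_seconds * fps))
    return max(0, cut_frame - half), min(total_frames, cut_frame + half)
```

For a 30 fps video with a cut at frame 300, `clip_window(300, 1000, 30.0)` selects frames 150 through 449. A cut at frame 60 of a 200-frame video falls back to the whole video, since fewer than 5 seconds exist on either side.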

Each 10‑second clip contains two distinct scenes. The authors employ the BLIP vision‑language model to generate separate textual descriptions for the “previous” and “next” scenes, then concatenate them into a single prompt of the form “Previous scene: … Next scene: …”. This yields 500 video‑prompt pairs that explicitly encode a scene transition.
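As a small illustration of the prompt format, the helper below joins two captions into one transition prompt. The function name `build_transition_prompt` is hypothetical, the captions are assumed to come from a captioning step that is not shown here, and the exact separator between the two parts is an assumption, since the summary only gives the overall form.

```python
def build_transition_prompt(previous_caption: str, next_caption: str) -> str:
    """Join two scene captions into one prompt.

    Assumption: the two parts are separated by a single space; the source
    only specifies the "Previous scene: ... Next scene: ..." form.
    """
    prev = previous_caption.strip()
    nxt = next_caption.strip()
    return f"Previous scene: {prev} Next scene: {nxt}"


# Example with made-up captions (not taken from the TAV dataset).
example_prompt = build_transition_prompt(
    "a man walks along a beach.",
    "a city street at night.",
)
```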

The TAV dataset is then used for post‑training (fine‑tuning) of the OpenSora‑Plan v1.3.1 model. Training is performed with DeepSpeed ZeRO Stage 2, an mT5‑XXL text encoder, a 256 × 256 resolution video auto‑encoder (WFVAEModel_D8_4x8x8), and a batch size of 1 for 100 steps (≈2 hours per epoch on a single NVIDIA H200). Key hyper‑parameters include a learning rate of 1e‑5, bf16 mixed precision, EMA decay 0.9999, sparse 1‑D attention (sparse_n = 4), and an SNR‑weighted diffusion loss (snr_gamma = 5.0).
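To make two of these hyper‑parameters concrete, the sketch below shows what `snr_gamma` and the EMA decay typically control. It assumes that the SNR‑weighted loss is Min‑SNR weighting applied to a noise‑prediction objective, which is how a parameter named `snr_gamma` is commonly used in diffusion training scripts; the summary does not confirm this. The function names are hypothetical.

```python
def min_snr_weight(snr: float, snr_gamma: float = 5.0) -> float:
    """Per-timestep loss weight under Min-SNR weighting (assumed variant).

    High-SNR (low-noise) timesteps are down-weighted so they do not
    dominate the loss; timesteps with SNR <= snr_gamma keep weight 1.
    """
    return min(snr, snr_gamma) / snr


def ema_update(ema_value: float, param_value: float,
               decay: float = 0.9999) -> float:
    """One exponential-moving-average step for a single scalar parameter."""
    return decay * ema_value + (1.0 - decay) * param_value
```

With `snr_gamma = 5.0`, a timestep with SNR 10 contributes half as much to the loss as it would unweighted, while a timestep with SNR 2 is unchanged. With a decay of 0.9999, each step moves the EMA copy only 0.01% of the way toward the current weights.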

Evaluation is conducted with three prompt groups:

- Group A – single‑sentence prompts that do not mention a transition (e.g., “Superman flying across the city”).

- Group B – two‑sentence prompts that imply a transition but do not explicitly label it (e.g., “Superman flies, then sees Batman fighting Joker”).

- Group C – prompts that explicitly label the two scenes using the “Previous scene / Next scene” format.

Both the baseline (pre‑fine‑tuning) and the post‑trained model are run on the same set of prompts, and two metrics are recorded: (1) the average number of scene segments generated per video, and (2) video quality scores from VBench (aesthetic quality, overall consistency, dynamic degree, imaging quality).
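The first metric can be computed from detected cuts alone. The sketch below assumes each generated video has already been reduced to a list of cut positions, so that a video with k cuts has k + 1 segments. The function names are hypothetical, and expressing the gap between required and generated scenes as an absolute difference is an assumption rather than the paper's stated definition.

```python
from typing import Sequence


def scene_count(cut_frames: Sequence[int]) -> int:
    """A video with k detected cuts consists of k + 1 scene segments."""
    return len(cut_frames) + 1


def average_scene_count(cuts_per_video: Sequence[Sequence[int]]) -> float:
    """Mean number of scene segments over a set of generated videos."""
    if not cuts_per_video:
        raise ValueError("need at least one video")
    return sum(scene_count(c) for c in cuts_per_video) / len(cuts_per_video)


def scene_gap(required_scenes: int, average_generated: float) -> float:
    """Absolute gap between required and generated scene counts (assumed form)."""
    return abs(required_scenes - average_generated)
```

For example, three videos with zero, one, and two detected cuts average 2.0 segments, giving a gap of 0 against a two‑scene prompt.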

Results show a dramatic increase in the average number of generated scenes after post‑training. For the baseline, Groups B and C still produce roughly one segment on average (1.06–1.12). After 24 epochs of post‑training, the averages rise to 2.38 (Group A), 2.70 (Group B), and 2.90 (Group C). Even after 36 epochs, the numbers stay above 2.0, indicating that the model has learned to respect multi‑scene instructions.

Crucially, video quality does not degrade. Two of the VBench scores in fact improve: dynamic degree rises from 0.045 to ~0.06, and overall consistency from 0.203 to ~0.5. Aesthetic and imaging quality remain comparable to the baseline, suggesting that the added scene‑transition knowledge does not come at the cost of visual fidelity.

The authors acknowledge several limitations: the TAV dataset is small (500 clips), the scene‑cut threshold is heuristically chosen, and experiments are limited to a single architecture (OpenSora). They suggest future work should explore larger, more diverse multi‑scene datasets, systematic comparison of different cut‑detection algorithms, and integration of dedicated transition modules (e.g., transition tokens or planning LLMs) into various T2V pipelines.

In conclusion, the paper demonstrates that a data‑centric post‑training approach can endow existing diffusion‑based video generators with robust scene‑transition awareness. By explicitly teaching the model how to handle prompts that require multiple scenes, the authors bridge a critical gap between prompt intent and generated output, paving the way for more coherent, story‑level video synthesis.
