ApproxGNN: A Pretrained GNN for Parameter Prediction in Design Space Exploration for Approximate Computing

Approximate computing offers promising energy-efficiency benefits for error-tolerant applications, but discovering optimal approximations requires extensive design space exploration (DSE). Predicting the accuracy of circuits composed of approximate components without performing complete synthesis remains a challenging problem. Current machine learning approaches used to automate this task require retraining for each new circuit configuration, which makes them computationally expensive and time-consuming. This paper presents ApproxGNN, a construction methodology for a pretrained graph neural network model that predicts the quality of result (QoR) and hardware (HW) cost of approximate accelerators employing approximate adders from a library. This approach is applicable in DSE for the assignment of approximate components to operations in an accelerator. Our approach introduces a novel component feature extraction based on learned embeddings rather than traditional error metrics, enabling improved transferability to unseen circuits. ApproxGNN models can be trained with a small number of approximate components, support transfer to multiple prediction tasks, utilize precomputed embeddings for efficiency, and significantly improve the accuracy of approximation-error prediction. On a set of image convolutional filters, our experimental results demonstrate that the proposed embeddings improve prediction accuracy (mean square error) by 50% compared to conventional methods. Furthermore, the overall prediction accuracy is 30% better than that of statistical machine learning approaches without fine-tuning and 54% better with fast fine-tuning.

💡 Research Summary

The paper introduces ApproxGNN, a pretrained graph neural network (GNN) designed to serve as a universal surrogate model for quality‑of‑result (QoR) and hardware‑cost prediction in the design‑space exploration (DSE) of approximate computing accelerators. Approximate computing exploits the error tolerance of many applications (e.g., image filtering, video processing) to trade accuracy for energy efficiency, but identifying the optimal combination of approximate components (adders, multipliers, etc.) requires evaluating an astronomically large number of design points. Traditional approaches either perform full synthesis and simulation for each candidate—an impractically time‑consuming process—or train a new machine‑learning model for every target circuit, incurring heavy retraining overhead.

ApproxGNN addresses these challenges through a two‑stage methodology. In the first stage, a component‑embedding model learns high‑dimensional vector representations of approximate adders directly from their RTL descriptions. The authors built a custom Verilog parser that converts RTL code into a graph where nodes correspond to logic elements and edges to signal connections. Using message‑passing GNN layers (implemented with PyTorch Geometric), the model captures structural, timing, and power characteristics beyond conventional scalar error metrics such as mean absolute error (MAE) or worst‑case error. These embeddings are computed once per library component and stored for reuse.
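The parser and GNN code are not described here in detail. The following is only a minimal, stdlib-only Python sketch of the first stage, and it rests on several assumptions. It assumes the component is a flat gate-level netlist of Verilog primitive instances with the output port listed first (arbitrary RTL would need a full parser). Nodes are gates and primary inputs, and edges run from a net's driver to the gates that read it. Two rounds of mean-aggregation message passing with fixed 0.5/0.5 mixing weights stand in for the learned PyTorch Geometric layers. The names `netlist_to_graph`, `embed`, `component_embedding`, `EMBEDDING_CACHE`, and the gate vocabulary are all hypothetical.

```python
import re

# Hypothetical gate vocabulary; each node gets a one-hot feature over it.
GATE_TYPES = ["input", "and", "or", "xor", "not"]

# Matches Verilog primitive instances such as "xor g1(s, a, b);" (output port first).
INSTANCE_RE = re.compile(r"\b(and|or|xor|not)\s+(\w+)\s*\(([^)]*)\)\s*;")


def netlist_to_graph(verilog):
    """Return (node_types, edges); each edge points from a net's driver to a reader."""
    node_types, driver, readers = {}, {}, []
    for gate, name, ports in INSTANCE_RE.findall(verilog):
        nets = [p.strip() for p in ports.split(",")]
        node_types[name] = gate
        driver[nets[0]] = name            # the first port is the gate output
        readers.append((name, nets[1:]))
    edges = []
    for name, inputs in readers:
        for net in inputs:
            if net not in driver:         # undriven net: model it as a primary input node
                node_types[net] = "input"
                driver[net] = net
            edges.append((driver[net], name))
    return node_types, edges


def embed(node_types, edges, rounds=2):
    """Mean-aggregation message passing followed by a mean-pool readout."""
    h = {n: [1.0 if t == g else 0.0 for g in GATE_TYPES] for n, t in node_types.items()}
    preds = {n: [] for n in node_types}
    for src, dst in edges:
        preds[dst].append(src)
    for _ in range(rounds):
        new_h = {}
        for n, feat in h.items():
            # Nodes without predecessors (primary inputs) aggregate only themselves.
            agg = ([sum(h[p][i] for p in preds[n]) / len(preds[n]) for i in range(len(feat))]
                   if preds[n] else feat)
            # Fixed 0.5/0.5 mixing stands in for learned weight matrices.
            new_h[n] = [0.5 * a + 0.5 * b for a, b in zip(feat, agg)]
        h = new_h
    return [sum(v[i] for v in h.values()) / len(h) for i in range(len(GATE_TYPES))]


EMBEDDING_CACHE = {}


def component_embedding(component_id, verilog):
    """Compute each library component's embedding once, then reuse it."""
    if component_id not in EMBEDDING_CACHE:
        EMBEDDING_CACHE[component_id] = embed(*netlist_to_graph(verilog))
    return EMBEDDING_CACHE[component_id]


HALF_ADDER = """
module ha(input a, input b, output s, output c);
  xor g1(s, a, b);
  and g2(c, a, b);
endmodule
"""

emb = component_embedding("ha_exact", HALF_ADDER)   # [0.875, 0.0625, 0.0, 0.0625, 0.0]
```

The cache is keyed by component identifier. As a result, each library adder is embedded once, and every later lookup is a dictionary read. This mirrors the precompute-and-reuse step described above.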

In the second stage, a parameter‑prediction model consumes the circuit‑level graph together with the pre‑computed component embeddings. It performs graph‑level aggregation and a read‑out phase to jointly regress two targets: (i) QoR, expressed as approximation error, and (ii) hardware cost metrics (area, power, delay). Multi‑task learning is employed so that shared representations improve generalization across both objectives.
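A minimal sketch of the second stage follows, again in plain Python and with hypothetical names and shapes. The accelerator is given as a dictionary that maps each adder operation to its predecessor operations. Each node is initialized with the precomputed embedding of the component assigned to it. A few message-passing rounds then mix neighboring states, and sum-pooling produces a graph-level vector. Two linear heads share that vector to produce a QoR estimate and a cost estimate. The multi-task loss is assumed to be a weighted sum of per-task MSEs. The paper's actual layer types, pooling, and loss weighting are not specified here.

```python
from statistics import fmean


def predict(op_graph, assignment, embeddings, w_qor, w_cost, rounds=2):
    """Predict (QoR error, HW cost) for one accelerator configuration.

    op_graph:   operation -> list of predecessor operations (all operations are adders here)
    assignment: operation -> library component id
    embeddings: component id -> precomputed embedding vector
    """
    # Node features are looked up, not recomputed: this is where precomputation pays off.
    h = {op: list(embeddings[assignment[op]]) for op in op_graph}
    dim = len(next(iter(h.values())))
    for _ in range(rounds):
        new_h = {}
        for op, preds in op_graph.items():
            agg = [fmean(h[p][i] for p in preds) for i in range(dim)] if preds else h[op]
            new_h[op] = [0.5 * a + 0.5 * b for a, b in zip(h[op], agg)]
        h = new_h
    # Graph-level readout: sum-pooling over all operation nodes.
    g = [sum(v[i] for v in h.values()) for i in range(dim)]
    # Two task heads share the same graph representation (multi-task regression).
    qor = sum(w * x for w, x in zip(w_qor, g))
    cost = sum(w * x for w, x in zip(w_cost, g))
    return qor, cost


def multitask_loss(preds, targets, alpha=0.5):
    """Weighted sum of the per-task mean-square errors."""
    mse_qor = fmean((p[0] - t[0]) ** 2 for p, t in zip(preds, targets))
    mse_cost = fmean((p[1] - t[1]) ** 2 for p, t in zip(preds, targets))
    return alpha * mse_qor + (1 - alpha) * mse_cost


EMBEDDINGS = {"exact": [0.0, 1.0], "approx": [1.0, 0.5]}   # hypothetical 2-D embeddings
ADDER_TREE = {"a1": [], "a2": [], "a3": ["a1", "a2"]}     # a3 adds the outputs of a1 and a2
W_QOR, W_COST = [1.0, 0.0], [0.0, 1.0]                     # illustrative, untrained head weights

all_exact = predict(ADDER_TREE, {"a1": "exact", "a2": "exact", "a3": "exact"},
                    EMBEDDINGS, W_QOR, W_COST)             # (0.0, 3.0)
mixed = predict(ADDER_TREE, {"a1": "approx", "a2": "exact", "a3": "exact"},
                EMBEDDINGS, W_QOR, W_COST)                 # (1.375, 2.3125)
```

In a trained model, the head weights would be learned by minimizing a loss such as `multitask_loss` over the synthesized dataset. Here they are fixed so that one embedding coordinate drives the error estimate and the other drives the cost estimate.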

To train the models, the authors generated a synthetic dataset based on the EvoApproxLib library of approximate adders. They automatically constructed a variety of convolutional image‑filter accelerators (blur, Sobel, Gaussian) at the RTL level, randomly assigning library components to arithmetic operations. Each generated design was fully synthesized and simulated, providing ground‑truth QoR and hardware cost values. Compared with the AutoAx pipeline, which requires roughly 4,000 random configurations per accelerator, ApproxGNN needed only about 30 % of that number to achieve comparable coverage, dramatically reducing the data‑collection burden.
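The sampling step can be sketched as drawing distinct random assignments of library components to the accelerator's adder operations. This minimal Python sketch uses hypothetical operation and component names, not actual EvoApproxLib identifiers. It does not reproduce the synthesis and simulation flow that labels each configuration with QoR and hardware cost. The integer arithmetic at the end only restates the summary's figure of about 30 % of 4,000 configurations.

```python
import random


def design_space_size(num_ops, library_size):
    """Every operation can take any library component independently."""
    return library_size ** num_ops


def sample_configurations(ops, library, n, seed=0):
    """Draw n distinct random assignments of library components to operations."""
    if n > design_space_size(len(ops), len(library)):
        raise ValueError("requested more distinct configurations than exist")
    rng = random.Random(seed)
    seen, configs = set(), []
    while len(configs) < n:
        choice = tuple(rng.choice(library) for _ in ops)
        if choice not in seen:            # skip duplicates so every sample is new
            seen.add(choice)
            configs.append(dict(zip(ops, choice)))
    return configs


# Hypothetical sizes: summing nine filter taps takes 8 additions; a 30-component library.
OPS = [f"add{i}" for i in range(8)]
LIBRARY = [f"comp{j:02d}" for j in range(30)]

space = design_space_size(len(OPS), len(LIBRARY))      # 656_100_000_000 candidate designs

AUTOAX_SAMPLES = 4000
APPROXGNN_SAMPLES = AUTOAX_SAMPLES * 30 // 100         # about 30 % of the AutoAx budget: 1200
training_configs = sample_configurations(OPS, LIBRARY, APPROXGNN_SAMPLES)
# Each configuration would next be synthesized and simulated to obtain its
# ground-truth QoR and hardware cost; that flow is not reproduced here.
```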

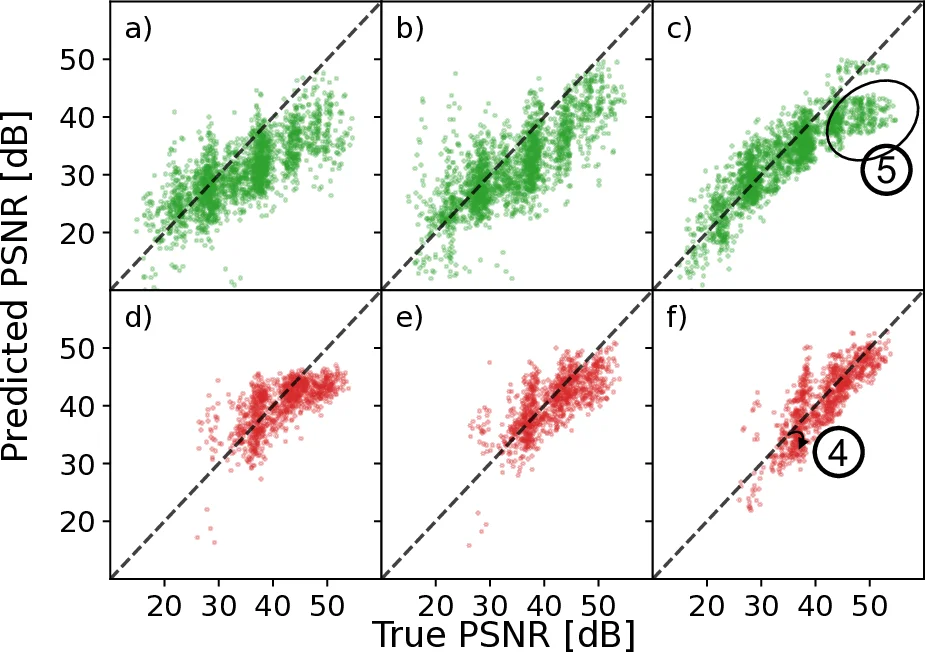

Experimental results demonstrate three key improvements. First, the learned component embeddings reduce the mean-square error (MSE) of QoR prediction by 50 % compared with conventional methods. Second, without any fine-tuning, ApproxGNN's overall prediction accuracy is 30 % better than that of statistical machine-learning approaches (Random Forest, Decision Tree). Third, a lightweight fine-tuning step on a small, application-specific dataset raises the improvement over the statistical baseline to 54 %. Importantly, because component embeddings are precomputed, inference on a new accelerator incurs negligible overhead, enabling near-instantaneous DSE iterations. The authors integrated the surrogate into a multi-objective evolutionary algorithm (NSGA-II) and observed a roughly 60 % reduction in total DSE runtime while still locating Pareto-optimal configurations that, after final synthesis, meet the desired trade-offs.
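Two pieces of arithmetic behind these results can be made concrete. First, assume the reported percentages are relative reductions of an error measure, that is, (MSE_base - MSE_new) / MSE_base. Under that assumption, a 50 % MSE improvement means the new MSE is half the baseline MSE. Second, when the surrogate is used inside a multi-objective search, candidates can be screened by keeping only those whose predicted (error, cost) pairs are non-dominated. This corresponds to the first front of NSGA-II's non-dominated sorting. The sketch below shows both computations. It is not the authors' DSE loop, and all numbers in it are made up for illustration.

```python
from statistics import fmean


def mse(predicted, actual):
    """Mean-square error between two equally long sequences."""
    return fmean((p - a) ** 2 for p, a in zip(predicted, actual))


def relative_reduction(baseline_error, new_error):
    """Fractional reduction of an error measure, e.g. 0.5 for a 50 % improvement."""
    return (baseline_error - new_error) / baseline_error


def pareto_front(points):
    """Indices of (error, cost) points not dominated by any other point (both minimized)."""
    front = []
    for i, (e_i, c_i) in enumerate(points):
        dominated = any(
            e_j <= e_i and c_j <= c_i and (e_j < e_i or c_j < c_i)
            for j, (e_j, c_j) in enumerate(points)
            if j != i
        )
        if not dominated:
            front.append(i)
    return front


# Made-up numbers: the baseline's MSE is twice that of the new predictor.
truth = [1.0, 2.0, 3.0, 4.0]
baseline_pred = [1.0, 3.0, 3.0, 5.0]   # squared errors 0, 1, 0, 1 -> MSE 0.5
new_pred = [1.0, 3.0, 3.0, 4.0]        # squared errors 0, 1, 0, 0 -> MSE 0.25
improvement = relative_reduction(mse(baseline_pred, truth), mse(new_pred, truth))  # 0.5

# Surrogate predictions (error, cost) for four candidate configurations.
predicted = [(0.10, 5.0), (0.20, 3.0), (0.30, 4.0), (0.05, 9.0)]
shortlist = pareto_front(predicted)    # [0, 1, 3]: candidates kept for final synthesis
```

The quadratic `pareto_front` is adequate for short candidate lists. NSGA-II itself ranks a population into successive fronts and adds crowding distance, which this sketch omits.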

The paper also discusses limitations. ApproxGNN currently focuses on approximate adders; extending the framework to other operator types (multipliers, shifters) will require additional embedding training. Moreover, GNN scalability can become an issue for very large ASIC designs, suggesting future work on hierarchical GNNs or graph‑sampling techniques. Nonetheless, the authors provide an open‑source repository containing the Verilog parser, dataset generation scripts, pretrained embedding and prediction models, facilitating reproducibility and further research.

In summary, ApproxGNN delivers a reusable, pretrained GNN that eliminates per‑design retraining, leverages learned embeddings for richer component characterization, and substantially improves both prediction accuracy and DSE efficiency in approximate computing. This represents a significant step toward practical, automated design flows for energy‑efficient, error‑tolerant hardware.
