SCALE: Towards Collaborative Content Analysis in Social Science with Large Language Model Agents and Human Intervention

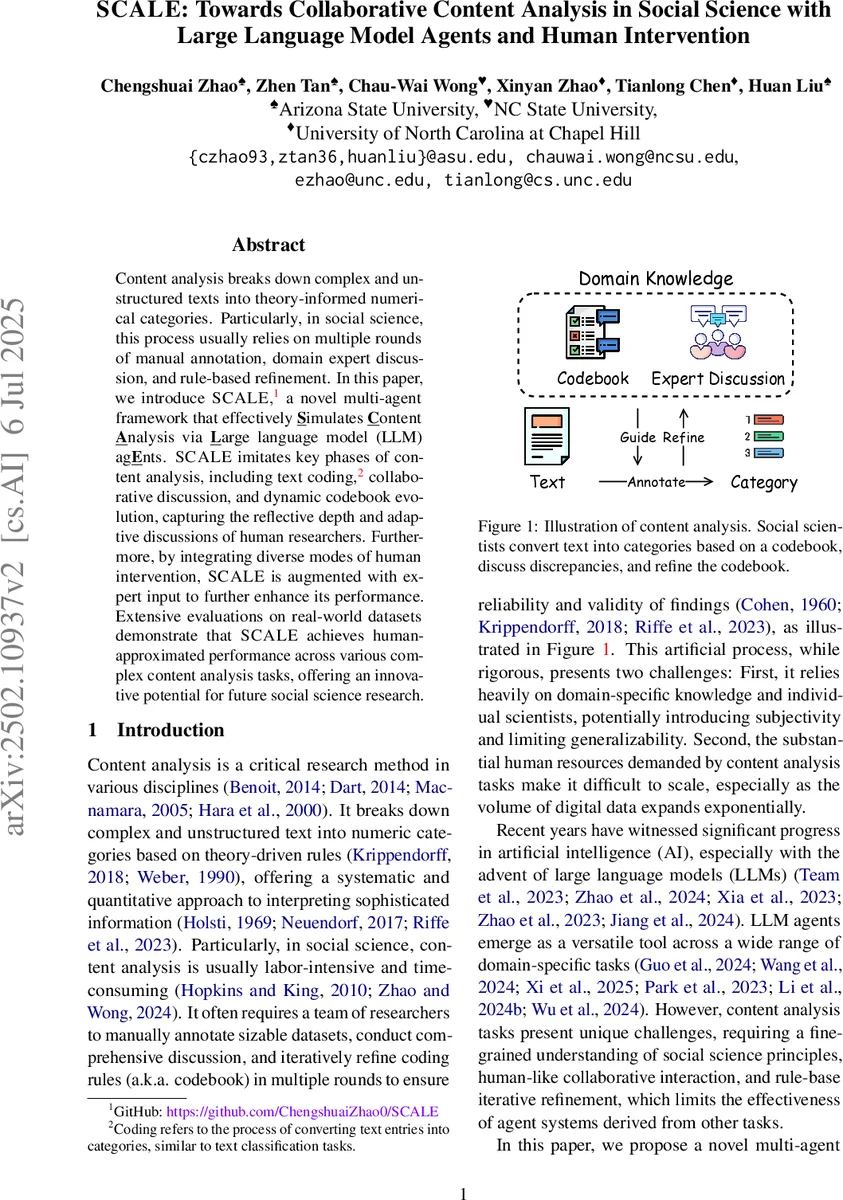

Content analysis breaks down complex and unstructured texts into theory-informed numerical categories. Particularly, in social science, this process usually relies on multiple rounds of manual annotation, domain expert discussion, and rule-based refinement. In this paper, we introduce SCALE, a novel multi-agent framework that effectively $\underline{\textbf{S}}$imulates $\underline{\textbf{C}}$ontent $\underline{\textbf{A}}$nalysis via $\underline{\textbf{L}}$arge language model (LLM) ag$\underline{\textbf{E}}$nts. SCALE imitates key phases of content analysis, including text coding, collaborative discussion, and dynamic codebook evolution, capturing the reflective depth and adaptive discussions of human researchers. Furthermore, by integrating diverse modes of human intervention, SCALE is augmented with expert input to further enhance its performance. Extensive evaluations on real-world datasets demonstrate that SCALE achieves human-approximated performance across various complex content analysis tasks, offering an innovative potential for future social science research.

💡 Research Summary

The paper introduces SCALE, a multi‑agent framework that simulates the full workflow of content analysis in the social sciences using large language model (LLM) agents together with human expert intervention. Traditional content analysis relies on a manually crafted codebook, independent coding by multiple researchers, iterative discussion to resolve disagreements, and successive refinement of the codebook. This process is labor‑intensive, costly, and prone to subjectivity. SCALE automates these steps in four stages. First, a set of N LLM agents is instantiated, each endowed with a distinct persona that mimics a real social scientist (age, discipline, experience, etc.). The agents receive either a pre‑populated codebook of theory‑driven rules or an empty rule set, prompting them to generate or refine rules from scratch. Second, each agent independently annotates a batch of texts according to the current codebook, producing categorical labels. Third, when agents’ annotations diverge, they engage in a structured, up‑to‑K‑round discussion. In each round agents exchange rationales, critique each other’s decisions, and may revise their labels. Fourth, the outcomes of the discussion feed into a codebook‑evolution phase where agents collaboratively modify, add, or delete rules, optionally enriching existing rules with illustrative examples.

Human involvement is woven into the framework through two dimensions: scope (targeted vs. extensive) and role (collaborative vs. directive). Targeted intervention limits human input to the discussion phase, while extensive intervention also covers codebook evolution. In collaborative mode, agents may accept or reject expert feedback, fostering a bidirectional dialogue; in directive mode, agents must obey every instruction, providing a top‑down control mechanism. These designs draw on social influence theory and human‑computer interaction literature to mitigate algorithmic bias and preserve domain expertise.

The authors evaluate SCALE on six real‑world datasets covering brand‑consumer dialogues, cancer emotional support, narrative event sequences, Flint water‑poisoning emotions, and product‑incident sentiment. Tasks include multi‑class and multi‑label classification. Metrics such as Cohen’s κ, accuracy, annotation time, and cost savings demonstrate that SCALE achieves near‑human performance (κ≈0.78–0.84, accuracy 84–92 %) while reducing annotation time by roughly 68 % and labor costs by over 70 %. Moreover, rule modifications proposed by agents during codebook evolution are accepted by human experts in 85 % of cases, indicating that automatically generated rules are practically useful.

The paper acknowledges limitations: LLMs can produce hallucinations, discussion rounds may become expensive if many disagreements arise, and starting from an empty codebook can lead to lengthy initial negotiations. To address these issues, the authors suggest dynamic termination criteria for discussions, refined prompting strategies, and hybrid approaches that combine pre‑validated rule templates with agent‑generated suggestions.

In sum, SCALE offers a scalable, theory‑grounded, and human‑centric solution for automating content analysis. By faithfully reproducing the reflective depth of human researchers while leveraging the speed and consistency of LLMs, it opens the door to large‑scale quantitative text analysis in social science and humanities, and provides a template for extending AI‑assisted methodologies to other expert‑driven research domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment