Beyond Productivity: Rethinking the Impact of Creativity Support Tools

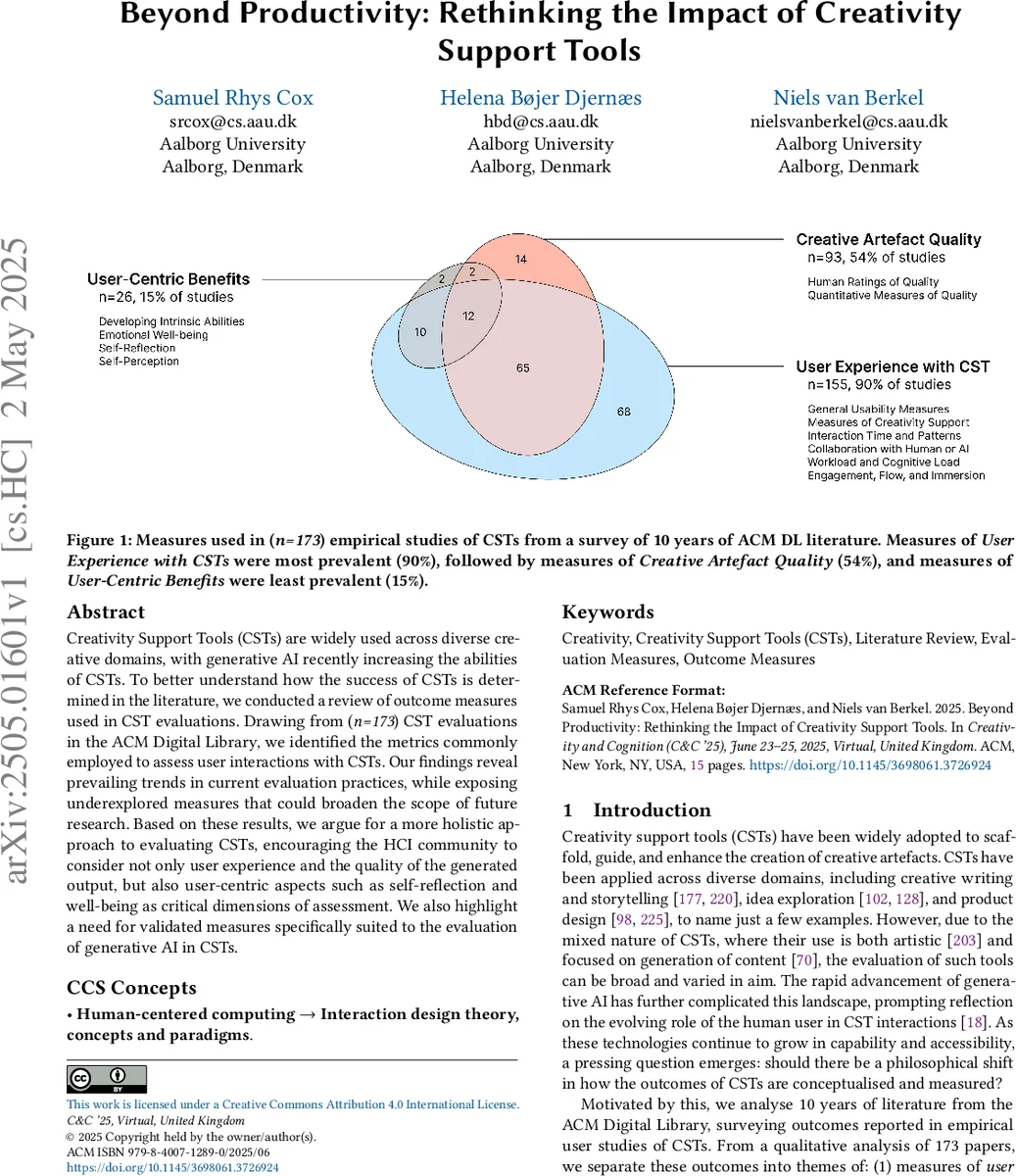

Creativity Support Tools (CSTs) are widely used across diverse creative domains, and generative AI has recently expanded their capabilities. To better understand how the literature determines the success of CSTs, we conducted a review of outcome measures used in CST evaluations. Drawing on 173 CST evaluations from the ACM Digital Library, we identified the metrics commonly employed to assess user interactions with CSTs. Our findings reveal prevailing trends in current evaluation practices and expose underexplored measures that could broaden the scope of future research. Based on these results, we argue for a more holistic approach to evaluating CSTs, encouraging the HCI community to consider not only user experience and the quality of the generated output, but also user-centric aspects such as self-reflection and well-being as critical dimensions of assessment. We also highlight the need for validated measures specifically suited to evaluating generative AI in CSTs.

💡 Research Summary

The paper conducts a systematic review of outcome measures used in the evaluation of Creativity Support Tools (CSTs) over a ten‑year span (2015‑2024) in the ACM Digital Library. Starting from an initial set of 1,241 papers retrieved with the keywords “creativity support tool” and “creativity,” the authors applied a detailed inclusion/exclusion protocol that filtered out studies lacking empirical user interaction, those focused solely on taxonomy creation, or those that evaluated only sentiment or non‑interactive content generation. After title/abstract screening and full‑text verification, 235 papers remained for deeper analysis; a subsequent coding phase removed another 62 papers due to insufficient reporting, yielding a final corpus of 173 empirical studies.
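The screening figures above can be checked with a few lines of arithmetic. The following is a minimal sketch that uses only the counts stated in this summary; the stage labels and the `stage_drops` helper are illustrative names, not part of the paper.

```python
# Counts reported in the summary above; stage labels are illustrative.
funnel = [
    ("retrieved by keyword search", 1241),
    ("after title/abstract and full-text screening", 235),
    ("after coding-phase exclusions", 173),
]


def stage_drops(stages):
    """Return (stage, count, removed_since_previous, share_of_initial) per stage."""
    initial = stages[0][1]
    previous = initial
    rows = []
    for name, count in stages:
        rows.append((name, count, previous - count, count / initial))
        previous = count
    return rows


rows = stage_drops(funnel)
for name, count, removed, share in rows:
    print(f"{name}: {count} papers (-{removed}, {share:.1%} of initial)")
```

The last row confirms that the coding phase removed 62 papers (235 minus 173), so roughly one in seven of the initially retrieved papers reached the final corpus.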

The authors performed open coding on each paper to extract every reported outcome metric, then grouped these into three high‑level themes: (1) User Experience with CST, (2) Creative Artefact Quality, and (3) User‑Centric Benefits. Within the User Experience theme, traditional usability and workload instruments dominate: System Usability Scale (SUS), NASA‑TLX, UEQ, along with ad‑hoc satisfaction and usefulness questionnaires. This theme appears in 90 % of the studies, indicating a strong bias toward measuring interface ergonomics, perceived ease of use, and immediate task load.
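To make concrete what a typical User Experience measure captures, here is a small sketch of the standard System Usability Scale scoring rule (ten items rated 1 to 5; odd-numbered items contribute the rating minus 1, even-numbered items contribute 5 minus the rating, and the sum is multiplied by 2.5). The function name and the example responses are made up for illustration and do not come from the reviewed studies.

```python
def sus_score(responses):
    """Score one participant's SUS questionnaire on the usual 0-100 scale.

    `responses` holds the ten item ratings in questionnaire order, each 1-5.
    """
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each SUS rating must be an integer from 1 to 5")

    total = 0
    for item_number, rating in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += rating - 1   # odd items are positively worded
        else:
            total += 5 - rating   # even items are negatively worded
    return total * 2.5


# Hypothetical participant who answers neutrally (3) on every item.
neutral_score = sus_score([3] * 10)
print(neutral_score)
```

A single number like this summarizes perceived usability of the tool, but says nothing about the creator's reflection, learning, or well-being, which is the gap the review highlights.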

The Creative Artefact Quality theme captures evaluations of the generated output’s novelty, usefulness, completeness, and aesthetic value. Researchers employ expert ratings, peer review, and automated similarity metrics such as BLEU or ROUGE. This theme is present in 54 % of the papers, reflecting the field’s continued emphasis on the “product” side of creativity.

The third theme, User‑Centric Benefits, is markedly under‑represented, appearing in only 15 % of the studies. It includes measures of self‑reflection, learning gains, emotional well‑being, self‑efficacy, and other personal outcomes that extend beyond the artefact itself. The authors note that while the health‑promoting effects of creative activities are well‑documented in broader literature (e.g., reductions in anxiety, improvements in self‑esteem), CST research rarely incorporates such metrics, especially in the context of generative AI‑enhanced tools.
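The three prevalence figures can be translated into approximate study counts. This sketch assumes the percentages quoted above are rounded shares of the 173-study corpus, so the resulting counts are estimates rather than figures taken from the paper.

```python
CORPUS_SIZE = 173  # final corpus size reported in the summary

# Theme prevalence as quoted above (rounded percentages).
theme_share = {
    "User Experience with CST": 0.90,
    "Creative Artefact Quality": 0.54,
    "User-Centric Benefits": 0.15,
}

# Approximate number of studies per theme, derived from the rounded shares.
approx_counts = {theme: round(share * CORPUS_SIZE) for theme, share in theme_share.items()}

# Shares that sum to more than 1 mean some studies were coded under several themes.
total_share = sum(theme_share.values())
themes_overlap = total_share > 1.0

for theme, count in approx_counts.items():
    print(f"{theme}: about {count} of {CORPUS_SIZE} studies")
print("themes overlap:", themes_overlap)
```

Because the shares add up to well over 100%, the themes are not mutually exclusive: a single study can report usability, artefact quality, and user-centric measures at once.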

Venue analysis shows that most of the papers appeared in four venues: CHI (55), Creativity and Cognition (28), DIS (16), and UIST (16). The studies span domains such as education (from kindergarten to university), industry (designers, live coders, writers), entertainment, and accessibility. Despite this diversity, the distribution of outcome measures remains consistent across domains, which points to a field‑wide evaluation paradigm focused on usability and artefact quality.
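As a quick check on the venue figures, the sketch below sums the four listed venue counts and compares them with the 173-study corpus. It uses only numbers from this summary; how the remaining papers are distributed across other venues is not stated here.

```python
CORPUS_SIZE = 173  # final corpus size reported in the summary

# Paper counts per venue as listed above.
venue_counts = {
    "CHI": 55,
    "Creativity and Cognition": 28,
    "DIS": 16,
    "UIST": 16,
}

top_four_total = sum(venue_counts.values())
top_four_share = top_four_total / CORPUS_SIZE
other_venues = CORPUS_SIZE - top_four_total  # papers from venues not listed here

print(f"listed venues: {top_four_total} papers ({top_four_share:.1%} of the corpus)")
print(f"other venues: {other_venues} papers")
```

The four listed venues account for about two thirds of the corpus, with CHI alone contributing close to a third.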

From these findings, the authors argue for a paradigm shift toward a holistic evaluation framework that balances productivity‑oriented metrics with user‑centric outcomes. They propose several concrete actions:

- Validate and extend existing user‑experience scales to capture nuances introduced by generative AI, such as perceived agency, trust in AI suggestions, and collaborative flow.

- Develop AI‑specific collaboration metrics, e.g., Human‑AI Co‑Creativity Efficiency, AI Contribution Ratio, and measures of sustained creative flow during iterative prompting.

- Integrate established psychological instruments (PANAS for affect, Flow State Scale, Self‑Efficacy Scale) to assess long‑term benefits like learning, emotional resilience, and identity development.

- Encourage multi‑disciplinary collaboration among HCI researchers, cognitive scientists, designers, and AI developers to co‑create standardized, validated measurement suites.

- Incorporate these measures early in the design process, ensuring that tools are evaluated not only for the quality of their output but also for their impact on the creator’s well‑being and growth.

The paper concludes that the current evaluation landscape for CSTs is narrowly focused on immediate usability and artefact quality, which limits our understanding of how these tools affect creators holistically. By broadening the metric set to include self‑reflection, learning, and well‑being—especially in the era of powerful generative models—future research can better align CST development with the ultimate goal of enhancing human creativity and quality of life.
