Masked strategies for images with small objects

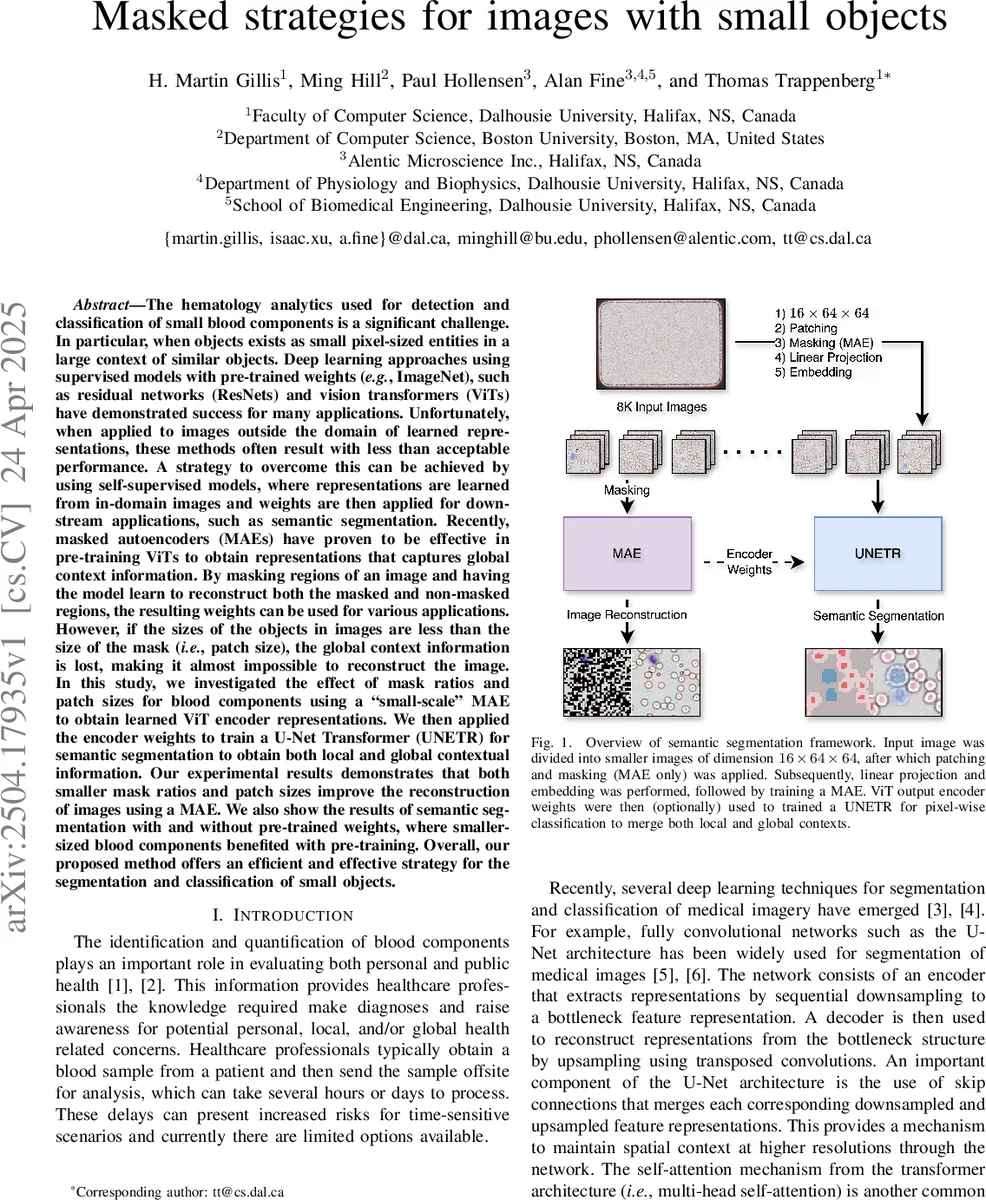

The hematology analytics used for detection and classification of small blood components is a significant challenge. In particular, when objects exists as small pixel-sized entities in a large context of similar objects. Deep learning approaches using supervised models with pre-trained weights, such as residual networks and vision transformers have demonstrated success for many applications. Unfortunately, when applied to images outside the domain of learned representations, these methods often result with less than acceptable performance. A strategy to overcome this can be achieved by using self-supervised models, where representations are learned and weights are then applied for downstream applications. Recently, masked autoencoders have proven to be effective to obtain representations that captures global context information. By masking regions of an image and having the model learn to reconstruct both the masked and non-masked regions, weights can be used for various applications. However, if the sizes of the objects in images are less than the size of the mask, the global context information is lost, making it almost impossible to reconstruct the image. In this study, we investigated the effect of mask ratios and patch sizes for blood components using a MAE to obtain learned ViT encoder representations. We then applied the encoder weights to train a U-Net Transformer for semantic segmentation to obtain both local and global contextual information. Our experimental results demonstrates that both smaller mask ratios and patch sizes improve the reconstruction of images using a MAE. We also show the results of semantic segmentation with and without pre-trained weights, where smaller-sized blood components benefited with pre-training. Overall, our proposed method offers an efficient and effective strategy for the segmentation and classification of small objects.

💡 Research Summary

This paper addresses the challenging problem of detecting and classifying extremely small blood components—particularly platelets and platelet aggregates—in high‑resolution microscopy images. Conventional supervised deep‑learning models pre‑trained on large natural‑image datasets such as ImageNet often fail to generalize to this domain because the learned representations lack the fine‑grained, global context needed for pixel‑level discrimination of objects that may be only a few pixels in size. To overcome this limitation, the authors propose a two‑stage framework that combines self‑supervised masked autoencoders (MAE) with a Vision‑Transformer‑based UNETR segmentation network.

In the first stage, a “small‑scale” MAE is trained on patches extracted from 16‑channel, 3 K × 4 K blood‑sample images captured by the Prospector lens‑less near‑field microscope. The original images are divided into 64 × 64 tiles (16 × 64 × 64 tensors) to drastically reduce memory requirements. The MAE architecture follows the Hugging‑Face implementation, using a ViT encoder with 6 layers, 6 attention heads, an embedding dimension of 192, and a hidden MLP dimension of 768. Crucially, the authors explore three patch sizes (2, 4, 8 pixels) and three mask ratios (0.5, 0.75, 0.9). Experiments show that smaller patches and lower mask ratios lead to markedly lower reconstruction error (mean absolute error) because the mask does not completely obscure the tiny objects. With a patch size of 2 px and a mask ratio of 0.5, the MAE achieves the best reconstruction quality after 100 epochs (batch size 16, Adam optimizer, OneCycleLR schedule).

The second stage leverages the encoder weights learned by the MAE to initialize the ViT backbone of a UNETR model. UNETR integrates the transformer encoder with a U‑Net‑style decoder via skip connections, thereby preserving both long‑range dependencies (global context) and high‑resolution spatial details (local context). Three patch sizes (2, 4, 8) and six feature‑size settings (16, 32, 64, 128, 256, 512) are evaluated. For patch size 2, only the final encoder layer (layer 6) is passed to the decoder; for patch size 4, layers 6 and 3 are used; for patch size 8, layers 6, 4, and 2 are combined, providing multi‑scale information. The segmentation dataset comprises 2 382 tiles with pixel‑wise labels for nine classes (background, WBC, platelet, RBC interior, RBC exterior, bead, artifact, debris, bubble). Because platelets occupy only ~2 % of the total pixels, class imbalance is severe. Five‑fold cross‑validation is performed, with data augmentation (random flips) applied consistently to images and masks.

Results demonstrate that pre‑training the encoder yields substantial gains over a randomly initialized UNETR. Overall accuracy improves from ~0.96 to ~0.98, and the platelet F1‑score rises from 0.71 (random init) to 0.94–0.95 (pre‑trained). Smaller patch sizes (2 px and 4 px) consistently outperform patch 8, confirming that preserving fine‑grained detail during MAE pre‑training is essential for downstream segmentation of tiny objects. The best configuration (patch 2, feature size 512) achieves the highest per‑class F1‑scores across all categories, while still fitting within the memory constraints of a modest GPU thanks to the tile‑based processing and reduced embedding dimensions.

The authors also highlight the practical relevance of their approach: the “divide‑and‑conquer” tiling strategy enables on‑device training and inference on the Prospector platform, which is designed for rapid point‑of‑care blood analysis. By reducing the ViT encoder’s depth and head count, the model can be deployed on hardware with limited RAM without sacrificing segmentation quality.

In summary, the paper makes three key contributions: (1) an efficient, tile‑based MAE pre‑training pipeline tailored for images containing sub‑pixel objects; (2) empirical evidence that lower mask ratios and smaller patch sizes preserve the information needed to reconstruct and later segment tiny blood components; and (3) a demonstration that initializing a UNETR with these domain‑specific weights markedly improves semantic segmentation of small objects, especially platelets. The work opens avenues for further research such as adaptive masking, multi‑scale pyramid encoders, or integration with downstream classification tasks for comprehensive blood‑cell analytics.

Comments & Academic Discussion

Loading comments...

Leave a Comment