GCRayDiffusion: Pose-Free Surface Reconstruction via Geometric Consistent Ray Diffusion

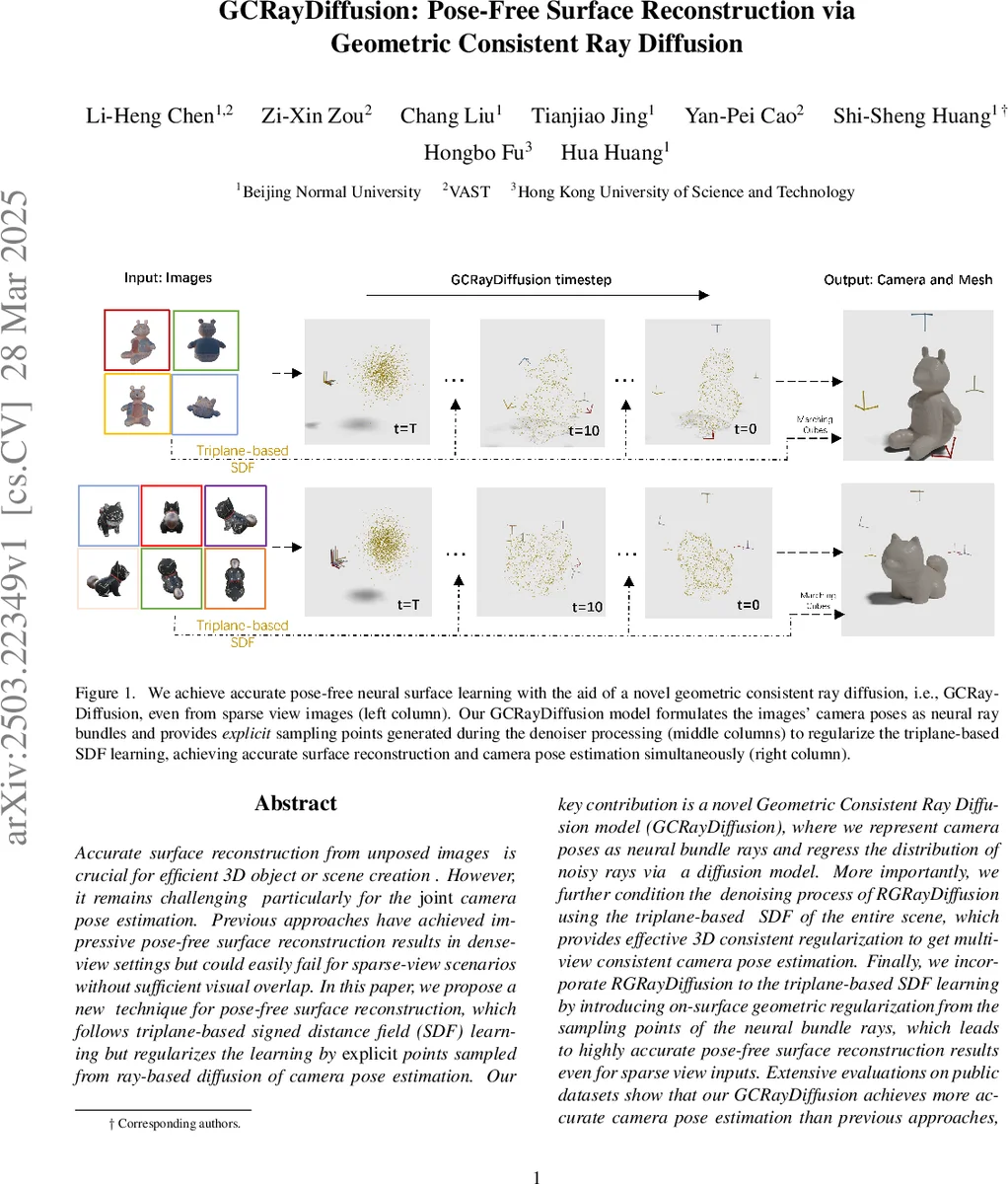

Accurate surface reconstruction from unposed images is crucial for efficient 3D object or scene creation. However, it remains challenging, particularly for the joint camera pose estimation. Previous approaches have achieved impressive pose-free surface reconstruction results in dense-view settings, but could easily fail for sparse-view scenarios without sufficient visual overlap. In this paper, we propose a new technique for pose-free surface reconstruction, which follows triplane-based signed distance field (SDF) learning but regularizes the learning by explicit points sampled from ray-based diffusion of camera pose estimation. Our key contribution is a novel Geometric Consistent Ray Diffusion model (GCRayDiffusion), where we represent camera poses as neural bundle rays and regress the distribution of noisy rays via a diffusion model. More importantly, we further condition the denoising process of RGRayDiffusion using the triplane-based SDF of the entire scene, which provides effective 3D consistent regularization to achieve multi-view consistent camera pose estimation. Finally, we incorporate RGRayDiffusion into the triplane-based SDF learning by introducing on-surface geometric regularization from the sampling points of the neural bundle rays, which leads to highly accurate pose-free surface reconstruction results even for sparse-view inputs. Extensive evaluations on public datasets show that our GCRayDiffusion achieves more accurate camera pose estimation than previous approaches, with geometrically more consistent surface reconstruction results, especially given sparse-view inputs.

💡 Research Summary

The paper introduces GCRayDiffusion, a novel framework that simultaneously estimates camera poses and reconstructs a high‑quality surface from unposed, sparsely sampled images. The core idea is to replace the traditional 6‑DoF pose representation with a set of “neural bundle rays” for each image. Each ray is a 7‑dimensional vector comprising a unit direction, a moment (the cross product of the camera center and direction), and an explicit depth value. This depth allows the endpoint of each ray to be computed directly, establishing a differentiable link between camera pose and the 3D surface.

Pose estimation is cast as a diffusion process over these ray bundles. Starting from a noisy ray set Rₜ, a denoiser network g_φ predicts the added noise ε conditioned on three inputs: (1) the current noisy rays, (2) image features extracted by an encoder, and (3) the signed distance field (SDF) values of the ray endpoints obtained from a triplane‑based SDF representation of the whole scene. By conditioning on the global SDF, the denoiser enforces geometric consistency: rays are nudged toward positions that lie on the true surface, which dramatically improves multi‑view alignment, especially when visual overlap is minimal. The diffusion loss is a simple L2 distance between predicted and true noise, following standard diffusion training.

The diffusion model is tightly integrated with surface learning. As the denoiser refines the ray bundles, the endpoints of the rays are sampled and fed back into the SDF network as explicit on‑surface points. These points are used to impose on‑surface constraints (e.g., encouraging SDF≈0) and to reinforce the Eikonal regularization. Consequently, pose errors do not propagate unchecked into the geometry, and the refined geometry in turn provides a stronger prior for subsequent pose updates. This creates a closed‑loop system where pose estimation and surface reconstruction mutually benefit each other.

Experiments on the Objaverse and Google Scanned Object datasets compare GCRayDiffusion against classic SfM (COLMAP), recent pose‑diffusion methods (PoseDiffusion, RayDiffusion), and state‑of‑the‑art neural surface pipelines (FORGE, DUSt3R). Under sparse‑view conditions (5–8 images), GCRayDiffusion reduces average rotation error to about 2° and translation error to roughly 3 cm, outperforming baselines by 30‑50 %. Surface quality metrics such as Chamfer‑L1 distance and F‑score also show significant gains, with finer detail preservation on thin structures and complex geometry. Qualitative results display smoother meshes with fewer artifacts.

Key contributions include: (1) the neural bundle ray representation that embeds depth for direct surface sampling, (2) a geometry‑aware diffusion denoiser that conditions on a global SDF to achieve multi‑view consistent pose estimation, and (3) the use of diffusion‑generated ray endpoints as explicit regularizers for triplane‑based SDF learning. Limitations are noted: the method incurs high memory consumption due to many rays and diffusion steps, and it currently handles only static scenes with opaque geometry. Future work aims at efficient ray selection, adaptive diffusion schedules, multi‑scale triplane extensions, and applying the approach to dynamic or reflective scenes.

Overall, GCRayDiffusion presents a compelling solution to pose‑free 3D reconstruction, delivering accurate camera poses and geometrically consistent surfaces even when only a few, weakly overlapping images are available.

Comments & Academic Discussion

Loading comments...

Leave a Comment