Text-Derived Relational Graph-Enhanced Network for Skeleton-Based Action Segmentation

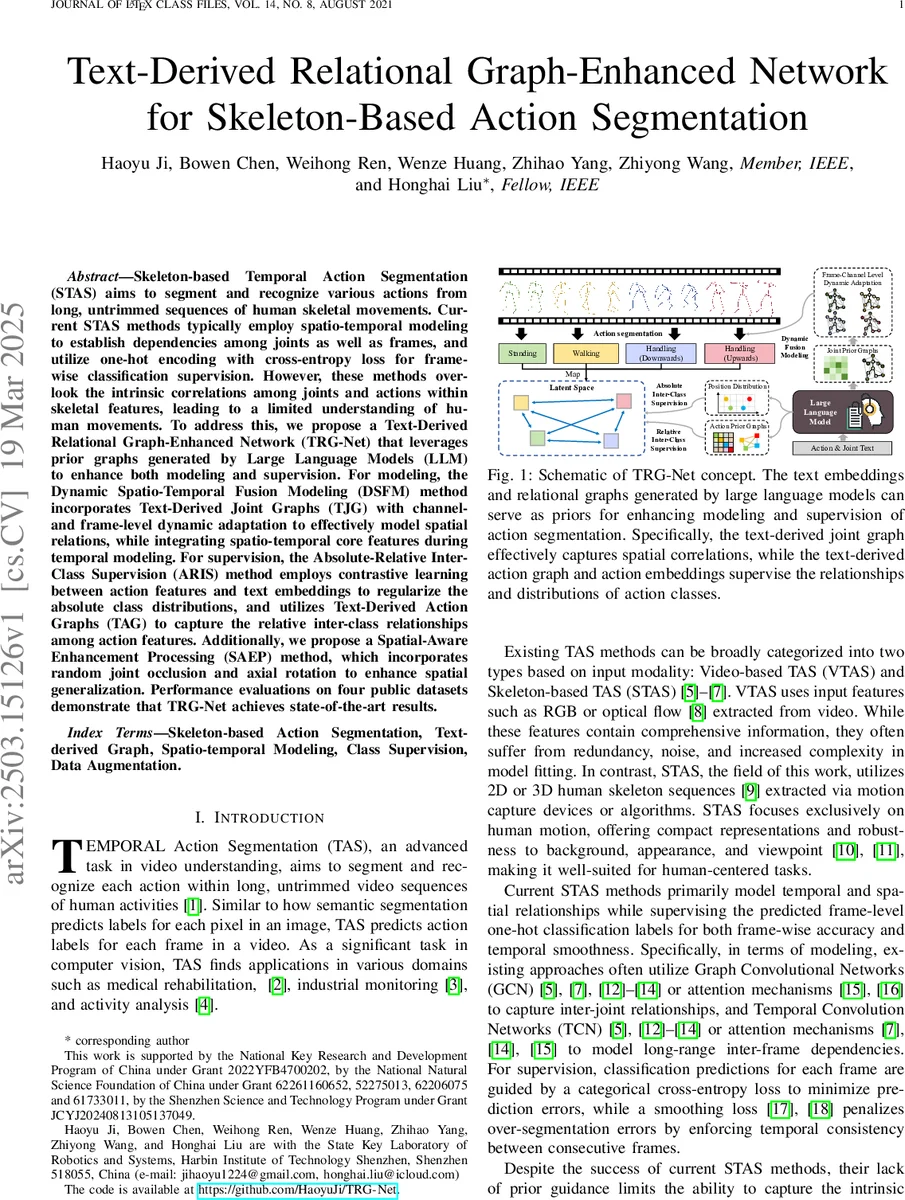

Skeleton-based Temporal Action Segmentation (STAS) aims to segment and recognize various actions from long, untrimmed sequences of human skeletal movements. Current STAS methods typically employ spatio-temporal modeling to establish dependencies among joints as well as frames, and utilize one-hot encoding with cross-entropy loss for frame-wise classification supervision. However, these methods overlook the intrinsic correlations among joints and actions within skeletal features, leading to a limited understanding of human movements. To address this, we propose a Text-Derived Relational Graph-Enhanced Network (TRG-Net) that leverages prior graphs generated by Large Language Models (LLM) to enhance both modeling and supervision. For modeling, the Dynamic Spatio-Temporal Fusion Modeling (DSFM) method incorporates Text-Derived Joint Graphs (TJG) with channel- and frame-level dynamic adaptation to effectively model spatial relations, while integrating spatio-temporal core features during temporal modeling. For supervision, the Absolute-Relative Inter-Class Supervision (ARIS) method employs contrastive learning between action features and text embeddings to regularize the absolute class distributions, and utilizes Text-Derived Action Graphs (TAG) to capture the relative inter-class relationships among action features. Additionally, we propose a Spatial-Aware Enhancement Processing (SAEP) method, which incorporates random joint occlusion and axial rotation to enhance spatial generalization. Performance evaluations on four public datasets demonstrate that TRG-Net achieves state-of-the-art results.

💡 Research Summary

This paper addresses two fundamental shortcomings of current skeleton‑based temporal action segmentation (STAS) methods: (1) the inability to capture fine‑grained, action‑dependent spatial relationships among joints, and (2) the lack of semantic supervision that reflects inter‑class similarities. To overcome these issues, the authors propose TRG‑Net, a framework that injects prior knowledge derived from large language models (LLMs) into both the modeling and supervision stages.

First, descriptive sentences for each joint and each action are generated automatically with GPT‑4. These sentences are encoded by a pre‑trained BERT model, producing joint embeddings (E_J) and action embeddings (E_A). Pairwise Euclidean distances between embeddings are normalized to construct two relational graphs: the Text‑Derived Joint Graph (TJG) that encodes semantic affinities among joints, and the Text‑Derived Action Graph (TAG) that encodes affinities among action classes. These graphs serve as external priors.

The modeling component, Dynamic Spatio‑Temporal Fusion Modeling (DSFM), consists of a spatial branch and a temporal‑fusion branch. In the spatial branch, the input skeleton sequence X∈ℝ^{C₀×T×V} passes through a channel‑level dynamic GCN and a frame‑level dynamic GCN. Both GCNs adapt their adjacency matrices in real time using TJG, allowing the network to re‑weight joint connections according to the current frame’s features. This dynamic adaptation enables the model to represent, for example, the “hand‑elbow” relationship differently when the subject is walking versus sitting. The temporal‑fusion branch preserves the core spatial features while applying a Linformer‑based temporal convolution, thereby achieving long‑range temporal reasoning without losing spatial context.

The supervision component, Absolute‑Relative Inter‑Class Supervision (ARIS), introduces two complementary losses. The absolute part uses contrastive (InfoNCE) learning between the per‑frame action feature f_i and its corresponding text embedding a_i, encouraging each feature to align closely with its textual description. This regularizes the absolute distribution of class features. The relative part leverages TAG: the normalized inverse distances between action embeddings define a target similarity matrix R. A KL‑divergence loss forces the similarity of learned action features to match R, pulling semantically similar actions (e.g., “walking” and “running”) together and pushing dissimilar ones apart. Together, these losses embed semantic structure directly into the feature space.

To improve robustness, the authors propose Spatial‑Aware Enhancement Processing (SAEP), a data‑augmentation scheme that (i) randomly masks a subset of joints in each frame (random joint occlusion) and (ii) applies random axial rotations to the whole skeleton. These augmentations simulate occlusion and viewpoint changes, encouraging the network to rely on global motion cues rather than fixed joint positions.

Experiments are conducted on four public benchmarks: PKU‑MMD (cross‑subject and cross‑view splits), LARa, and MCFS‑130. TRG‑Net consistently outperforms state‑of‑the‑art baselines—including GCN‑TCN hybrids, pure Transformers, and the prior LaSA method—by 2–4 percentage points in frame accuracy and by notable margins in edit distance and F1@10/25. Ablation studies reveal that removing TJG, TAG, or SAEP each degrades performance by 1.5–2.2 %, confirming the contribution of every component. The model’s parameter count and FLOPs remain comparable to existing Transformer‑based approaches, preserving real‑time feasibility.

The paper also discusses limitations: the reliance on predefined joint/action names for text generation means that adding new actions requires regenerating the graphs; the fixed dimensionality of BERT embeddings may limit discrimination of extremely subtle motion differences; and the contrastive loss assumes high‑quality textual descriptions, which may not always be available. Future work is suggested to explore dynamic graph updating via multimodal prompt tuning and to integrate additional modalities (e.g., RGB video or audio) for richer semantic alignment.

In summary, TRG‑Net demonstrates that leveraging LLM‑derived textual priors can substantially enhance both spatial‑temporal modeling and semantic supervision in skeleton‑based action segmentation, achieving state‑of‑the‑art performance while maintaining efficiency.

Comments & Academic Discussion

Loading comments...

Leave a Comment