Societal citations undermine the function of the science reward system

Citations in the scientific literature do not simply reflect relationships between pieces of knowledge; they are also shaped by non-objective, societal factors. Citation bias, irresponsible citation, and citation manipulation are widespread and have become a serious and growing problem. However, the consequences of mixing societal factors into the literature system have been difficult to assess, because there has been no observable literature system free of such factors to serve as a baseline. In this paper, we address this problem by constructing a mathematical theorem network that represents a logic-based, objective knowledge system. Comparing the mathematical theorem network with scientific citation networks, we find that the two types of network differ significantly in both structure and function. In particular, the reward function of citation networks is impaired: the scientific citation network fails to give more recognition to more disruptive results, whereas the mathematical theorem network does. To account for these differences, we develop a network generation model that creates two types of links, logical and societal. The model parameter $q$, which we call the human influence factor, controls the number of societal links and thus regulates the degree to which societal factors are mixed into the network. With this design, the model successfully reproduces the differences among the real networks. These results suggest that the presence of societal factors undermines the function of the scientific reward system. To improve the status quo, we advocate reforming the reference-list format of papers, urging journals to require authors to disclose logical references and social references separately.

💡 Research Summary

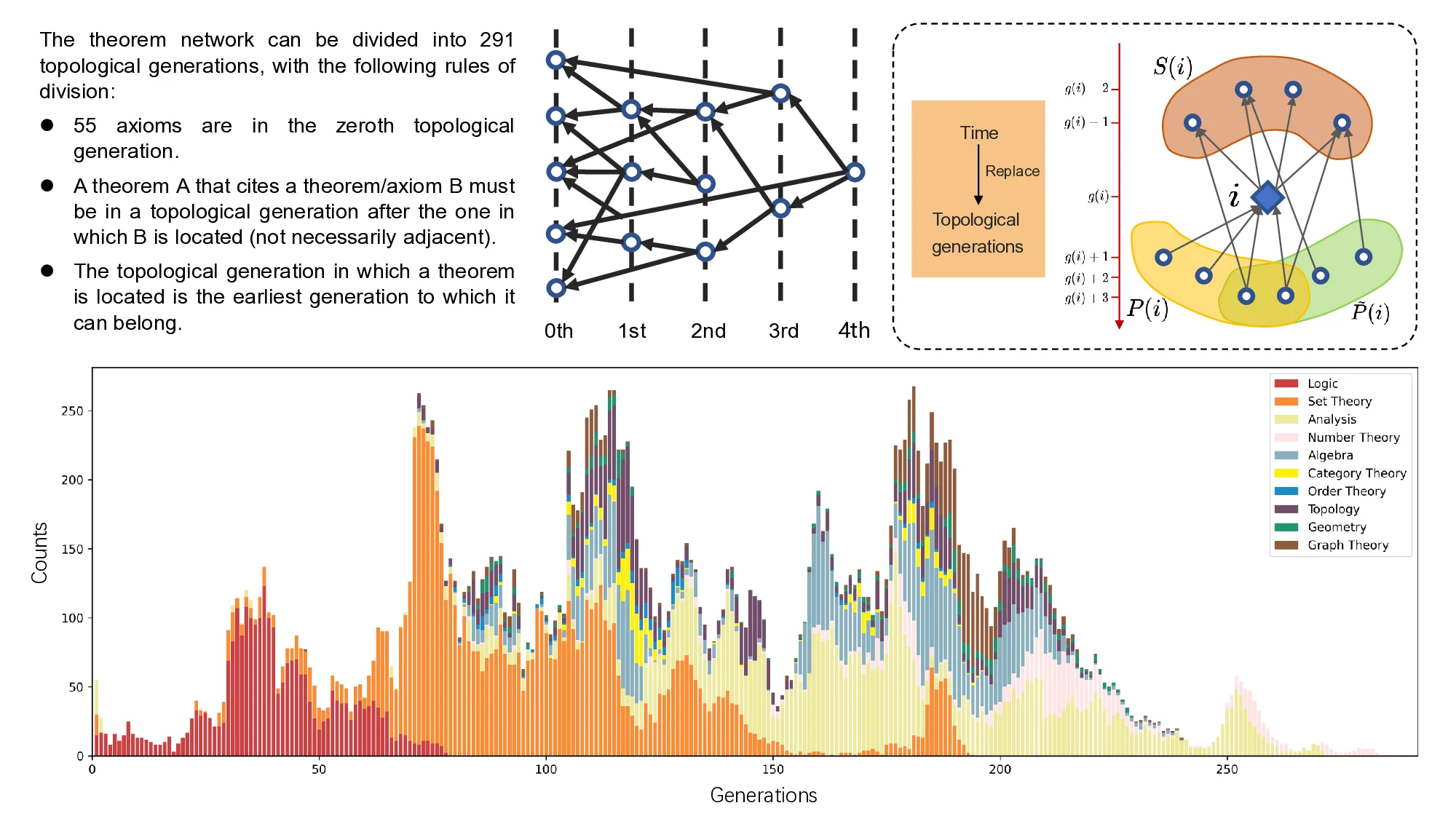

The paper tackles the pervasive problem that scientific citations are not pure reflections of knowledge relationships but are heavily contaminated by societal influences such as journal prestige, author reputation, country, gender, and even title length. Because there is no real-world literature system free of these biases, the authors construct an “ideal” knowledge network using formal mathematical theorems, which they argue are linked solely by logical deduction. They extract a theorem network from the Metamath database (26 426 nodes, 466 480 directed links) and compare it with two real citation networks of comparable size: a general scientific citation network from SciSciNet (39 028 nodes, 169 472 links) and the high‑energy‑physics theory citation network (cit‑HepTh, 27 400 nodes, 351 884 links).
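
The three networks differ in density as well as size. Because every directed link has exactly one source, the mean out-degree of each network is simply its link count divided by its node count. The short sketch below computes this from the counts quoted above; the arithmetic is ours, not a figure reported in the paper. It suggests that the theorem network is actually the densest of the three, so the heavier out-degree tails of the citation networks are not explained by more links per node on average.

```python
# Node and link counts as quoted in the summary.
NETWORKS = {
    "Metamath theorem network": (26_426, 466_480),
    "SciSciNet citation network": (39_028, 169_472),
    "cit-HepTh": (27_400, 351_884),
}


def mean_out_degree(nodes: int, links: int) -> float:
    """Each directed link has exactly one source, so mean out-degree = links / nodes."""
    return links / nodes


if __name__ == "__main__":
    for name, (n, m) in NETWORKS.items():
        print(f"{name}: {mean_out_degree(n, m):.2f} links per node")
```

Running it prints 17.65, 4.34, and 12.84 links per node, respectively.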

Structural analysis reveals three major differences. First, the out‑degree distribution of the theorem network follows an exponential‑like decay, whereas both citation networks exhibit heavy‑tailed, power‑law out‑degree distributions, indicating that a few papers cite disproportionately many others. Second, the self‑degree correlation (out‑degree vs. in‑degree) is negative in the theorem network (Spearman ρ = −0.325) but positive in the citation networks (ρ = 0.148 and 0.353). In citation networks, papers that cite many others therefore also tend to receive many citations, a pattern absent from the purely logical system. Third, clustering coefficients are markedly higher in the citation networks (0.108–0.157) than in the theorem network (0.042), reflecting the prevalence of triadic closure driven by social factors.
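
The two statistics behind the second and third differences can be computed in plain Python. This is a minimal sketch under stated assumptions, not the authors' code: edges are `(citing, cited)` pairs; Spearman's ρ is taken over every node that appears in at least one edge, with tied degrees given averaged ranks; and clustering is the mean local coefficient of the undirected projection, with nodes of degree below 2 counted as 0. The paper may use a different clustering variant (for example, a directed one). All function names are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation (undefined if either input is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5


def self_degree_correlation(edges):
    """Spearman rho between each node's out-degree and in-degree."""
    out_deg, in_deg, nodes = defaultdict(int), defaultdict(int), set()
    for citing, cited in edges:
        out_deg[citing] += 1
        in_deg[cited] += 1
        nodes.update((citing, cited))
    nodes = list(nodes)
    # Spearman's rho is the Pearson correlation of the ranks.
    return pearson(average_ranks([out_deg[v] for v in nodes]),
                   average_ranks([in_deg[v] for v in nodes]))


def average_clustering(edges):
    """Mean local clustering coefficient of the undirected projection."""
    nbrs = defaultdict(set)
    for u, v in edges:
        if u != v:  # ignore self-loops
            nbrs[u].add(v)
            nbrs[v].add(u)
    if not nbrs:
        return 0.0
    total = 0.0
    for ns in nbrs.values():
        k = len(ns)
        if k < 2:
            continue  # contributes 0 to the average
        closed = sum(1 for a, b in combinations(ns, 2) if b in nbrs[a])
        total += 2 * closed / (k * (k - 1))
    return total / len(nbrs)
```

On the toy graph `[(1, 0), (2, 0), (2, 1), (3, 2)]`, `self_degree_correlation` returns −0.5 and `average_clustering` returns 7/12 (about 0.583).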

Functionally, the authors use the disruption metric, a recently proposed measure of a paper's originality, to test whether the reward system (citations) aligns with scientific novelty. In the theorem network, disruption and citation count are positively correlated (Pearson r = 0.193), and highly cited theorems tend to be more disruptive. In contrast, the citation networks show negligible or slightly negative correlations (r = 0.031 and −0.040), and the most cited papers in cit‑HepTh are actually less disruptive. Moreover, the Gini coefficient of normalized disruption is lowest for the theorem network (0.040) and substantially higher for the citation networks (0.143–0.156), indicating that societal factors increase inequality in the distribution of originality.
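
A disruption metric of this kind is usually the CD-style index D = (n_i − n_j) / (n_i + n_j + n_k). Here n_i counts later works that cite the focal work but none of its references, n_j counts later works that cite both, and n_k counts later works that cite the references but not the focal work. The sketch below computes D on a static edge list and pairs it with a standard Gini coefficient. It relies on two assumptions that are ours, not the paper's. First, node ids are integers in publication order, so "later" means a larger id. Second, the summary does not define "normalized disruption," so the hypothetical `normalized` helper simply rescales D from [−1, 1] to [0, 1] so that the Gini coefficient is defined.

```python
from collections import defaultdict


def disruption(focal, edges):
    """CD-style disruption index of `focal` from (citing, cited) pairs.

    Assumes integer node ids in publication order (only ids > focal count as
    later work). Returns None when no later work cites the focal work or its
    references, since D is undefined there.
    """
    refs = defaultdict(set)  # citing paper -> set of papers it cites
    for citing, cited in edges:
        refs[citing].add(cited)
    focal_refs = refs.get(focal, set())
    n_i = n_j = n_k = 0
    for paper, cited in refs.items():
        if paper <= focal:
            continue  # skip earlier works and the focal work itself
        cites_focal = focal in cited
        cites_refs = bool(cited & focal_refs)
        if cites_focal and not cites_refs:
            n_i += 1  # cites the focal work only
        elif cites_focal:
            n_j += 1  # cites the focal work and at least one of its references
        elif cites_refs:
            n_k += 1  # bypasses the focal work, citing only its references
    total = n_i + n_j + n_k
    return (n_i - n_j) / total if total else None


def gini(values):
    """Gini coefficient of non-negative values (0 means perfect equality)."""
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


def normalized(d):
    """Hypothetical rescaling of D into [0, 1]; not the paper's definition."""
    return (d + 1) / 2
```

For example, with edges `[(1, 0), (2, 0), (2, 1), (3, 2), (4, 2), (4, 0), (5, 1), (6, 2)]`, work 2 is cited alone by works 3 and 6, together with one of its references by work 4, and bypassed by work 5. This gives `disruption(2, edges) == 0.25`.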

To explain these observations, the authors propose a generative model that distinguishes "logical links," created according to vector‑based similarity across ten mathematical sub‑domains, from "societal links," added with probability q to neighbors of the logical target to mimic triadic closure. At each time step, a new node adds at least one logical link; with probability q it also creates additional societal links, which can generate power‑law out‑degree tails and higher clustering. When q = 0, the model reproduces the theorem network; increasing q yields structures that match the real citation networks, supporting the hypothesis that societal citations are responsible for the observed structural and functional deviations.
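
A toy version helps make the mechanism concrete. Every specific choice here is an assumption for illustration, not the authors' implementation. Each node gets a random positive 10-dimensional vector standing in for the ten sub-domains. Its single logical link goes to the earlier node with the highest cosine similarity. Each neighbor of that logical target (a node the target cites or is cited by) is then cited with probability `q`, which closes a triangle. The function names are hypothetical.

```python
import math
import random


def cosine(a, b):
    """Cosine similarity of two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def generate_network(n_nodes, q, n_domains=10, seed=0):
    """Toy logical + societal growth model; returns (citing, cited) edges."""
    rng = random.Random(seed)
    vectors, nbrs, edges = [], [], []
    for new in range(n_nodes):
        # Hypothetical topic vector; the small offset keeps its norm non-zero.
        vec = [rng.random() + 1e-9 for _ in range(n_domains)]
        vectors.append(vec)
        nbrs.append(set())
        if new == 0:
            continue  # the first node has nothing to cite
        # Logical link: the most similar earlier node.
        target = max(range(new), key=lambda old: cosine(vec, vectors[old]))
        cited = {target}
        # Societal links: each neighbor of the logical target with probability q
        # (triadic closure: new -> target, target -- other, so new -> other).
        for other in sorted(nbrs[target]):
            if rng.random() < q:
                cited.add(other)
        for old in sorted(cited):
            edges.append((new, old))
            nbrs[new].add(old)
            nbrs[old].add(new)
    return edges
```

With `q = 0`, every node after the first has out-degree exactly 1, and the result is a tree. Raising `q` adds triangle-closing links whose number grows with the degree of the logical target, so well-connected nodes attract more societal citations. This simplified rule is only meant to show the mechanism. The sketch does not establish that it yields power-law tails at scale.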

Finally, the authors argue that the mixing of logical and societal citations undermines the scientific reward system and propose a concrete reform: journals should require authors to separate “logical references” (those essential to the argument) from “social references” (e.g., for persuasion, reputation building). Such a bifurcated reference list would make citation‑based metrics more transparent and help restore the alignment between scientific originality and recognition.
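
The proposed reform amounts to a small change in the structure of a reference list. In the hypothetical sketch below, each paper records logical and social references in separate fields, and citations can then be tallied by type, so that a metric could, for instance, reward logical citations alone. The class, field names, and counting rule are illustrative only. They are not part of any journal's existing format.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Paper:
    """Hypothetical record with a bifurcated reference list."""
    paper_id: str
    logical_refs: list[str] = field(default_factory=list)  # essential to the argument
    social_refs: list[str] = field(default_factory=list)   # e.g. context, persuasion


def citation_counts(papers):
    """Tally logical and social citations received by each paper id."""
    logical, social = Counter(), Counter()
    for p in papers:
        logical.update(set(p.logical_refs))  # set(): count each citing paper once
        social.update(set(p.social_refs))
    return logical, social


papers = [
    Paper("A"),
    Paper("B", logical_refs=["A"]),
    Paper("C", logical_refs=["A"], social_refs=["B"]),
]
logical, social = citation_counts(papers)
```

Here paper A receives two logical citations, while B receives only one social citation and no logical ones. Merging the lists, as current reference formats do, would hide exactly this distinction.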
