Secure Event-Triggered Distributed Kalman Filters for State Estimation over Wireless Sensor Networks

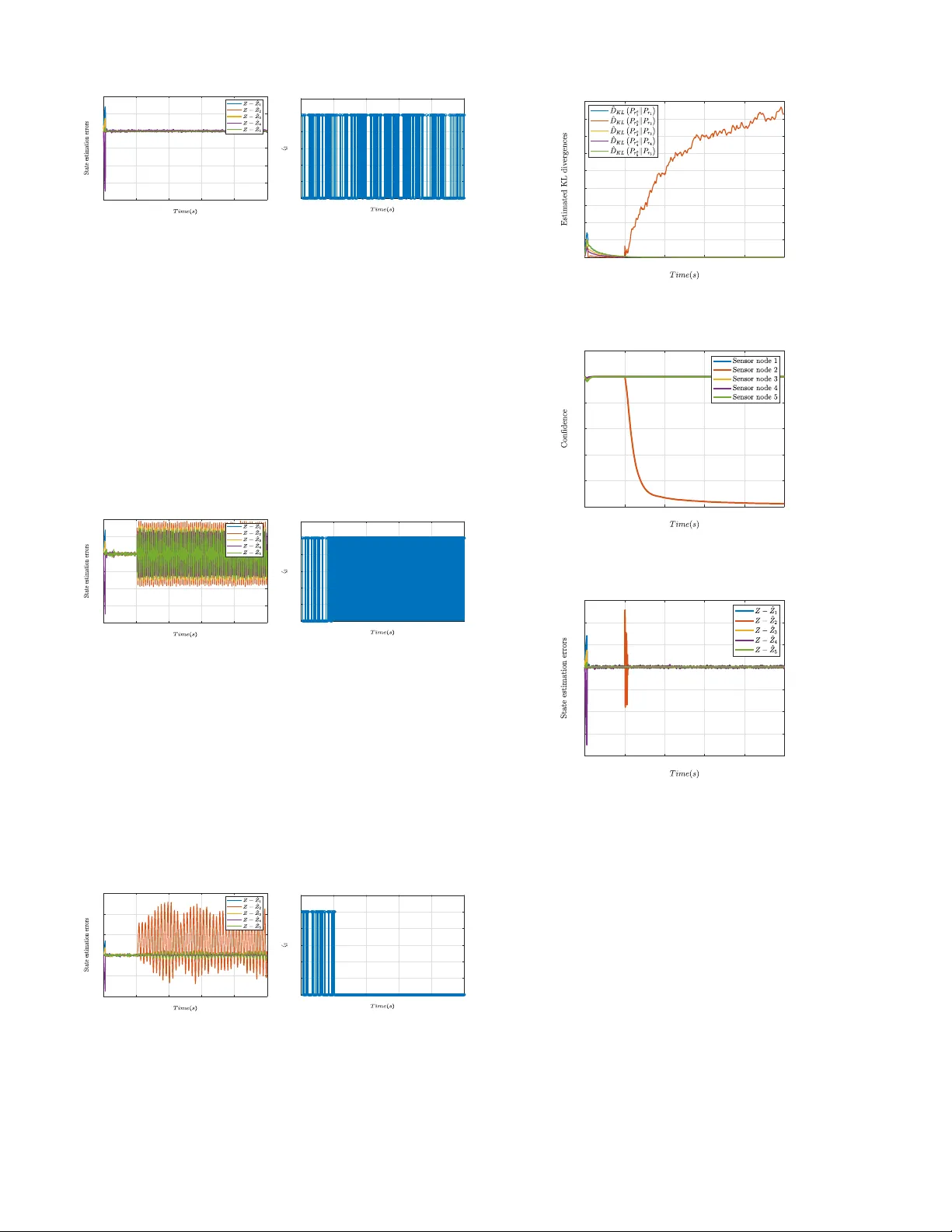

In this paper, we analyze the adverse effects of cyber-physical attacks on the event-triggered distributed Kalman filter (DKF) and mitigate their impact. We first show that although event-triggered mechanisms are highly desirable, the attacke…

Authors: Aquib Mustafa, Majid Mazouchi, Hamidreza Modares