A Dual Alternating Direction Method of Multipliers for Image Decomposition and Restoration

In this paper, we develop a dual alternating direction method of multipliers (ADMM) for an image decomposition model. In this model, an image is divided into two meaningful components, i.e., a cartoon part and a texture part. The optimization algorit…

Authors: Qingsong Wang, Chengjing Wang, Peipei Tang

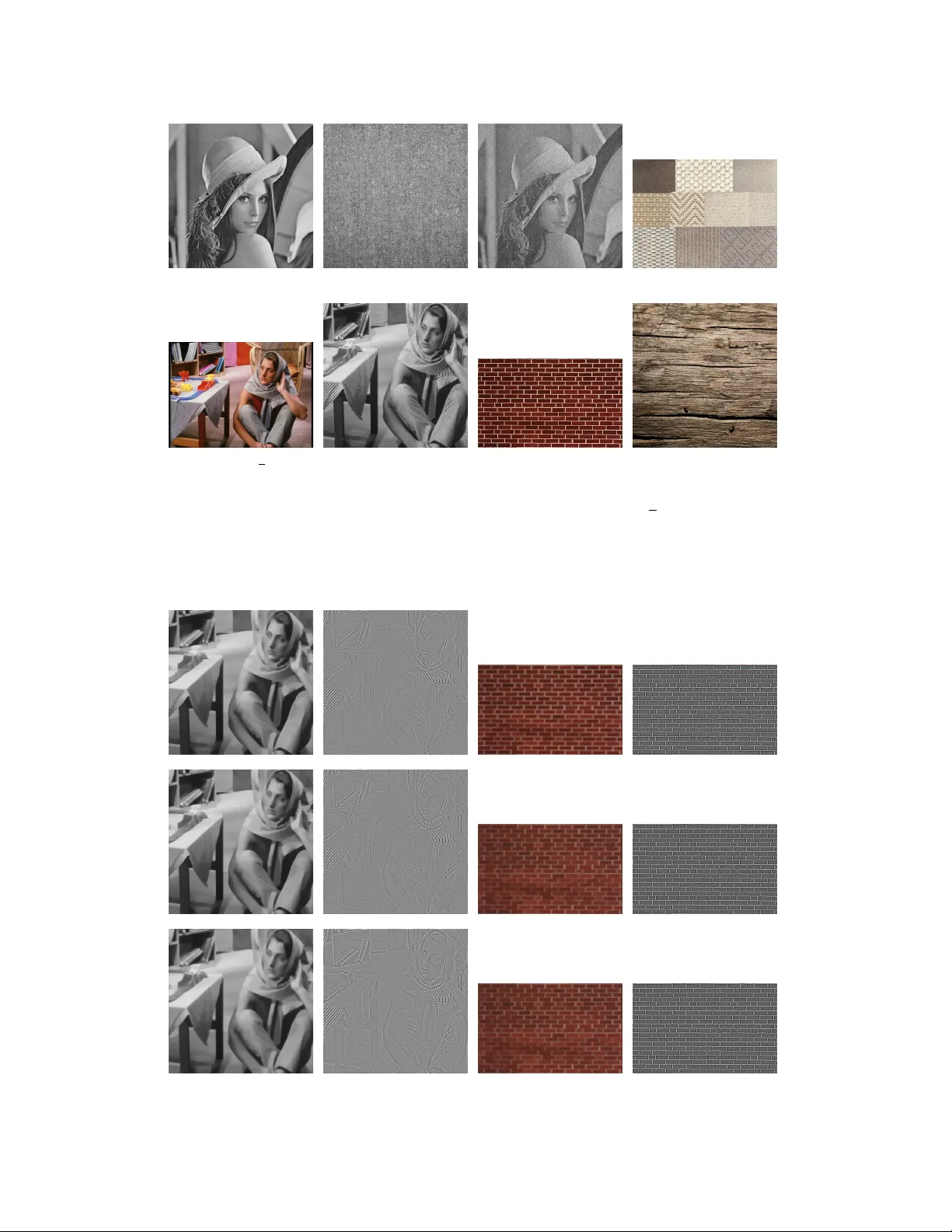

1 A Dual Alternating Direction Method of Multipliers for Image Decomposition and Restoration Qingsong W ang, Chengjing W ang, Peipei T ang, and Dunbiao Niu Abstract —In this paper , we de velop a dual alternating dir ection method of multipliers (ADMM) for an image decomposition model. In this model, an image is divided into two meaningful components, i.e., a cartoon part and a texture part. The opti- mization algorithm that we develop not only gives the cartoon part and the texture part of an image b ut also gives the restor ed image (cartoon part + texture part). W e also present the global con ver gence and the local linear con ver gence rate for the algorithm under some mild conditions. Numerical experiments demonstrate the efficiency and robustness of the dual ADMM (dADMM). Furthermor e, we can obtain relativ ely higher signal- to-noise ratio (SNR) comparing to other algorithms. It shows that the choice of the algorithm is also important even for the same model. Index T erms —Alter nating dir ection method of multipliers, Cartoon and texture, Deblurring, Image decomposition, Inpaint- ing, T otal variation. I . I N T RO D U C T I O N I N computer science, digital image processing is the use of computer algorithms to perform image processing on images. As we know , image decomposition and restoration are the basic methods in image and computer vision science. Recently , they hav e been playing more and more important roles in digit image processing, machine learning, pattern recognition, and biomedical engineering, etc. As for image decomposition, an image can be divided into two parts, i.e., a cartoon part and a texture part. As for image restoration, it includes many types, such as image denoising, image inpainting, image deblurring, and so on. The aim of image decomposition and restoration is to enhance the quality of an image that is degraded by some reason. The main tasks of this paper are extracting the cartoon and texture components from a degraded image (a blurry and/or missing pixels image) and getting a restored image from the decomposed results. Giv en an intensity function f which is an image, the image decomposition is to deriv e f = u + v , The work of Peipei T ang was supported by the Natural Science Foun- dation of Zhejiang Province of China under Grant L Y19A010028 and the Science & T echnology Dev elopment Project of Hangzhou, China under Grant 20170533B22. Qingsong W ang, School of Mathematics, Southwest Jiaotong University , No.999, Xian Road, W est Park, High-tech Zone, Chengdu 611756, China. ( nothing2wang@hotmail.com ). Chengjing W ang, School of Mathematics, Southwest Jiaotong Univ ersity , No.999, Xian Road, W est Park, High-tech Zone, Chengdu 611756, China. ( renascencewang@hotmail.com ). Peipei T ang, School of Computing Science, Zhejiang University City College, Hangzhou 310015, China. ( tangpp@zucc.edu.cn ). Dunbiao Niu, College of Mathematics, Sichuan Uni versity , No.24 South Section 1, Y ihuan Road, Chengdu 610065, China. ( dunbiaoniu_sc@163.com ). where u and v represent the cartoon and texture component of the image f , respectiv ely . In general, the cartoon part u is an image formed by homogeneous regions and with sharp boundaries, the texture part v is noise or small scale repeated details. Fig. 1 illustrates a image example (the W eave image in Fig. 2) of cartoon and texture where the distinctions of between the two components can be visually discernable. (a) W eave (b) cartoon part (c) texture part Fig. 1: Image decomposition on W eave image ( c ) in Fig. 2. According to some literatures, the first model about image decomposition is the classic image denoising model proposed in [1], i.e., the well-known Rudin, Osher , and Fatemi (R OF) total variation (TV) minimization model for image denoising. The model is gi ven by inf u Z Ω |∇ u | + λ Z Ω | f − u | 2 , (1) where the set Ω is a domain of R n , R Ω |∇ u | denotes the TV of u with the assumption that u is of bounded variation: u ∈ B V (Ω) . R Ω | f − u | 2 is a fidelity term and λ > 0 is a weight parameter . Although the problem (1) is con ve x, it is still difficult to solve the functional programming problem. Then we write out the discrete version of the problem (1) as follows min u ∈ R n ,v ∈ R n τ k|∇ u |k 1 + k v k 2 2 s.t. u + v = b, (2) where b ∈ R n is a given image, ∇ := ∇ 1 ∇ 2 : R n → R n × R n denotes the discrete version of the first-order deriv ativ e operator (see [15] for more details), and τ > 0 is a control parameter for the decomposition of b into the cartoon part u and the texture part v , k|∇ u |k 1 is the TV semi-norm for inducing the cartoon part u ∈ R n , which is defined as k|∇ u |k 1 := n X i =1 ( ∇ 1 u ) 2 i + ( ∇ 2 u ) 2 i 1 / 2 . It has been pointed out in the seminal book [10] that the l 1 norm or l 2 norm does not characterize the oscillatory 2 components. This means that these components do not have small norms (see e.g. [16]). In [10], a new model was proposed by replacing k · k 2 2 of the R OF model (2) with a weaker norm. The v alidity of this model has been verified by many numerical experiments in some papers, e.g., [4], [8], and [9]. Meyer’ s model can be equi valently written as min u ∈ R n ,v ∈ R n τ k|∇ u |k 1 + k v k − 1 , ∞ s.t. u + v = b, (3) where k v k − 1 , ∞ is the neg ativ e semi-norm in Sobolev space for inducing the texture v . For any s ∈ [1 , + ∞ ] , || v || − 1 ,s := inf {k| g |k s | v = div g , g ∈ R n × R n } , (4) where k| g |k s = ( P n i =1 | g | s i ) 1 s , and div := −∇ T : R n × R n → R n is the diver gence operator . For the details about the operator di v, one may refer to [15]. It is very difficult to get a numerical solution because the Euler-Lagrange equation of (3) can not be written out directly . There are many image decomposition models which hav e overcome this dif ficulty . For example, motiv ated by the approximation to the l ∞ norm of | g | i = p ( g 1 ) 2 i + ( g 2 ) 2 i , g = ( g 1 , g 2 ) ∈ R n × R n , and k| g |k ∞ = k q g 2 1 + g 2 2 k ∞ = lim s →∞ k q g 2 1 + g 2 2 k s , the follo wing con vex minimization problem is used in [4]. min u ∈ R n ,g ∈ R n × R n τ k|∇ u |k 1 + 1 2 k u + div g − b k 2 2 + µ k| g |k s , (5) where u is the cartoon part of an image, v = div g is the texture part of an image, s ≥ 1 , and τ > 0 , µ > 0 are two control parameters. The first and third terms are penalty terms, and the second term satisfies b ≈ u + div g . This is the first practical method to overcome the difficulty . In [4], the gradient method is employed to solve the Euler-Lagrange equation of (5). It has also been demonstrated that the solution of (5) can be used for texture discrimination and segmentation. In [17], Osher et al. proposed an alternative model by replacing k v k − 1 , ∞ in the problem (3) with k v k − 1 , 2 . Then the model is min u ∈ R n ,v ∈ R n τ k|∇ u |k 1 + k v k − 1 , 2 s.t. u + v = b. In [3], Aujol et al. studied a constrained optimization model for image image decomposition, that is, min u ∈ R n ,v ∈ R n k|∇ u |k 1 + 1 2 σ k b − u − v k 2 2 s.t. k v k − 1 , ∞ ≤ µ, where σ and µ are two positiv e parameters to decompose the image b into the cartoon part u and texture part v . Y in et al. [19] studied a second-order cone programming problem, and the problem (3) is a special case of this problem. An ov ervie w of image and signal decomposition by using sparsity and morphological di versity was giv en in [18]. As for image restoration, it is often formulated as an inv erse problem. For a degraded image b , we recover an unknown clean image x from b such that b ≈ H x, where the degradation operation H : R n → R n is a linear operator . H x may be contaminated by some noises, such as the additiv e noise (for example, Gaussian noise, or impulse noise, i.e., b = H x + ε ), Poisson noise and other multiplicative noise. H can be an identity operator (for denoising), a con volution operator (for deblurring), a projection operator (for inpainting) or the mixing of these operators. When we decompose an image x into two parts, i.e., the cartoon part u and the texture part v , it satisfies b ≈ H ( u + v ) = H ( u + div g ) . In this paper, we study an image decomposition and recon- struction model for images with degradations. The optimiza- tion problem to be considered can be explicitly written as min u ∈ R n ,g ∈ R n × R n τ k|∇ u |k 1 + 1 2 k H ( u + div g ) − b k 2 2 + µ k| g |k s , (6) where τ > 0 and µ > 0 are the trade-off parameters between the cartoon part u and the texture part div g , respecti vely; and s ≥ 1 . Actually only the cases of s = 1 , s = 2 and s = ∞ are considered in the numerical experiments (see Section III for details). There are some algorithms to solve problem (6). The alter- nating direction method of multipliers (ADMM) [13] and the ADMM with Gaussian back substitution in [2] are often used methods. Most of the ADMM type methods are applied to the primal problem (pADMM). The proximal gradient (forward- backward splitting) method in [12] is a special gradient descent method, which is mainly used to solve the optimization problem of non-dif ferentiable objective function. Furthermore, the alternating minimization (AM) based method [14] and the partial differential equation (PDE) based method [4] hav e also been used to solve this kind of problem. Among these algorithms, by and large, the pADMM is relativ ely most effecti ve and robust. Howe ver , for some problems the pADMM is less efficient which also affect to some extent the quality of the image decomposition and restoration. The rest of this paper is organized as follows. In Section II, we develop a dual ADMM (dADMM) for a more general model problem. Next, some details about the algorithm are explained. W e also present the global conv ergence and the local linear conv ergence rate of the algorithm, respectiv ely . Some numerical e xperiments for image decomposition and restoration are presented in Section III. Finally , we giv e the conclusion in Section IV . A. Preliminaries In this subsection, we summarize some notations of con ve x optimization which will be used in the subsequent analysis. 3 For a giv en proper conv ex function f : R n → ( −∞ , + ∞ ] , the proximal mapping Prox σ f ( · ) of f with positive parameter σ is defined by Prox σ f ( x ) := arg min u ∈ R n { f ( u ) + 1 2 σ k u − x k 2 2 } , ∀ x ∈ dom f , where dom f is defined as dom f := { x ∈ R n | f ( x ) < + ∞} . Next, the Moreau identity is giv en by Prox σ f ( x ) + σ Prox f ∗ /σ ( x/σ ) = x. For a giv en function f : R n → [ −∞ , + ∞ ] , the conjugate of f is defined as f ∗ ( y ) = sup x ∈ dom f {h y , x i − f ( x ) } . The function f ∗ is closed and con vex (even when f is not). For more details, one may refer to [20]. I I . T H E A L G O R I T H M In this section, we first introduce a general optimization problem. Then the dADMM is applied to solve the problem. Based on the conv ergence result of the semi-proximal ADMM which was summerized in [5] and the linear con vergence rate result which was established in [6], we will establish the global conv ergence and the local linear conv ergence rate of our algorithm. Now we consider a general optimization problem as the following form min x ∈X ,y ∈Y 1 2 k Ax + B y − b k 2 2 + p ( x ) + q ( y ) , (7) where X , Y and Z are three finite-dimensional real Euclidean spaces each equipped with an inner product h· , ·i and its induced norm k · k , b ∈ Z is a giv en variable, A : X → Z and B : Y → Z are two linear operators, p ( x ) and q ( y ) are two closed proper con vex functions (which may be nonsmooth). It is quite clear that the problem (6) is a special case of the problem (7) with the variables x ∈ R n , y ∈ R n × R n , b ∈ R n , the linear operators A = H and B = H · div, the functions p ( · ) = τ k|∇ ( · ) |k 1 and q ( · ) = µ k| · |k s . By introducing a slack variable z , the optimization problem (7) is equi valent to min x ∈X ,y ∈Y ,z ∈Z 1 2 k z k 2 2 + p ( x ) + q ( y ) s.t. Ax + B y − b = z . (P) The Lagrangian function associated with problem ( P ) is given by l ( z , x, y ; u ) = 1 2 k z k 2 2 + p ( x ) + q ( y ) + h u, Ax + B y − z − b i . Then max u ∈Z inf z ,x,y l ( z , x, y ; u ) = max u ∈Z { inf z { 1 2 k z k 2 2 − h u, z i} + inf x { p ( x ) + h A ∗ u, x i} + inf y { q ( y ) + h B ∗ u, y i} − h u, b i} = max u ∈Z {− 1 2 k u k 2 2 − sup x {h− A ∗ u, x i − p ( x ) } − sup y {h− B ∗ u, y i − q ( y ) } − h u, b i} = max u ∈Z {− 1 2 k u k 2 2 − p ∗ ( − A ∗ u ) − q ∗ ( − B ∗ u ) − h u, b i} . Thus the dual of problem ( P ) can be explicitly written as min u ∈Z 1 2 k u k 2 2 + p ∗ ( − A ∗ u ) + q ∗ ( − B ∗ u ) + h u, b i . (8) By introducing two slack variables v and w , (8) can be equiv alently rewritten as min u ∈Z ,v ∈X ,w ∈Y 1 2 k u k 2 2 + h u, b i + p ∗ ( v ) + q ∗ ( w ) s.t. − A ∗ u − v = 0 , − B ∗ u − w = 0 . (D) Then the augmented Lagrangian function associated with problem ( D ) is giv en by L σ ( u, v , w ; x, y ) = 1 2 k u k 2 2 + h u, b i + p ∗ ( v ) + q ∗ ( w ) + h x, − A ∗ u − v i + h y , − B ∗ u − w i + σ 2 k A ∗ u + v k 2 2 + σ 2 k B ∗ u + w k 2 2 . Now we describe the algorithmic frame work of the dADMM as follo ws. The dADMM for problem ( D ) : Giv en ε > 0 . Let τ ∈ (0 , (1 + √ 5) / 2) be a scalar parameter , σ > 0 be a given arbitrary parameter, and choose u 0 , v 0 , w 0 , x 0 , y 0 . For k = 0 , 1 , 2 , . . . , perform the following steps in each iteration: Step 1. Compute the u, v , w : ( I Z + σ AA ∗ + σ B B ∗ ) u k +1 = Ax k + B y k − b − σ Av k − σ B w k , v k +1 w k +1 = " Prox p ∗ /σ ( x k σ − A ∗ u k +1 ) Prox q ∗ /σ ( y k σ − B ∗ u k +1 ) # , Step 2. Update x, y : x k +1 = x k + τ σ ( − A ∗ u k +1 − v k +1 ) , y k +1 = y k + τ σ ( − B ∗ u k +1 − w k +1 ) , Step 3. If a termination criterion is not satisfied, go to Step 1 . Then we describe the details of how to solve the u - subproblem and ( v , w ) -subproblem of the algorithm. 4 • u - subproblem: ¯ u = arg min u ∈Z 1 2 k u k 2 2 + h u, b i − h x, A ∗ u + v i − h y , B ∗ u + w i + σ 2 k A ∗ u + v k 2 2 + σ 2 k B ∗ u + w k 2 2 , which is equi valent to solve the following linear system ( I Z + σ AA ∗ + σ B B ∗ ) u = Ax + B y − b − σ Av − σ B w. (9) In (9), I Z denotes the identity matrix in the space Z . There are similar notations behind and we will not give more explanations. • ( v , w ) - subproblem: ¯ v = arg min v ∈X { p ∗ ( v ) − h x, A ∗ u + v i + σ 2 k A ∗ u + v k 2 2 } = arg min v ∈X { p ∗ ( v ) + σ 2 k v − ( x σ − A ∗ u ) k 2 2 } = Prox p ∗ /σ ( x σ − A ∗ u ) , ¯ w = arg min w ∈Y { q ∗ ( w ) − h y , B ∗ u + w i + σ 2 k B ∗ u + w k 2 2 } = arg min w ∈Y { q ∗ ( w ) + σ 2 k w − ( y σ − B ∗ u ) k 2 2 } = Prox q ∗ /σ ( y σ − B ∗ u ) . Based on the Moreau identity , we have Prox p ∗ /σ ( x σ − A ∗ u ) = x − σ A ∗ u − Prox σ p ( x − σ A ∗ u ) σ , Prox q ∗ /σ ( y σ − B ∗ u ) = y − σ B ∗ u − Prox σ q ( y − σ B ∗ u ) σ . Next, we adapt the results developed in [5], [6] and [20] to establish the global conv ergence in Theorem II.1 and the local linear con vergence rate of the dADMM in Theorem II.2, respectiv ely . Theorem II.1. W e consider the situation of S = 0 and T = 0 in ([5], Theorem B.1). Assume that the solution set of ( D ) is nonempty and that the constraint qualification holds. Let the sequence { ( u k , v k , w k , x k , y k ) } be generated fr om the dADMM. If τ ∈ (0 , (1 + √ 5) / 2) , then the sequence { ( u k , v k , w k ) } con ver ges to an optimal solution to the pr oblem ( D ) and the sequence { ( x k , y k ) } con verg es to an optimal solution to the pr oblem ( P ) . Pr oof. W e can prov e the conclusion of this theorem based on Theorem B.1 in [5] directly . Thus, we omit the details here. Before going to the statement of the following theorem, we denote m := ( u, v , w , x, y ) with u ∈ Z , v , x ∈ X and w , y ∈ Y , and M := Z × X × Y × X × Y . Besides, define the KKT (Karush-Kuhn-T ucker) mapping [22] R : M → M as R ( m ) := u + b − Ax − B y v − Prox p ∗ ( v + x ) w − Prox q ∗ ( w + y ) − A ∗ u − v − B ∗ u − w , ∀ m ∈ M . If there exists ( ¯ u, ¯ v , ¯ w , ¯ x, ¯ y ) which satisfies R ( m ) = 0 , then ( ¯ u, ¯ v , ¯ w, ¯ x, ¯ y ) is called a KKT point for the problem ( D ) . Define a self-adjoint linear operator P as below P := Diag ( I Z , σ I X ×Y , ( τ σ ) − 1 I X ×Y ) + s τ σ ΓΓ ∗ , where s τ := 5 − τ − 3 min { τ , τ − 1 } 4 , ∀ τ ∈ (0 , ∞ ) , and Diag ( · , · , · ) is a 3 × 3 block diagonal linear operator . Note that 1 / 4 ≤ s τ ≤ 5 / 4 , ∀ τ ∈ (0 , (1 + √ 5) / 2) , and let Γ : X × Y → M be a linear operator such that its adjoint Γ ∗ satisfies Γ ∗ ( m ) = − A ∗ − B ∗ × u + − I X 0 0 − I Y × v w = − A ∗ u − v − B ∗ u − w , ∀ m ∈ M . Then we denote k m k P := p h m, P m i and dist P ( m, Ω) := inf ω ∈ Ω k m − ω k P for any m ∈ M and any set Ω ⊆ M . Theorem II.2. W e consider the situation of S = 0 and T = 0 in ([6], Cor ollary 1). Let τ ∈ (0 , (1 + √ 5) / 2) . Assume that the solution set ¯ Ω = { ¯ m := ( ¯ u, ¯ v , ¯ w , ¯ x, ¯ y ) } is nonempty . Suppose that the mapping R : M → M is piecewise polyhedral. Then ther e exist a constant ˆ η > 0 such that the infinite sequence { ( u k , v k , w k , x k , y k ) } generated fr om the dADMM satisfies that for all k ≥ 1 , dist ( m k , ¯ Ω) ≤ ˆ η k R ( m k ) k 2 , dist P ( m k +1 , ¯ Ω) ≤ ˆ µ dist P ( m k , ¯ Ω) , wher e 0 < ˆ µ < 1 is a constant parameter . Pr oof. By using the Corollary 1 in [6], the desired conclusions of this theorem can be obtained readily . So we omit the details here. I I I . N U M E R I C A L E X P E R I M E N T S In this section, we perform the numerical experiments for the dADMM to solve the problem ( D ) with dif ferent choices of A . More specifically , we test four cases in this section: (1) A = I , where I is a identity matrix; (2) A = S , where S is a blurring matrix; (3) A = K , where K is a binary matrix which indicates the missing pixels by zero entries; (4) A = K S , where K S is the composition of a binary matrix and a blurring matrix. All of the experiments were implemented in M A T L A B R2018a x64 on a PC with an Intel i5-8600K 3.6 GHz processor and 8GB memory . In order to measure the quality of the image decomposition, it is assumed that the cartoon part and the texture part of an image are uncorrelated according to the paper [3]. The correlation between the cartoon part u and the texture part v is computed by Corr ( u, v ) := cov ( u, v ) p var ( u ) v ar ( v ) , 5 where var ( · ) and cov ( · , · ) refer to the sample variance and cov ariance of given data, respectively . W e use the PSNR value which is defined by PSNR = 10 log 10 I 2 max MSE , to measure the performance of image restoration, where I max is the maximum intensity of the original image, and MSE is defined by MSE = 1 mn m X i =1 n X j =1 [ I ( i, j ) − N ( i, j )] 2 , with I being a noise-free m × n monochrome image and N being the noisy approximation image of I. The images used in this section are displayed in Fig. 2. Note that the Mixed image ( c ) in Fig. 2 is deriv ed by combining ( a ) and ( b ) with the ratio of 6:4. W e denote R P = k u + b − Ax − B y k 2 1 + k A k 2 , R D = k A T u + v k 2 + k B T u + w k 2 1 + k A k 2 , R C = k v − Prox p ∗ ( v + x ) k 2 + k w − Prox q ∗ ( w + y ) k 2 1 + k A k 2 . The numerical experiments are terminated if the stopping criterion T ol = max { R P , R D , R C } ≤ 10 − 3 is satisfied or the maximal iterations reaches 70. This stopping criterion is also used for the other two algorithms (ADME and ADMGB) in [2]. For all the experiments, the initial iteration point is set to be zero. Remark 1. Some elements of the textur e part v ∈ R n × m ar e less than zer o in the experiments. W e normalize the matrix v in order to show the textur e part of the image clearly . The step of normalization is defined by v ij = v ij − v min v max − v min , ∀ i = 1 , . . . , n, j = 1 , . . . , m. wher e v min = min 1 ≤ i ≤ n, 1 ≤ j ≤ m { v ij } and v max = max 1 ≤ i ≤ n, 1 ≤ j ≤ m { v ij } . A. Case 1: A = I W e consider the case of A = I in this subsection, i.e., a image will be decomposed into a clean and an additi ve noise images. For the images ( f ) and ( g ) in Fig. 2, we implement the ADME, ADMGB and dADMM in this case. For the ADME and ADMGB, we take the parameters as τ = 1 × 10 − 1 , µ = 1 × 10 − 3 and β 1 = β 2 = β 3 = 10 . For the dADMM, we set the parameters as τ = 1 × 10 − 1 , µ = 3 × 10 − 2 and σ = 0 . 8 . In Fig. 3, the decomposed results (the cartoon part and the texture part) of the images ( f ) and ( g ) with different values of s are displayed. W e can hardly see the dif ference with dif ferent values of s from Fig. 3 directly . In order to measure the quality of the image decomposition, the correlation between the cartoon and the texture is computed. The corresponding results are displayed in Fig. 4. The results in Fig. 4 show a tiny difference of different s , which coincides with the conclusion from [4]. These results show that the ef fectiveness of the optimization problem (5) is not sensiti ve to dif ferent values of s . So in the remaining experiments, one of s = 1 , s = 2 and s = ∞ is used to the numerical simulations for conv enience. Next, we compare the dADMM with the other two algo- rithms (the ADME and ADMGB in [2]) on image decompo- sition. For this comparison, we focus on the images ( c ) and ( g ) in Fig. 2. The decomposed results about the image ( c ) in Fig. 2 are showed in Fig. 5 and the v ariations of the correlation values of the images ( c ) and ( g ) are displayed in Fig. 6. Now we add some additiv e noise to the image ( e ) in Fig. 2, which is b = ( u + v ) + ε , where the additiv e noise ε is Gaussian noise. The noised image b is decomposed by the model (5) with three algorithms. In this experiment, the Gaussian white noise ε is generated by M A T L A B function imnoise(Im,‘gaussian’,0,0.1) . The results are dis- played in Fig. 7 and Fig. 8, respectiv ely . From Fig. 7, we note that we can obtain a better cartoon part and a more clear texture part by the dADMM than the other two algorithms (ADME and ADMGB). W e plot the variations of Corr ( u, v ) with respect to the iterations in Fig. 8. It shows that dADMM can reach a relativ ely lower correlation value with fewer iterations than the other two algorithms. W e can see that the dADMM has a better performance than the other two algorithms (i.e., ADME and ADMGB in [2]) in the experiments in case of A = I . B. Case 2: A = S In this case, S is a blurring matrix, i.e., A in (7) is a con volution operator . W e test both the Out-of-focus blur and the Gaussian blur in this subsection with the images ( a ) and ( d ) in Fig. 2 and compare the performances of the algorithms (the ADME, ADMGB and dADMM). T o implement the ADME and ADMGB, we take the parameters as τ = 5 × 10 − 5 , µ = 1 × 10 − 5 and β 1 = β 2 = β 3 = 1 × 10 − 2 . T o implement the dADMM, we set the parameters as τ = 8 × 10 − 6 , µ = 4 × 10 − 4 and σ = 2 × 10 2 . The numerical results of the ADME, ADMGB and dADMM on the images ( a ) and ( d ) with different blur kernels are showed in T able I. In the table, “Gaus- sian(40,40)” means the blur kernel is generated by M A T L A B function fspecial(‘gaussian’,40,40) , and “Out-of- focus(40)” means the Out-of-focus blur kernel with a radius of 40 giv en by M A T L A B function fspecial(’disk’,40) . In T able I we report the detailed numerical results for the ADME, ADMGB and dADMM in the image decomposition and reconstruction problems. One can observe from T able I that the dADMM takes less time and less iterations to get a higher PSNR value of the restored image than the ADME and ADMGB for most of the tested examples. And we display the decomposed results of the cartoon parts, the texture parts and the restored images of W eave image (( d ) in Fig. 2) in the case of “Out-of-focus(40)” in T able I by two algorithms (ADMGB 6 (a) Lena (b) W ool (c) Mixed (d) W eave (e) Barbara RGB (f) Barbara (g) Brick (h) W ood Fig. 2: T esting images: ( a ) 512 × 512 Lena image, ( b ) 512 × 512 W ool image, ( c ) combined 512 × 512 Lena and W ool image (denoted Mixed image), ( d ) 768 × 1024 × 3 W eave image, ( e ) 576 × 787 × 3 Barbara RGB image, ( f ) 512 × 512 a part of Barbara image, ( g ) 393 × 635 × 3 Brick image, ( h ) 1024 × 1024 × 3 W ood image. Fig. 3: Image decomposition on clean images (( f ) and ( g ) in Fig. 2, respectiv ely) with different values of s . From left to right: the cartoon part, the texture part of ( f ) and ( g ), respectively . The top row: s = 1 . The center ro w: s = 2 . The bottom ro w: s = ∞ . 7 T ABLE I: Image decomposition on the images with blurry: “Iter” – the number of iterations; “T ol” : – tolerance for the stopping criterion; “T ime” – computing time (in seconds); “PSNR 0 ” (“PSNR”) – the PSNR value between the blurred (restored) image and the original image, respecti vely . And a=“ ADME”; b=“ ADMGB”; c= “dADMM”. Image Blur PSNR 0 Iter (a | b | c) T ol (a | b | c) Time (a | b | c) PSNR (a | b | c) Lena Gaussian(20,20) 22.25 70 | 70 | 23 3.0e-1 | 3.0e-1 | 9.8e-4 5.19 | 5.69 | 1.39 30.41 | 30.42 | 31.38 Gaussian(30,30) 20.61 70 | 70 | 21 2.1e-1 | 2.1e-1 | 8.6e-4 5.20 | 5.59 | 1.25 28.23 | 28.25 | 28.87 Out-of-focus(20) 19.95 70 | 70 | 19 3.5e-3 | 1.0e-2 | 9.5e-4 5.48 | 5.84 | 1.12 29.80 | 29.82 | 30.73 Out-of-focus(30) 18.48 70 | 70 | 16 3.4e-3 | 2.7e-2 | 9.2e-4 5.20 | 5.73 | 0.97 27.76 | 27.78 | 28.51 W eave Gaussian(40,40) 19.60 70 | 70 | 49 2.2e-1 | 2.2e-1 | 9.9e-4 77.04 | 86.54 | 66.73 26.40 | 26.42 | 27.21 Gaussian(50,50) 19.44 70 | 70 | 47 1.9e-1 | 1.9e-1 | 9.8e-4 76.85 | 86.07 | 64.16 24.95 | 24.96 | 26.68 Out-of-focus(40) 19.30 70 | 70 | 39 1.7e-2 | 4.3e-2 | 9.9e-4 75.86 | 86.10 | 49.23 26.85 | 26.87 | 27.05 Out-of-focus(55) 19.10 70 | 70 | 39 1.3e-2 | 3.8e-2 | 9.9e-4 76.89 | 61.87 | 49.15 24.78 | 24.80 | 26.51 Fig. 4: V ariations of Corr ( u, v ) with respect to the iterations for the image ( f ) Barbara in Fig. 2 with s = 1 , s = 2 , and s = ∞ . and dADMM) in Fig. 9. The numerical performances indicate that the dADMM is a robust, high-performance algorithm for the optimization problem (6). C. Case 3: A = K This subsection is dev oted to the more difficult part of image decomposition and restoration on an image with missing pixels. For a binary matrix K ∈ R m × n and a clean image M ∈ R m × n . The de graded image D = K ◦ M , where the oper - ator ◦ denotes the Hadamard product, i.e., D i,j = K i,j × M i,j ( i = 1 , . . . , m ; j = 1 , . . . , n ). The images ( f ) and ( h ) in Fig. 2 are used in this subsection. And K is set to be a 512 × 512 matrix and a 1024 × 1024 matrix for the image ( f ) and ( h ), respectiv ely . T ABLE II: Image decomposition and restoration on the images with missing pixels: “Iter” – the number of iterations; “T ol” – tolerance for the stopping criterion; “Time” – computing time (in seconds); “PSNR 0 ” (“PSNR”) – the PSNR value between the painted (restored) image and the original image, respectiv ely . And a=“ ADMGB”; b= “dADMM”. Image PSNR 0 Iter (a | b) T ol (a | b) Time (a | b) PSNR (a | b) Barbara 14.13 70 | 39 7.5e-3 | 9.3e-4 4.95 | 3.23 29.34 | 30.71 W ood 14.81 70 | 39 2.1e-2 | 9.0e-4 97.63 | 79.16 24.24 | 25.25 Fig. 5: Image decomposition on the Mixed image ( c ) in Fig. 2 with A = I . The first column: the cartoon part. The second column: the texture part. From top to bottom are the decomposed results by the ADME, ADMGB, and dADMM. W e compare the performances of the ADMGB and dADMM in this case. The parameters of the ADMGB are set as τ = 1 × 10 − 2 , µ = 5 × 10 − 3 and β 1 = β 2 = β 3 = 5 × 10 − 2 . T o implement the dADMM, we take τ = 4 × 10 − 3 , µ = 1 × 10 − 3 , and σ = 3 × 10 3 . Finally , the PSNR v alues with respect to the iterations for the images (( f ) and ( h ) in Fig. 2) with missing pixels are showed in Fig. 10. W e display the decomposed images and the restored images of two algorithms (ADMGB and dADMM) for the images (( f ) and ( h ) in Fig. 2) in Fig. 11. From Fig. 10 and T able II we can see that the PSNR value 8 Fig. 6: V ariations of Corr ( u, v ) with respect to the iterations for Mixed image ( c ) and Brick image ( g ) in Fig. 2. Fig. 7: Image decomposition on the noised image ( e ) in Fig. 2 with A = I . From top to bottom are the noised image, cartoon parts and texture parts. From left to right are the decompositions by the ADME, ADMGB and dADMM. Fig. 8: V ariations of Corr ( u, v ) with respect to the iterations for the ADME, ADMGB and dADMM on the image ( e ) in Fig. 2 with Gaussian noise. Fig. 9: Image decomposition and restoration on blurred image with “Out-of-focus(40)” about image ( d ) in Fig. 2. From top to bottom are the blurred image, cartoons, textures and restored images. From left to right are the results obtained by the ADMGB and dADMM, respecti vely . Fig. 10: V ariations of the PSNR values with respect to the iterations for the ADMGB and dADMM on the images ( f ) and ( h ) in Fig. 2 with missing pixels. 9 Fig. 11: Image decomposition and restoration on images with missing pixels. From top row to bottom ro w are degraded images, cartoon parts, texture parts and restored images. 512 × 512 Barbara image with missing pixels, decomposition and restoration image by the ADMGB and dADMM on first two column respecti vely . 1024 × 1024 × 3 W ood image with missing pixels, decomposition and restoration image by the ADMGB and dADMM on last two column respectively . of the reconstructed image generated by the dADMM is higher than that generated by the ADMGB. Moreov er, we can get a better PSNR v alue with less time and less iterations by the dADMM. D. Case 4: A = K S In this subsection, we solve the problem (7) with A = K S , where S is a blurring matrix, and K is a binary matrix. That is, we consider decomposing images with both blurry and missing pixels. The images ( a ) and ( h ) in Fig. 2 are used in this part. The blurring matrices S (the Out-of-focus blur and the Gaussian blur) in T able I are used as blurring matrix, and K is set to be a 512 × 512 matrix and a 1024 × 1024 matrix for image ( f ) and ( h ), respectiv ely . W e implement two algorithms (the ADMGB and dADMM) in this part. For the ADMGB, we take τ = 5 × 10 − 5 , µ = 1 × 10 − 5 and β 1 = β 2 = β 3 = 1 × 10 − 2 . And for the dADMM, we take τ = 5 × 10 − 3 , µ = 3 × 10 − 3 and σ = 3 × 10 3 . All results in this case are displayed in T able III and Fig. 12. And the result images (cartoon parts, texture parts and restored images) for W ood image with “Out-of-focus(20)” plus missing pixels are showed in Fig. 12. W e report in T able III the detailed numerical results for the ADMGB and dADMM on the image decomposition and restoration of the images with blurry and missing pix els. It can be observed from T able III that the PSNR 10 T ABLE III: Image decomposition and restoration of the images with blurry and missing pixels: “Iter” – the number of iterations; “T ol” – tolerance for the stopping criterion; “T ime” – computing time (in seconds); “PSNR 0 ” (“PSNR”) – the PSNR value between the blurred (restored) image and the original image, respecti vely . And a=“ ADMGB”; b= “dADMM”. Image Blur + Missing pixels PSNR 0 Iter (a | b) T ol (a | b) T ime (a | b) PSNR (a | b) Lena Gaussian(15,15) + Missing pixels 11.54 70 | 16 4.9e-2 | 7.5e-4 16.20 | 2.77 24.88 | 26.44 Gaussian(20,20) + Missing pixels 11.47 70 | 15 5.6e-2 | 8.2e-4 16.22 | 2.62 25.48 | 26.20 Out-of-focus(10) + Missing pixels 12.98 70 | 15 5.3e-2 | 7.8e-4 15.43 | 2.59 25.76 | 26.76 Out-of-focus(15) + Missing pixels 11.38 70 | 15 5.8e-2 | 7.2e-4 14.85 | 2.56 24.05 | 25.67 W ood Gaussian(20,20) + Missing pixels 12.70 70 | 15 8.6e-2 | 9.1e-4 423.97 | 101.35 18.54 | 20.93 Gaussian(30,30) + Missing pixels 12.48 70 | 15 8.5e-2 | 9.1e-4 441.78 | 100.35 17.75 | 19.66 Out-of-focus(15) + Missing pixels 12.58 70 | 15 6.8e-2 | 9.1e-4 395.44 | 100.55 17.48 | 19.23 Out-of-focus(20) + Missing pixels 12.43 70 | 15 7.2e-2 | 9.0e-4 395.85 | 99.96 17.04 | 18.35 Fig. 12: Image decomposition and restoration on the image with blurry and missing pixels ( W ood image in Fig. 2 with blurry (“Out-of-focus(20)”) and missing pixels). From top to bottom are the degraded image, cartoon parts, texture parts and restored images, respectiv ely . Left column: Decomposition by the ADMGB. Right column: Decomposition by the dADMM. values by the dADMM are higher than those by the ADMGB. Moreov er , the dADMM is obviously faster than the ADMGB. Now we take a further look at the variations of the PSNR values and the KKT residuals with respect to the iterations. Fig. 13 sho ws the variations of the PSNR values and the KKT residuals with respect to the iterations for the ADMGB and dADMM for ( h ) in Fig. 2 with blurry (“Out-of-focus(20)”) and missing pixels, respectiv ely . Firstly , for the ADMGB, the PSNR v alue can hardly impro ve after the first sev eral iterations, while for the dADMM, the PSNR value increase all the time though it increases very slow at the later stage. Secondly , the KKT residuals by the dADMM is obviously smaller than that by the ADMGB. Note that the reason lies in the fact that sev eral variables are introduced for the ADMGB which directly lead to a smaller iteration step size than the dADMM. I V . C O N C L U S I O N In this paper , we developed the dADMM to solve the image decomposition and restoration problem with blurry and/or missing pixels. The global con vergence and the local linear conv ergence rate of the algorithm were also giv en. The numerical simulation results also demonstrated that our proposed algorithm is rob ust and efficient, and can obtain relativ ely more higher SNRs for various image decomposition and restoration problems. Thus, it sho ws that ev en for the same model problem, the choice of the algorithm is also important. A P P E N D I C E S Note that to compute Prox σ p ( · ) is equi v alent to solve the following optimization problem ¯ x = arg min x σ k|∇ x |k 1 + 1 2 k x − y k 2 2 . (10) Therefore,we adopt the algorithm in [7] to solve problem (10). For more detailed information, one may see [7]. Furthermore, to compute Prox σ q ( · ) is equiv alent to solve the follo wing optimization problem ¯ x = arg min x σ k| x |k s + 1 2 k x − y k 2 2 , where σ > 0 . • If s = 1 , ¯ x = y − max { min { y , σ } , − σ } . 11 Fig. 13: From top to bottom are the variations of the PSNR values and KKT residuals with respect to the iterations for the ADMGB and dADMM for ( h ) in Fig. 2 with blurry (“Out-of- focus(20)”) and missing pixels, respectiv ely . • If s = 2 , ¯ x = y − min {k| y |k , σ } y k| y |k . • If s = ∞ , ¯ x = y − P Ω ( y ) , where P Ω ( · ) denotes the projection operator onto Ω = { y | k| y |k 1 ≤ σ } . A C K N O W L E D G E M E N T S W e would like to thank Professor W enxing Zhang at Uni ver- sity of Electronic Science and T echnology of China for many useful discussions and sharing the code for us. R E F E R E N C E S [1] L. Rudin, S. Osher , and E. Fatemi, “Nonlinear total variation based noise remov al algorithms, ” Phys. D., vol. 60, no. 1, pp. 259-268, 1992. [2] M.K. Ng, X.M. Y uan, and W .X. Zhang, “Coupled variational image decomposition and restoration model for blurred cartoon-plus-texture images with missing pixels, ” IEEE Image proc., V ol. 22, no. 6, pp. 2233- 2246, 2013. [3] J.-F . Aujol, G. Gilboa, T . Chan, and S. Osher, “Structure-texture image decomposition-modeling, algorithms, and parameter selection, ” Int. J. Comput. V is., vol. 67, no. 1, pp. 111-136, 2006. [4] L. V ese and S. Osher, “Modeling textures with total variation minimiza- tion and oscillating patterns in image processing, ” J. Sci. Comput., vol. 19, no. 1, pp. 553-572, 2003. [5] M. Fazel, T .K. Pong, D.F . Sun, and P . Tseng, “Hankel matrix rank minimization with applications to system identification and realization, ” SIAM J. Matrix Anal. Appl., vol. 34, no. 3, pp. 946-977, 2013. [6] D.R. Han, D.F . Sun, L.W . Zhang, “Linear rate conv ergence of the alternat- ing direction method of multipliers for conv ex composite programming, ” Math. Oper. Res., V ol 43, no. 2, pp. 622-637, 2017. [7] L. Condat, “ A direct algorithm for 1D total variation denoising, ” IEEE Signal Proc. Letters, vol. 20, no. 11, pp. 1054-1057, 2013. [8] J.-F . Aujol and A. Chambolle, “Dual norms and image decomposition models, ” Int. J. Comput. V is., vol. 63, no. 1, pp. 85-104, 2005. [9] P . Maure, J.-F . Aujol, and G. Peyr ´ e, “Locally parallel texture modeling, ” SIAM J. Imag. Sci., vol. 4, no. 1, pp. 413-447, 2011. [10] Y . Meyer , “Oscillating patterns in image processing and nonlinear ev olution equations, ” (Univ ersity Lecture Series), vol. 22, Providence, RI, USA: AMS, 2002. [11] B.S. He, M. T ao, and X.M. Y uan, “ Alternating direction method with Gaussian back substitution for separable con vex programming, ” SIAM J. Optim., vol. 22, no. 2, pp. 313-340, 2012. [12] J.F . Cai, R.H. Chan, and Z. Shen, “Simultaneous cartoon and texture inpainting, ” Inverse Prob . Imaging, vol. 4, no. 3, pp. 379-395, 2010. [13] R. Glowinski and A. Marrocco, “Sur l’approximation par ´ el ´ ements finis d’ordre un et la r ´ esolution par p ´ enalisation-dualit ´ e d’une classe de probl ˙ emes de Dirichlet non lin ´ eaires, ” Revue Fr . Autom. Inform. Rech. Opr ., Anal. Num ´ er ., vol. 2, pp. 41-76, 1975. [14] J.-F . Aujol, G. Aubert, L. Blanc-F ´ erau, and A. Chambolle, “Image decomposition into a bounded variation component and an oscillating component, ” J. Math. Imaging V is., vol. 22, no. 1, pp. 71-88, 2005. [15] R. Adams, Sobolev Spaces , Pure and Applied Mathematics. San Fran- cisco, CA, USA: Academic, 1975. [16] F . Andreu-V aillo, V . Caselles, and J.M. Maz ´ on, P arabolic quasilin- ear equations minimizing linear gr owth functionals . Cambridge, MA: Birkhauser , 2004. [17] S. Osher, A. Sole, and L. V ese, “Image decomposition and restoration using total variation minimization and the H − 1 norm, ” Multiscale Model. Simul., vol. 1, no. 3, pp. 349-370, 2003. [18] M. Fadili, J. Starck, J. Bobin, Y . Moudden, “Image decomposition and separation using sparse representations: an overview , ” Proc. IEEE, vol. 98, no. 6, pp. 983-994,2010. [19] W . Yin, D. Goldfarb, S. Osher, “ A comparison of three total variation based texture extraction models, ” J. V is. Commun. Image R., vol. 18, no. 3, pp. 240-252, 2007. [20] T . Rockafellar , Con vex Analysis . Princeton, NJ, USA: Princeton Univ er- sity Press, 1970. [21] J. Nocedal, and S.J. Wright, Numerical Optimization . second edition, New Y ork: Springer, 2006. [22] H.W . Kuhn, A.W . Tuck er , “Nonlinear programming, ” Proceedings of 2nd Berkeley Symposium. Berkeley: University of California Press., pp. 481-492, 1951. Qingsong W ang received the Bachelor’ s de- gree from Southwest Jiaotong University , Chengdu, China. He is now a Master student at School of Mathematics, Southwest Jiaotong University . His research interests include numerical optimization, image processing. Chengjing W ang receiv ed the B.S. and the Ph.D. degrees from Zhejiang University , Hangzhou, China. He is currently an Associate Professor at School of Mathematics, Southwest Jiaotong Univ ersity , Chengdu, China. His current research interests focus on the theories and algorithms of large-scale opti- mization and its applications in statistics learning. 12 Peipei T ang received the B.S. and the Ph.D. degrees from Zhejiang Uni versity , Hangzhou, China. She is currently an Associate Professor at School of Com- puter and Computing Science, Zhejiang University City College, Hangzhou, China. Her current research interests include numerical optimization, with its applications in data mining and statistics learning. Dunbiao Niu received the B.S. degree in statis- tics from Southwest Jiaotong University , Chengdu, China in 2016. He is currently pursuing the M.S. de- gree in probability theory and mathematical statistics at Sichuan University . His current research interests mainly include statistical learning and large-scale optimization.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment