MaskTerial: A Foundation Model for Automated 2D Material Flake Detection

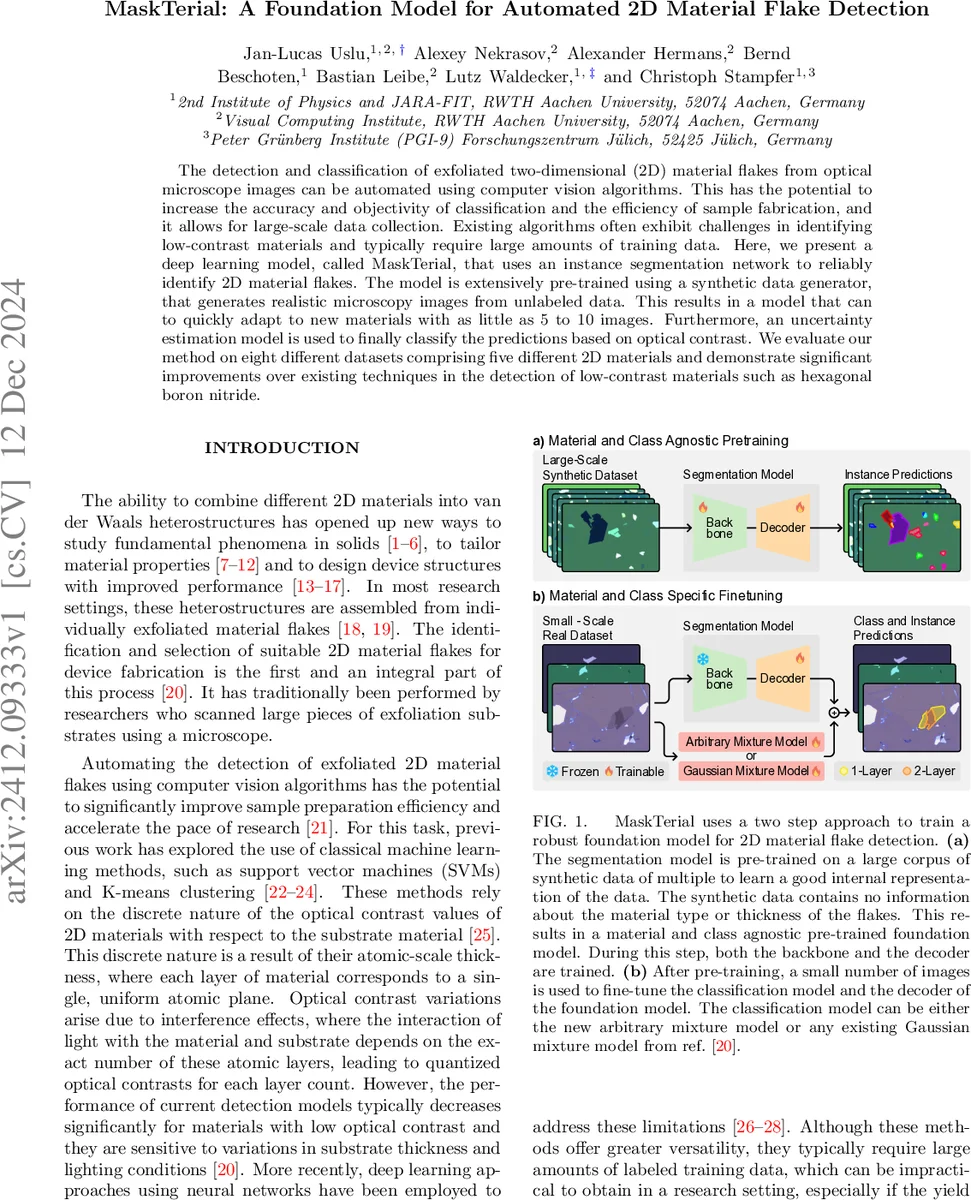

The detection and classification of exfoliated two-dimensional (2D) material flakes from optical microscope images can be automated using computer vision algorithms. This has the potential to increase the accuracy and objectivity of classification and the efficiency of sample fabrication, and it allows for large-scale data collection. Existing algorithms often struggle to identify low-contrast materials and typically require large amounts of training data. Here, we present a deep learning model, called MaskTerial, that uses an instance segmentation network to reliably identify 2D material flakes. The model is extensively pre-trained using a synthetic data generator that produces realistic microscopy images from unlabeled data. This results in a model that can quickly adapt to new materials with as few as 5 to 10 images. Furthermore, an uncertainty estimation model is used to classify the predictions based on their optical contrast. We evaluate our method on eight different datasets comprising five different 2D materials and demonstrate significant improvements over existing techniques in the detection of low-contrast materials such as hexagonal boron nitride.
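To make "classification based on optical contrast" concrete, here is a minimal Python sketch assuming the common per-channel definition C = (I_flake - I_background) / I_background. The function name `optical_contrast` and the choice to estimate the background as the median of all non-flake pixels are illustrative assumptions, not the authors' implementation.

```python
import numpy as np


def optical_contrast(image: np.ndarray, flake_mask: np.ndarray) -> np.ndarray:
    """Mean per-channel contrast (I_flake - I_bg) / I_bg of a single flake.

    image:      float array of shape (H, W, 3) with RGB intensities
    flake_mask: bool array of shape (H, W), True on flake pixels
    """
    # Assumption: the substrate background is the median of all pixels
    # outside the flake mask (robust to small debris and other flakes).
    background = np.median(image[~flake_mask], axis=0)
    flake_mean = image[flake_mask].mean(axis=0)
    return (flake_mean - background) / background
```

A flake that is 10% darker than the substrate in the red channel gets a red-channel contrast of -0.1; one such 3-vector per detected mask is the kind of input a contrast-based classifier works on.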

💡 Research Summary

MaskTerial introduces a two‑stage deep‑learning framework for automated detection and thickness classification of exfoliated two‑dimensional (2D) material flakes in optical microscope images. The authors address two major bottlenecks that have limited previous approaches: (i) poor performance on low‑contrast materials such as hexagonal boron nitride (hBN) and (ii) the need for large, manually annotated datasets. Their solution combines a foundation‑model style pre‑training on massive synthetic data with a physics‑informed uncertainty estimation head, enabling rapid adaptation to new materials with only a handful of real images.

Synthetic data generation.

A novel pipeline first mines flake shapes from ~100 k unlabeled microscope images of exfoliated graphite. After grayscale conversion, brightness thresholding, connected‑component extraction, and an L2‑classifier filter, about 35 k high‑quality flake silhouettes are collected. In the second phase, these silhouettes are randomly placed, rotated, scaled, and overlapped on a blank canvas to form a grayscale “layer‑count” mask. Using the transfer‑matrix method (TMM) together with material‑specific dispersion relations, substrate SiO₂ thickness, illumination spectrum, and camera response curves, the grayscale mask is rendered into realistic RGB images. Post‑processing adds simplex‑noise‑based tape residues, shadows, vignetting, and Gaussian sensor noise. This yields roughly 42 k synthetic images per material (graphene, CrI₃, hBN, TaS₂, MoSe₂, WS₂, WSe₂), for a total of ~300 k images with perfect ground‑truth masks, which are used for extensive pre‑training.
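The physics behind the rendering step can be illustrated with a minimal normal-incidence transfer-matrix sketch for a graphene flake on SiO₂/Si. The refractive indices are approximate, wavelength-independent placeholder values near 550 nm (assumptions). The generator described above instead uses full dispersion relations, an illumination spectrum, and camera response curves. The helpers `stack_reflectance` and `graphene_contrast` are hypothetical names.

```python
import numpy as np


def stack_reflectance(wavelength_nm, layers, n_substrate, n_ambient=1.0):
    """Normal-incidence reflectance of a thin-film stack (transfer-matrix method).

    layers:      list of (complex refractive index n + ik, thickness in nm), top to bottom
    n_substrate: complex index of the semi-infinite substrate (here Si)
    Convention exp(-i*omega*t): absorbing media have Im(n) > 0.
    """
    m = np.eye(2, dtype=complex)
    for n, d in layers:
        delta = 2 * np.pi * n * d / wavelength_nm  # (complex) phase thickness
        layer_matrix = np.array([
            [np.cos(delta), -1j * np.sin(delta) / n],
            [-1j * n * np.sin(delta), np.cos(delta)],
        ])
        m = m @ layer_matrix  # total matrix M = M_1 M_2 ... M_q
    b, c = m @ np.array([1.0, n_substrate])
    r = (n_ambient * b - c) / (n_ambient * b + c)
    return abs(r) ** 2


# Placeholder constants near 550 nm (assumed values, no dispersion).
N_GRAPHENE = 2.6 + 1.3j
N_SIO2 = 1.46 + 0j
N_SI = 4.08 + 0.03j
T_MONOLAYER = 0.335  # nm, graphite interlayer spacing


def graphene_contrast(layer_count, sio2_nm=90.0, wavelength_nm=550.0):
    """Contrast (R_flake - R_substrate) / R_substrate of an N-layer graphene flake."""
    r_sub = stack_reflectance(wavelength_nm, [(N_SIO2, sio2_nm)], N_SI)
    flake = [(N_GRAPHENE, layer_count * T_MONOLAYER), (N_SIO2, sio2_nm)]
    r_flake = stack_reflectance(wavelength_nm, flake, N_SI)
    return (r_flake - r_sub) / r_sub
```

Under these simplifications, evaluating `graphene_contrast` for each integer value of the grayscale layer-count mask yields a per-pixel contrast. Repeating this per color channel, with proper spectra, is the step that turns a synthetic mask into an image.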

Model architecture.

Stage 1 is an instance‑segmentation network based on Mask2Former. A ResNet‑50 backbone extracts multi‑scale features, which are upsampled by a pixel decoder and fed into a transformer decoder equipped with learnable query embeddings (inspired by DETR). The decoder produces a set of binary masks that localize all flakes in the image, without assigning any thickness class. Because the pre‑training data contain no material‑specific labels, the network learns material‑agnostic visual cues, making it a true “foundation model” for 2D‑flake detection.
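The way a Mask2Former-style decoder turns learnable queries into class-agnostic masks can be sketched in NumPy. This is a simplified, inference-only illustration with assumed shapes. Each query's mask embedding is dotted with the per-pixel embeddings from the pixel decoder, and a two-way softmax over {flake, no-object} decides which queries are kept. The function `predict_instance_masks` and its thresholds are hypothetical. Real Mask2Former inference also combines class and mask scores and uses masked attention inside the decoder.

```python
import numpy as np


def predict_instance_masks(query_embed, pixel_embed, flake_logits,
                           score_thresh=0.5, mask_thresh=0.5):
    """Turn per-query decoder outputs into binary flake masks (simplified).

    query_embed:  (Q, C) mask embeddings, one per learnable query
    pixel_embed:  (C, H, W) per-pixel embeddings from the pixel decoder
    flake_logits: (Q, 2) logits for [flake, no-object] (class-agnostic stage)
    """
    # Mask logits: dot product between each query and every pixel embedding.
    mask_logits = np.einsum("qc,chw->qhw", query_embed, pixel_embed)
    mask_probs = 1.0 / (1.0 + np.exp(-mask_logits))
    # Numerically stable softmax over {flake, no-object}.
    shifted = np.exp(flake_logits - flake_logits.max(axis=1, keepdims=True))
    flake_prob = (shifted / shifted.sum(axis=1, keepdims=True))[:, 0]
    keep = flake_prob > score_thresh  # drop queries that predict "no object"
    return mask_probs[keep] > mask_thresh, flake_prob[keep]
```

Because the number of queries is fixed, the model can output any number of flakes up to Q. No thickness class is assigned at this stage.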

Stage 2 is the Arbitrary Mixture Model (AMM), a lightweight classifier that maps the optical contrast of each detected mask into a latent space and fits class‑conditional Gaussians there. The AMM is a small residual network (ResNet) with spectral normalization, trained with a standard cross‑entropy loss on the contrast distributions. Following the Deep Deterministic Uncertainty (DDU) technique, the residual connections and spectral normalization keep the embedding space smooth and sensitive to changes in the input, so that distances in the latent space remain meaningful and the posterior probability of each class can be computed analytically from the Gaussian densities. This yields calibrated class probabilities and an explicit uncertainty estimate for every prediction, which is especially valuable when dealing with low‑contrast flakes.
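The Gaussian part of a DDU-style classifier can be sketched as Gaussian discriminant analysis on feature vectors. In this minimal NumPy sketch the features are assumed to be already embedded. In MaskTerial they would come from the spectrally normalized ResNet, but any per-flake feature array, such as RGB contrast, works for illustration. The class `GaussianDensityHead` is hypothetical. It returns posterior class probabilities plus the log marginal density; a low density flags an input unlike anything seen during training.

```python
import numpy as np


class GaussianDensityHead:
    """Class-conditional Gaussians fitted on features (DDU-style sketch)."""

    def __init__(self, jitter=1e-6):
        self.jitter = jitter  # added to covariance diagonals for stability

    def fit(self, feats, labels):
        self.classes = np.unique(labels)
        d = feats.shape[1]
        self.means, self.precisions, self.log_norms, self.log_priors = [], [], [], []
        for c in self.classes:
            x = feats[labels == c]
            cov = np.cov(x, rowvar=False).reshape(d, d) + self.jitter * np.eye(d)
            _, logdet = np.linalg.slogdet(cov)
            self.means.append(x.mean(axis=0))
            self.precisions.append(np.linalg.inv(cov))
            self.log_norms.append(-0.5 * (d * np.log(2 * np.pi) + logdet))
            self.log_priors.append(np.log(len(x) / len(feats)))
        return self

    def _joint_log_density(self, feats):
        # log pi_c + log N(z | mu_c, Sigma_c) for every sample and class: (n, K)
        cols = []
        for mu, prec, ln, lp in zip(self.means, self.precisions,
                                    self.log_norms, self.log_priors):
            diff = feats - mu
            maha = np.einsum("nd,de,ne->n", diff, prec, diff)
            cols.append(lp + ln - 0.5 * maha)
        return np.stack(cols, axis=1)

    def predict(self, feats):
        joint = self._joint_log_density(feats)
        # Log-sum-exp over classes gives the log marginal density log p(z).
        m = joint.max(axis=1, keepdims=True)
        log_marginal = (m + np.log(np.exp(joint - m).sum(axis=1, keepdims=True)))[:, 0]
        posterior = np.exp(joint - log_marginal[:, None])  # Bayes' rule
        return self.classes[posterior.argmax(axis=1)], posterior, log_marginal
```

The posterior answers "which thickness class is most likely", while the marginal density answers "does this flake resemble the training data at all". Keeping both is what makes the uncertainty explicit.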

Fine‑tuning and evaluation.

To test transferability, the authors assembled eight real‑world datasets covering five materials (graphene, hBN, MoSe₂, WS₂, WSe₂). For each material they collected low, medium, and high substrate‑thickness‑variance subsets (≈5 nm, 10 nm, and 20 nm spread around a nominal 90 nm SiO₂ layer) and ensured that training and test images came from independent exfoliation runs. Fine‑tuning required only 5–10 annotated images per material. Across all datasets, MaskTerial achieved average precision (AP) improvements of 15–30 % over baseline methods such as SVM and k‑means contrast classifiers, standard CNNs, and Mask R‑CNN trained from scratch. The most striking gain was observed for hBN, where prior methods failed to detect flakes, whereas MaskTerial reached AP ≈ 0.85. Uncertainty scores successfully separated high‑confidence detections from ambiguous ones, enabling a human‑in‑the‑loop review process.
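For readers unfamiliar with the metric, average precision for instance masks can be sketched as follows. This is a simplified single-image version at one IoU threshold, using greedy matching and all-point interpolation (assumptions). The summary does not state which AP variant was used, and COCO-style AP additionally averages over IoU thresholds from 0.5 to 0.95. The functions `mask_iou` and `average_precision` are hypothetical names.

```python
import numpy as np


def mask_iou(a, b):
    """Intersection over union of two boolean masks."""
    union = np.logical_or(a, b).sum()
    return np.logical_and(a, b).sum() / union if union else 0.0


def average_precision(pred_masks, scores, gt_masks, iou_thresh=0.5):
    """AP at a single IoU threshold for one image (simplified sketch)."""
    if len(scores) == 0 or len(gt_masks) == 0:
        return 0.0
    order = np.argsort(scores)[::-1]  # highest-scoring prediction first
    matched = set()
    tp = np.zeros(len(order))
    for rank, i in enumerate(order):
        # IoU with every ground-truth mask that is still unmatched
        ious = [0.0 if j in matched else mask_iou(pred_masks[i], g)
                for j, g in enumerate(gt_masks)]
        best = int(np.argmax(ious))
        if ious[best] >= iou_thresh and best not in matched:
            matched.add(best)
            tp[rank] = 1.0
    cum_tp = np.cumsum(tp)
    precision = cum_tp / np.arange(1, len(tp) + 1)
    recall = cum_tp / len(gt_masks)
    # Interpolate: precision at rank k becomes the max precision at ranks >= k.
    precision = np.maximum.accumulate(precision[::-1])[::-1]
    recall_prev = np.concatenate([[0.0], recall[:-1]])
    return float(np.sum((recall - recall_prev) * precision))
```

For example, two ground-truth flakes detected correctly at ranks 1 and 3 with a false positive at rank 2 give AP = 0.5 · 1 + 0.5 · 2/3 ≈ 0.83. A missed hBN flake lowers recall and therefore AP.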

Key contributions and impact.

- Synthetic‑data‑driven foundation model – By leveraging physics‑based rendering and large‑scale shape mining, the authors eliminate the need for extensive manual annotation while still capturing the complex optical interference patterns that define 2D‑flake contrast.

- Decoupled detection and classification – The instance‑segmentation stage is reusable across materials; only the lightweight AMM needs retraining when a new material is introduced, dramatically reducing adaptation cost.

- Uncertainty‑aware classification – The DDU‑based Gaussian mixture provides calibrated probabilities and explicit epistemic uncertainty, a rare feature in computer‑vision pipelines for materials science.

Overall, MaskTerial demonstrates that a carefully engineered combination of synthetic pre‑training, transformer‑based segmentation, and physics‑informed probabilistic classification can overcome the long‑standing challenges of low‑contrast 2D‑material detection and scarce labeled data. The methodology is readily extensible to other microscopy domains where contrast is subtle and annotation resources are limited, suggesting a broad applicability beyond the immediate field of van der Waals heterostructure fabrication.
