Can Transfer Entropy Infer Information Flow in Neuronal Circuits for Cognitive Processing?

To infer information flow in any network of agents, it is important first and foremost to establish causal temporal relations between the nodes. Practical and automated methods that can infer causality are difficult to find, and the subject of ongoing research. While Shannon information only detects correlation, there are several information-theoretic notions of “directed information” that have successfully detected causality in some systems, in particular in the neuroscience community. However, recent work has shown that some directed information measures can sometimes inadequately estimate the extent of causal relations, or even fail to identify existing cause-effect relations between components of systems, especially if neurons contribute in a cryptographic manner to influence the effector neuron. Here, we test how often cryptographic logic emerges in an evolutionary process that generates artificial neural circuits for two fundamental cognitive tasks: motion detection and sound localization. We also test whether transfer entropy, applied to activity time-series recorded from behaving digital brains, can infer information flow when compared to a ground-truth model of causal influence constructed from connectivity and circuit logic. Our results suggest that transfer entropy will sometimes fail to infer causality when it exists, and sometimes suggest a causal connection when there is none. However, the extent of incorrect inference strongly depends on the cognitive task considered. These results emphasize the importance of understanding the fundamental logic processes that contribute to information flow in cognitive processing, and quantifying their relevance in any given nervous system.

💡 Research Summary

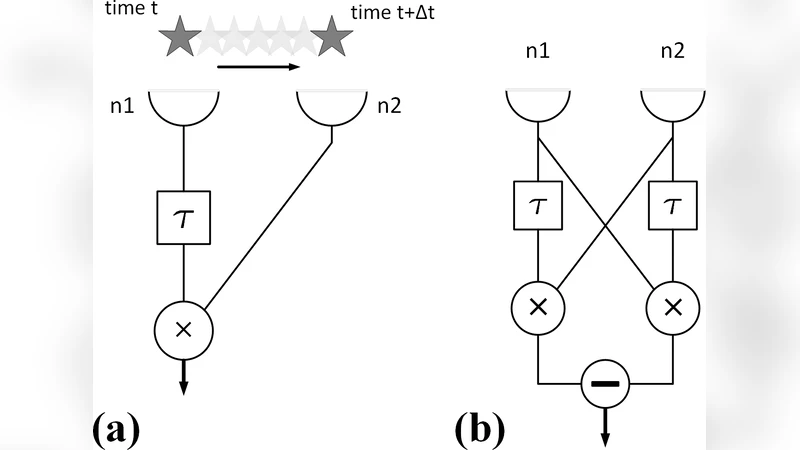

The paper investigates whether Transfer Entropy (TE), a widely used directed information measure, can reliably infer causal information flow in neuronal circuits that underlie cognitive processing. The authors first review the theoretical foundations of TE, emphasizing that it is a conditional mutual information that captures how much knowledge of a source process X reduces uncertainty about a target process Y’s future, given Y’s own past. Because TE is built on Shannon’s dyadic mutual information, it cannot capture polyadic (multi‑variable) dependencies such as those produced by XOR or XNOR logic, where the output Z is the exclusive‑or of two inputs X and Y (or its negation). In such cryptographic configurations, with independent and uniformly random inputs, the pairwise mutual informations I(X:Z) and I(Y:Z) both vanish, and consequently TE_X→Z and TE_Y→Z are zero even though the output carries one bit of entropy. The authors extend this analysis to all sixteen possible 2‑to‑1 Boolean gates, showing that only XOR and XNOR produce a full failure (the summed TE falls short of H(Z) by the full 1 bit), while the other polyadic gates, such as AND and OR, incur a smaller systematic under‑estimation (≈0.19 bits).
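The gate-by-gate comparison can be reproduced with a short calculation. The sketch below is not the authors' code: it assumes independent, uniformly random inputs that are redrawn at every time step, in which case the transfer entropy from an input to the gate output reduces to the pairwise mutual information between them. The function names (`gate_information`, `shortfall`) are illustrative.

```python
from itertools import product
from math import log2


def entropy(probs):
    """Shannon entropy in bits of an iterable of probabilities."""
    return -sum(p * log2(p) for p in probs if p > 0)


def gate_information(table):
    """Return (H(Z), I(X;Z), I(Y;Z)) for a 2-to-1 gate.

    `table` lists the outputs for (x, y) = (0,0), (0,1), (1,0), (1,1).
    Assumption: inputs are independent and uniform, so each row has p = 1/4.
    """
    joint = {}
    for (x, y), z in zip(product((0, 1), repeat=2), table):
        joint[(x, y, z)] = joint.get((x, y, z), 0.0) + 0.25

    def H(idx):
        # Entropy of the marginal over the variables at positions `idx`.
        marg = {}
        for key, p in joint.items():
            sub = tuple(key[i] for i in idx)
            marg[sub] = marg.get(sub, 0.0) + p
        return entropy(marg.values())

    h_z = H((2,))
    i_xz = H((0,)) + h_z - H((0, 2))
    i_yz = H((1,)) + h_z - H((1, 2))
    return h_z, i_xz, i_yz


def shortfall(table):
    """Bits of output entropy not accounted for by the two pairwise terms."""
    h_z, i_xz, i_yz = gate_information(table)
    return h_z - (i_xz + i_yz)


if __name__ == "__main__":
    for table in product((0, 1), repeat=4):  # all sixteen gates
        h_z, i_xz, i_yz = gate_information(table)
        print(table, f"H(Z)={h_z:.3f} I(X;Z)={i_xz:.3f} "
                     f"I(Y;Z)={i_yz:.3f} shortfall={shortfall(table):.3f}")
```

Under these assumptions, XOR `(0, 1, 1, 0)` and XNOR `(1, 0, 0, 1)` show a shortfall of 1 bit, the eight AND-like and OR-like gates show about 0.19 bits, and the constant and single-input gates show none.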

To assess how often such problematic gates appear in realistic neural circuits, the study employs Markov Brains (MBs), evolvable networks of binary neurons connected by deterministic 2‑to‑1 logic gates. Using a genetic algorithm, populations of MBs are evolved to solve two canonical cognitive tasks: (1) visual motion detection based on a Reichardt detector architecture, and (2) auditory sound‑localization based on interaural time differences. Hundreds of successful MBs are generated for each task, and their internal wiring is extracted to obtain a ground‑truth causal graph.
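To make the setup concrete, the following is a minimal sketch of a network of binary neurons updated by deterministic 2‑to‑1 gates, together with a ground-truth edge list derived from both wiring and gate logic. It is an illustration under stated assumptions, not the authors' implementation: the `Gate` class, `step`, and `causal_edges` are hypothetical names, gates that write to the same neuron are assumed to be OR-combined, and the tiny delay-and-compare circuit is hand-built rather than evolved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gate:
    in_a: int      # index of first input neuron
    in_b: int      # index of second input neuron
    out: int       # index of output neuron
    table: tuple   # outputs for (a, b) = (0,0), (0,1), (1,0), (1,1)


def step(state, gates):
    """One synchronous update: every gate reads the current state."""
    nxt = [0] * len(state)
    for g in gates:
        bit = g.table[2 * state[g.in_a] + state[g.in_b]]
        nxt[g.out] |= bit  # assumption: multiple writers are OR-combined
    return nxt


def causal_edges(gates):
    """Edges (source, target), kept only if the gate logic uses the input."""
    edges = set()
    for g in gates:
        t = g.table
        if t[0] != t[2] or t[1] != t[3]:   # output changes when a flips
            edges.add((g.in_a, g.out))
        if t[0] != t[1] or t[2] != t[3]:   # output changes when b flips
            edges.add((g.in_b, g.out))
    return edges


# Hand-built half of a delay-and-compare motion detector (illustrative only):
# neurons 0 and 1 are sensors, 2 holds a one-step-delayed copy of sensor 0,
# and 3 fires when the delayed left signal coincides with the right sensor.
GATES = [
    Gate(in_a=0, in_b=1, out=2, table=(0, 0, 1, 1)),  # copy of input a
    Gate(in_a=2, in_b=1, out=3, table=(0, 0, 0, 1)),  # AND
]


def run(stimulus, gates=GATES, n_neurons=4):
    """Feed a sequence of (left, right) sensor readings; return final state."""
    state = [0] * n_neurons
    for left, right in stimulus:
        state[0], state[1] = left, right   # environment sets the sensors
        state = step(state, gates)
    return state
```

With this circuit, a stimulus moving from the left sensor to the right one, `run([(1, 0), (0, 1)])`, leaves neuron 3 active, while the reverse order does not. Note that `causal_edges` omits the wire from neuron 1 to neuron 2 because the copy gate ignores its second input, which is one way connectivity and logic can jointly define a ground truth.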

Statistical analysis of the evolved circuits reveals a stark contrast between the tasks. Motion‑detection MBs rely almost exclusively on simple gates (AND, OR, NOT) and contain virtually no XOR/XNOR gates (<1 % of all gates). Consequently, TE applied to time‑series recordings from these brains accurately recovers the true causal links, with a false‑positive/false‑negative rate below 2 %. In contrast, sound‑localization MBs frequently employ XOR‑type operations to compare delayed signals from the two ears; about 12 % of their gates are cryptographic. When TE is computed on recordings from these brains, it systematically misses the causal influence of each input on the XOR gate (both TE_X→Z and TE_Y→Z are zero) and sometimes infers spurious connections elsewhere. Overall, the network that TE infers for sound‑localization MBs deviates from the ground truth by about 15 %.
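The effect on recorded activity can be illustrated with a simple plug-in estimate of transfer entropy. The sketch below is an assumption-laden illustration rather than the authors' pipeline: it uses a history length of one time step, simulates a single isolated gate driven by random inputs, and the names `transfer_entropy` and `simulate_gate` are hypothetical.

```python
import random
from collections import Counter
from math import log2


def transfer_entropy(source, target):
    """Plug-in estimate of TE(source -> target) in bits, history length 1.

    TE = sum p(z', z, x) * log2( p(z' | z, x) / p(z' | z) ),
    where z' is the target's next value, z its current value,
    and x the source's current value.
    """
    future, past, src = target[1:], target[:-1], source[:-1]
    n = len(future)
    c_fps = Counter(zip(future, past, src))
    c_ps = Counter(zip(past, src))
    c_fp = Counter(zip(future, past))
    c_p = Counter(past)
    te = 0.0
    for (f, p, s), count in c_fps.items():
        cond_full = count / c_ps[(p, s)]      # p(z' | z, x)
        cond_self = c_fp[(f, p)] / c_p[p]     # p(z' | z)
        te += (count / n) * log2(cond_full / cond_self)
    return te


def simulate_gate(gate, steps, seed=0):
    """Random binary inputs x, y; output z[t+1] = gate(x[t], y[t])."""
    rng = random.Random(seed)
    x = [rng.randint(0, 1) for _ in range(steps)]
    y = [rng.randint(0, 1) for _ in range(steps)]
    z = [0] + [gate(a, b) for a, b in zip(x[:-1], y[:-1])]
    return x, y, z


if __name__ == "__main__":
    for name, gate in [("XOR", lambda a, b: a ^ b),
                       ("AND", lambda a, b: a & b)]:
        x, y, z = simulate_gate(gate, steps=50_000)
        print(name, round(transfer_entropy(x, z), 3),
              round(transfer_entropy(y, z), 3))
```

For the XOR gate both estimates come out close to zero even though each input is a genuine cause of the output, whereas for the AND gate each estimate is close to 0.31 bits. Plug-in estimates carry a small positive bias on finite data, so in practice a significance threshold would be needed before declaring a link present or absent.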

The authors further validate these findings by performing “knock‑out” experiments: removing a specific gate from the circuit and measuring the change in task performance. Removing gates identified as XOR/XNOR produces disproportionately large performance drops, confirming their functional importance even though their inputs are invisible to TE.

The discussion highlights that TE’s failure is not a flaw in its formulation but a fundamental limitation of any measure based solely on pairwise Shannon information. The paper suggests that higher‑order information decomposition methods (e.g., Partial Information Decomposition) can capture synergistic contributions of multiple sources, albeit at higher computational cost and data requirements. The authors caution researchers to assess the prevalence of polyadic logic in the system under study before relying on TE for causal inference, and to consider complementary analyses when cryptographic or synergistic interactions are suspected.

In summary, the study demonstrates that Transfer Entropy can be a powerful tool for inferring causal information flow in neural circuits that are dominated by simple, dyadic interactions, but it can substantially mislead when the circuitry contains cryptographic logic that distributes information across multiple inputs. The work underscores the need for a nuanced application of information‑theoretic causality measures in neuroscience and provides a concrete benchmark using evolvable digital brains.
