Training Stiff Neural Ordinary Differential Equations with Explicit Exponential Integration Methods

Stiff ordinary differential equations (ODEs) are common in many science and engineering fields, but standard neural ODE approaches struggle to accurately learn these stiff systems, posing a significant barrier to the widespread adoption of neural ODEs. In our earlier work, we addressed this challenge by utilizing single-step implicit methods for solving stiff neural ODEs. While effective, these implicit methods are computationally costly and can be complex to implement. This paper expands on our earlier work by exploring explicit exponential integration methods as a more efficient alternative. We evaluate the potential of these explicit methods to handle stiff dynamics in neural ODEs, aiming to enhance their applicability to a broader range of scientific and engineering problems. We found the integrating factor Euler (IF Euler) method to excel in stability and efficiency. While implicit schemes failed to train the stiff van der Pol oscillator, the IF Euler method succeeded, even with large step sizes. However, IF Euler’s first-order accuracy limits its use, leaving the development of higher-order methods for stiff neural ODEs an open research problem.

💡 Research Summary

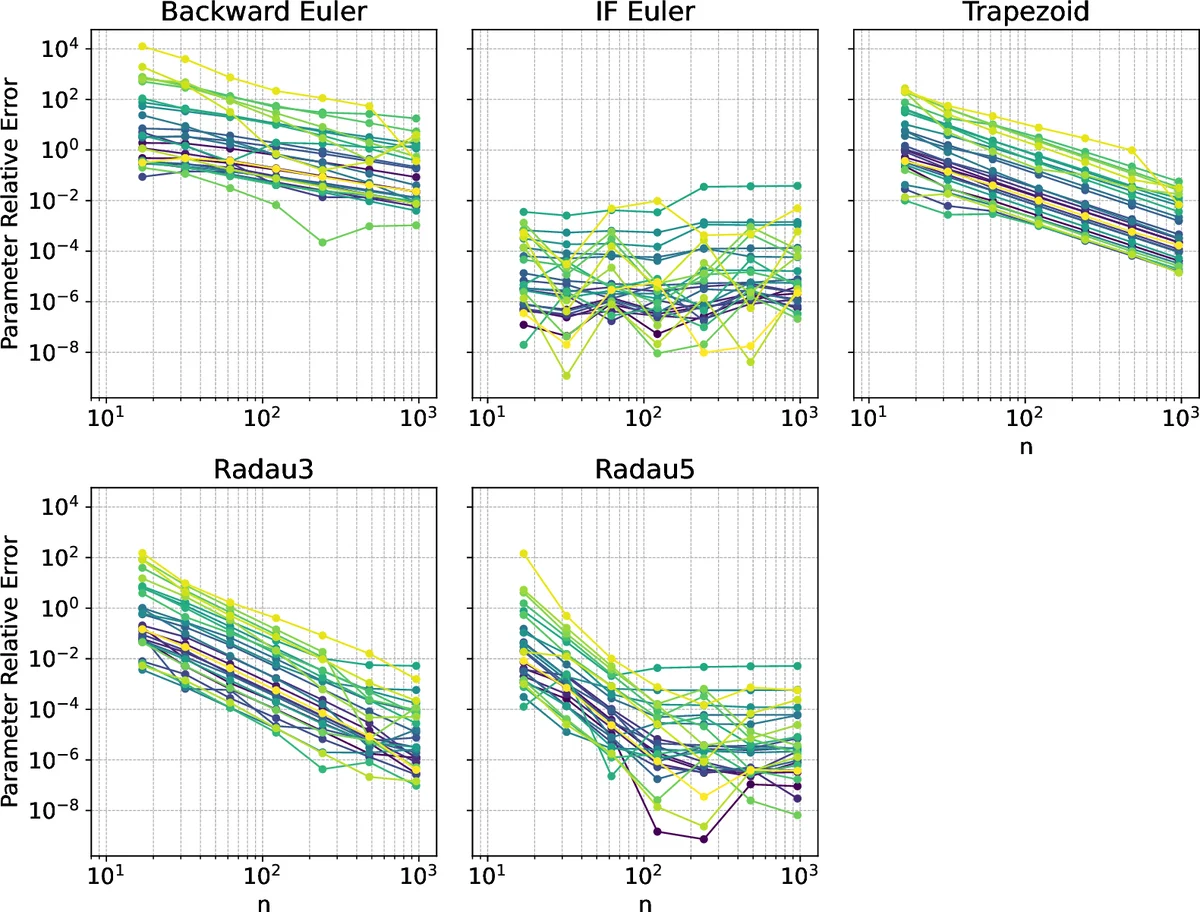

This paper addresses a fundamental bottleneck in training neural ordinary differential equations (neural ODEs) on stiff dynamical systems: the trade-off between numerical stability and computational cost. Prior work demonstrated that single-step implicit solvers (e.g., backward Euler, the trapezoid rule, and the fifth-order Radau IIA method) can successfully train stiff neural ODEs, but each integration step requires solving a nonlinear system, leading to high per-step overhead and extensive Jacobian evaluations. To overcome this, the authors explore explicit exponential integration methods, focusing on the integrating-factor Euler (IF Euler) scheme, a first-order explicit method that treats the stiff linear part analytically via matrix exponentials while handling the remaining nonlinear part explicitly.

The paper first reviews the nature of stiffness: a large eigenvalue spread in the Jacobian that forces conventional explicit methods to adopt prohibitively small step sizes. It then introduces the exponential integration framework, where the ODE is split into a stiff linear component $A$ and a nonlinear remainder $g(y)$, so that $y' = Ay + g(y)$. The IF Euler update reads:

$$y_{n+1} = e^{hA}\,y_n + h\,\varphi_1(hA)\,g(y_n), \qquad \varphi_1(z) = \frac{e^{z} - 1}{z}.$$

By computing the matrix exponential (or a Krylov-subspace approximation) once per step, the method inherits the unconditional stability of implicit schemes without requiring a nonlinear solve.
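As a rough illustration of the update formula above, the following sketch applies it to a toy two-mode problem and compares it with forward Euler at the same step size. It assumes a diagonal $A$, so that $e^{hA}$ and $\varphi_1(hA)$ reduce to scalar functions of each eigenvalue; the names `phi1`, `exp_euler_step`, `forward_euler_step`, and the test problem itself are hypothetical and not taken from the paper. For a general matrix, a matrix exponential routine such as `scipy.linalg.expm` would be needed instead.

```python
import math

def phi1(z):
    """phi_1(z) = (e^z - 1) / z, with the limit phi_1(0) = 1."""
    if z == 0.0:
        return 1.0
    return math.expm1(z) / z  # expm1 avoids cancellation for small |z|

def exp_euler_step(y, lam, g, h):
    """One step y_{n+1} = e^{hA} y_n + h * phi_1(hA) g(y_n), A = diag(lam)."""
    gy = g(y)
    return [
        math.exp(h * l) * yi + h * phi1(h * l) * gi
        for yi, l, gi in zip(y, lam, gy)
    ]

def forward_euler_step(y, lam, g, h):
    """One forward Euler step for y' = A y + g(y), A = diag(lam)."""
    gy = g(y)
    return [yi + h * (l * yi + gi) for yi, l, gi in zip(y, lam, gy)]

# Illustrative stiff problem: one fast mode (-1000) and one slow mode (-1),
# with a constant forcing term standing in for the nonlinear remainder g.
lam = [-1000.0, -1.0]
c = [1000.0, 2.0]

def g(y):
    return c

h = 0.1          # far above the forward Euler limit 2/1000 for the fast mode
steps = 20       # integrate to t = 2.0
y_exp = [0.0, 0.0]
y_fe = [0.0, 0.0]
for _ in range(steps):
    y_exp = exp_euler_step(y_exp, lam, g, h)
    y_fe = forward_euler_step(y_fe, lam, g, h)

# Exact solution for constant forcing: y_i(t) = (-c_i/lam_i) * (1 - e^{lam_i t})
t_end = h * steps
y_exact = [(-ci / l) * (1.0 - math.exp(l * t_end)) for ci, l in zip(c, lam)]
print("exponential:", y_exp)
print("forward Euler:", y_fe)
print("exact:", y_exact)
```

With a constant forcing term the exponential update reproduces the exact solution up to rounding, while forward Euler's fast component grows without bound at this step size. A learned, state-dependent $g$ would not be integrated exactly; the method would then be first-order accurate.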

Experimental validation uses the classic stiff van der Pol oscillator with $\mu = 1000$. A standard explicit Runge-Kutta-Fehlberg integrator needs over 400k time points and nearly 3 million function evaluations to remain stable, whereas a fifth-order implicit Radau IIA reduces this to under 1k points but still incurs Jacobian solves. IF Euler, with a relatively large step size (e.g., $h = 0.1$), integrates the same trajectory accurately using roughly 10k steps and about 1.2k function evaluations, demonstrating stability comparable to implicit methods.
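For reference, the van der Pol equation $x'' - \mu(1 - x^2)\,x' + x = 0$ can be written as a first-order system in the textbook way shown below. This is only one common form; the paper may use a different scaling or change of variables, and the split into $A$ and $g(y)$ is not specified here.

$$
\begin{aligned}
y_1' &= y_2, \\
y_2' &= \mu\,(1 - y_1^2)\,y_2 - y_1, \qquad \mu = 1000.
\end{aligned}
$$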

In the neural ODE training context, the authors adopt a Discretize‑Optimize (Disc‑Opt) strategy: the ODE is discretized first, then the loss is minimized directly on the discrete trajectory using automatic differentiation. This avoids the adjoint‑based gradient computation required by Optimize‑Discretize (Opt‑Disc), which is notoriously unstable for stiff problems. With Disc‑Opt, IF Euler’s cheap forward passes translate into faster back‑propagation, yielding a three‑fold speed‑up over Radau 5 in wall‑clock time while achieving similar validation loss.
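The Disc-Opt idea can be sketched on a toy problem: discretize first, then differentiate the discrete recurrence itself. The example below is a minimal sketch under strong assumptions. It uses a scalar ODE $y' = \lambda y + g_\theta(y)$ with a one-parameter stand-in $g_\theta(y) = -\lambda\theta$ in place of a neural network, and it propagates the derivative $dy_n/d\theta$ through each step by hand rather than using an automatic differentiation framework. All names (`rollout`, `theta`, `lr`) and the synthetic data are hypothetical.

```python
import math

def phi1(z):
    """phi_1(z) = (e^z - 1) / z, with the limit phi_1(0) = 1."""
    return 1.0 if z == 0.0 else math.expm1(z) / z

def rollout(theta, lam, h, steps, y0=0.0):
    """Discretize y' = lam*y + g_theta(y) with the exponential update and
    return the states y_1..y_N together with dy_n/dtheta for each step."""
    decay = math.exp(h * lam)        # e^{h*lam}
    weight = h * phi1(h * lam)       # h * phi_1(h*lam)
    y, s = y0, 0.0                   # state and its sensitivity to theta
    ys, ss = [], []
    for _ in range(steps):
        g = -lam * theta             # toy stand-in for a network output
        dg_dtheta = -lam             # g does not depend on y here
        y = decay * y + weight * g
        s = decay * s + weight * dg_dtheta   # chain rule through the step
        ys.append(y)
        ss.append(s)
    return ys, ss

lam, h, steps = -1000.0, 0.1, 20
targets, _ = rollout(2.0, lam, h, steps)   # synthetic data from theta = 2

theta, lr = 0.0, 0.25
for _ in range(50):
    ys, ss = rollout(theta, lam, h, steps)
    # Gradient of the mean squared error on the discrete trajectory.
    grad = sum(2.0 * (y - t) * s for y, t, s in zip(ys, targets, ss)) / steps
    theta -= lr * grad
print(theta)
```

Because the gradient is taken through the same discrete steps used in the forward pass, no separate adjoint ODE has to be integrated. In practice a framework with reverse-mode automatic differentiation would replace the hand-written sensitivity recurrence.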

The authors also test higher‑order exponential schemes (e.g., IF RK2, IF RK3) but find that their stability regions shrink dramatically for stiff eigenvalues, making them impractical without additional stabilization. Consequently, the paper emphasizes that IF Euler’s first‑order accuracy is acceptable for many scientific learning tasks where long‑term qualitative behavior or parameter inference is more important than high‑order local accuracy.

Key contributions include: (1) demonstrating that an explicit exponential integrator can train stiff neural ODEs where traditional explicit methods fail; (2) showing that IF Euler matches implicit solvers in stability while dramatically reducing function‑evaluation and memory costs; (3) providing a thorough comparison of Disc‑Opt versus Opt‑Disc in the stiff regime, favoring Disc‑Opt for its robustness; and (4) outlining practical implementation details such as using Krylov subspace methods for matrix exponentials, which keep the per‑step cost low even for moderately sized systems.

The paper concludes that explicit exponential integration offers a promising, low‑overhead alternative for stiff neural ODE training, especially when combined with Disc‑Opt. However, the first‑order nature of IF Euler limits its applicability to problems demanding high precision. Future work is suggested in developing stable higher‑order exponential schemes, efficient large‑scale matrix exponential approximations, and hybrid approaches that blend exponential integration with physics‑informed regularization. Overall, the study provides a solid foundation for expanding neural ODE applicability to a broader class of stiff scientific and engineering problems.
