HRRPGraphNet: Make HRRPs to Be Graphs for Efficient Target Recognition

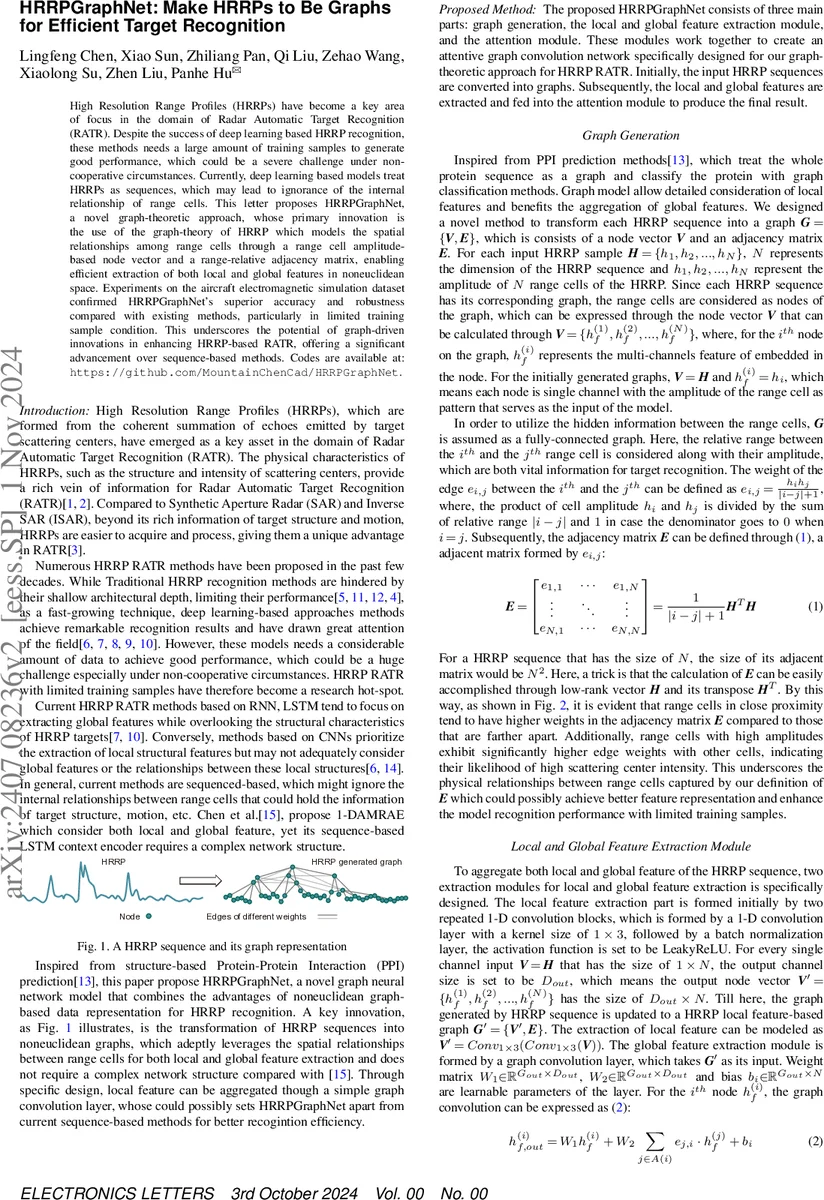

High Resolution Range Profiles (HRRP) have become a key area of focus in the domain of Radar Automatic Target Recognition (RATR). Despite the success of deep learning based HRRP recognition, these methods needs a large amount of training samples to generate good performance, which could be a severe challenge under non-cooperative circumstances. Currently, deep learning based models treat HRRP as sequences, which may lead to ignorance of the internal relationship of range cells. This letter introduces HRRPGraphNet, whose pivotal innovation is the transformation of HRRP data into a novel graph structure, utilizing a range cell amplitude(hyphen)based node vector and a range(hyphen)relative adjacency matrix. This graph(hyphen)based approach facilitates both local feature extraction via one(hyphen)dimensional convolution layers, global feature extraction through a graph convolution layer and a attention module. Experiments on the aircraft electromagnetic simulation dataset confirmed HRRPGraphNet superior accuracy and robustness, particularly in limited training sample environments, underscoring the potential of graph(hyphen)driven innovations in HRRP(hyphen)based RATR.

💡 Research Summary

The paper introduces HRRPGraphNet, a novel graph‑based neural network designed for radar automatic target recognition (RATR) using high‑resolution range profiles (HRRPs). Traditional deep‑learning approaches treat HRRPs as one‑dimensional sequences, which either focus on global temporal dependencies (RNN/LSTM) or local spatial patterns (CNN) but ignore the intrinsic relationships among range cells. HRRPGraphNet addresses this gap by converting each HRRP into a fully‑connected graph: each range cell becomes a node whose feature is the cell’s amplitude, and the edge weight between nodes i and j is defined as (e_{i,j}= \frac{h_i h_j}{|i-j|+1}). This formulation embeds both amplitude similarity and physical proximity, reflecting radar scattering physics (nearby cells with strong returns are more strongly linked).

The architecture consists of three stages. First, a local feature extraction module applies two successive 1‑D convolution blocks (kernel size 1×3, batch normalization, LeakyReLU) to the node feature matrix, expanding the channel dimension from 1 to (D_{out}). This step captures fine‑grained, locality‑oriented patterns akin to conventional CNNs. Second, a graph convolutional layer aggregates global context: for each node i, the output is (W_1 h_i^{(f)} + W_2 \sum_{j\in A(i)} e_{j,i} h_j^{(f)} + b_i), where (A(i)) denotes all neighboring nodes (the graph is fully connected). Because the adjacency matrix already encodes distance‑weighted amplitude relationships, the GCN effectively fuses local descriptors with long‑range structural cues.

Finally, a linear attention module computes a scalar score for each node via (s_i = h_i^{(out)T} W_{att} + b_{att}), normalizes the scores with Softmax to obtain attention coefficients (\alpha_i), and produces a weighted sum (V_{att} = \sum_i \alpha_i h_i^{(out)}). This vector is passed through a fully‑connected layer and Log‑Softmax to generate class probabilities. The attention mechanism emphasizes nodes corresponding to strong scattering centers, which is especially beneficial when training data are scarce.

Experiments were conducted on an X‑band electromagnetic simulation dataset comprising three aircraft types (F‑15, F‑18, IDF) with four polarizations and 501 range cells per HRRP. Two training regimes were evaluated: 900 samples per class (abundant) and 300 samples per class (few‑shot). HRRPGraphNet achieved average accuracies of 91.56 % and 90.78 % respectively, outperforming a wide range of baselines including SVM, LDA, MSFKSPP‑MMC, auto‑encoders, CNN, RNN, 1‑D ResNet, LSTM, 1‑DRCAE, and 1‑D AMRAE. Notably, while most deep models suffered a steep accuracy drop under the 300‑sample condition, HRRPGraphNet maintained robust performance, demonstrating its superior generalization with limited data.

The authors acknowledge that the fully‑connected adjacency matrix incurs (O(N^2)) memory and computational cost, which limits scalability for very long HRRPs. They mitigate this by exploiting low‑rank matrix multiplication (using the outer product of the amplitude vector and its transpose) but suggest future work on sparse graph constructions or adaptive edge pruning to reduce overhead.

In summary, HRRPGraphNet showcases how transforming HRRP signals into graph structures enables simultaneous extraction of local and global features, leading to higher recognition accuracy and resilience to data scarcity. This work opens a promising direction for applying graph neural networks to radar signal processing and other domains where non‑Euclidean relationships among sequential measurements are critical.

Comments & Academic Discussion

Loading comments...

Leave a Comment