A kernel-based framework for learning graded relations from data

Driven by a large number of potential applications in areas like bioinformatics, information retrieval and social network analysis, the problem setting of inferring relations between pairs of data objects has recently been investigated quite intensiv…

Authors: Willem Waegeman, Tapio Pahikkala, Antti Airola

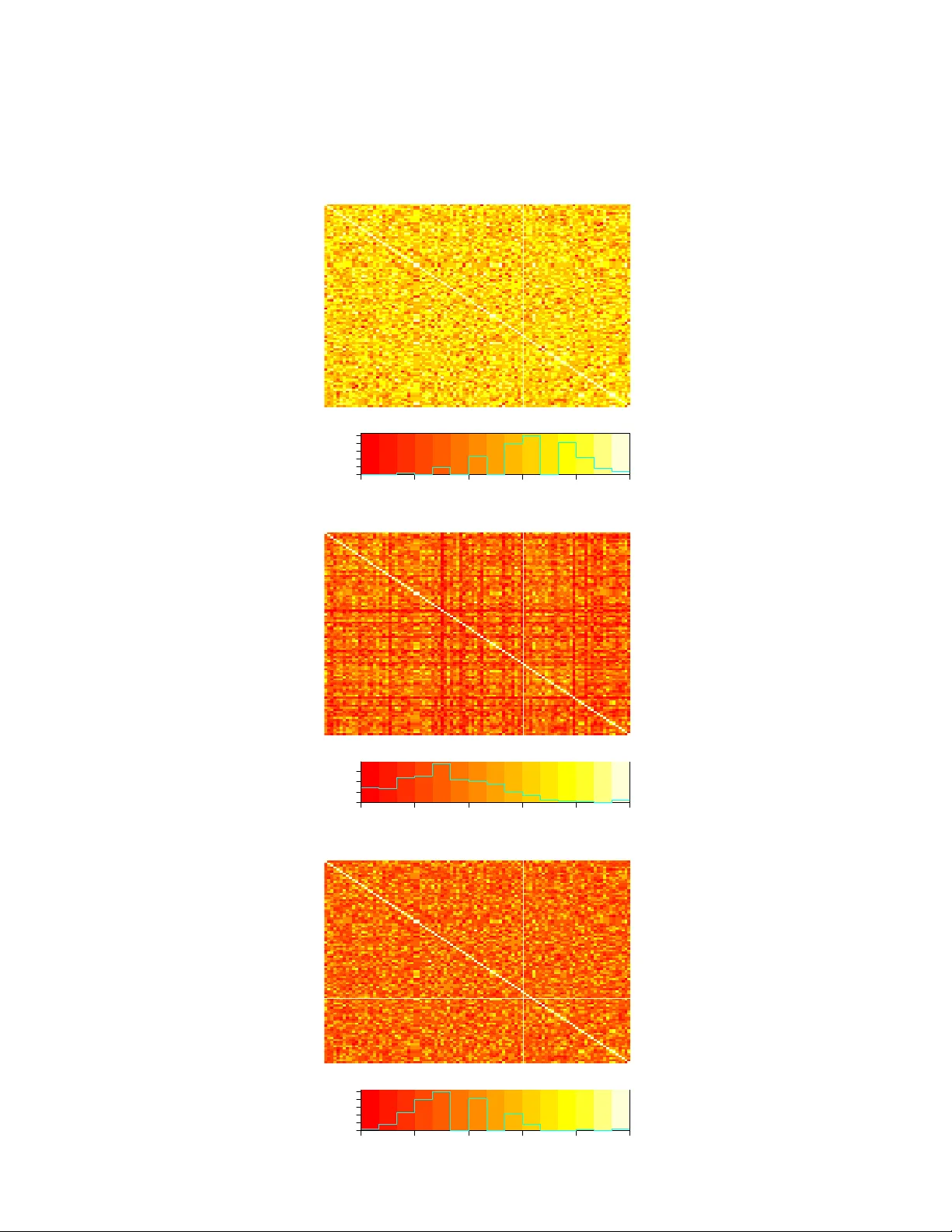

A k ernel-based framew ork for learning graded relations from data Willem W aegeman, T apio P ahikk ala, An tti Airola, T apio Salak oski, Michiel Stock, Bernard De Baets Abstract Driv en b y a large num b er of p otential applications in areas like bioin- formatics, information retriev al and so cial netw ork analysis, the problem setting of inferring relations b et ween pairs of data ob jects has recently b een inv estigated quite intensiv ely in the machine learning communit y . T o this end, curren t approac hes t ypically consider datasets containing crisp relations, so that standard classification metho ds can b e adopted. Ho wev er, relations b etw een ob jects like similarities and preferences are often expressed in a graded manner in real-world applications. A gen- eral kernel-based framew ork for learning relations from data is introduced here. It extends existing approaches because both crisp and graded rela- tions are considered, and it unifies existing approac hes because different t yp es of graded relations can b e mo deled, including symmetric and recip- ro cal relations. This framew ork establishes imp ortant links b et ween recen t dev elopments in fuzzy set theory and machine learning. Its usefulness is demonstrated through v arious exp eriments on synthetic and real-world data. 1 In tro duction Relational data o ccurs in many predictive mo deling tasks, such as forecasting the winner in tw o-play er computer games [7], predicting proteins that interact with other proteins in bioinformatics [67], retrieving do cumen ts that are similar to a target document in text mining [68], inv estigating the persons that are friends of eac h other on social net work sites [57], etc. All these examples represen t fields of application in which sp ecific machine learning and data mining algorithms ha ve been successfully developed to infer relations from data; pairwise relations, to b e more sp ecific. The t ypical learning scenario in suc h situations can b e summarized as fol- lo ws. Given a dataset of kno wn relations b et ween pairs of ob jects and a feature represen tation of these ob jects in terms of v ariables that might characterize the relations, the goal usually consists of inferring a statistical mo del that takes t wo ob jects as input and predicts whether the relation of interest o ccurs for these t wo ob jects. Moreo ver, since one aims to disco ver unkno wn relations, a go o d 1 learning algorithm should b e able to construct a predictive mo del that can gen- eralize for unseen data, i.e., pairs of ob jects for which at least one of the tw o ob jects w as not used to construct the model. As a result of the transition from predictiv e mo dels for single ob jects to pairs of ob jects, new adv anced learning algorithms need to b e developed, resulting in new challenges with regard to mo del construction, computational tractability and model assessmen t. As relations b et ween ob jects can b e observed in man y different forms, this general problem setting provides links to sev eral subfields of machine learning, lik e statistical relational learning [13], graph mining [61], metric learning [66] and preference learning [25]. More specifically , from a graph-theoretic persp ec- tiv e, learning a relation can b e formulated as learning edges in a graph where the no des represent information of the data ob jects; from a metric learning p erspective, the relation that we aim to learn should satisfy some w ell-defined prop erties lik e p ositiv e definiteness, transitivit y or the triangle inequality; and from a preference learning p erspective, the relation expresses a (degree of ) pref- erence in a pairwise comparison of data ob jects. The topic of learning relations b et ween ob jects is also closely related to recen t developmen ts in fuzzy set theory . This article will elab orate on these connections via tw o imp ortant contributions: (1) the extension of the typical setting of learning crisp relations to real-v alued and ordinal-v alued relations and (2) the inclusion of domain knowledge ab out relations into the inference pro cess by explicit modeling of mathematical properties of these relations. F or algorithmic simplicity , one can observe that many approaches only learn crisp relations, that is relations with only 0 and 1 as p ossible v alues, so that standard binary classifiers can b e mo dified. In this context, consider examples as inferring protein-protein interaction netw orks or metab olic netw orks in bioinformatics [21, 67]. Ho wev er, graded relations are obse rv ed in many real-w orld applications [17], resulting in a need for new algorithms that take graded relational information in to account. F urthermore, the properties of graded relations ha ve b een inv es- tigated intensiv ely in the recent fuzzy logic literature 1 , and these prop erties are v ery useful to analyze and improv e curren t algorithms. Using mathematical prop erties of graded relations, constraints can b e imp osed for incorp orating do- main knowledge in the learning pro cess, to impro ve predictiv e p erformance or simply to guarantee that a relation with the right prop erties is learned. This is definitely the case for prop erties like transitivity when learning similarity relations and preference relations – see e.g. [9, 10, 15, 55], but even v ery basic prop erties lik e symmetry , antisymmetry or reciprocity already provide domain kno wledge that can steer the learning pro cess. F or example, in so cial netw ork analysis, the notion “p erson A b eing a friend of p erson B” should b e considered as a symmetric relation, while the notion “p erson A defeats p erson B in a chess game” will b e an tisymmetric (or, equiv alently , reciprocal). Nev ertheless, man y 1 Often the term fuzzy relation is used in the fuzzy set literature to refer to graded relations. How ever, fuzzy relations should b e seen as a sub class of graded relations. F or example, reciprocal relations should not b e considered as fuzzy relations, b ecause they often exhibit a probabilistic semantics rather than a fuzzy semantics. 2 examples exist, to o, where neither symmetry nor antisymmetry necessarily hold, lik e the notion “person A trusts p erson B”. In this pap er we present a general kernel-based approach that unifies all the ab ov e cases into one general framework where domain knowledge can b e easily sp ecified by choosing a prop er kernel and mo del structure, while different learning settings are distinguished by means of the loss function. Let Q ( v , v 0 ) b e a binary relation on an ob ject space V , then the following learning settings will b e considered in particular: • Crisp relations: when the restriction is made that Q : V 2 → { 0 , 1 } , we arriv e at a binary classification task with pairs of ob jects as input for the classifier. • [0 , 1]-v alued relations: here it is allo wed that relations can tak e the form Q : V 2 → [0 , 1], resulting in a regression t yp e of learning setting. The re- striction to the interv al [0 , 1] is predominantly made b ecause many math- ematical frameworks in fields like fuzzy set theory and decision theory are built up on such relations, using the notion of a fuzzy relation, but in gen- eral one can account quite easily for real-graded relations by applying a scaling op eration from R to [0 , 1]. • Ordinal-v alued relations: situated somewhat in the middle b etw een the other t wo settings, here it is assumed that the actual v alues of the relation do not matter but rather the provided order information should b e learned. F urthermore, one can in tegrate differen t types of domain kno wledge in our framew ork, b y guaran teeing that certain prop erties are satisfied. The follo wing cases can be distinguished: • Symmetric relations. Applications arise in man y domains and metric learning or learning similarity measures can be seen as sp ecial cases that require additional prop erties to hold, such as the triangle inequality for metrics and p ositive definiteness or transitivity prop erties for similarity measures. As shown b elo w, learning symmetric relations can b e in ter- preted as learning edges in an undirected graph. • Recipro cal or antisymmetric relations. Applications arise here in domains suc h as preference learning, game theory and bioinformatics for represent- ing preference relations, choice probabilities, winning probabilities, gene regulation, etc. W e will provide a formal definition b elow, but, given a rescaling op eration from R to [0 , 1], antisymmetric relations can b e con- v erted in to reciprocal relations. Similar to symmetric relations, transitiv- it y prop erties t ypically guarantee additional constraints that are definitely required for certain applications. It is, for example, w ell known in decision theory and preference modeling that transitive preference relations result in utility functions [6, 36]. Learning reciprocal or antisymmetric relations can b e in terpreted as learning edges in a directed graph. • Ordinary binary relations. Man y applications can b e found where nei- ther symmetry nor recipro cit y holds. F rom a graph inference p ersp ectiv e, 3 learning such relations should be seen as learning the edges in a bidirec- tional graph, where edges in one direction do not imp ose constraints on edges in the other direction. Indeed, the framew ork that w e prop ose below strongly relies on graphs, where no des represent the data ob jects that are studied and the edges represen t the relations present in the training set. The w eigh ts on the edges characterize the v alues of known relations, while unconnected no des indicate pairs of ob jects for which the unknown relation needs to b e predicted. The left graph in Fig- ure 1 visualizes a toy example representing the most general case where neither symmetry nor recipro cit y holds. Dep ending on the application, the learning algorithm should try to predict the relations for three t yp es of ob ject pairs: • pairs of ob jects that are already presen t in the training dataset b y means of other edges, like the pair (A,B), • pairs of ob jects for which one of the tw o ob jects o ccurs in the training dataset, like the pair (E,F), • pairs of ob jects for which none of the t wo ob jects is observed during train- ing, like the pair (F,G). The graphs on the righ t-hand side in Figure 1 sho w examples of specific types of relations that are co vered b y our framework. The differences b etw een these relations will become more clear in the following sections. 2 General framew ork 2.1 Notation and basic concepts Let us start with in tro ducing some notations. W e assume that the data is structured as a graph G = ( V , E , Q ), where V corresp onds to the set of no des v and E ⊆ V 2 represen ts the set of edges e , for which training labels are pro vided in terms of relations. Moreov er, these relations are represen ted by training w eights y e on the edges, generated from an unknown underlying relation Q : V 2 → [0 , 1]. Relations are required to take v alues in the interv al [0 , 1] b ecause some prop erties that we need are historically defined for suc h relations, but an extension to real- graded relations h : V 2 → R can alwa ys b e realized. Consider b ∈ R + and an increasing isomorphism σ : [ − b, b ] → [0 , 1] that satisfies σ ( x ) = 1 − σ ( − x ), then w e consider the R → [0 , 1] mapping ∇ defined b y: ∇ ( x ) = 0 , if x ≤ − b σ ( x ) , if − b ≤ x ≤ b 1 , if b ≤ x and its in verse ∇ − 1 = σ − 1 . 4 A 0.8 D B 0.2 0.6 C 0.7 E 0.4 1 0.6 0.5 0.3 F G 0.9 A 0 C B 1 1 (a) C, R, T A 1 C B 1 1 (b) C, R, I A 1 C B 1 1 (c) C, S, T A 0 C B 1 1 (d) C, S, I A 0.2 C B 0.7 0.6 (e) G, R, T A 0.8 C B 0.7 0.6 (f ) G, R, I A 0.8 C B 0.7 0.6 (g) G, S, T A 0.2 C B 0.7 0.6 (h) G, S, I Figure 1: Left: example of a multi-graph representing the most general case, where no additional prop erties of relations are assumed. Righ t: examples of eigh t different t yp es of relations in a graph of cardinality three. The following relational prop erties are illustrated: (C) crisp, (G) graded, (R) recipro cal, (S) symmetric, (T) transitive and (I) intransitiv e. F or the recipro cal relations, (I) refers to a relation that do es not satisfy w eak stochastic transitivity , while (T) is sho wing an example of a relation fulfilling strong sto chastic transitivity . F or the symmetric relations, (I) refers a relation that do es not satisfy T -transitivit y w.r.t. the Luk asiewicz t-norm T L ( a, b ) = max( a + b − 1 , 0), while (T) is showing an example of a relation that fulfills T -transitivity w.r.t. the pro duct t-norm T P ( a, b ) = ab . See Section 4 for formal definitions of transitivit y . 5 An y real-v alued relation h : V 2 → R can b e transformed into a [0 , 1]-v alued relation Q as follows: Q ( v , v 0 ) = ∇ ( h ( v , v 0 )) , ∀ ( v , v 0 ) ∈ V 2 , (1) and conv ersely by means of ∇ − 1 . In what follows we tacitly assume that ∇ has b een fixed. F ollowing the standard notations for kernel metho ds, w e formulate our learn- ing problem as the selection of a suitable function h ∈ H , with H a certain hy- p othesis space, in particular a reproducing k ernel Hilbert space (RKHS). More sp ecifically , the RKHS supports in our case hypotheses h : V 2 → R denoted as h ( e ) = w T Φ( e ) , with w a vector of parameters that needs to b e estimated from training data, Φ a joint feature mapping for edges in the graph (see b elow) and a T the transp ose of a vector a . Let us denote a training dataset of cardinalit y q = |E | as a set T = { ( e, y e ) | e ∈ E } of input-label pairs, then w e formally consider the following optimization problem, in which w e select an appropriate hypothesis h from H for training data T : ˆ h = argmin h ∈H 1 q X e ∈E L ( h ( e ) , y e ) + λ k h k 2 H (2) with L a given loss function, k · k 2 H the traditional quadratic regularizer on the RKHS and λ > 0 a regularization parameter. According to the representer theorem [47], any minimizer h ∈ H of (2) admits a dual represen tation of the follo wing form: h ( e ) = w T Φ( e ) = X e ∈E a e K Φ ( e, e ) , (3) with a e ∈ R dual parameters, K Φ the k ernel function associated with the RKHS and Φ the feature mapping corresp onding to K Φ and w = X e ∈E a e Φ( e ) . W e will alternate sev eral times b et ween the primal and dual representation for h in the remainder of this article. The primal representation as defined in (2) and its dual equiv alent (3) yield an RKHS defined on edges in the graph. In addition, we will establish an RKHS defined on no des, as every edge consists of a couple of no des. Giv en an input space V and a kernel K : V × V → R , the RKHS asso ciated with K can b e considered as the completion of ( f ∈ R V f ( v ) = m X i =1 β i K ( v , v i ) ) , 6 in the norm k f k K = s X i,j β i β j K ( v i , v j ) , where β i ∈ R , m ∈ N , v i ∈ V . 2.2 Learning arbitrary relations As men tioned in the introduction, both crisp and graded relations can be han- dled by our framework. T o make a sub division b etw een different cases, a loss function needs to b e sp ecified. F or crisp relations, one can typically use the hinge loss, whic h is giv en b y: L ( h ( e ) , y ) = [1 − y h ( e )] + , with [ · ] + the positive part of the argumen t. Alternatively , one can opt to opti- mize a probabilistic loss function like the logistic loss: L ( h ( e ) , y ) = ln(1 + exp( − y h ( e ))) . Con versely , if in a given application the observed relations are graded instead of crisp, other loss functions hav e to b e considered. Hence, w e will run exp erimen ts with a least-squares loss function: L ( h ( e ) , y ) = ( y e − h ( e )) 2 , (4) resulting in a regress ion t yp e of learning setting. Alternativ ely , one could prefer to optimize a more robust regression loss like the -insensitive loss, in case outliers are expected in the training dataset. So far, our framew ork do es not differ from standard classification and regres- sion algorithms. How ever, the sp ecification of a more precise mo del structure for (2) offers a couple of new challenges. In the most general case, when no further restrictions on the underlying relation can b e sp ecified, the following Kronec ker product feature mapping is prop osed to express pairwise interactions b et ween features of nodes: Φ( e ) = Φ( v , v 0 ) = φ ( v ) ⊗ φ ( v 0 ) , where φ represen ts the feature mapping for individual no des. A formal definition of the Kronec k er product can b e found in the appendix. As first shown in [3], the Kronec ker pro duct pairwise feature mapping yields the Kroneck er pro duct edge k ernel (a.k.a. the tensor pro duct pairwise k ernel) in the dual represen tation: K Φ ⊗ ( e, e ) = K Φ ⊗ ( v , v 0 , v , v 0 ) = K φ ( v , v ) K φ ( v 0 , v 0 ) , (5) with K φ the kernel corresp onding to φ . This section aims to formally pro ve that the Kronec k er product edge k ernel is the b est k ernel one can choose, when no further domain kno wledge is pro vided 7 ab out the underlying relation that generates the data. W e claim that with an appropriate choice for K φ , suc h as the Gaussian RBF kernel, the kernel K Φ generates a class H of universally approximating functions for learning any t yp e of relation. Armed with the definition of universalit y for kernels and the Stone-W eierstraß theorem [53], we arrive at the following theorem concerning the Kroneck er product pairwise k ernels: Theorem 2.1. L et us assume that the sp ac e of no des V is a c omp act metric sp ac e. If a c ontinuous kernel K φ is universal on V , then K Φ ⊗ defines a universal kernel on E . The pro of can be found in the app endix. W e would lik e to emphasize that one cannot conclude from the theorem that the Kronec ker pro duct pairwise k er- nel is the best kernel to use in all possible situations. The theorem only shows that the Kroneck er product pairwise k ernel mak es a reasonably go o d c hoice, if no further domain knowledge ab out the underlying relation is kno wn. Namely , the theorem sa ys that given a suitable sample of data, the RKHS of the kernel con tains functions that are arbitrarily close to any contin uous relation in the uniform norm. Ho wev er, the theorem do es not say anything ab out how likely it is to hav e, as a training set, such a data sample that can represent the ap- pro ximating function. F urther, the theorem only concerns graded relations that are contin uous and therefore crisp relations and graded, discontin uous relations require more detailed considerations. Other kernel functions might of course outp erform the Kroneck er pro duct pairwise kernel in applications where domain kno wledge can b e incorporated in the kernel function. In the following section w e discuss recipro cit y , symmetry and transitivity as three relational prop erties that can b e represented by means of more sp ecific k ernel functions. As a side note, we also in tro duce the Cartesian pairwise kernel, which is formally defined as follows K Φ C ( v , v 0 , v , v 0 ) = K φ ( v 0 , v 0 )[ v = v ] + K φ ( v , v )[ v 0 = v 0 ] , with [ . ] the indicator function, returning one when b oth elemen ts are iden tical and zero otherwise. This kernel was recen tly proposed by [31] as an alternativ e to the Kronec ker pro duct pairwise k ernel. By construction, the Cartesian pair- wise kernel has imp ortant limitations, since it cannot generalize to couples of no des for whic h b oth nodes did not app ear in the training dataset. 3 Sp ecial relations Th us, if no further information is a v ailable ab out the relation that underlies the data, one should definitely use the Kroneck er pro duct edge kernel. In this most general case, we allo w that for an y pair of nodes in the graph several edges can exist, in which an edge in one direction does not necessarily impose constraints on the edge in the opp osite direction. Multiple edges in the same direction can connect tw o nodes, leading to a multi-graph as in Figure 1, where tw o different 8 edges in the same direction connect no des D and E . This construction is re- quired to allo w rep eated measuremen ts. How ev er, tw o important subclasses of relations deserv e further attention: recipro cal relations and symmetric relations. 3.1 Recipro cal relations This subsection briefly summarizes our previous w ork on learning recipro cal relations [43]. Let us start with a definition of this t yp e of relation. Definition 3.1. A binary r elation Q : V 2 → [0 , 1] is c al le d a r e cipr o c al r elation if for al l ( v , v 0 ) ∈ V 2 it holds that Q ( v, v 0 ) = 1 − Q ( v 0 , v ) . Definition 3.2. A binary r elation h : V 2 → R is c al le d an antisymmetric r elation if for al l ( v , v 0 ) ∈ V 2 it holds that h ( v, v 0 ) = − h ( v 0 , v ) . F or recipro cal and antisymmetric relations, every edge e = ( v , v 0 ) in a multi- graph lik e Figure 1 induces an unobserved invisible edge e R = ( v 0 , v ) with appropriate weigh t in the opp osite direction. The transformation op erator ∇ transforms an antisymmetric relation in to a recipro cal relation. Applications of recipro cal relations arise here in domains such as preference learning, game theory and bioinformatics for representing preference relations, choice probabil- ities, winning probabilities, gene regulation, etc. The weigh t on the edge defines the real direction of such an edge. If the weigh t on the edge e = ( v , v 0 ) is higher than 0.5, then the direction is from v to v 0 , but when the weigh t is lo wer than 0.5, then the direction should b e in terpreted as inv erted, for example, the edges from A to C in Figures 1 (a) and (e) should b e interpreted as edges starting from A instead of C . If the relation is 3-v alued as Q : V 2 → { 0 , 1 / 2 , 1 } , then w e end up with a three-class ordinal regression setting instead of an ordinary regression setting. In terestingly , recipro city can be easily incorp orated in our framew ork. Prop osition 3.3. L et Ψ b e a fe atur e mapping on V 2 and let h b e a hyp othesis define d by (2), then the r elation Q of typ e (1) is r e cipr o c al if Φ is given by Φ R ( e ) = Φ R ( v , v 0 ) = Ψ( v , v 0 ) − Ψ( v 0 , v ) . The pro of is immediate. In addition, one can easily show that recipro cit y as domain kno wledge can b e enforced in the dual form ulation. Let us in the least restrictiv e form now consider the Kroneck er pro duct for Ψ, then one obtains for Φ R the kernel K Φ ⊗ R giv en b y K Φ ⊗ R ( e, e ) = 2 K φ ( v , v ) K φ ( v 0 , v 0 ) − K φ ( v , v 0 ) K φ ( v 0 , v ) . (6) The follo wing theorem shows that this kernel can represent any type of recipro cal relation. Theorem 3.4. L et R ( V 2 ) = { t | t ∈ C ( V 2 ) , t ( v , v 0 ) = − t ( v 0 , v ) } 9 b e the sp ac e of al l c ontinuous antisymmetric r elations fr om V 2 to R . If K φ on V is universal, then for every function t ∈ R ( V 2 ) and every > 0 , ther e exists a function h in the RKHS induc e d by the kernel K Φ ⊗ R define d in (6), such that max ( v ,v 0 ) ∈V 2 {| t ( v , v 0 ) − h ( v , v 0 ) |} ≤ . (7) The pro of can b e found in the appendix. 3.2 Symmetric relations Symmetric relations form another imp ortan t sub class of relations in our frame- w ork. As a sp ecific type of symmetric relations, similarity relations constitute the underlying relation in many application domains where relations b etw een ob jects need to b e learned. Symmetric relations are formally defined as follows. Definition 3.5. A binary r elation Q : V 2 → [0 , 1] is c al le d a symmetric r elation if for al l ( v , v 0 ) ∈ V 2 it holds that Q ( v, v 0 ) = Q ( v 0 , v ) . Definition 3.6. A binary r elation h : V 2 → R is c al le d a symmetric r elation if for al l ( v , v 0 ) ∈ V 2 it holds that h ( v, v 0 ) = h ( v 0 , v ) . Note that ∇ preserves symmetry . F or symmetric relations, edges in multi- graphs like Figure 1 b ecome undirected. Applications arise in many domains and metric learning or learning similarity measures can b e seen as sp ecial cases. If the relation is 2-v alued as Q : V 2 → { 0 , 1 } , then we end up with a classification setting instead of a regression setting. Just lik e recipro cal relations, it turns out that symmetry can b e easily in- corp orated in our framework. Prop osition 3.7. L et Ψ b e a fe atur e mapping on V 2 and let h b e a hyp othesis define d by (2), then the r elation Q of typ e (1) is symmetric if Φ is given by Φ S ( e ) = Φ S ( v , v 0 ) = Ψ( v , v 0 ) + Ψ( v 0 , v ) . In addition, b y using mathematical properties of the Kronec ker product, one obtains in the dual form ulation an edge kernel that looks very similar to the one deriv ed for recipro cal relations. Let us again consider the Kroneck er pro duct for Ψ, then one obtains for Φ S the kernel K Φ ⊗ S giv en b y K Φ ⊗ S ( e, e ) = 2 K φ ( v , v ) K φ ( v 0 , v 0 ) + K φ ( v , v 0 ) K φ ( v 0 , v ) . Th us, the substraction of k ernels in the reciprocal case b ecomes an addition of k ernels in the symmetric case. The ab ov e k ernel has b een used for predicting protein-protein interactions in bioinformatics [3] and it has b een theoretically analyzed in [24]. More specifically , for some metho ds one has sho wn in the latter pap er that enforcing symmetry in the k ernel function yields identical results as adding every edge twice to the dataset, by taking each of the t wo no des once as first element of the edge. Unlik e many existing kernel-based metho ds for 10 pairwise data, the mo dels obtained with these kernels are able to represent an y recipro cal or symmetric relation resp ectively , without imp osing additional transitivit y properties of the relations. W e also remark that for symmetry as well, one can pro v e that the Kroneck er pro duct edge kernel yields a mo del that is flexible enough to represent an y type of underlying relation. Theorem 3.8. L et S ( V 2 ) = { t | t ∈ C ( V 2 ) , t ( v , v 0 ) = t ( v 0 , v ) } b e the sp ac e of al l c ontinuous symmetric r elations fr om V 2 to R . If K φ on V is universal, then for every function t ∈ S ( V 2 ) and every > 0 , ther e exists a function h in the RKHS (2) induc e d by the kernel (6), such that max ( v ,v 0 ) ∈V 2 {| t ( v , v 0 ) − h ( v , v 0 ) |} ≤ . The pro of is analogous to that of Theorem 3.4 (see app endix). As a side note, w e remark that a symm etric and recipro cal version of the Cartesian kernel can be in tro duced as w ell. 4 Relationships with fuzzy set theory The previous section rev ealed that sp ecific Kroneck er product edge k ernels can b e constructed for modeling recipro cal and symmetric relations, without requir- ing any further background ab out these relations. In this section we demonstrate that the Kroneck er pro duct edge kernels K Φ ⊗ , K Φ ⊗ R and K Φ ⊗ S are particularly useful for mo deling intransitiv e relations. Intransitiv e relations o ccur in a lot of real-w orld scenarios, like game pla ying [14, 19], comp etition b et ween bacte- ria [8, 30, 32, 33, 40, 44] and fungi [5], mating c hoice of lizards [49] and fo od choice of birds [63], to name just a few. In an informal w ay , Figure 1 sho ws with the help of examples what transitivity means for symmetric and reciprocal relations that are crisp and graded. Despite the o ccurrence of intransitiv e relations in many domains, one has to admit that most applications are still c haracterized by relations that fulfill relativ ely strong transitivity requirements. F or example, in decision making, preference modeling and so cial c hoice theory , one can argue that recipro cal re- lations like choice probabilities and preference judgmen ts should satisfy certain transitivit y prop erties, if they represent rational h uman decisions made after w ell-reasoned comparisons on ob jects [18, 36, 59]. F or symmetric relations as w ell, transitivity pla ys an important role [22, 29], when mo deling similarit y re- lations, metrics, k ernels, etc. It is for this reason that transitivit y prop erties ha v e b een studied extensiv ely in fuzzy set theory and related fields. F or recipro cal relations, one traditionally uses the notion of stochastic transitivity [36]. 11 Definition 4.1. L et g b e an incr e asing [1 / 2 , 1] 2 → [0 , 1] mapping. A r e cipr o c al r elation Q : V 2 → [0 , 1] is c al le d g -sto chastic tr ansitive if for any ( v 1 , v 2 , v 3 ) ∈ V 3 Q ( v 1 , v 2 ) ≥ 1 / 2 ∧ Q ( v 2 , v 3 ) ≥ 1 / 2 ⇒ Q ( v 1 , v 3 ) ≥ g ( Q ( v 1 , v 2 ) , Q ( v 2 , v 3 )) . Imp ortan t special cases are weak sto chastic transitivity when g ( a, b ) = 1 / 2, mo derate stochastic transitivity when g ( a, b ) = min( a, b ) and strong sto chastic transitivit y when g ( a, b ) = max( a, b ). Alternativ e (and more general) frame- w orks are FG-transitivit y [56] and cycle transitivity [9, 10]. F or graded symmet- ric relations, the notion of T -transitivity has b een put forward [12, 39]. Definition 4.2. A symmetric r elation Q : V 2 → [0 , 1] is c al le d T -tr ansitive with T a t-norm if for any ( v 1 , v 2 , v 3 ) ∈ V 3 T ( Q ( v 1 , v 2 ) , Q ( v 2 , v 3 )) ≤ Q ( v 1 , v 3 ) . (8) Three imp ortan t t-norms are the minimum t-norm T M ( a, b ) = min( a, b ), the pro duct t-norm T P ( a, b ) = ab and the Luk asiewicz t-norm T L ( a, b ) = max( a + b − 1 , 0). In addition, sev eral authors ha ve shown that v arious forms of transitivity give rise to utility represen table or numerically representable relations, also called fuzzy weak orders – see e.g. [4, 6, 20, 34, 36]. W e will use the term ranking represen tability to establish a link with mac hine learning. W e give a slightly sp ecific definition that unifies recipro cal and symmetric relations. Definition 4.3. A r e cipr o c al or symmetric r elation Q : V 2 → [0 , 1] is c al le d r anking r epr esentable if ther e exists a r anking function f : V → R such that for al l ( v , v 0 ) ∈ V 2 it r esp e ctively holds that 1. Q ( v , v 0 ) = ∇ ( f ( v ) − f ( v 0 )) (r e cipr o c al c ase) ; 2. Q ( v , v 0 ) = ∇ ( f ( v ) + f ( v 0 )) (symmetric c ase) . The main idea is that ranking represen table relations can b e constructed from a utility function f . Ranking representable reciprocal relations corresp ond to directed acyclic graphs, and a unique ranking of the no des in such graphs can b e obtained with top ological sorting algorithms. The ranking representable recipro cal relations of Figures 1 (a) and (e) for example yield the global ranking A B C . In terestingly , ranking represen tability of recipro cal relations and symmetric relations can b e easily achiev ed in our framework by simplifying the join t feature mapping Ψ. Let Ψ( v, v 0 ) = φ ( v ) such that K Φ simplifies to K Φ f R ( e, e ) = K φ ( v , v ) + K φ ( v 0 , v 0 ) − K φ ( v , v 0 ) − K φ ( v 0 , v ) , K Φ f S ( e, e ) = K φ ( v , v ) + K φ ( v 0 , v 0 ) + K φ ( v , v 0 ) + K φ ( v 0 , v ) , when Φ( v, v 0 ) = Φ R ( v , v 0 ) or Φ( v , v 0 ) = Φ S ( v , v 0 ), respectively , then the follow- ing prop osition holds. Prop osition 4.4. The r elation Q : V 2 → [0 , 1] given by (1) and h define d by (2) with K Φ = K Φ f R (r esp e ctively K Φ = K Φ f S ) is a r anking r epr esentable r e cipr o c al (r esp e ctively symmetric) r elation. 12 The pro of directly follows from the fact that for this sp ecific kernel, h ( v , v 0 ) can b e resp ectiv ely written as f ( v ) − f ( v 0 ) and f ( v ) + f ( v 0 ). The kernel K Φ f R has been initially introduced in [23] for ordinal regression and during the last decade it has b een extensively used as a main building blo ck in many kernel- based ranking algorithms. Since ranking representabilit y of reciprocal relations implies strong stochastic transitivity of reciprocal relations, K Φ f R can represent this type of domain kno wledge. The notion of ranking representabilit y is p o werful for recipro cal relations, b ecause the ma jority of recipro cal relations satisfy this prop erty , but for sym- metric relations it has a rather limited applicability . Ranking representabilit y as defined ab o ve cannot represent relations that originate from an underlying metric or similarity measure. F or suc h relations, one needs another connection with its roots in Euclidean metric spaces [22]. Definition 4.5. A symmetric r elation Q : V 2 → [0 , 1] is c al le d Euclide an r ep- r esentable if ther e exists a r anking function f : V → R such that for al l p airs ( v , v 0 ) ∈ V 2 it holds that Q ( v , v 0 ) = ∇ (( f ( v ) − f ( v 0 )) T ( f ( v ) − f ( v 0 ))) , (9) with ~ a T the tr ansp ose of a ve ctor ~ a . Euclidean represen tability as defined here basically can b e seen as Euclidean em b edding or Multidimensional Scaling in a z -dimensional space [69]. In its most restrictiv e form, when z = 1, it implies that the symmetric relation can b e constructed from the Euclidean distance in a one-dimensional space. When suc h a one-dimensional embedding can b e realized, one global ranking of the ob jects can b e found, similar to recipro cal relations. Nevertheless, although mo dels of type (9) with z = 1 are sometimes used in graph inference [61] and semi-sup ervised learning [2], w e b elieve that situations where symmetric rela- tions b ecome Euclidean repres en table in a one-dimensional space o ccur very rarely , in contrast to recipro cal relations. The extension to z > 1 on the other hand do es not guarantee the existence of one global ranking, then Euclidean represen tability still enforces some interesting prop erties, b ecause it guaran tees that the relation Q is constructed from a Euclidean metric space with a dimen- sion upper b ounded b y the n umber of no des p . Moreo ver, this t yp e of domain kno wledge ab out relations can be incorp orated in our framew ork. T o this end, let Φ( v , v 0 ) = Φ S ( v , v 0 ) and let Ψ( v , v 0 ) = φ ( v ) ⊗ ( φ ( v ) − φ ( v 0 )) such that K Φ b ecomes K Φ MLPK ( e, e ) = ( K Φ f R ( e, e )) 2 = K φ ( v , v ) + K φ ( v 0 , v 0 ) − K φ ( v , v 0 ) − K φ ( v 0 , v ) 2 . This kernel has b een called the metric learning pairwise kernel by [60]. As a consequence, the vector of parameters w can b e rewritten as an r × r matrix W where W ij corresp onds to the parameter asso ciated with ( φ i ( v ) − φ i ( v 0 ))( φ j ( v ) − φ j ( v 0 )) such that W ij = W j i . 13 Prop osition 4.6. If W is p ositive semi-definite, then the symmetric r elation Q : V 2 → [0 , 1] given by (1) with h define d by (2) and K Φ = K Φ MLPK is an Euclide an r epr esentable symmetric r elation. See the appendix for the proof. Although the model established by K Φ MLPK do es not result in a global ranking, this mo del strongly differs from the one es- tablished with K Φ ⊗ S , since K Φ MLPK can only represent symmetric relations that exhibit transitivit y prop erties. Therefore, one should definitely use K Φ MLPK when, for example, the underlying relation corresp onds to a metric or a simi- larit y relation, while the kernel K Φ ⊗ S should b e preferably used for symmetric relations for whic h no further domain kno wledge can b e assumed beforehand. 5 Relationships with other mac hine learning al- gorithms As explained in Section 2, the transition from a standard classification or regres- sion setting to the setting of learning graded relations should b e rather found in the sp ecification of joint feature mappings ov er couples of ob jects, thereb y naturally leading to the in tro duction of specific kernels. Any existing mac hine learning algorithm for classification or regression can in principle b e adopted if join t feature mappings are constructed explicitly . Since kernel metho ds av oid this explicit construction, they can often outperform non-k ernelized algorithms in terms of computational efficiency [47]. As a second main adv antage, ker- nel metho ds allow to express similarity scores for structured ob jects, such as strings, graphs and trees and text [48]. In our setting of learning graded rela- tions, this implies that one should plug these domain-sp ecific kernel functions in to (5) or the other pairwise k ernels that are discussed in this paper. Such a scenario is in fact common practice in some applications of Kronec ker product pairwise kernels, suc h as predicting protein-ligand compatibility in bioinformat- ics [28]. String k ernels or graph kernels can b e defined on v arious types of biological structures [62] and Kronec ker pro duct pairwise kernels then combine these ob ject-based kernels into relation-based kernels (th us, no de kernels v ersus edge kernels). The edge kernels we discussed in this article can b e utilized within a wide v ariety of kernel metho ds. Since we fo cus on learning graded relations, one naturally arriv es at a regression setting. In the following section, we run some exp erimen ts with regularized least-squares metho ds, which optimize (4) using a h yp othesis space induced by kernels. The solution is found b y simply solving a system of linear equations [41, 46, 48, 54]. Apart from kernel metho ds, we briefly men tion a n umber of other algorithms that are somewhat connected, ev en though they pro vide solutions for differen t learning problems. If pairwise relations are considered b etw een ob jects of tw o differen t domains, one arrives at a learning setting that is referred to as predict- ing lab els for dy adic data [37]. Examples of suc h settings include link prediction in bipartite graphs and movie recommendation for users. As such, one could 14 also argue that specific link prediction and matrix factorization metho ds could b e applied in our setting as well, see e.g. [35, 38, 52]. How ev er, these metho ds ha ve b een primarily designed for exploiting relationships in the output space, whereas feature represen tations of the ob jects are often not observed or simply irrelev ant. Moreo ver, similar to the Cartesian pairwise kernel, these metho ds cannot b e applied in situations where predictions need to b e made for t wo new no des that w ere not presen t in the training dataset. Another connection can b e observed with multiv ariate regression and struc- tured output prediction metho ds. Such methods hav e b een o ccasionally applied in settings where relations had to b e learned [21]. Also recall that structured output prediction metho ds use Kroneck er pro duct pairwise kernels on a regular basis to define joint feature representations of inputs and outputs [58, 65]. In addition to predictive models for dyadic data, one can also detect connec- tions with certain information retriev al and pattern matching metho ds. How- ev er, these metho ds predominantly use similarit y as underlying relation, often in a purely intuitiv e manner, as a nearest neighbor type of learning, so they can b e considered as muc h more restrictive. Consider the example of protein ranking [64] or algorithms like query by do cument [68]. These metho ds simply lo ok for rankings where the most similar ob jects w.r.t. the query ob ject app ear on top, contrary to our approach, which should b e considered as muc h more general, since w e learn rankings from any type of binary relation. Nonetheless, similarit y relations will of course still o ccupy a prominen t place in our framework as an important sp ecial case. 6 Exp erimen ts In the experiments, we test the ability of the pairwise k ernels to mo del different t yp es of relations, and the effect of enforcing prior knowledge ab out the prop er- ties of the learned relations. T o this end, w e train the regularized least-squares (RLS) algorithm to regress the relation v alues [41]. W e p erform exp eriments on b oth symmetric and recipro cal relations, considering b oth synthetic and real- w orld data. In addition to the standard, symmetric and recipro cal Kroneck er pro duct pairwise kernels, we also consider the Cartesian kernel, the symmetric Cartesian kernel and the metric learning pairwise kernel. 6.1 Syn thetic data: learning similarit y measures Exp erimen ts on synthetic data were conducted to illustrate the b ehavior of the differen t kernels in terms of the transitivity of the relation to b e learned. A parametric family of cardinalit y-based similarity measures for sets was consid- ered as the relation of in terest [11]. F or tw o sets A and B , let us define the 15 Abbreviation Metho d MPRED Predicting the mean K Φ ⊗ Kronec ker Pro duct Pairwise Kernel K Φ ⊗ S Symmetric Kroneck er Product P airwise Kernel K Φ ⊗ R Recipro cal Kroneck er Pro duct P airwise Kernel K Φ MLPK Metric Learning P airwise Kernel K Φ C Cartesian Pro duct P airwise Kernel K Φ C S Symmetric Cartesian P airwise Kernel T able 1: Metho ds considered in the exp eriments follo wing cardinalities: ∆ A,B = | A \ B | + | B \ A | , δ A,B = | A ∩ B | , ν A,B = | ( A ∪ B ) c | , then this family of similarit y measures for sets can b e expressed as: S ( A, B ) = t ∆ A,B + uδ A,B + v ν A,B t 0 ∆ A,B + uδ A,B + v ν A,B , (10) with t , t 0 , u and v four parameters. This family of similarit y measures includes man y well-kno wn similarity measures for sets, suc h as the Jaccard co efficient [27], the simple matching co efficien t [50] and the Dice co efficient [16]. Three members of this family are inv estigated in our exp erimen ts. The first one is the Jaccard co efficien t, corresp onding to ( t, t 0 , u, v ) = (0 , 1 , 1 , 0). The Jaccard coefficient is kno wn to b e T L -transitiv e. The second mem b er that w e inv estigate w as originally prop osed by [51]. It corresp onds to ( t, t 0 , u, v ) = (0 , 1 , 2 , 2) and it do es not satisfy T L -transitivit y , which is considered as a v ery w eak transitivit y condition. Conv e rsely , the third mem b er that w e analyse has rather strong transitivity properties. It is giv en by ( t, t 0 , u, v ) = (1 , 2 , 1 , 1) and it satisfies T P -transitivit y . F eatures and lab els for all three members are generated as follows. First we generate 20-dimensional feature vectors consisting of statistically indep enden t features that follow a Bernoulli distribution with π = 0 . 5. Subsequen tly , the ab o ve-men tioned similarity measures are computed for each pair of features, resulting in a deterministic mapping b etw een features and lab els. Finally , to in tro duce some noise in the problem setting, 10% of the features are swapped in a last step from a zero to a one or vice versa. Figure 2 illustrates the distribution of the obtained similarity scores for a 100 × 100 matrix. In the exp erimen ts, we alw ays generate three data sets, a training set for building the mo del, a v alidation set for hyperparameter selection, and a test set for performance ev aluation. W e perform tw o kinds of exp eriments. In the first exp erimen t, we hav e a single set of 100 no des. 500 no de pairs are randomly sampled without replacement to the training, v alidation and test sets. Th us, 16 0 0.2 0.4 0.6 0.8 1 V alue 0 1500 Color Key and Histogram Count 0 0.2 0.4 0.6 0.8 1 V alue 0 1000 Color Key and Histogram Count 0.5 0.6 0.7 0.8 0.9 1 V alue 0 1500 Color Key and Histogram Count Figure 2: The distribution of similarity scores obtained on a 100 by 100 matrix for all three mem b ers of the family . F rom top to b ottom: ( t, t 0 , u, v ) = (0 , 1 , 2 , 2), ( t, t 0 , u, v ) = (0 , 1 , 1 , 0) and ( t, t 0 , u, v ) = (1 , 2 , 1 , 1). 17 Setting ( t, t 0 , u, v ) MPRED K Φ ⊗ K Φ ⊗ S K Φ MLPK K Φ C K Φ C S In transitive (0,1,2,2) 0.01038 0.00908 0.00773 0.00768 0.00989 0.00924 T L -transitiv e (0,1,1,0) 0.01514 0.00962 0.00781 0.00805 0.01155 0.00941 T P -transitiv e (1,2,1,1) 0.00259 0.00227 0.00192 0.00188 0.00248 0.00231 T able 2: The predictive p erformance on test data for the different types of relations and k ernels. In this experiment, the task is to predict relation v alues for unknown edges in a partially observ ed relational graph. The p erformance measure is the mean squared error. Setting ( t, t 0 , u, v ) MPRED K Φ ⊗ K Φ ⊗ S K Φ MLPK In transitive (0,1,2,2) 0.01032 0.00995 0.00936 0.00971 T L -transitiv e (0,1,1,0) 0.01515 0.01236 0.01166 0.01453 T P -transitiv e (1,2,1,1) 0.00259 0.00251 0.00236 0.00242 T able 3: The predictive p erformance on test data for the different types of relations and k ernels. In this experiment, the task is to predict relation v alues for a completely new set of nodes. The p erformance measure is the mean squared error. the learning problem here is, giv en a subset of the relation v alues for a fixed set of no des, to learn to predict missing relation v alues. This setup allo ws us to test also the Cartesian k ernel, which is unable to generalize to completely new pairs of no des. In the second exp eriment, we generate three separate sets of 100 no des for the training, v alidation and test sets, and sample from eac h of these 500 edges. This exp eriment allows us to test the generalization capability of the learned mo dels with resp ect to new couples of no des (i.e., previously unseen no des). Here, the Cartesian k ernel is not applicable, and thus not included in the exp eriment. The exp eriments are rep eated 100 times, the presented results are means o ver the rep etitions. F or statistical significance testing, we use the paired Wilcoxon-signed-rank test with significance lev el 0 . 05. All pairs of kernels are compared, and the conserv ative Bonferroni correction is applied to take in to accoun t multiple hypothesis testing, meaning that the required p-v alue is divided b y the num b er of comparisons. The Gaussian RBF kernel w as considered at the no de level. The used p erformance measure is the mean squared error (MSE). F or training RLS we solve the corresp onding system of linear equations using matrix factorization, b y considering an explicit regularization parameter. A grid searc h is conducted to select the width of the Gaussian RBF kernel and the regularization parameter of the RLS algorithm. Both parameters are selected from the range 2 − 20 , . . . , 2 1 . The results for the exp eriments are presented in T ables 2 and 3. In b oth cases all the k ernels outp erform the mean as prediction, meaning that they are able to mo del the underlying relations. F or all the learning methods, the error is lo wer in the first exp eriment than in the second one, demonstrating that it is easier to predict relations betw een kno wn no des, than to generalize to a new set of nodes. 18 Enforcing symmetry is clearly b eneficial, as the symmetric Kroneck er pro duct pairwise kernel alwa ys outp erforms the standard Kroneck er pro duct pairwise k ernel, and the symmetric Cartesian k ernel alwa ys outp erforms the standard one. Comparing the Kroneck er and Cartesian k ernels, the Kroneck er one leads to clearly low er error rates. With the exception of the T L -transitiv e case in the second exp eriment, MLPK turns out to b e highly successful in mo deling the relations, probably due to enforcing symmetry of the learned relation. In the first exp erimen t, all the differences are statistically significant, apart from the difference b et ween the symmetric Kroneck er pro duct pairwise k ernel and MLPK for the in transitive case. In the second exp eriment, all the differences are statistically significant. W e can conclude that including prior kno wledge ab out symmetry really helps b o osting the predictiv e p erformance in this problem. 6.2 Learning the similarity b et ween do cuments In the second exp erimen t, we compare the ordinary and symmetric Kro- nec ker pairwise kernels on a real-w orld data set based on newsgroups do cu- men ts 2 . The data is sampled from 4 newsgroups: rec.autos, rec.sp ort.baseball, comp.sys.ibm.p c.hardw are and comp.windows.x. The aim is to learn to pre- dict the similarity of tw o do cumen ts as measured by the num b er of common w ords they share. The no de features correspond to the n umber of o ccurrences of a word in a do cument. Unlik e the previous exp erimen t, the feature repre- sen tation is very high-dimensional and sparse, as there are more than 50000 p ossible features, the ma jorit y of which are zero for any given do cumen t. First, w e sample separate training, v alidation and test sets each consisting of 1000 no des. Second, we sample edges connecting the nodes in the training and v al- idation set using exp onentially growing sample sizes to measure the effect of sample size on the differences b et ween the kernels. The sample size grid is [100 , 200 , 400 , . . . , 102400]. Again, w e sample only edges with differen t starting and end no des. When computing the test p erformance, w e consider all the edges in the test set, except those starting and ending at the same no de. The linear k ernel is used at the no de level. W e train the RLS algorithm using conjugate gradien t optimization with early stopping [42], optimization is terminated once the MSE on the v alidation set has failed to decrease for 10 consecutive iterations. Since we rely on the regularizing effect of early stopping, a separate regulariza- tion parameter is not needed in this expe rimen t. W e do not include other types of kernels than the Kroneck er pro duct pairwise kernels in the exp eriment. T o the b est of our kno wledge, no algorithms that scale to the considered exp eriment size exist for the other kernel functions. Hence, this exp erimen t mainly aims to illustrate the computational adv an tages of the Kroneck er pro duct pairwise k ernel. The mean as prediction ac hieves an MSE around 145 on this dataset. The results are presented in Figure 3. Even for 100 pairs the errors are for b oth kernels muc h lo wer than the results for the mean as prediction, showing that the RLS algorithm succeeds with b oth kernels in learning the underlying 2 Av ailable at: http://people.csail.mit.edu/jrennie/20Newsgroups/ 19 1 0 2 1 0 3 1 0 4 1 0 5 #edges 0 10 20 30 40 50 MSE K Φ ⊗ K Φ ⊗ S Figure 3: The comparison of the ordinary Kronec ker pro duct pairwise k ernel K Φ ⊗ and the symmetric Kronec k er product pairwise k ernel K Φ ⊗ S on the Newsgroups dataset. The mean squared error is sho wn as a function of the training set size. relation. Increasing the training set size leads to a decrease in test error. Using the prior knowledge ab out the symmetry of the learned relation is clearly helpful. The symmetric kernel achiev es for all sample sizes a low er error than the ordinary Kronec ker pro duct pairwise kernel and the largest differences are observed for the smallest sample sizes. F or 100 training instances, the error is almost halved b y enforcing symmetry . 6.3 Comp etition b et ween sp ecies In this final exp erimen t we ev aluate the p erformance of the ordinary and re- cipro cal Kronec ker pairwise kernels and the metric learning pairwise kernel on sim ulated data from an ecological mo del. The setup is based on the one de- scrib ed in [1]. This mo del pro vides an elegant explanation for the co existence of multiple sp ecies in the same habitat, a problem that has puzzled ecologists for decades [26]. Imagine n species sharing a habitat and struggling for their share of the re- sources. One sp ecies can dominate another sp ecies based on k so-called limiting factors. A limiting factor defines an attribute that can give a fitness adv antage, 20 for example in plants, such as the abilit y to photosynthesize, the ability to draw minerals from the soil, resistance to diseases, etc. Each sp ecies can score b etter or w orse on each of its k limiting factors. The degree to which one species can dominate a comp etitor is relativ e to the n um b er of limiting factors for which it is superior. All p ossible interactions can thus be represented in a tournamen t. In this framew ork relations are recipro cal and often intransitiv e. F or this simulation 400 sp ecies were simulated with 10 limiting factors. The v alue of each limiting factor is for each species dra wn from a random uniform distribution b etw een 0 and 1. Th us, any sp ecies v can b e represented by a v ector f of length k with the limiting factors as elemen ts. The probability that a sp ecies v dominates sp ecies v 0 can easily be calculated: Q ( v , v 0 ) = 1 k k X i =1 H ( f i − f 0 i ) , (11) where H ( x ) is the Heaviside step function. Of the 400 sp ecies, 200, 100 and 100 were used for generating training, v ali- dation and testing data. F or eac h subset, the complete tournament matrix was determined using (11). F rom those matrices 1200 interactions were sampled for training, 600 for mo del v alidation and 600 for testing. No combination of sp ecies was used more than once. Using the limiting factors as features, w e try to regress the probability that one species dominates another one using the ordi- nary and recipro cal Kroneck er pro duct pairwise kernels and the metric learning pairwise k ernel. Again, the Gaussian kernel is applied as the node k ernel. The v alidation set is used to determine the optimal regularization parameter and k ernel width parameter from the grids 2 − 20 , 2 − 19 . . . , 2 4 and 2 − 10 , 2 − 9 . . . , 2 1 . T o obtain statistically significan t results the setup is rep eated 100 times. T able 4: The predictive p erformance on test data for the different types of k ernels. The performance measure is the mean squared error. Kernel MPRED K Φ ⊗ K Φ ⊗ R K Φ MLPK MSE 0.02795 0.01082 0.01067 0.02877 The results are sho wn in T able 4. The Wilcoxon-signed-rank test with sig- nificance lev el 0.05 is used for significance testing, and a conserv ative Bonferroni correction is applied for multiple hypothesis testing. All differences are statisti- cally significant. The metric learning pairwise k ernel giv es rise to w orse predictions than the mean as prediction. This is not surprising, as the MLPK cannot learn reciprocal relations. The ordinary Kroneck er product pairwise kernel performs go o d and the recipro cal Kronec ker pro duct pairwise kernel p erforms even b etter. All the differences are statistically significant. The results show that using the information on the types of relations to b e learned can b o ost the accuracy of the predictions. 21 7 Conclusion A general kernel-based framework for learning v arious types of graded relations w as presented in this article. This framework extends existing approaches for learning relations, because it can handle crisp and graded relations. A Kroneck er pro duct feature mapping was prop osed for combining the features of pairs of ob jects that constitute a relation (edge lev el in a graph), and it was sho wn that this mapping leads to a class of universal approximators, if an appropriate k ernel is chosen on the ob ject lev el (no de lev el in a graph). In addition, we clarified that domain knowledge ab out the relation to b e learned can b e easily incorp orated in our framework, such as recipro city and symmetry prop erties. Exp erimental results on synthetic and real-w orld data clearly demonstrate that this domain knowledge really helps in improving the generalization p erformance. Moreov er, imp ortant links with recent dev elop- men ts in fuzzy set theory and decision theory can be established, b y looking at transitivit y properties of relations. Ac kno wledgmen ts W.W. is supp orted as a p ostdo c b y the Research F oundation of Flanders (FWO Vlaanderen) and T.P . by the Academ y of Finland (grant 134020). App endix 7.1 F ormal definitions Definition 7.1. The Kr one cker pr o duct of two matric es M and N is define d as M ⊗ N = M 1 , 1 N · · · M 1 ,n N . . . . . . . . . M m, 1 N · · · M m,n N , Definition 7.2 ( [53]) . A c ontinuous kernel K on a c omp act metric sp ac e V (i.e. V is close d and b ounde d) is c al le d universal if the RKHS induc e d by K is dense in C ( V ) , wher e C ( V ) is the sp ac e of al l c ontinuous functions f : V → R . That is, for every function f ∈ C ( V ) and every > 0 , ther e exists a set of input p oints { v i } m i =1 ∈ V and r e al numb ers { α i } m i =1 , with m ∈ N , such that max x ∈V ( f ( v ) − m X i =1 α i K ( v i , v ) ) ≤ . A c c or dingly, the hyp othesis sp ac e induc e d by the kernel K c an appr oximate any function in C ( V ) arbitr arily wel l, and henc e it has the universal appr oximating pr op erty. 22 The following result is in the literature kno wn as the Stone-W eierstraß the- orem (see e.g [45]): Theorem 7.3 (Stone-W eierstraß) . L et V b e a c omp act metric sp ac e and let C ( V ) b e the set of r e al-value d c ontinuous functions on V . If A ⊂ C ( V ) is a sub algebr a of C ( V ) , that is, ∀ f ( v ) , g ( v ) ∈ A , r ∈ R : f ( v ) + r g ( v ) ∈ A , f ( v ) g ( v ) ∈ A and A sep ar ates p oints in V , that is, ∀ v , v 0 ∈ V , v 6 = v 0 : ∃ g ∈ A : g ( v ) 6 = g ( v 0 ) , and A do es not vanish at any p oint in V , that is, ∀ v ∈ V : ∃ g ∈ A : g ( v ) 6 = 0 , then A is dense in C ( V ) . 7.2 Pro ofs Pr o of. ( Theorem 2.1 ) Let us define A ⊗ A = { t | t ( v , v 0 ) = g ( v ) u ( v 0 ) , g , u ∈ A} (12) for a compact metric space V and a set of functions A ⊂ C ( V ). W e observe that the RKHS of the kernel K Φ ⊗ can b e written as H ⊗ H , where H is the RKHS of the kernel K φ . Let > 0 and let t ∈ C ( V ) ⊗ C ( V ) b e an arbitrary function whic h can, according to (12), b e written as t ( v , v 0 ) = g ( v ) u ( v 0 ), where g , u ∈ C ( V ). By definition of the universalit y prop ert y , H is dense in C ( V ). Therefore, H contains functions g , u such that max v ∈V {| g ( v ) − g ( v ) |} ≤ , max v ∈V {| u ( v ) − u ( v ) |} ≤ , where is a constan t for whic h it holds that max v ,v 0 ∈V | g ( v ) | + | u ( v 0 ) | + 2 ≤ . Note that, according to the extreme v alue theorem, the maximum exists due to the compactness of V and the conti nuit y of the functions g and u . Now we ha ve max v ,v 0 ∈V {| t ( v , v 0 ) − g ( v ) u ( v 0 ) |} ≤ max v ,v 0 ∈V | t ( v , v 0 ) − g ( v ) u ( v 0 ) | + | g ( v ) | + | u ( v 0 ) | + 2 = max v ,v 0 ∈V | g ( v ) | + | u ( v 0 ) | + 2 ≤ , whic h confirms the densit y of H ⊗ H in C ( V ) ⊗ C ( V ). 23 According to Tyc honoff ’s theorem, V 2 is compact if V is compact. It is straigh tforward to see that C ( V ) ⊗ C ( V ) is a subalgebra of C ( V 2 ), it separates p oin ts in V 2 , it v anishes at no p oint of C ( V 2 ), and it is therefore dense in C ( V 2 ) due to Theorem 7.3. Consequently , H ⊗ H is also dense in C ( V 2 ), and K Φ ⊗ is a univ ersal k ernel on E . Pr o of. ( Theorem 3.4 ) Let > 0 and t ∈ R ( V 2 ) b e an arbitrary function. According to Theorem 2.1, the RKHS of the k ernel K Φ ⊗ defined in (5) is dense in C ( V 2 ). Therefore, w e can select a set of edges and real n umbers { α i } m i =1 , suc h that the function u ( v , v 0 ) = m X i =1 α i K φ ( v , v i ) K φ ( v 0 , v 0 i ) b elonging to the RKHS of the kernel (5) fulfills max ( v ,v 0 ) ∈V 2 {| t ( v , v 0 ) − 4 u ( v , v 0 ) |} ≤ 1 2 . (13) W e observ e that, because t ( v , v 0 ) = − t ( v 0 , v ), the function u also fulfills max ( v ,v 0 ) ∈V 2 {| t ( v , v 0 ) + 4 u ( v 0 , v ) |} ≤ 1 2 and hence max ( v ,v 0 ) ∈V 2 {| 4 u ( v , v 0 ) + 4 u ( v 0 , v ) |} ≤ . (14) Let γ ( v , v 0 ) = 2 u ( v , v 0 ) + 2 u ( v 0 , v ) . Due to (14), we hav e | γ ( v , v 0 ) | ≤ 1 2 , ∀ ( v , v 0 ) ∈ V 2 . (15) No w, let us consider the function h ( v , v 0 ) = m X i =1 α i 2 K φ ( v , v i ) K φ ( v 0 , v 0 i ) − K φ ( v 0 , v i ) K φ ( v , v 0 i ) , whic h is obtained from u by replacing kernel (5) with kernel (6). W e observe that h ( v , v 0 ) = 2 u ( v , v 0 ) − 2 u ( v 0 , v ) = 4 u ( v , v 0 ) − γ ( v , v 0 ) . (16) By combining (13), (15) and (16), w e observ e that the function h fulfills (7). 24 Pr o of. ( Prop osition 4.6 ) The model that we consider can b e written as: Q ( v , v 0 ) = ∇ ( φ ( v ) − φ ( v 0 )) T W ( φ ( v ) − φ ( v 0 )) . The connection with (9) then immediately follows by decomp osing W as W = U T U with U an arbitrary matrix. The specific case of z = 1 is obtained when U can be written as a single-ro w matrix. References [1] Stefano Allesina and Jonathan M. Levine. A comp etitive netw ork the- ory of species diversit y . Pr o c e e dings of the National A c ademy of Scienc es , 108:5638–5642, 2011. [2] M. Belkin, P . Niy ogi, and V. Sindh wani. Manifold regularization: a geomet- ric framework for learning from labeled and unlab eled examples. Journal of Machine L e arning R ese ar ch , 7:2399–2434, 2006. [3] A. Ben-Hur and W. Noble. Kernel metho ds for predicting protein-protein in teractions. Bioinformatics , 21 Suppl 1:38–46, 2005. [4] A. Billot. An existence theorem for fuzzy utility functions: A new elemen- tary pro of. F uzzy Sets and Systems , 74:271–276, 1995. [5] L. Bo ddy . Interspecific combativ e interactions b et ween woo d-decaying ba- sidiom ycetes. FEMS Micr obiolo gy Ec olo gy , 31:185–194, 2000. [6] U. Bodenhofer, B. De Baets, and J. F o dor. A comp endium of fuzzy w eak orders. F uzzy Sets and Systems , 158:811–829, 2007. [7] M. Bowling, J. F ¨ urnkranz, T. Graep el, and R. Musick. Machine learning and games. Machine L e arning , 63(3):211–215, 2006. [8] T. Cz´ ar´ an, R. Ho ekstra, and L. Pagie. Chemical warfare betw een microb es promotes bio diversit y . Pr o c e e dings of the National A c ademy of Scienc es , 99(2):786–790, 2002. [9] B. De Baets and H. De Meyer. T ransitivity frameworks for recipro cal relations: cycle-transitivit y versus F G -transitivity . F uzzy Sets and Systems , 152:249–270, 2005. [10] B. De Baets, H. De Mey er, B. De Sc huymer, and S. Jenei. Cyclic ev aluation of transitivity of recipro cal relations. So cial Choic e and Welfar e , 26:217– 238, 2006. [11] B. De Baets, H. De Mey er, and H. Naessens. A class of rational cardinalit y- based similarity measures. J. Comput. Appl. Math. , 132:51–69, 2001. [12] B. De Baets and R. Mesiar. Metrics and T -equalities. Journal of Mathe- matic al Analysis and Applic ations , 267:531–547, 2002. 25 [13] L. De Raedt. L o gic al and R elational L e arning . Springer, 2009. [14] B. De Sc huymer, H. De Mey er, B. De Baets, and S. Jenei. On the cycle- transitivit y of the dice mo del. The ory and De cision , 54:261–285, 2003. [15] S. Diaz, S. Mon tes, and B. De Baets. T ransitivity bounds in additive fuzzy preference structures. IEEE T r ansactions on F uzzy Systems , 15:275–286, 2007. [16] L. Dice. Measures of the amoun t of ecologic asso ciations betw een sp ecies. Ec olo gy , 26:297–302, 1945. [17] J.-P. Doignon, B. Monjardet, M. Roub ens, and Ph. Vinc ke. Biorder fam- ilies, v alued relations and preference mo delling. Journal of Mathematic al Psycholo gy , 30:435–480, 1986. [18] P . Fish burn. Nontransitiv e preferences in decision theory . Journal of Risk and Unc ertainty , 4:113–134, 1991. [19] L. Fisher. R o ck, Pap er, Scissors: Game The ory in Everyday Life . Basic Bo oks, 2008. [20] L. F ono and N. Andjiga. Utility function of fuzzy preferences on a countable set under max-*-transitivity . So cial Choic e and Welfar e , 28:667–683, 2007. [21] P . Geurts, N. T ouleimat, M. Dutreix, and F. d’Alch ´ e-Buc. Inferring bio- logical netw orks with output kernel trees. BMC Bioinformatics , 8(2):S4, 2007. [22] J. Gow er and P . Legendre. Metric and Euclidean properties of dissimilarity co efficien ts. Journal of Classific ation , 3:5–48, 1986. [23] R. Herbric h, T. Graepel, and K. Ob ermay er. Large margin rank boundaries for ordinal regression. In A. Smola, P . Bartlett, B. Sch¨ olkopf, and D. Sch u- urmans, editors, A dvanc es in L ar ge Mar gin Classifiers , pages 115–132. MIT Press, 2000. [24] M. Hue and J.-P . V ert. On learning with kernels for unordered pairs. In Pr o c e e dings of the 27th International Confer enc e on Machine L e arning, p.463-470, 2010 , 2010. [25] E. H ¨ ullermeier and J. F ¨ urnkranz. Pr efer enc e L e arning . Springer, 2010. [26] G. E. Hutc hinson. The paradox of the plankton. The A meric an Natur alist , 95(882):137–145, 1961. [27] P . Jaccard. Nouvelle recherc hes sur la distribution florale. Bul letin de la So ci´ et ´ ee V audoise de Scienc es Natur el les , 44:223–270, 1908. [28] L. Jacob and J.-P . V ert. Protein-ligand interaction prediction: an impro ved c hemogenomics approac h”, bioinformatics, 24(19):2149-2156, 2008. Bioin- formatics , 241:2149–2156, 2008. 26 [29] F. J¨ akel, B. Sch¨ olkopf, and F. Wichmann. Similarity , kernels, and the triangle inequalit y . Journal of Mathematic al Psycholo gy , 52(2):297–303, 2008. [30] G. K´ arolyi, Z. Neufeld, and I. Scheuring. Ro c k-scissors-pap er game in a c haotic flow: The effect of disp ersion on the cyclic competition of micro or- ganisms. Journal of The or etic al Biolo gy , 236(1):12–20, 2005. [31] H. Kashima, S. Oyama, Y. Y amanishi, and K. Tsuda. On pairwise kernels: An efficien t alternative and generalization analysis. In Thanaruk Theer- am unkong, Bo onserm Kijsirikul, Nick Cercone, and T u Bao Ho, editors, P AKDD , v olume 5476 of L e ctur e Notes in Computer Scienc e , pages 1030– 1037. Springer, 2009. [32] B. Kerr, M. Riley , M. F eldman, and B. Bohannan. Lo cal disp ersal promotes bio div ersity in a real-life game of rock pap er scissors. Natur e , 418:171–174, 2002. [33] B. Kirkup and M. Riley . Antibiotic-mediated antagonism leads to a bacte- rial game of ro ck-paper-scissors in viv o. Natur e , 428:412–414, 2004. [34] M. Kopp en. Random utility represen tation of binary c hoice probabilities: Critical graphs yielding critical necessary conditions. Journal of Mathe- matic al Psycholo gy , 39:21–39, 1995. [35] N. Lawrence and R. Urtasan. Nonlinear matrix factorization with gaus- sian pro cesses. In Pr o c e e dings of the International Confer enc e on Machine L e arning , pages 601–608, 2009. [36] R. Luce and P . Supp es. Handb o ok of Mathematic al Psycholo gy , chapter Preference, Utility and Sub jectiv e Probability , pages 249–410. Wiley , 1965. [37] A. Menon and C. Elk an. Predicting lab els for dy adic data. Data Mining and Know le dge Disc overy , 21:327–343, 2010. [38] K. Miller, T Griffiths, and M. Jordan. Nonparametric latent feature mo dels for link prediction. A dvanc es in Neur al Pr o c essing Systems , 22:1276–1284, 2009. [39] B. Moser. On represen ting and generating kernels b y fuzzy equiv alence relations. Journal of Machine L e arning R ese ar ch , 7:2603–2620, 2006. [40] M. Now ak. Bio div ersity: Bacterial game dynamics. Natur e , 418:138–139, 2002. [41] T. Pahikk ala, E. Tsivtsiv adze, A. Airola, J. J¨ arvinen, and J. Bob erg. An efficien t algorithm for learning to rank from preference graphs. Machine L e arning , 75(1):129–165, 2009. 27 [42] T. Pahikk ala, W. W aegeman, A. Airola, T. Salakoski, and B. De Baets. Conditional ranking on relational data. In J. Balczar, F. Bonc hi, A. Gionis, and M. Sebag, editors, Pr o c e e dings of the Eur op e an Confer enc e on Machine L e arning , v olume 6322 of L e ctur e Notes in Computer Scienc e , pages 499– 514. Springer Berlin / Heidelberg, 2010. [43] T. Pahikk ala, W. W aegeman, E. Tsivtsiv adze, T. Salakoski, and B. De Baets. Learning in transitive recipro cal relations with k ernel methods. Eu- r op e an Journal of Op er ational R ese ar ch , 206:676–685, 2010. [44] T. Reichen bach, M. Mobilia, and E. F rey . Mobility promotes and jeop- ardizes bio div ersity in ro c k-pap er-scissors games. Natur e , 448:1046–1049, 2007. [45] W alter Rudin. F unctional Analysis . International Series in Pure and Ap- plied Mathematics. McGra w-Hill Inc., New Y ork, second edition, 1991. [46] C. Saunders, A. Gammerman, and V. V o vk. Ridge regression learning algorithm in dual v ariables. In Pr o c e e dings of the International Confer enc e on Machine L e arning , pages 515–521, 1998. [47] B. Sch¨ olk opf and A. Smola. L e arning with Kernels, Supp ort V e ctor Ma- chines, R e gularisation, Optimization and Beyond . The MIT Press, 2002. [48] J. Sha we-T aylor and N. Cristianini. Kernel Metho ds for Pattern A nalysis . Cam bridge Univ ersity Press, 2004. [49] S. Sinerv o and C. Liv ely . The rock-paper-scissors game and the evoluti on of alternative mate strategies. Natur e , 340:240–246, 1996. [50] R. Sok al and C. Mic hener. A statistical method for ev aluating systematic relationships. Univ. of Kansas Scienc e Bul letin , 38:1409–1438, 1958. [51] R. Sok al and P . Sneath. Principles of Numeric al T axonomy . W. H. F ree- man, 1963. [52] N. Srebro, J. Rennie, and T. Jaakk ola. Maxximum margin matrix factor- ization. A dvanc es in Neur al Pr o c essing Systems , 17, 2005. [53] I. Stein wart. On the influence of the k ernel on the consistency of support v ector mac hines. Journal of Machine L e arning R ese ar ch , 2:67–93, 2002. [54] J. Suykens, T. V an Gestel, J. De Braban ter, B. De Mo or, and J. V ande- w alle. L e ast Squar es Supp ort V e ctor Machines . W orld Scien tific Pub. Co., Singap ore, 2002. [55] Z. Switalski. T ransitivity of fuzzy preference relations - an empirical study . F uzzy Sets and Systems , 118:503–508, 2000. [56] Z. Switalski. General transitivity conditions for fuzzy reciprocal preference matrices. F uzzy Sets and Systems , 137:85–100, 2003. 28 [57] B. T ask ar, M. W ong, P . Abbeel, and D. Koller. Link prediction in relational data. In A dvanc es in Neur al Information Pr o c essing Systems , 2004. [58] Y. Tso c hantaridis, T. Joachims, T. Hofmann, and Y. Altun. Large mar- gin metho ds for structured and indep endent output v ariables. Journal of Machine L e arning R ese ar ch , 6:1453–1484, 2005. [59] A. Tversky . Pr efer enc e, Belief and Similarity . MIT Press, 1998. [60] J.-P . V ert, J. Qiu, and W. S. Noble. A new pairwise kernel for biological net work inference with supp ort vector mac hines. BMC Bioinformatics , 8 (Suppl 10):S8, 2007. [61] J.-P . V ert and Y. Y amanishi. Sup ervised graph inference. In A dvanc es in Neur al Information Pr o c essing Systems , v olume 17, 2005. [62] S. Vishw anathan, N. Schraudolph, R. Kondor, and K. Borgw ardt. Graph k ernels. Journal of Machine L e arning R ese ar ch , 11:1201–1242, 2010. [63] T. W aite. In transitive preferences in hoarding gray jays ( Perisor eus c anadensis ). Journal of Behaviour al Ec olo gy and So ciobiolo gy , 50:116–121, 2001. [64] J. W eston, A. Eliseeff, D. Zhou, C. Leslie, and W. Stafford Noble. Protein ranking: from lo cal to global structure in the protein similarit y netw orks. Pr o c e e dings of the National A c ademy of Scienc e , 101:6559–6563, 2004. [65] J. W eston, B. Sch¨ olkopf, O. Bousquet, T. Mann, and W. Noble. Pr e dicting structur e d data , c hapter Joint k ernel maps, pages 67–83. MIT Press, 2007. [66] E. Xing, A. Ng, M. Jordan, and S. Russell. Distance metric learning with application to clustering with side information. In A dvanc es in Neur al Information Pr o c essing Systems , volume 16, pages 521–528, 2002. [67] Y. Y amanishi, J.-P . V ert, and M. Kanehisa. Protein netw ork inference from m ultiple genomic data: a sup ervised approach. Bioinformatics , 20:1363– 1370, 2004. [68] Y. Y ang, N. Bansal, W. Dakk a, P . Ip eirotis, N. Koudas, and D. Papadias. Query b y do cumen t. In Pr o c e e dings of the Se c ond A CM International Con- fer enc e on Web Se ar ch and Data Mining, Bar c elona, Sp ain , pages 34–43, 2009. [69] Z. Zhang. Learning metrics via discriminan t kernels and multidimensional scaling: T ow ard exp ected Euclidean represen tation. In Pr o c e e dings of the Twentieth International Confer enc e on Machine L e arning, Washing- ton D.C., USA , pages 872–879, 2003. 29

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment