Angel or Devil: Discriminating Hard Samples and Anomaly Contaminations for Unsupervised Time Series Anomaly Detection

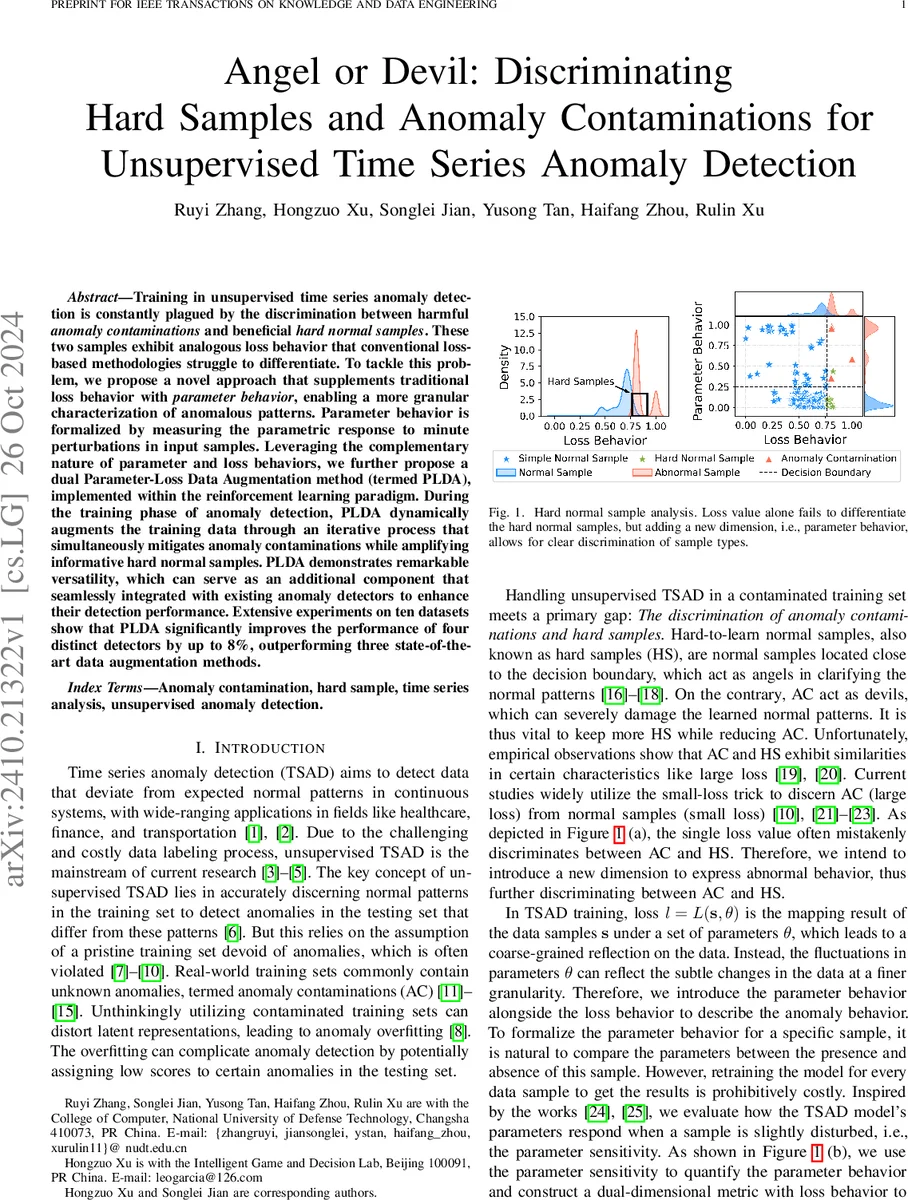

Training in unsupervised time series anomaly detection is constantly plagued by the discrimination between harmful anomaly contaminations' and beneficial hard normal samples’. These two samples exhibit analogous loss behavior that conventional loss-based methodologies struggle to differentiate. To tackle this problem, we propose a novel approach that supplements traditional loss behavior with `parameter behavior’, enabling a more granular characterization of anomalous patterns. Parameter behavior is formalized by measuring the parametric response to minute perturbations in input samples. Leveraging the complementary nature of parameter and loss behaviors, we further propose a dual Parameter-Loss Data Augmentation method (termed PLDA), implemented within the reinforcement learning paradigm. During the training phase of anomaly detection, PLDA dynamically augments the training data through an iterative process that simultaneously mitigates anomaly contaminations while amplifying informative hard normal samples. PLDA demonstrates remarkable versatility, which can serve as an additional component that seamlessly integrated with existing anomaly detectors to enhance their detection performance. Extensive experiments on ten datasets show that PLDA significantly improves the performance of four distinct detectors by up to 8%, outperforming three state-of-the-art data augmentation methods.

💡 Research Summary

The paper addresses a fundamental challenge in unsupervised time‑series anomaly detection (TSAD): training data are rarely pristine and often contain unknown anomalies, termed anomaly contaminations (AC). At the same time, normal samples that lie close to the decision boundary—hard samples (HS)—are valuable because they help the model learn a richer representation of normal behavior. Existing methods rely almost exclusively on the loss value to separate “large‑loss” (presumed anomalies) from “small‑loss” (presumed normal) samples. Unfortunately, HS also tend to exhibit large loss, making it difficult to distinguish them from AC.

To overcome this limitation, the authors introduce a new dimension called parameter behavior. The idea is to measure how the model’s parameters react when a particular sample is slightly perturbed. Formally, given a loss function L(s,θ) and optimal parameters θ̂, the authors define a perturbed optimization problem that adds a small weight ε to the loss of sample s. By differentiating the optimal parameters with respect to ε, they derive a closed‑form expression for the parameter sensitivity:

dθ̂_{ε,s}/dε |{ε=0} = – H^{-1}{θ̂} ∇_θ L(s,θ̂)

where H is the Hessian of the total loss. The parameter behavior function P(s,θ) is then defined as the magnitude of this sensitivity, optionally restricted to a set of “key” parameters (the top‑k with highest average sensitivity in the first epoch) to keep computation tractable.

The authors provide a theoretical justification using Fourier analysis. They show that the contribution of a frequency component f to the parameter behavior at parameter θ_j is approximately proportional to A(f)·e^{−π f^2 ω_j}, where A(f) is the amplitude of the frequency component. This implies that high‑frequency components (common in AC due to abrupt changes or noise) induce larger parameter changes, whereas HS, which are dominated by lower‑frequency structure, cause milder parameter shifts. Consequently, combining loss and parameter behavior yields a dual‑dimensional metric that can separate AC (high loss, high parameter sensitivity) from HS (high loss, low parameter sensitivity).

Building on this metric, the authors propose PLDA (Parameter‑Loss Data Augmentation), a reinforcement‑learning (RL) framework that iteratively augments the training set during TSAD model training. The RL environment treats each time‑series sub‑sequence as a state. The action space consists of three operations: expansion (duplicate the sample), preservation (keep it unchanged), and deletion (remove it). A double DQN learns an action‑value function Q(s,a) that maximizes a dual‑dimensional reward:

r = α·(Δloss reduction) + (1−α)·(Δparameter behavior reduction),

where α balances the two components. An adaptive sliding‑window module defines the granularity of augmentation, preserving temporal continuity. The agent selects the action with the highest expected future reward, the environment applies the augmentation, and the next state is generated. This loop repeats for a predefined number of augmentation epochs.

PLDA is model‑agnostic: it can be plugged into any deep TSAD backbone without altering its architecture. The authors evaluate PLDA on ten public time‑series datasets (including NAB, Yahoo, and real‑world sensor logs) and four representative TSAD models (LSTM‑AE, USAD, GDN, DAGMM). They compare against three state‑of‑the‑art data‑augmentation baselines. Results show that PLDA consistently improves F1‑score by 4–8 % across varying contamination rates (10 %–30 %). The performance gain is especially pronounced when contamination is severe, confirming that parameter behavior is effective at detecting high‑frequency noisy anomalies. Ablation studies demonstrate that using loss alone or parameter behavior alone yields inferior results, while the combined metric achieves the best discrimination. Computational overhead is modest because only a small subset of parameters (top‑k) is used for sensitivity calculation.

In summary, the paper makes three key contributions:

- Parameter behavior modeling: a novel, theoretically grounded metric that captures fine‑grained model response to sample perturbations.

- Dual‑dimensional RL‑based augmentation (PLDA): a flexible, model‑independent plugin that simultaneously suppresses anomaly contaminations and amplifies hard normal samples.

- Extensive empirical validation: demonstrating significant performance gains over existing augmentation methods across diverse datasets and detectors.

The work opens avenues for extending parameter‑behavior analysis to other model families (e.g., transformer‑based TSAD) and for developing online, streaming‑compatible sensitivity estimators.

Comments & Academic Discussion

Loading comments...

Leave a Comment