Towards Robust Knowledge Representations in Multilingual LLMs for Equivalence and Inheritance based Consistent Reasoning

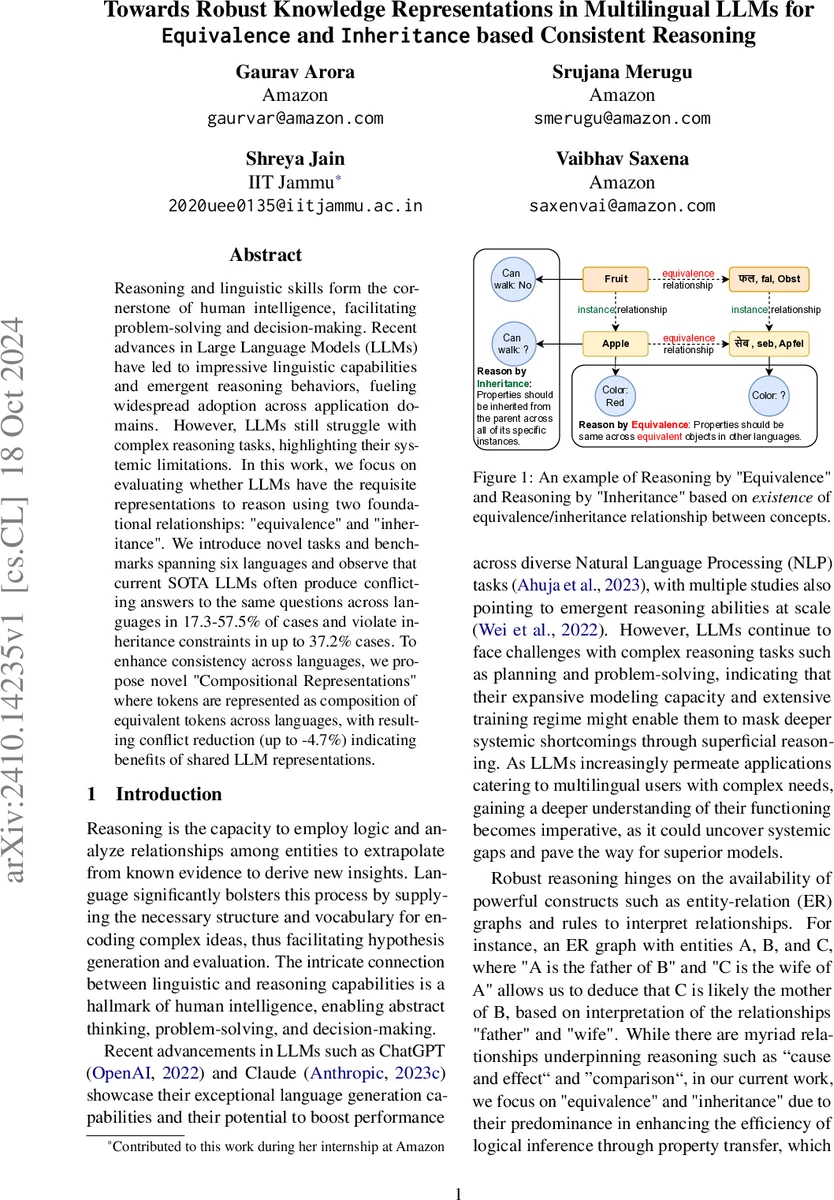

Reasoning and linguistic skills form the cornerstone of human intelligence, facilitating problem-solving and decision-making. Recent advances in Large Language Models (LLMs) have led to impressive linguistic capabilities and emergent reasoning behaviors, fueling widespread adoption across application domains. However, LLMs still struggle with complex reasoning tasks, highlighting their systemic limitations. In this work, we focus on evaluating whether LLMs have the requisite representations to reason using two foundational relationships: “equivalence” and “inheritance”. We introduce novel tasks and benchmarks spanning six languages and observe that current SOTA LLMs often produce conflicting answers to the same questions across languages in 17.3-57.5% of cases and violate inheritance constraints in up to 37.2% cases. To enhance consistency across languages, we propose novel “Compositional Representations” where tokens are represented as composition of equivalent tokens across languages, with resulting conflict reduction (up to -4.7%) indicating benefits of shared LLM representations.

💡 Research Summary

This paper investigates whether large language models (LLMs) possess the internal representations needed to perform consistent reasoning across multiple languages using two fundamental logical relations: equivalence and inheritance. The authors introduce two novel benchmark suites—one for “reasoning by equivalence” and another for “reasoning by inheritance”—each consisting of parallel fact‑based question‑answer pairs in six languages (English, French, Spanish, German, Portuguese, and Hindi).

For the equivalence task, the authors generate 88,334 English factual questions about well‑known entities and translate them into the other five languages using AWS Translate. They define a conflict as any pair of answers that contain contradictory information, regardless of factual correctness. Conflict rates are measured by prompting LLMs to answer each language version independently (Dependency on Input Language, DIL) and by prompting the model in English but requesting answers in each target language (Dependency on Output Language, DOL). Claude v3 Sonnet serves as the automatic judge, achieving >95 % precision.

Results show that even the strongest closed‑source models (Claude v3 Sonnet) produce conflicting answers in 17.3 %–57.5 % of cases across language pairs, with higher conflict rates for languages that use non‑Latin scripts (notably Hindi). Open‑source models (BLOOMZ‑7B, XGLM‑7.5B) exhibit even larger conflict percentages, indicating a strong coupling between knowledge representation and the surface language.

To probe the root cause of these inconsistencies, the authors conduct a controlled experiment with synthetic data. They create 2,063 fictitious entities and associated hallucinated articles, then generate 32,016 QA pairs that can only be answered using the fabricated articles. The synthetic dataset is trained on a single language (English, German, Hindi, or transliterated Hindi) and tested on the other three. Transfer performance is markedly better when source and target languages share script or typological similarity, confirming that token embeddings are largely language‑specific.

Addressing the identified gap, the paper proposes “Compositional Representations.” In this scheme, tokens that denote the same concept across languages are combined—either via linear combination of embeddings or by sharing attention weights—so that the resulting composite token resides in a shared representation space. This design enables the model to access distant but semantically equivalent embeddings, thereby facilitating knowledge sharing. Empirical evaluation demonstrates a reduction in conflict rates of up to 4.7 % relative to baseline models, with the most pronounced gains for language pairs with differing scripts.

The contributions are fourfold: (1) creation and public release of multilingual equivalence and inheritance benchmarks; (2) systematic quantification of language‑dependent answer conflicts in state‑of‑the‑art LLMs; (3) a synthetic controlled experiment that isolates the effect of script and typology on cross‑lingual knowledge transfer; and (4) a novel token‑composition method that improves cross‑language consistency.

The study highlights that current LLMs, despite impressive multilingual pre‑training, still lack language‑agnostic abstractions for basic logical relations, limiting their reliability in multilingual applications that require coherent reasoning. The proposed compositional approach offers a promising direction, but further work is needed—potentially integrating neuro‑symbolic architectures or explicit logical constraints—to achieve truly language‑independent knowledge representations and robust multi‑step reasoning.

Comments & Academic Discussion

Loading comments...

Leave a Comment