Time-Probability Dependent Knowledge Extraction in IoT-enabled Smart Building

Smart buildings incorporate various emerging Internet of Things (IoT) applications for comprehensive management of energy efficiency, human comfort, automation, and security. Developing a knowledge extraction framework for human activities is fundamental to these applications, yet there is currently no unified and practical framework for modeling heterogeneous sensor data within buildings. In this paper, we propose a practical inference framework for extracting status-to-event knowledge within smart buildings. Our proposal includes IoT-based API integration, ontology model design, and time-probability-dependent knowledge extraction methods. We leveraged the Building Topology Ontology (BOT) to construct spatial relations among sensors and spaces within the building. Additionally, we utilized an Apache Jena Fuseki SPARQL server for storing and querying RDF triple data. Two types of knowledge can be extracted: timestamp-based probability for abnormal event detection and time interval-based probability for the conjunction of multiple events. We conducted experiments over a 78-day period in a real smart building environment, collecting data on light and elevator states for evaluation. The evaluation revealed several inferred events, such as room occupancy, elevator trajectory tracking, and the conjunction of both events. The detected event counts and probabilities demonstrate the potential for automatic control in the smart building.

💡 Research Summary

Smart buildings rely on a dense deployment of heterogeneous Internet‑of‑Things (IoT) devices to manage energy consumption, occupant comfort, automation, and security. While the raw sensor streams are abundant, there is currently no unified, practical framework that can model these diverse data sources and transform them into actionable knowledge about human activities. This paper addresses that gap by presenting an end‑to‑end inference framework that extracts “status‑to‑event” knowledge from building‑level sensor data.

The authors first adopt the Building Topology Ontology (BOT) to capture spatial relationships among spaces (floors, rooms, corridors) and the sensors installed within them. By representing each sensor reading as an RDF triple (e.g., <Room101‑Light‑Sensor, hasState, ON>), the framework achieves a semantic, location‑aware integration that eliminates the need for ad‑hoc ID‑to‑location mappings.
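To make the triple representation concrete, the following is a minimal sketch of how one sensor might be placed in the building topology and given a state. The BOT terms (`bot:Storey`, `bot:Space`, `bot:Element`, `bot:hasSpace`, `bot:containsElement`) come from the published ontology; the `http://example.org/building#` namespace, the `hasState` property IRI, and the helper names are assumptions made for illustration, not the authors' actual vocabulary.

```python
BOT = "https://w3id.org/bot#"
EX = "http://example.org/building#"  # hypothetical namespace for this sketch
RDF_TYPE = "<http://www.w3.org/1999/02/22-rdf-syntax-ns#type>"


def iri(namespace, local_name):
    """Wrap a namespace and local name as an N-Triples IRI."""
    return f"<{namespace}{local_name}>"


def triple(subject, predicate, obj):
    """Format one N-Triples statement."""
    return f"{subject} {predicate} {obj} ."


def describe_sensor(storey, room, sensor, state):
    """Return N-Triples lines that locate a sensor in a room and record its state."""
    return [
        triple(iri(EX, storey), RDF_TYPE, iri(BOT, "Storey")),
        triple(iri(EX, room), RDF_TYPE, iri(BOT, "Space")),
        triple(iri(EX, sensor), RDF_TYPE, iri(BOT, "Element")),
        triple(iri(EX, storey), iri(BOT, "hasSpace"), iri(EX, room)),
        triple(iri(EX, room), iri(BOT, "containsElement"), iri(EX, sensor)),
        # `hasState` mirrors the example triple above; its IRI is assumed.
        triple(iri(EX, sensor), iri(EX, "hasState"), f'"{state}"'),
    ]


lines = describe_sensor("Floor1", "Room101", "Room101-Light-Sensor", "ON")
print("\n".join(lines))
```

Because the room-to-sensor link is itself a triple, a query can reach a sensor's location by following `bot:containsElement` rather than consulting a separate lookup table.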

For storage and query processing, the system leverages Apache Jena Fuseki, exposing a SPARQL endpoint that can handle large volumes of triples and support complex spatial‑temporal queries. An IoT‑centric API continuously streams sensor updates to the Fuseki server, where they are instantly materialized as RDF statements.
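As a rough illustration of the ingestion step, the sketch below builds a SPARQL 1.1 `INSERT DATA` update for one sensor reading and wraps it in an HTTP request. The dataset name `building`, the `localhost:3030` address, the property names (`ofSensor`, `hasState`, `observedAt`), and the sample timestamp are all assumptions for this example; the paper's actual API and data model may differ. The request is constructed but not sent, since sending requires a running Fuseki server.

```python
from datetime import datetime
from urllib.request import Request

EX = "http://example.org/building#"  # hypothetical namespace for this sketch
XSD_DATETIME = "http://www.w3.org/2001/XMLSchema#dateTime"


def build_update(sensor, state, observed_at):
    """Build a SPARQL INSERT DATA string for a single timestamped reading."""
    timestamp = observed_at.isoformat()
    return (
        "INSERT DATA {\n"
        f"  _:obs <{EX}ofSensor> <{EX}{sensor}> ;\n"
        f'        <{EX}hasState> "{state}" ;\n'
        f'        <{EX}observedAt> "{timestamp}"^^<{XSD_DATETIME}> .\n'
        "}"
    )


def build_request(endpoint, update):
    """Wrap the update in a POST request using the SPARQL Update media type."""
    return Request(
        endpoint,
        data=update.encode("utf-8"),
        headers={"Content-Type": "application/sparql-update"},
        method="POST",
    )


# Illustrative reading; the timestamp is made up for the example.
update = build_update("Room101-Light-Sensor", "ON", datetime(2024, 1, 1, 8, 0, 0))
request = build_request("http://localhost:3030/building/update", update)
print(update)
# To actually send it: urllib.request.urlopen(request), given a reachable server.
```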

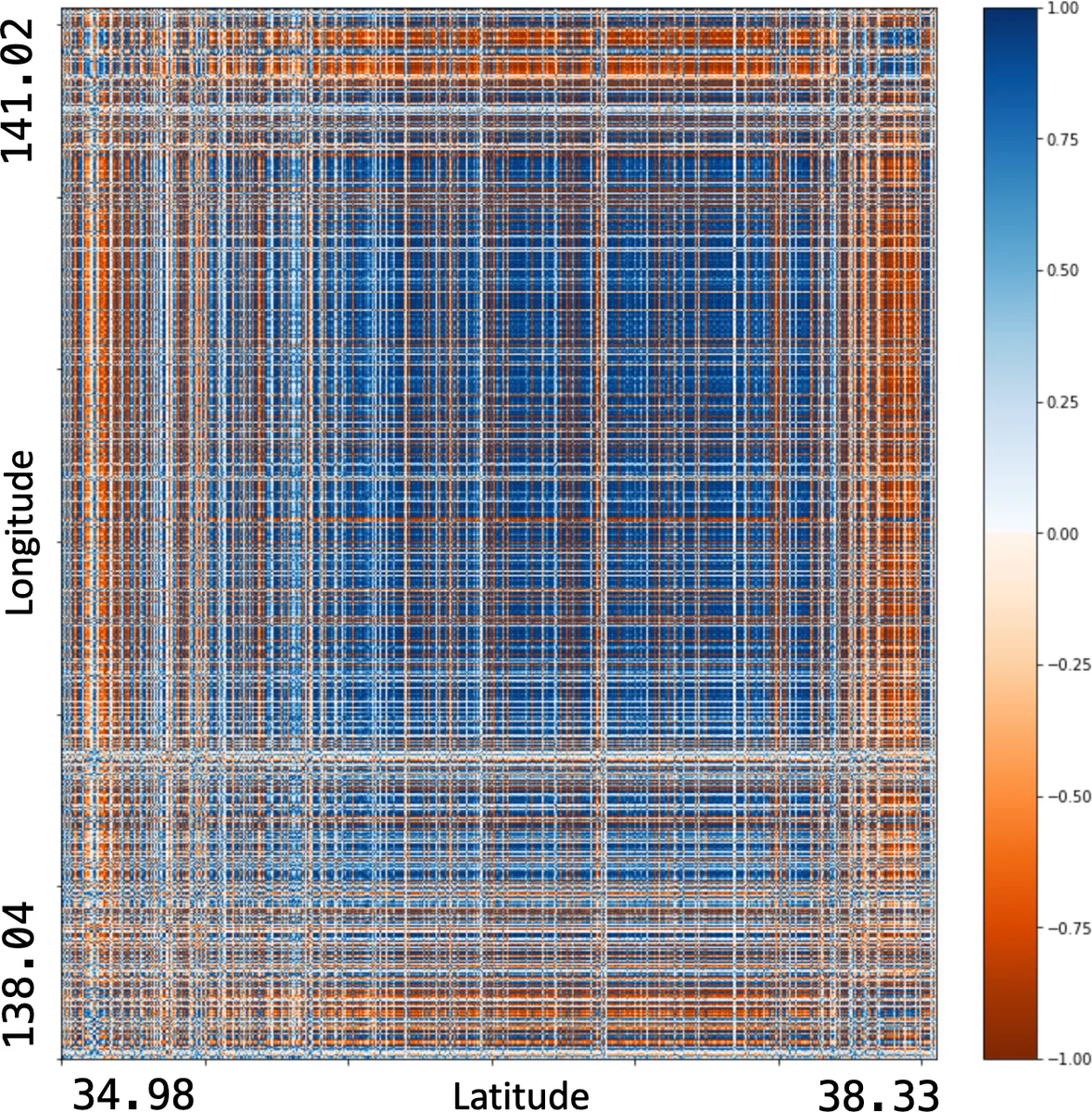

The core technical contribution lies in two time‑probability dependent knowledge extraction methods. The first, a timestamp‑based probability model, computes the likelihood of a single sensor state change being abnormal by comparing its occurrence frequency against a pre‑learned normal distribution. When the probability falls below a configurable threshold, the event is flagged as anomalous (e.g., a sudden light‑off). The second, a time‑interval based probability model, evaluates the joint probability of multiple sensor states occurring within a defined time window. This enables the detection of compound events such as “room occupancy (light on) coinciding with elevator arrival (door open)”. Both models output explicit probability scores, allowing system operators to tune sensitivity versus specificity according to operational requirements.
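The summary does not give the exact formulas, so the following is only a sketch of one plausible reading of the two models. It assumes the timestamp-based model is an empirical hour-of-day frequency learned from historical events, and that the interval-based model is the fraction of events of one type that are followed by an event of another type within a time window. The function names, the threshold, and the sample data are all hypothetical.

```python
from bisect import bisect_left
from collections import Counter
from datetime import datetime, timedelta


def hourly_probability(history):
    """Empirical probability that an event falls in each hour of the day."""
    if not history:
        raise ValueError("history must contain at least one timestamp")
    counts = Counter(ts.hour for ts in history)
    return {hour: counts.get(hour, 0) / len(history) for hour in range(24)}


def is_abnormal(ts, hourly, threshold):
    """Flag an event whose hour-of-day probability is below the threshold."""
    return hourly[ts.hour] < threshold


def cooccurrence_probability(events_a, events_b, window):
    """Fraction of A events followed by at least one B event within `window`."""
    if not events_a:
        return 0.0
    b_sorted = sorted(events_b)
    hits = 0
    for a in events_a:
        i = bisect_left(b_sorted, a)  # first B event at or after this A event
        if i < len(b_sorted) and b_sorted[i] - a <= window:
            hits += 1
    return hits / len(events_a)


base = datetime(2024, 1, 1)  # illustrative date only
# Made-up history: nine light-off events at 18:00 and one at 03:00.
light_off = [base + timedelta(days=d, hours=18) for d in range(9)]
light_off.append(base + timedelta(hours=3))
hourly = hourly_probability(light_off)
night_flag = is_abnormal(datetime(2024, 2, 1, 3, 15), hourly, threshold=0.2)
evening_flag = is_abnormal(datetime(2024, 2, 1, 18, 5), hourly, threshold=0.2)

# Made-up elevator arrivals and light-on events for the compound case.
arrivals = [base + timedelta(hours=h) for h in (8, 9, 10, 11)]
lights_on = [
    base + timedelta(hours=8, seconds=30),
    base + timedelta(hours=9, minutes=5),
    base + timedelta(hours=10, minutes=1),
]
joint = cooccurrence_probability(arrivals, lights_on, timedelta(minutes=2))
print(night_flag, evening_flag, joint)
```

In this toy data the 03:00 event is flagged because only one of ten historical light-off events occurred at that hour, and two of the four elevator arrivals are followed by a light-on event within two minutes. Raising or lowering `threshold` and `window` is the kind of sensitivity tuning the summary describes.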

To validate the approach, the authors conducted a 78‑day field study in a real office building, collecting data from light sensors and elevator status logs at a one‑second granularity. The integrated data were fed into the BOT‑based knowledge base, and SPARQL queries combined with the two probability models produced three primary inferred events: (1) room occupancy derived from light‑on durations, (2) elevator trajectory reconstructed from successive floor‑arrival events, and (3) the conjunction of occupancy and elevator presence, which can indicate specific usage patterns such as meeting room sessions. The paper reports raw counts and average probability values for each event type; events with high confidence (probability > 0.85) were cross‑checked with building staff and confirmed as accurate.
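The first two inferred events can be pictured as simple reductions over one-second samples. The sketch below is an assumption about how such a reduction might look, not the authors' SPARQL-based procedure: it collapses a light-state stream into "on" spans and a floor-reading stream into a sequence of floor arrivals. Timestamps are plain integer seconds and all sample values are invented.

```python
from itertools import groupby


def on_intervals(samples, on_state="ON"):
    """Collapse (second, state) samples into (start, end) spans of `on_state`."""
    intervals = []
    start = None
    last_time = None
    for time, state in samples:
        if state == on_state and start is None:
            start = time
        elif state != on_state and start is not None:
            intervals.append((start, time))
            start = None
        last_time = time
    if start is not None:
        # Stream ended while still on; close the span at the last sample.
        intervals.append((start, last_time))
    return intervals


def trajectory(floor_samples):
    """Keep the first (second, floor) sample of each run of identical floors."""
    return [next(run) for _, run in groupby(floor_samples, key=lambda s: s[1])]


light = [(0, "OFF"), (1, "ON"), (2, "ON"), (3, "OFF"), (4, "ON")]
floors = [(0, 1), (1, 1), (2, 2), (3, 3), (4, 3), (5, 1)]
spans = on_intervals(light)
path = trajectory(floors)
print(spans)
print(path)
```

The conjunction event would then be found by checking which floor arrivals in `path` fall inside, or shortly before, one of the `spans` for a room on that floor.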

Key strengths of the work include: (i) the use of a standardized ontology (BOT) for spatial grounding, which promotes interoperability across different building projects; (ii) a scalable RDF‑SPARQL backend that can accommodate future sensor expansions; and (iii) quantitative, probabilistic reasoning that moves beyond static rule‑based detection. However, the study also has limitations. The probability models depend heavily on historically learned distributions, making them less adaptable to sudden sensor replacements or drastic environmental changes without retraining. The experimental scope is limited to lighting and elevator data, leaving open questions about how the framework performs with HVAC, security cameras, or environmental monitors. Finally, the current implementation stops at knowledge extraction; integration with building management systems for closed‑loop control is not demonstrated.

Future research directions suggested by the authors include: (a) incorporating online learning mechanisms to continuously update probability parameters as new data arrive; (b) extending the ontology and extraction methods to support multimodal sensor fusion, thereby enriching activity recognition; and (c) coupling the inferred events with automated control actions (e.g., adjusting HVAC setpoints or lighting schedules) to realize true autonomous building operation. By addressing these extensions, the proposed framework could become a cornerstone for next‑generation smart building platforms that deliver energy savings, enhanced occupant experience, and robust security.