MV-PURE Spatial Filters with Application to EEG/MEG Source Reconstruction

In this paper we propose spatial filters for a linear regression model which are based on the minimum-variance pseudo-unbiased reduced-rank estimation (MV-PURE) framework. As a sample application, we consider the problem of reconstruction of brain ac…

Authors: Tomasz Piotrowski, Jan Nikadon, David Gutierrez

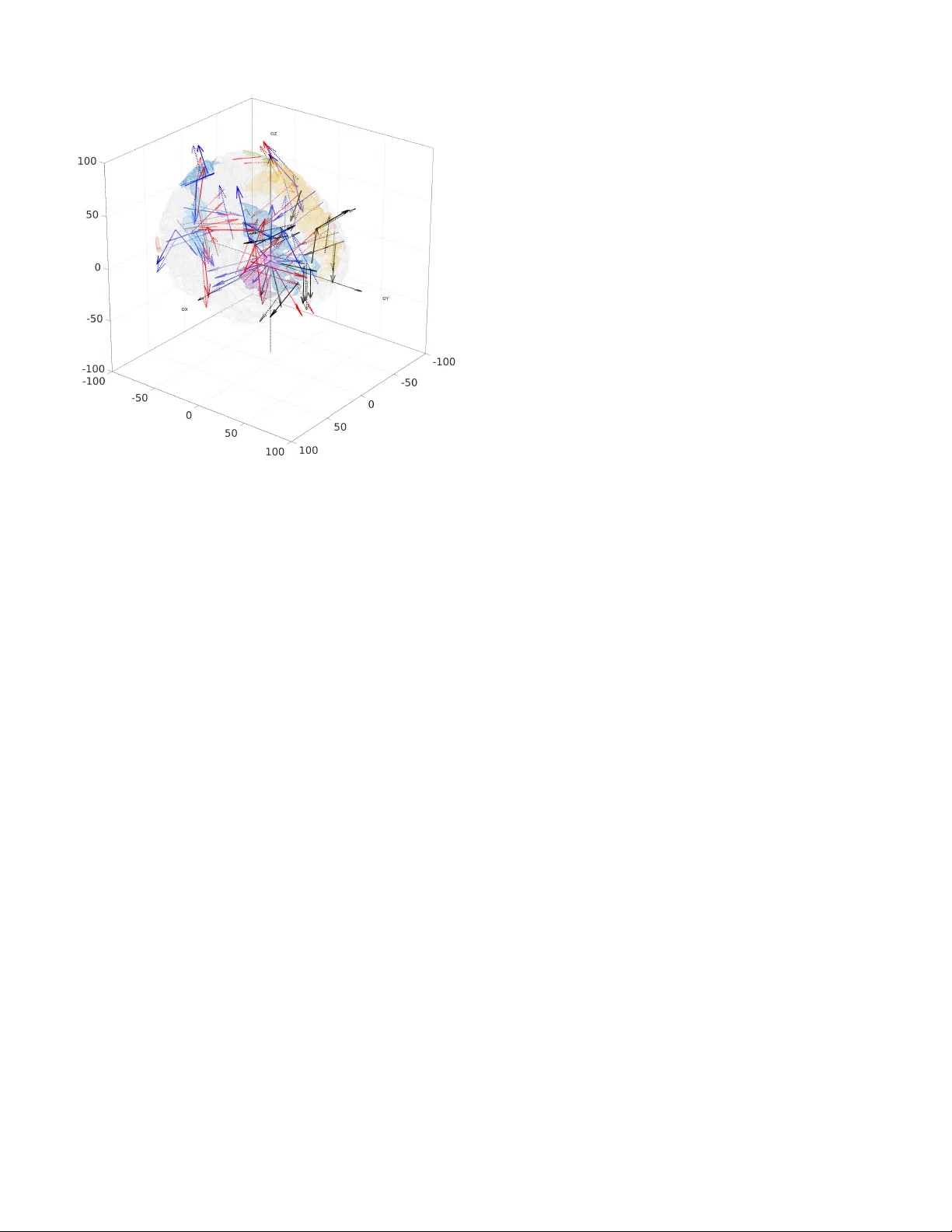

1 MV -PURE Spatial Filters with Application to EEG/MEG Source Reconstruction T omasz Piotro wski ⋆, 1 , 2 , Jan Nikadon 3 and David Guti ´ errez 4 , Senior Member , IEEE 1 Faculty of Physics, Astronomy and Informatics, Nicolaus Copernicus Uni versity , Grudziadzka 5/7, 87-100 T orun, Poland 2 Interdisciplinary Center for Modern T echnologies, Nicolaus Copernicus Uni versity , W ilenska 4, 87-100 T orun, Poland 3 Faculty of Humanities, Nicolaus Copernicus Uni versity , Fosa Staromiejska 1A, 87-100 T orun, Poland 4 Center for Research and Adv anced Studies, Monterrey’ s Unit, Apodaca, N.L., 66600, M ´ exico Abstract —In this paper we pr opose spatial filters f or a linear regr ession model which are based on the minimum-variance pseudo-unbiased reduced-rank estimation (MV -PURE) frame- work. As a sample application, we consider the problem of reconstruction of brain activity from electroencephalographic (EEG) or magnetoencephalographic (MEG) measur ements. The proposed filters come in two v ersions depending on whether or not the EEG/MEG forward model explicitly considers interfering activity in the way of brain activity originating in r egions different to those of main interest, but measured as correlated with signals of interest by the EEG/MEG sensor array . In both cases, the proposed filters are equipped with a rank-selection criterion minimizing the mean-square err or (MSE) of the filter output. Theref ore, we consider them as novel nontrivial generalizations of well-known linearly constrained minimum variance (LCMV) and nulling filters. In order to facilitate r eproducibility of our resear ch, we pro vide (jointly with this paper) comprehensive simulation framework that allows f or estimation of error of signal reconstruction for a number of spatial filters applied to MEG or EEG signals. Based on this framework, chief properties of proposed filters ar e verified in a set of detailed simulations. EDICS Category: SAM-BEAM, SSP-APPL, SSP-P ARE *Corresponding author . I . I N T R O D U C T I O N Beamforming techniques hav e been used in array signal processing since the seminal paper by Frost [1]. In electroen- cephalography (EEG) and magnetoencephalography (MEG), beamforming has been used for signal reconstruction and localization of sources of brain electrical activity . In this field, the dominant approach to solve these problems is to use the linearly constrained minimum variance (LCMV) filter (beamformer), or solutions based on it [2]–[8]. Indeed, the LCMV filter is implemented in virtually all software enabling The work of TP was supported by a grant from the Polish National Science Centre (UMO-2016/20/W/NZ4/00354). The work of JN was supported by a grant from the Polish Ministry of Science and Higher Education (0094/DIA/2015/44). © 2019 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/republishing this material for advertising or promotional purposes, creating ne w collectiv e works, for resale or redistribution to servers or lists, or reuse of any copyrighted component of this work in other works. EEG/MEG source analysis, e.g., [9]–[11], and remains useful within EEG/MEG community , e.g., [12], [13]. Howe ver , it has been demonstrated in [2], [4], [5], [8], and references therein, that the LCMV -based solutions may perform sufficiently well only if certain conditions are satisfied by the EEG/MEG forward model, such as uncorrelatedness of the sources, high signal-to-noise ratio (SNR), sufficiently large spatial separation of sources, or the amount of regularization. Thus, it would be desirable to propose spatial filters that keep the advantages of the LCMV -based filters also in lo w- SNR regime, especially if EEG/MEG forward model is ill- conditioned. Ideally , such filters would not require heuristic parameter tuning. Therefore, in this paper we propose a family of reduced- rank filters extending the minimum-variance pseudo-unbiased reduced-rank estimation (MV -PURE) framew ork [14]–[18]. Reduced-rank estimators and filters are well-established in signal processing [4], [5], [19]–[24], as they offer much improv ed performance compared with full-rank solutions in well-defined settings. Indeed, the proposed filters are solutions of certain mean-square error (MSE) optimization problems and they are equipped with a rank-selection criterion minimizing mean-square error (MSE) of its estimate. The proposed filters come in two versions depending on whether or not the EEG/MEG forw ard model e xplicitly consid- ers interfering activity in the way of brain activity originating in regions different to those of main interest, but measured as correlated with signals of interest by the EEG/MEG sensor array . Then, we foresee two possible scenarios: • the interference-free model, in which the proposed filters extend the stochastic MV -PURE approach (previously considered in [16], [18]) by proposing two new cost functions for stochastic MV -PURE, • the model in presence of interference, where the proposed filters extend the nulling filter approach in [25], [26] to the reduced-rank case. In view of the abov e, the proposed filters are nontrivial generalizations of well-known LCMV and nulling filters pa- rameterized by rank, which is selected according to MSE- minimization principle. As such, the number of applications 2 is essentially the same as any other spatial filter, and is not limited to EEG/MEG settings. In particular, the filters proposed for the model in presence of interference may be specially useful in applications where it is important to ha ve interference removed from the reconstructed activity , e.g., in applications of directed connecti vity measures such as partial directed coherence (PDC) [27] or directed transfer function (DTF) [28] as they rely on accurate fitting of the time series representing the sources of interest by means of multiv ariate autoregressi ve (MV AR) models. In order to increase reproducibility of our research, we provide (jointly with this paper) a comprehensiv e simulation framew ork that implements signal reconstruction through dif- ferent spatial filters (including the ones here proposed) to realistic EEG/MEG measurements. Based on this frame work, the main properties of proposed filters are verified in a set of detailed simulations. W e emphasize that only through simulations one can get a proper assessment of methods’ performance, yet our model considers realistic conditions not only for the sources of interest, but also for other interfering brain activity . Preliminary short versions of this paper have been presented at conferences [29], [30]. I I . N O T AT I O N Assume x to be a vector of real-valued random vari- ables x 1 , . . . , x n , each with a finite variance. The expectation functional is denoted by E . The norm of x is defined as ∥ x ∥ rv = q tr E [ xx t ] , where tr denotes trace of a certain square matrix. I n stands for identity matrix of size n , while 0 m × n stands for zero matrix of size m × n. By λ i ( A ) we denote the i -th largest eigen value of symmetric matrix A , by σ i ( B ) the i -th largest singular value of matrix B , by [ B ] u × v the principal submatrix of B composed of the first u rows and v columns of B , by r k ( B ) the rank of B , and by [ B C ] the matrix constructed from matrices B and C by concatenating its columns. Furthermore, we denote by B † the Moore-Penrose pseudoin verse of matrix B [31]. The Frobenius norm of matrix B ∈ R m × n is defined as ∥ B ∥ F = p tr ( B B t ) = q P m i =1 P n j =1 b 2 i,j , where b i,j is the element of B at i -th row and j -th column. W e define X u × v r := { X r ∈ R u × v : rk ( X r ) ≤ r ≤ min { u, v }} . W e denote the orthogonal projection matrix onto subspace S by P S . W e assume that vectors considered in this paper are column vectors. I I I . E E G / M E G M E A S U R E M E N T M O D E L The array response (leadfield) matrix defining the relation- ship between l dipole sources and m sensors is constructed as H ( θ ) = H ( θ 1 ) , . . . , H ( θ l ) ∈ R m × l where for i = 1 , . . . , l , H ( θ i ) ∈ R m is the leadfield vector of the i -th source, θ = ( θ 1 , . . . , θ l ) is such that θ i = { r i , u i } , where r i is the source position, and u i is the orientation unit vector for the i - th source. In this paper we focus on reconstructing activity of sources in predefined locations. Thus, we assume that source positions and orientations are known and fixed during the measurement period. This can be achie ved by defining re gions of interest using source localization methods, e.g., minimum- norm [32] or spatial filtering-based methods [7], [33], [34], or referring to neuroscience studies that have identified regions of interest as in [26]. Nevertheless, the results of this paper apply analogously if the model with unconstrained orientation of the sources is considered. Follo wing [5], we also assume that the leadfield vectors are linearly independent, which implies in particular that leadfield matrices such as H ( θ ) are of full column rank. For notational conv enience, we will drop the explicit depen- dence of leadfield matrices on parameter vector θ from now on. A. Interfer ence-F r ee Measur ement Model Let us consider measurements of brain electrical acti vity by EEG/MEG sensors at a specified time interval. The random vector y ∈ R m composed of measurements at a giv en time instant can be modeled as [2], [5], [35], y = H q + n , (1) where H ∈ R m × l is a leadfield matrix of rank l representing leadfields of l sources of interest, q ∈ R l represents equiv alent current (ECD) dipole moments of sources of interest, and n ∈ R m represents remaining brain activity along with noise recorded at the sensors. Furthermore, we assume that q and n are mutually uncor - related zero-mean weakly stationary stochastic processes. W e denote the positi ve definite covariance matrices of q and n as Q and N , respectiv ely . Note that these assumptions imply that y is also zero-mean weakly stationary processs with positi ve definite cov ariance matrix R = H QH t + N . For the noise n , we consider the following model: n = H b q b + n m , (2) where H b q b ∈ R m represents background activity of the brain and n m ∈ R m is the uncorrelated and Gaussian-distributed measurement noise, which includes all the remaining acti vity of the brain not considered by H b q b . The leadfield matrix H b ∈ R m × p is assumed unknown, and so is the number of sources p representing background activity . 1) Spatial F iltering Under MSE Criterion: A spatial filter aiming at reconstructing activity q in (1) is defined as a matrix W ∈ R l × m applied to measurements: b q = W y . (3) The fidelity of reconstruction is measured using MSE of b q . In terms of (1), it is e xpressed as J F ( W ) = tr E [ W ( H q + n ) − q W ( H q + n ) − q t ] = tr ( W RW t ) − 2 tr W H Q + c, (4) where c = tr ( Q ) = ∥ q ∥ 2 rv > 0 . (5) 3 2) LCMV F ilter: The most commonly used LCMV spatial filter uses MSE as cost function, and it belongs to a class of filters W ∗ satisfying unit-gain constraint W ∗ H = I l . Note that, in view of (4), for filters satisfying this constraint we hav e J F ( W ∗ ) = tr ( W ∗ R ( W ∗ ) t ) − 2 tr W ∗ H Q + c = tr W ∗ ( H QH t + N ) | {z } R ( W ∗ ) t − c = tr ( W ∗ N ( W ∗ ) t ) . (6) Then, the LCMV filter is the solution of the following problem [1], [2]: minimize tr [ W RW t ] subject to W H = I l , (7) and it has the following unique solution: W LC M V = ( H t R − 1 H ) − 1 H t R − 1 . (8) There are two things that are important to highlight from the previous expressions: first, (6) implies that the cost function in (7) can be replaced by tr ( W N W t ) in the interference-free case, and thus the LCMV filter may be expressed equiv alently in terms of N instead of R (see [36] for a throughout ev aluation of both cases under the presence of modeling and source localization errors); second, since the LCMV filter does not consider the case of correlated interference, then its performance degrades significantly under such condition (see, e.g., [25], [26]). 3) Conditioning of the Interfer ence-F r ee Measurement Model: From (6), and using the alternativ e expression of the LCMV filter W LC M V = ( H t N − 1 H ) − 1 H t N − 1 , we can rewrite the cost function as J F ( W LC M V ) = tr ( W LC M V N W t LC M V ) = l X i =1 λ i ( H t N − 1 H ) − 1 . (9) Under the simplifying assumption of white n in (2), i.e., assuming that the background activity is spatially uncorrelated at the sensors yielding N = σ 2 I m , where σ 2 is the noise power , we can further rewrite (9) as λ i ( H t N − 1 H ) − 1 = σ 2 λ i ( H t H ) − 1 and, consequently: 1) with an increasing lev el of background acti vity and/or measurement noise, the MSE of the LCMV filter in- creases, 2) if H has some singular v alues close to zero, the MSE of the LCMV filter can be in principle arbitrarily large. While the first issue is expected, the second has not been fully addressed in the EEG/MEG literature, then in this paper we aim to develop efficient solutions to that problem. B. Measur ement Model in the Presence of Interfer ence The EEG/MEG measurement model considered in this sec- tion expands (1) by introducing interfering sources exhibiting activity correlated with acti vity of sources of interest. Namely , we now consider the follo wing [25], [26]: y = H c q c + n , (10) where H c = [ H H I ] ∈ R m × ( l + k ) is a composite leadfield matrix of rank l + k comprised by the originally defined leadfield matrix H ∈ R m × l and H I ∈ R m × k which represents leadfields of k interfering sources. 1 The vector q c = [ q t q t I ] t ∈ R l + k is similarly composed of q ∈ R l representing activity of sources of interest, and q I ∈ R k representing activity of interfering sources. W e also assume that q c is a zero-mean weakly stationary stochastic process uncorrelated with n , and its positiv e definite cov ariance matrix is given by Q c . These assumptions imply that y is also zero-mean weakly stationary processs with positiv e definite covariance matrix R = H c Q c H t c + N . 1) Spatial F iltering in the Pr esence of Interference: The MSE of estimate b q of the form (3) is expressed in terms of (10) as J I ( W ) = tr E [ W ( H c q c + n ) − q W ( H c q c + n ) − q t ] = tr ( W RW t ) − 2 tr W H c E [ q c q t ] + c, (11) where c is gi ven in (5). 2) Nulling F ilter: The nulling spatial filter proposed in [25], [26] extends the LCMV approach by incorporating constraints on the optimization problem which directly remove the impact of correlated interference. It is defined as minimize tr [ W RW t ] subject to W H = I l W H I = 0 l × k , (12) which has the follo wing unique solution: W N L = [ I l 0 l × k ]( H t c R − 1 H c ) − 1 H t c R − 1 . (13) In view of (11), the MSE of filters W ∗∗ that satisfy the constraints of the optimization problem in (12) is given by J I ( W ∗∗ ) = tr ( W ∗∗ R ( W ∗∗ ) t ) − 2 tr W ∗∗ H c E [ q c q t ] + c = tr W ∗∗ ( H c Q c H t c + N ) | {z } R ( W ∗∗ ) t − c = tr ( W ∗∗ N ( W ∗∗ ) t ) . (14) Again, note that (14) implies that the cost function in (12) can be replaced by tr ( W N W t ) for the nulling filter , and thus the nulling filter may be expressed equi valently in terms of N instead of R , just as the LCMV filter in the interference-free case. The nulling filter is relev ant as it has sho wed remarkable improv ement in reconstruction performance over the LCMV filter in the presence of interfering sources [25], [26]. 3) Conditioning of the Measur ement Model in the Pr esence of Interference: Using the alternativ e expression of the nulling 1 W e note that [26] uses a slightly different notation of the forward model for interfering sources, where they are considered separately for each region of interest. In our case, they are combined into a single forward model for the interfering sources, yielding forward matrix H I . W e took that approach for clarity of the deriv ation of the proposed filter . 4 filter W N L = [ I l 0 l × k ]( H t c N − 1 H c ) − 1 H t c N − 1 , from (14) and similarly as for the LCMV filter , we ha ve that J I ( W N L ) = tr ( W N L N W t N L ) = l X i =1 λ i ( H t c N − 1 H c ) − 1 l × l . (15) While this expression cannot be analyzed as easily as (9), the key observation is that, in the presence of interference, the value of (15) for the nulling filter will be at least as large as the corresponding value of (9) for the LCMV filter in the interference-free case. This is the trade-off of further constraining the optimization problem in (12) compared with (7). In particular , the sensitivity of the nulling filter to the ill- conditioning of the forward model is essentially the same as of the LCMV filter . C. Challenging the Ill-Conditioning of the Measur ement Model One efficient way to lo wer sensitivity on ill-conditioning is to reduce the rank of the filter [20], [24], [37], [38], which is the approach we take in this paper . Specifically , we introduce a parameter r such that 1 ≤ r ≤ l is the rank of a reduced-rank filter W r ∈ X l × m r . The reduced-rank approach relaxes unit- gain constraint W H = I l of the LCMV and nulling filters, as the exact equality cannot be obtained in this case. Thus, in order to employ the corresponding constraint in the reduced- rank case, we take advantage of the MV -PURE framew ork [14]–[16] and insist that the reduced-rank filter is designed to introduce the smallest deviation from unit-gain condition among filters of rank at most r. More precisely , the proposed reduced-rank filter should satisfy: W • r ∈ P ι r := arg min W r ∈ X l × m r ∥ W r H − I l ∥ 2 ι , ι ∈ I , (16) for all ι , where I is the index set of all unitarily in v ariant norms. In essence, the above approach introduces parameter r - the rank of the matrix -, hoping that it can be selected to optimize certain cost function. As it will be sho wn in the next section, it is possible to select r such that the MSE of the resulting filter is minimized. In fact, it has been previously shown that this relaxation of unit-gain constraint deals ef ficiently with ill- conditioning, and it may e ven achiev e lower MSE than their full-rank counterparts [18], [24]. I V . P RO P O S E D S PA T I A L F I LT E R S I N P R E S E N C E O F I N T E R F E R E N C E In this and the follo wing section, we introduce the pro- posed filters which use the reduced-rank MV -PURE paradigm embodied in constraint (16). Unlike in Sections III-A and III-B, we be gin with the case where the interfering sources are explicitly considered since the proofs of theorems establishing filters for interference-free model can be obtained as special cases of the ones in presence of interference. A. Optimization Pr oblems W e propose a ne w filter as a solution of the following optimization problem parameterized by r : minimize J I ( W r ) subject to W r ∈ T ι ∈ I P ι r W r H I = 0 l × k , (17) where P ι r is defined in (16). Note that the relaxation of unit-gain constraint W H = I l used in (12) implies that equalities analogous to those in (14) do not hold for (17). For this reason, we introduce two additional versions of the proposed filter with cost functions tr ( W r RW t r ) and tr ( W r N W t r ) , respectiv ely , and the same constraints on W r as in (17), i.e., minimize tr ( W r RW t r ) subject to W r ∈ T ι ∈ I P ι r W r H I = 0 l × k , (18) and minimize tr ( W r N W t r ) subject to W r ∈ T ι ∈ I P ι r W r H I = 0 l × k , (19) where P ι r is defined in (16). T ogether , these three cost functions produce three different filters, unlike in the case of nulling filter , where all these cost functions are equi valent. Based on the proposed filters (17)-(19), our approach is then to select rank r which minimizes MSE. As it will be demonstrated in the next section, this strategy can be efficiently applied to all three v ersions of the filter produced by the three cost functions considered. In this way , the proposed filters are nontrivial generalizations of the nulling filter . B. Closed Algebraic F orms Theorem 1 below establishes closed algebraic form of the proposed filter obtained as the solution of the optimization problem (17). Then, Theorems 2 and 3 present closed algebraic forms of the proposed filter for the alternativ e cost functions tr ( W r RW t r ) and tr ( W r N W t r ) , respectiv ely . Theor em 1: For a given r such that 1 ≤ r ≤ l , the solution to optimization problem (17) is gi ven by W ⋆ r = P K (1) r P R ( G I ) ⊥ G † P R ( G I ) ⊥ R − 1 / 2 , (20) where • R − 1 / 2 is the unique positiv e definite matrix satisfying R − 1 / 2 R − 1 / 2 = R − 1 [31], • G := R − 1 / 2 H and G I := R − 1 / 2 H I , • P R ( G I ) ⊥ is the orthogonal projection matrix onto orthog- onal complement of range of G I , • P K (1) r is the orthogonal projection matrix onto subspace spanned by eigen vectors corresponding to δ (1) 1 ≤ · · · ≤ δ (1) r - the r smallest eigen values of the symmetric matrix K (1) = ( P R ( G I ) ⊥ G ) † P R ( G I ) ⊥ ( P R ( G I ) ⊥ G ) † t − 2 Q . (21) Its corresponding MSE is given by J I ( W ⋆ r ) = tr ( P K (1) r K (1) ) + c = r X i =1 δ (1) i + c, (22) 5 where c = tr ( Q ) . Pr oof: See Appendix B. Theor em 2: With notation as in Theorem 1, for a given r such that 1 ≤ r ≤ l , the solution to optimization problem (18) is giv en by W ⋆ r = P K (2) r P R ( G I ) ⊥ G † P R ( G I ) ⊥ R − 1 / 2 , (23) where P K (2) r is the orthogonal projection matrix onto subspace spanned by eigen vectors corresponding to δ (2) 1 ≤ · · · ≤ δ (2) r - the r smallest eigen v alues of the symmetric matrix K (2) = K (1) + 2 Q . (24) The MSE for this solution can be written as J I ( W ⋆ r ) = tr ( P K (2) r K (1) ) + c = r X i =1 δ (2) i − 2 tr ( P K (2) r Q ) + c, (25) where c = tr ( Q ) . Pr oof: See Appendix C. Theor em 3: For a given r such that 1 ≤ r ≤ l , the solution to optimization problem (19) is gi ven by: W ⋆ r = P K (3) r P R ( F I ) ⊥ F † P R ( F I ) ⊥ N − 1 / 2 , (26) where • N − 1 / 2 is the unique positi ve definite matrix satisfying N − 1 / 2 N − 1 / 2 = N − 1 , • F := N − 1 / 2 H and F I := N − 1 / 2 H I , • P R ( F I ) ⊥ is the orthogonal projection matrix onto orthog- onal complement of range of F I , • P K (3) r is the orthogonal projection matrix onto subspace spanned by eigen vectors corresponding to δ (3) 1 ≤ · · · ≤ δ (3) r - the r smallest eigen values of the symmetric matrix K (3) = ( P R ( F I ) ⊥ F ) † P R ( F I ) ⊥ ( P R ( F I ) ⊥ F ) † t . (27) The MSE of (26) is gi ven by J I ( W ⋆ r ) = tr ( P K (3) r K (4) ) + c = r X i =1 δ (3) i − tr ( P K (3) r Q ) + c, (28) where c = tr ( Q ) and K (4) = K (3) − Q . (29) Pr oof: See Appendix D. There are some observations with regards to the results of Theorems 1-3 that deserv e to be highlighted: Remark 1: • For no rank constraint, i.e., r = l , and based on Theorems 1-3, the nulling filter (13) is alternati vely written as W N L = P R ( G I ) ⊥ G † P R ( G I ) ⊥ R − 1 / 2 = P R ( F I ) ⊥ F † P R ( F I ) ⊥ N − 1 / 2 . (30) • In view of (30), the filters in (20), (23) and (26) differ only in the subspace that the estimate of the nulling filter is orthogonally projected onto. Namely , they are of the form: W ⋆ r = P K ( i ) r W N L , i = 1 , 2 , 3 . (31) • Furthermore, from (30), and using the fact that orthogonal projection matrices are both symmetric and idempotent [31], we may obtain alternative representations of matri- ces K (1) and K (3) as: K (1) = W N L RW t N L − 2 Q , (32) K (3) = W N L N W t N L , (33) with K (2) = K (1) + 2 Q = W N L RW t N L and K (4) = K (3) − Q = W N L N W t N L − Q . • It is seen from the previous two points that the proposed filters, if cast in the form (31), require computation of the nulling filter along with the orthogonal projection onto a minor subspace of one of K ( i ) ∈ R l × l matrices for i = 1 , 2 , 3 , whose complexity is O ( l 3 ) using na ¨ ıve im- plementation [39]. Thus, the computational effort needed to compute the proposed filters in the form (31) is of the same order as of the nulling filter . W e should also note that typically l ≪ m in EEG/MEG source reconstruction. • The filter (20) is expressed in terms of Q through K (1) , whereas the filters in (23) and (26) do not depend explicitly on Q . • Q may be estimated as b Q = [ b Q c ] l × l , where b Q c is obtained through [18, Lemma 1] Q c = ( H t c R − 1 H c ) − 1 − ( H t c N − 1 H c ) − 1 , (34) in which R and N are replaced by the finite sample estimates. • The MSE in (22), (25) and (28) yield a natural rank- selection method applicable to all forms of the proposed filter by selecting r which minimizes them. W e note en passant that (22) depends on r only through eigen values of the symmetric matrix K (1) , while (25) depends on r through eigenv alues of the positive semidefinite matrix K (2) and tr ( P K (2) r Q ) . Similarly , (28) depends on r through eigenv alues of the positive semidefinite matrix K (3) and tr ( P K (3) r Q ) . • Finally , as seen in [36] for the LCMV filter , both the nulling and the proposed filters are expected to show differences in performance whether R or N is used in the presence of modeling and source localization errors. W e also note that [26] considers only the expression of the nulling filter in terms of R , but not N . C. Interactions Among Sources Based on MV AR Model One of possible applications of the nulling filter and the filters proposed in this section is in identifying interactions among sources of interest by means of measuring causal dependencies among their reconstructed acti vities, as dis- cussed in [26], [40]. In particular , in [26] the authors have used the partial directed coherence (PDC) [27] of directed causal dependencies based on fitting multiv ariate autoregres- siv e (MV AR) model to the reconstructed activity of sources of interest. As the MV AR model is inherently sensitiv e to linear mixing among time series considered, it is important to obtain the estimate of activity of sources of interest which is to the 6 largest possible extent free from contamination of interfering sources. Indeed, both nulling and filters proposed in this section produce interference-free estimates, as from (13), (20), (23) and (26) we have that: W N L y = W N L ( H c q c + n ) = W N L [ H H I ][ q t q t I ] t + H b q b + n m = q + W N L H b q b + W N L n m | {z } reconstructed noise , (35) and W ⋆ r y = W ⋆ r ( H c q c + n ) = W ⋆ r [ H H I ][ q t q t I ] t + H b q b + n m = P K ( i ) r q + W ⋆ r H b q b + W ⋆ r n m | {z } reconstructed noise , i = 1 , 2 , 3 , (36) respectiv ely . Then, (35) and (36) show that the v alue of r selected to minimize the MSE of W ⋆ r y (which includes W N L y as a special case for r = l ) introduces a trade-off between the dimension of subspace the signal of interest q is orthogonally projected onto and the power of the reconstructed noise. Thus, if q can be well-fit into r -dimensional subspace, we shall expect more accurate MV AR model fitted to the reconstructed activity due to efficient suppression of noise reconstructed by the filter of rank r compared to the full-rank nulling filter . Clearly , the abov e analysis could be used for other applica- tions of the nulling and proposed filters as well. Howe ver , their effecti veness depends on the assumption that all interfering sources have been identified and correctly localized. This may not always be the case in practice, as the following section will describe. D. Extension to P atch Constraints As discussed in [26], in certain scenarios it may be dif ficult to determine exact locations of interfering brain sources. Instead, whole patches of cortical and subcortical regions may be considered as contributing correlated activity to the measured signal y . Then, a complete removal of its impact on reconstructed activity would require imposing constraints W H I = 0 l × k for a large number of interfering sources k . This would result in very tight constraints on the optimization problem in (12) and, consequently , lar ge MSE of the resulting filter . For such cases, a heuristic solution proposed in [26] is to increase the number of degrees of freedom av ailable for the filter by relaxing constraint W H I = 0 l × k . This is achiev ed by replacing the leadfield matrix of interfering sources H I with its best approximation H I s of rank s ≤ k . More precisely , the nulling constraints are replaced with so-called patch constraints [26] W H I s = 0 l × k , (37) where s is a rank of the approximation such that 1 ≤ s ≤ k . This relaxation reduces the number of degrees of freedom needed for nulling constraints from k to s at the price of having them only approximately enforced. In fact, using patch constraints (37) in place of nulling constraints W H I = 0 l × k yields significant differences to the behaviour of the nulling filter introduced in Section III-B2 and filters proposed in this section. Specifically , if we con- sider patch constraints (37) in place of nulling constraints W H I = 0 l × k for the nulling filter and the filters introduced in Theorems 1-3, then we can pose the following optimization problems: minimize tr [ W RW t ] subject to W H = I l W H I s = 0 l × k , (38) and minimize tr [ W N W t ] subject to W H = I l W H I s = 0 l × k , (39) which now yield two different filters. This is due to W ∗∗ s satisfying constraints of these optimization problems, hence we have that W ∗∗ s H c = W ∗∗ s [ H H I ] = [ I l 0 l × k ] , thus in particular , the third equality in (14) does not hold. Furthermore, the proof of Theorem 1 no longer holds at (71), because it turns out that Z r G c E [ q c q t ] = Z r [ G G I ] E [ q c q t ] = [ Z r G 0 l × k ] E [ q c q t ] . (40) Therefore, the optimization problem ( cf . (17)) minimize J I ( W r ) subject to W r ∈ T ι ∈ I P ι r W r H I s = 0 l × k , (41) with P ι r defined in (16), cannot be solved using methods provided in the proof of Theorem 1. For the same reason, the MSE cost function J I ( W r ) cannot be ev aluated exactly for the solutions of the following optimization problems ( cf . (18) and (19)): minimize tr ( W r RW t r ) subject to W r ∈ T ι ∈ I P ι r W r H I s = 0 l × k , (42) and minimize tr ( W r N W t r ) subject to W r ∈ T ι ∈ I P ι r W r H I s = 0 l × k . (43) Nev ertheless, closed algebraic forms of solutions of op- timization problems (42) and (43) can be obtained, as the following theorem shows. Theor em 4: W ith notation as in Theorems 2 and 3, the closed algebraic forms of optimization problems (42) and (43) are, respectiv ely W ⋆ r = P K (2) r P R ( G I s ) ⊥ G † P R ( G I s ) ⊥ R − 1 / 2 , (44) and W ⋆ r = P K (3) r P R ( F I s ) ⊥ F † P R ( F I s ) ⊥ N − 1 / 2 , (45) where G I s := R − 1 / 2 H I s and F I s := N − 1 / 2 H I s . In particular , for r = l , the solutions to optimization problems (38) and (39) are obtained as W ⋆ r = P R ( G I s ) ⊥ G † P R ( G I s ) ⊥ R − 1 / 2 , (46) 7 and W ⋆ r = P R ( F I s ) ⊥ F † P R ( F I s ) ⊥ N − 1 / 2 , (47) respectiv ely . Pr oof: From Fact 1 gi ven in the Appendix A one has that H I s = [ U H I ] m × s [ Σ H I ] s × s ([ V H I ] k × s ) t , where U H I Σ H I V t H I is a singular v alue decomposition of H I . Therefore, R ( H I s ) ⊂ R ( H I ) , and consequently also R ( G I s ) ⊂ R ( G I ) and R ( F I s ) ⊂ R ( F I ) . Hence, R ( G ) ∩ R ( G I s ) = { 0 } and R ( F ) ∩ R ( F I s ) = { 0 } . On this basis, the parts of the proofs of Theorems 2 and 3 needed to obtain closed algebraic form can be reproduced to obtain (44) and (45), respectiv ely . Expressions (46) and (47) follow from (44) and (45), respectively , as special cases for r = l. In the case of patch-constraints, we propose to use the filters as given in Theorem 4, then the rank of the filter is selected to approximately minimize its output MSE. This is achiev ed by using expressions (25) of Theorem 2 and (28) of Theorem 3, replacing G I with G I s in the former case and F I with F I s in the latter . W e will employ this approach in the numerical simulations considered in Section VI. V . P RO P O S E D S PA T I A L F I LT E R S F O R I N T E R F E R E N C E - F R E E M O D E L Correspondingly to optimization problems (17)-(19) con- sidered in Section IV, we propose the following filters as solutions of the optimization problems parameterized by r for the case of the interference-free model in (1): minimize J F ( W r ) subject to W r ∈ T ι ∈ I P ι r , (48) minimize tr ( W r RW t r ) subject to W r ∈ T ι ∈ I P ι r , (49) minimize tr ( W r N W t r ) subject to W r ∈ T ι ∈ I P ι r , (50) where P ι r is defined in (16). The follo wing theorem establishes the closed algebraic forms of filters defined through (48)-(50). Theor em 5: With notation as in Theorems 1-3: 1) For a gi ven r such that 1 ≤ r ≤ l , the solution to optimization problem (48) is given by W ⋆ r = P L (1) r G † R − 1 / 2 , (51) where P L (1) r is the orthogonal projection matrix onto subspace spanned by eigenv ectors corresponding to γ (1) 1 ≤ · · · ≤ γ (1) r - the r smallest eigen values of the symmetric matrix L (1) = G † ( G † ) t − 2 Q . (52) Then, the MSE is given by J F ( W ⋆ r ) = tr ( P L (1) r L (1) ) + c = r X i =1 γ (1) i + c, (53) where c = tr ( Q ) . 2) The solution to optimization problem (49) is given by W ⋆ r = P L (2) r G † R − 1 / 2 , (54) where P L (2) r is the orthogonal projection matrix onto subspace spanned by eigenv ectors corresponding to γ (2) 1 ≤ · · · ≤ γ (2) r - the r smallest eigen values of the symmetric matrix L (2) = L (1) + 2 Q = G † ( G † ) t . (55) In this case, the MSE is gi ven by J F ( W ⋆ r ) = tr ( P L (2) r L (1) ) + c = r X i =1 γ (2) i − 2 tr ( P L (2) r Q ) + c. (56) 3) The solution to optimization problem (50) is given by W ⋆ r = P L (3) r F † N − 1 / 2 , (57) where P L (3) r is the orthogonal projection matrix onto subspace spanned by eigenv ectors corresponding to γ (3) 1 ≤ · · · ≤ γ (3) r - the r smallest eigen values of the symmetric matrix L (3) = F † ( F † ) t . (58) In this case, the MSE is gi ven by J F ( W ⋆ r ) = tr ( P L (3) r L (4) ) + c = r X i =1 γ (3) i − tr ( P L (3) r Q ) + c, (59) where L (4) = L (3) − Q = F † ( F † ) t − Q . (60) Pr oof: The proof follows immediately from the ones of Theorems 1-3 by setting G I and F I to be null matrices, implying P R ( G I ) ⊥ = P R ( F I ) ⊥ = I m . A few comments on Theorem 5 are in place here, most of which are analogous to those made in Remark 1 below Theorems 1-3: Remark 2: • The form (51) has been obtained and analyzed in [16], [18]. The forms (54) and (57) are newly proposed. • For no rank constraint, i.e., r = l , Theorem 5 yields alternativ e forms of the LCMV filter (8) as W LC M V = G † R − 1 / 2 = F † N − 1 / 2 . (61) • In view of (61), the filters in (51), (54) and (57) differ only in the subspace that the estimate of the LCMV filter is orthogonally projected onto. Namely , they are of the form: W ⋆ r = P L ( i ) r W LC M V , i = 1 , 2 , 3 . (62) • Furthermore, from (61) we may obtain alternativ e repre- sentations of matrices L (1) and L (3) as: L (1) = W LC M V RW t LC M V − 2 Q , (63) L (3) = W LC M V N W t LC M V , (64) with L (2) = L (1) + 2 Q = W LC M V RW t LC M V and L (4) = L (3) − Q = W LC M V N W t LC M V − Q . 8 • It is seen from the previous two points that the proposed filters, if cast in the form (62), require computation of the LCMV filter along with the orthogonal projection onto a minor subspace of one of L ( i ) ∈ R l × l matrices for i = 1 , 2 , 3 , which complexity is O ( l 3 ) using na ¨ ıve im- plementation [39]. Thus, the computational effort needed to compute the proposed filters in the form (62) is of the same order as of the LCMV filter . A minor subspace tracking method [41] has been employed in a preliminary study [42] for an online implementation of filter in (51). • W e note that the filter (51) is expressed in terms of the cov ariance matrix Q of signal to be reconstructed through L (1) , whereas the filters in (54) and (57) do not depend explicitly on Q . • From [18, Lemma 1] we have Q = ( H t R − 1 H ) − 1 − ( H t N − 1 H ) − 1 . (65) Then, an estimate of Q can be obtained through (65) by replacing R and N with their finite samples estimates. • The MSE in (53), (56) and (59) yield natural rank- selection method applicable to all forms of the proposed filter by selecting r which minimizes them. It should be highlighted that (53) depends on r only through eigen- values of the symmetric matrix L (1) , while (56) depends on r through eigen values of the positiv e semidefinite matrix L (2) and tr ( P L (2) r Q ) . Similarly , (59) depends on r through eigenv alues of the positive semidefinite matrix L (3) and tr ( P L (3) r Q ) . V I . N U M E R I C A L E X A M P L E S A. Simulation F ramework In order to facilitate reproducibility of our research we provide (jointly with this paper) a comprehensiv e simulation framew ork that allows for estimation of error of signal re- construction for a number of spatial filters applied to MEG or EEG signals. It is av ailable for download at https://github .com/ IS- UMK/supFunSim.git . This framew ork may be used directly from a single org-mode file ( supFunSim.org ) or as a set of MA TLAB scripts. B. Signals in Sour ce Space Generation of time series in source space for bioelec- trical acti vity of brains’ cortical and subcortical regions is conducted using separate MV AR models for the activity of interest q ( sim_sig_SrcActiv ), the interfering activity q I ( sim_sig_IntNoise ), and the biological background noise q b ( sim_sig_BcgNoise ). Additionally , we also add Gaussian uncorrelated noise n m to the time series in sensor space to mimic measurement noise and all the remaining activity of the brain ( sim_sig_MesNoise ). Both q and q b are simulated using independent, random and stable MV AR models of order 6. MV AR modeling and fitting was performed using MV ARICA [43] and ARfit [44] packages. During generation of each MV AR model for q , the coefficient matrix was multiplied by a mask matrix that has 80% of its off-diagonal elements equal to zero. All the remain- ing diagonal and of f-diagonal masking coefficients are equal to one. Such procedure allows us to define specific directed dependencies between activity of sources of interest. These dependencies are measured with the PDC which operates on coefficients deri ved from the MV AR model fitted to the signal. W e refer the reader to [27] for detailed description of the PDC. Activity of each of the q I sources is generated as negati ve of the q with added Gaussian noise of the same power as the q signal itself. In this way , we get the q I signal to be correlated with q . C. V olume Conduction Model and Leadfields In order to obtain measurements (i.e., time series in sensor space), we first use FieldT rip (FT) toolbox [9] for generation of v olume conduction model (VCM) and leadfields. VCM is prepared ( ft_prepare_headmodel ) using DIPOLI method [45] that is applied to three triangulated surface meshes ( tess_innerskull.mat , tess_outerskull.mat and tess_head.mat ) repre- senting the outer surfaces of brain, skull, and scalp, and they correspond to those provided as sample/reference data in the Brainstorm (BS) toolbox [10]. Prior to VCM generation, we transform coordinates of vertices of each mesh to match spatial orientation and length unit of the EEG system used. Additionally , for the results presented herein, we arbitrarily select: • Hydr oCel Geodesic Sensor Net utilizing 128 channels as EEG cap layout. • Regions of interest (R OIs) corresponding to specific cor- tex patches, and whose geometry is reconstructed and parcellated from the detailed cortical surface by means of the BS toolbox ( tess_fs_tcortex_15000V.mat ). W e selected R OIs by their anatomical description in Destrieux and Desikan-Killiany atlases [46], [47]. Sur- face parcellation was prepared using freely a vailable FreeSurfer Software Suite [48] such that each ROI is comprised of a triangular mesh and fully characterized by its nodes, from which candidates for source position and orientation (orthogonal to the mesh) will be randomly drawn. • Both thalami modeled jointly as a single triangulated mesh containing node candidates for a random selection of subcortical bioelectrical activity . Also here, the ori- entation of sources is chosen as orthogonal to the mesh surface. D. Signal in Sensor Space The forw ard model solution is based on the boundary ele- ment method (BEM) proposed in [49], which is implemented in the FT toolbox. Based on this solution, we obtain EEG measurements y using leadfields calculated for each source defined by its position and orientation. W e note that for large power of q I , (10) realistically represents signal y , while the lower the power of q I , the more adequate (1) becomes. E. Simulations Settings and Configuration The simulations framework provided with this paper en- ables to freely change essential simulation parameters. These include, in particular: 9 Fig. 1: Example of simulation setup sho wing R OIs and vertices representing sources of interest q (black), interference q I (red), and background activity q b (blue). Depending on the simulation setup, ROIs and vertices can be fixed or randomly selected. Perturbation of source location and direction can also be introduced. The perturbed sources are indicated using dashed line. • signal to interference noise ratio (SINR) defined as the ratio of power of q signal to the power of q I signal, both projected onto sensor space, • signal to biological noise ratio (SBNR) defined as the ratio of power of q signal to the power of q b signal, both projected onto sensor space, • signal to measurement noise ratio (SMNR) defined as the ratio of power of q signal projected onto sensor space to the power of n m signal, • number of cortical ROIs to be randomly selected, • number of sources (of each type, per any of the cortical and subcortical regions described pre viously). For the sake of numerical results presented in this section, we selected l = 13 sources of interest, k = 27 sources of interfering acti vity , p = 27 sources of background activity ( 7 on the cortex, and remaining 20 in subcortical regions), • number of simulation runs where a ne w MV AR model is generated in each run, • number of independent realizations based on each gener- ated MV AR model (trials), • number of time samples per trial, • usage of original or perturbed leadfield matrices. In the latter case, leadfield vectors of sources of interest (columns of H ) and of interfering sources (columns of H I ) may be generated such that: – position of each source is shifted randomly within a cube of a gi ven side length, centered at the original source location, – orientation of each source is shifted randomly within a prescribed azimuth and elev ation intervals, both centered at the original source orientation. In the numerical example presented in this section, we con- sidered patch-constraints, where the rank s of H I s was set to 8 , which allowed us to keep approximately 30% of singular values of H I . These parameters are also adjustable in the proposed framework. Moreover , we also e valuated a perturbed leadfield matrix of sources of interest, where each source position was allowed to be shifted randomly within a cube of 20 mm side length, with azimuth and ele vation of sources shifted by π / 32 rad. The resulting perturbed leadfield matrix of sources of interest was denoted H P E . For each combination of SNRs we conducted 1000 sim- ulation runs. In each run, the location of the sources were randomly chosen in accordance to the scheme described in Section VI-C and a new MV AR model was generated. Each simulation run contained a realization of the MV AR process with 1000 samples, where: • The first half (i.e., the first 500 samples) of each trial is interpreted as pre-task/stimulus activity and is comprised of q b signal projected onto sensor space along with n m signal. The estimate of noise cov ariance matrix N is obtained from this section of the signals as a finite sample estimate. • The second half of each trial is comprised of all signals, i.e., q , q I , q b signals, projected onto sensor space along with n m signal. The estimate of signal cov ariance matrix R is obtained from this second section of the signals as a finite sample estimate. F . P erformance Evaluation 1) F ilters Evaluated: W e selected for comparison the fol- lowing spatial filters: • The LCMV filter , expressed as both W LC M V ( R ) = ( H t R − 1 H ) − 1 H t R − 1 , and W LC M V ( N ) = ( H t N − 1 H ) − 1 H t N − 1 , • The nulling filter in the form proposed in the work [26], namely W N L = [ I l 0 l × k ]( H t c R − 1 H c ) − 1 H t c R − 1 , cf. (13). • The theoretically MSE-optimal MMSE (W iener) filter , defined as W F − M M S E = QH t R − 1 , for the interference-free model, and W I − M M S E = E [ q q t c ] H t c R − 1 , for the model in presence of interference, • The zero-forcing filter , defined as W Z F = H † , 10 • The eigenspace-LCMV filters [5] exploiting projection of the signal co variance matrix R onto its principal subspace of the forms W E I G − LC M V ( R ) = W LC M V ( R ) P R sig , and W E I G − LC M V ( N ) = W LC M V ( N ) P R sig , where P R sig is the orthogonal projection matrix onto subspace spanned by eigen vectors corresponding to λ 1 ≥ · · · ≥ λ sig - the sig largest eigenv alues of R , where sig is the dimension of signal subspace. In practice, it is difficult to estimate signal subspace dimension [5]. Here, we selected its optimal value, i.e., the v alue of sig that matches the number of active sources. 2) P erformance Measures: W e considered the following performance measures: • MSE , defined as the squared Euclidean distance between the signal of interest and its reconstruction. For better clarity , both signal and its reconstruction were normalized prior to distance measurement. For all 13 signals of interest, the result is av eraged across 500 time samples and 1000 simulation runs. • PDC , defined as a correlation coef ficient between original profiles of absolute PDC values and those based on MV AR model fitted to the reconstructed signal. The result is av eraged across 13 signals of interest (each comprised of 500 samples) and 1000 simulation runs. The MSE and PDC performance measures are ev aluated for: • filters using original leadfield matrix of sources of interest H : MSE ( H ) and PDC ( H ), • filters using perturbed leadfield matrix of sources of interest H P E : MSE ( H P E ) and PDC ( H P E ). The abov e performance measures, along with others not reported here, are implemented in section tg_s06_Error_evaluation of the provided simulation framew ork. 3) Numerical Results: Due to large number of filters con- sidered, we present numerical results in T ables I-VI on the ne xt page. The results presented therein show that for SINR=0 dB, the nulling filters outperform filters designed for interference- free model, which reflects observed signal less accurately in such settings. On the other hand, for higher values of SINR, the interference-free model gradually becomes the preferred one, and filters designed for this model achieve comparable or better performance than their counterparts deriv ed for the model in presence of interference. Moreov er , the MSE and PDC performance measures used in simulations demonstrate that fidelity in reconstruction perfor- mance is related to accuracy of reconstructed PDC profiles, but there is no direct linkage between them. Indeed, the eigenspace-LCMV filters produce reconstruction particularly ill-suited to this aim. On the other hand, despite the fact that the proposed filters use mean-square error as the cost function, they also perform well in terms of PDC measure, which encourages their use in in vestigating interactions among sources based on MV AR model such as PDC or DTF [28]. As discussed in Section IV -C, this is due to efficient cancellation of interfering acti vity , which enables more accurate fitting of the MV AR model to the reconstructed activity of sources of interest. W e refer interested readers to [26], where this particular application of nulling filters is discussed in more detail. Unsurprisingly , the performance of most of the ev aluated filters is negativ ely affected by using the perturbed leadfield matrix of sources of interest H P E in place of the original leadfield matrix H , for both MSE and PDC measures. In particular , degradation in MSE performance of the proposed filters is similar as in other filters ev aluated, which is in line with numerical results of [16], [18], especially considering that highly noisy settings (SMNR=10 dB and SBNR=0 dB) are used to obtain results presented in T ables I-VI. In the case of PDC, the performance of the proposed filters is very similar for H or H P E . This intriguing fact should enhance usability of the proposed filters in the area of inv estigating interactions among sources based on MV AR model. W e should also emphasize that the presented results are for illustrativ e purpose and we invite readers to test other settings. G. Event-Related Experiments In the simulation framework proposed in this section we did not insist on a specific type of EEG/MEG measurements. In particular , the proposed framework is applicable to all types of EEG/MEG measurements, including event-related EEG/MEG experiments, where one may be interested in reconstructing activity which is evok ed (phase-locked) or induced (non-phase locked) to the stimulus. In such experiments, we usually have stationarity in the covariance, but not in the mean [7], due to presence of both e vok ed and induced part of the brain response to the stimuli [50]. Then, if one is interested in reconstruction of ev oked (phase-locked) response, we recommend to use the filter minimizing directly the power of reconstructed noise, i.e., the filter defined in (26), if presence of interference is assumed. If the interference-free model is considered, then we recommend the filter in (57). On the other hand, if reconstruction of induced (non-phase-locked) response is of interest, one may simply subtract the phase-locked activity beforehand (see, e.g., [26]). V I I . C O N C L U S I O N S A N D F U T U R E W O R K W e proposed two families of no vel spatial filters parameter- ized by filter rank. W e also provided a method to select this parameter such that the MSE of the filter output is minimized. The proposed filters extend the MV -PURE framework [14]– [18]. The approach of the proposed filters may be extended further with sensor space transformations, such as projecting R onto its principal subspace [5], [38]. W e emphasize that the proposed filters can be applied in other areas of array signal processing such as wireless communications [16]. Indeed, such applications may stimulate further dev elopment of these filters, by deriving their online implementations or relaxing the nulling constraints (see, e.g., [42], [51] for related work). 11 Filter MSE ( H ) MSE ( H P E ) LCMV R 1.47 ± 0.18 1.52 ± 0.14 LCMV N 1.35 ± 0.25 1.34 ± 0.24 NL 1.45 ± 0.20 1.51 ± 0.15 MMSE 1.38 ± 0.19 1.45 ± 0.15 MMSE INT 1.21 ± 0.23 1.34 ± 0.18 ZF 1.53 ± 0.20 1.51 ± 0.20 EIG LCMV R 1.28 ± 0.22 1.31 ± 0.19 EIG LCMV N 1.37 ± 0.26 1.35 ± 0.24 MVP MSE 1.36 ± 0.18 1.43 ± 0.14 MVP R 1.32 ± 0.17 1.43 ± 0.14 MVP N 1.12 ± 0.26 1.16 ± 0.24 MVP NL MSE 1.11 ± 0.14 1.26 ± 0.12 MVP NL R 1.14 ± 0.17 1.32 ± 0.17 MVP NL N 0.86 ± 0.15 0.95 ± 0.15 T ABLE I: Spatial filters MSE performance for SMNR=10 dB, SBNR=0 dB and SINR=0 dB . The best result is in bold red and top 5 results are in bold. Filter MSE ( H ) MSE ( H P E ) LCMV R 1.34 ± 0.21 1.42 ± 0.16 LCMV N 1.18 ± 0.28 1.19 ± 0.25 NL 1.39 ± 0.21 1.47 ± 0.16 MMSE 1.19 ± 0.21 1.31 ± 0.16 MMSE INT 1.10 ± 0.25 1.27 ± 0.19 ZF 1.44 ± 0.22 1.42 ± 0.21 EIG LCMV R 1.08 ± 0.25 1.15 ± 0.21 EIG LCMV N 1.12 ± 0.28 1.13 ± 0.25 MVP MSE 1.13 ± 0.17 1.27 ± 0.14 MVP R 1.10 ± 0.15 1.26 ± 0.14 MVP N 0.80 ± 0.21 0.88 ± 0.20 MVP NL MSE 0.98 ± 0.12 1.18 ± 0.11 MVP NL R 1.07 ± 0.19 1.27 ± 0.19 MVP NL N 0.81 ± 0.14 0.90 ± 0.14 T ABLE II: Spatial filters MSE performance for SMNR=10 dB, SBNR=0 dB and SINR=5 dB . The best result is in bold red and top 5 results are in bold. Filter MSE ( H ) MSE ( H P E ) LCMV R 1.22 ± 0.24 1.34 ± 0.17 LCMV N 1.07 ± 0.29 1.09 ± 0.26 NL 1.36 ± 0.22 1.45 ± 0.17 MMSE 1.02 ± 0.22 1.21 ± 0.17 MMSE INT 1.04 ± 0.26 1.24 ± 0.19 ZF 1.39 ± 0.23 1.38 ± 0.21 EIG LCMV R 0.92 ± 0.26 1.04 ± 0.21 EIG LCMV N 0.93 ± 0.28 0.98 ± 0.24 MVP MSE 0.93 ± 0.15 1.13 ± 0.13 MVP R 0.94 ± 0.14 1.15 ± 0.13 MVP N 0.63 ± 0.15 0.72 ± 0.15 MVP NL MSE 0.93 ± 0.12 1.14 ± 0.11 MVP NL R 1.04 ± 0.19 1.26 ± 0.20 MVP NL N 0.80 ± 0.14 0.89 ± 0.14 T ABLE III: Spatial filters MSE performance for SMNR=10 dB, SBNR=0 dB and SINR=10 dB . The best result is in bold red and top 5 results are in bold. Filter PDC ( H ) PDC ( H P E ) LCMV R 0.73 ± 0.08 0.75 ± 0.05 LCMV N 0.65 ± 0.11 0.68 ± 0.09 NL 0.72 ± 0.09 0.74 ± 0.06 MMSE 0.58 ± 0.20 0.53 ± 0.20 MMSE INT 0.74 ± 0.08 0.71 ± 0.07 ZF 0.55 ± 0.13 0.58 ± 0.11 EIG LCMV R 0.20 ± 0.15 0.18 ± 0.14 EIG LCMV N 0.26 ± 0.17 0.25 ± 0.16 MVP MSE 0.80 ± 0.04 0.81 ± 0.03 MVP R 0.79 ± 0.06 0.79 ± 0.05 MVP N 0.75 ± 0.09 0.75 ± 0.07 MVP NL MSE 0.83 ± 0.03 0.83 ± 0.02 MVP NL R 0.84 ± 0.03 0.83 ± 0.03 MVP NL N 0.84 ± 0.03 0.83 ± 0.03 T ABLE IV: Spatial filters PDC performance for SMNR=10 dB, SBNR=0 dB and SINR=0 dB . The best result is in bold red and top 5 results are in bold. Filter PDC ( H ) PDC ( H P E ) LCMV R 0.76 ± 0.08 0.77 ± 0.05 LCMV N 0.72 ± 0.10 0.74 ± 0.08 NL 0.73 ± 0.09 0.75 ± 0.06 MMSE 0.60 ± 0.20 0.53 ± 0.20 MMSE INT 0.77 ± 0.08 0.72 ± 0.06 ZF 0.60 ± 0.12 0.63 ± 0.10 EIG LCMV R 0.27 ± 0.18 0.23 ± 0.17 EIG LCMV N 0.35 ± 0.19 0.32 ± 0.18 MVP MSE 0.83 ± 0.03 0.82 ± 0.03 MVP R 0.83 ± 0.04 0.82 ± 0.03 MVP N 0.83 ± 0.05 0.82 ± 0.04 MVP NL MSE 0.84 ± 0.02 0.83 ± 0.02 MVP NL R 0.84 ± 0.03 0.83 ± 0.03 MVP NL N 0.84 ± 0.03 0.83 ± 0.03 T ABLE V: Spatial filters PDC performance for SMNR=10 dB, SBNR=0 dB and SINR=5 dB . The best result is in bold red and top 5 results are in bold. Filter PDC ( H ) PDC ( H P E ) LCMV R 0.78 ± 0.07 0.78 ± 0.05 LCMV N 0.76 ± 0.09 0.77 ± 0.07 NL 0.74 ± 0.09 0.75 ± 0.06 MMSE 0.61 ± 0.20 0.51 ± 0.22 MMSE INT 0.78 ± 0.07 0.73 ± 0.06 ZF 0.63 ± 0.12 0.65 ± 0.10 EIG LCMV R 0.32 ± 0.20 0.27 ± 0.19 EIG LCMV N 0.42 ± 0.21 0.35 ± 0.19 MVP MSE 0.84 ± 0.03 0.83 ± 0.02 MVP R 0.85 ± 0.03 0.83 ± 0.03 MVP N 0.86 ± 0.03 0.84 ± 0.03 MVP NL MSE 0.84 ± 0.02 0.83 ± 0.02 MVP NL R 0.84 ± 0.03 0.83 ± 0.03 MVP NL N 0.84 ± 0.03 0.83 ± 0.03 T ABLE VI: Spatial filters PDC performance for SMNR=10 dB, SBNR=0 dB and SINR=10 dB . The best result is in bold red and top 5 results are in bold. 12 W e also dev eloped open-source MA TLAB simulation plat- form for ev aluation of efficienc y of spatial filters in recon- struction of brain activity from EEG/MEG measurements. This framew ork may be used directly from a single org-mode file ( supFunSim.org ) or as a set of MA TLAB scripts. Jupyter version is also planned and a detailed description of the framew ork will be reported in a future publication. It is av ailable online at https://github.com/IS- UMK/supFunSim.git and may be extended according to users’ needs. For example, various forms of regularization may be used to obtain better conditioning of R or N , especially for small-sample size (see, e.g., [52]). Such impro vement over the currently implemented finite sample estimate of the co variance matrices can then be used across all implemented filters. A P P E N D I X A K N OW N R E S U LT S U S E D For conv enience, let us recall that in this paper we assume that all eigen- and singular value decompositions considered hav e their eigen- and singular v alues org anized in nonincreas- ing order . F act 1 (Eckart-Y oung-Schmidt-Mir sky Theor em [53]): Let A ∈ R m × n be a given matrix of r k ( A ) = a and let us set rank constraint r < a. Then, one has: X S r ∈ \ ι ∈ I arg min X r ∈X m × n r ∥ X r − A ∥ ι , (66) if X S r is of the follo wing form: X r = [ U A ] m × r [ Σ A ] r × r ([ V A ] n × r ) t , (67) where A = U A Σ A V t A is a singular value decomposition of A . Further , X S r is a minimizer if and only if it is obtained in this way . F act 2 ( [54, p.113]): Let A ∈ R m × n be a giv en matrix and let the subspace S ⊂ R n be gi ven. Then, for any b ∈ R m , X b is the minimum-norm least-squares solution of A x = b , where x ∈ S , if and only if X = P s ( AP s ) † , where P s is the orthogonal projection matrix onto S. F act 3 ( [55]): Let C ∈ R n × n , D ∈ R n × n be symmetric matrices. Denoting by c 1 ≥ c 2 ≥ · · · ≥ c n and d 1 ≥ d 2 ≥ · · · ≥ d n the eigen v alues of C and D , respectiv ely , one has tr ( C D ) ≤ n X i =1 c i d i . (68) The equality can be attained. 2 Remark 3: With notation as in Fact A-3, we have equi va- lently: tr C ( − D ) = − tr ( C D ) ≥ − n X i =1 c i d i . (69) 2 The work [55] gives explicit form of eigenv alue decompositions of C and D for which equality can be attained. A P P E N D I X B P RO O F O F T H E O R E M 1 a) Change of variables: Let us first consider G := R − 1 / 2 H and G I := R − 1 / 2 H I along with G c := [ G G I ] and Z r := W r R 1 / 2 . W e note that Z r G = W r H and Z r G I = W r H I and consequently Z r G c = [ Z r G Z r G I ] = [ W r H W r H I ] = W r H c . W e also note that r k ( G ) = l , r k ( G I ) = k , r k ( G c ) = l + k and Z r ∈ X l × m r , as multiplication by in vertible matrix does not change rank of matrix [31]. Therefore, the optimization problem (17) can be recast in vie w of (11) and (16) in terms of these new variables equiv alently as: minimize ∥ Z r ∥ 2 F − 2 tr Z r G c E [ q c q t ] subject to Z r ∈ \ ι ∈ I arg min Z r ∈ X l × m r ∥ Z r G − I l ∥ 2 ι Z r G I = 0 l × k . (70) In particular , we note that the constraint Z r G I = 0 l × k implies that Z r G c E [ q c q t ] = Z r [ G G I ] E [ q c q t ] = [ Z r G 0 l × k ] E [ q c q t ] = Z r GQ , (71) which will be useful later on, with Q = E [ q q t ] . b) F easible set: In this part we reformulate the definition of feasible set of Z r satisfying constraints of optimization problem (70). Regarding the first constraint, we note that, from Fact 1 gi ven in the Appendix A, we have \ ι ∈ I arg min X r ∈ X l × l r ∥ X r − I l ∥ ι = Λ r Λ t r , (72) where Λ r = [ Λ ] l × r for an orthogonal matrix Λ ∈ R l × l . W e further note that equation Z r G = Λ r Λ t r is solvable with respect to Z r , since, e.g., Λ r Λ t r G † is a particular solution. Thus, Z r satisfies Z r ∈ \ ι ∈ I arg min Z r ∈ X l × m r ∥ Z r G − I l ∥ 2 ι if and only if Z r G = Λ r Λ t r for certain Λ r ∈ R l × r satisfying Λ t r Λ r = I r . Moreover , denoting z t r i to be the i -th column of Z t r for i = 1 , . . . , l , and upon noticing that the second constraint Z r G I = 0 l × k is equiv alent to z t r i ∈ N ( G t I ) = R ( G I ) ⊥ ⊂ R m for i = 1 , . . . , l , it is seen that the constraints in (70) can be equiv alently cast as Z r G = Λ r Λ t r z t r i ∈ R ( G I ) ⊥ , i = 1 , . . . , l . (73) In particular, from (71) we obtain that it is sufficient to consider only minimum-norm solutions of (73), as the cost function in (70) is, in vie w of (71), such that ∥ Z ⋆ r ∥ 2 F − 2 tr Z ⋆ r GQ = ∥ Z ⋆ r ∥ 2 F − 2 tr Λ r Λ t r Q ≤ ∥ Z ⋄ r ∥ 2 F − 2 tr Λ r Λ t r Q , (74) where Z ⋆ r is the minimum-norm solution of Z r G = Λ r Λ t r and Z ⋄ r is an y other solution of Z r G = Λ r Λ t r , both subject to the constraints in (73). 13 c) Minimum-norm solution: W e use now Fact 2 in the Appendix A and, after transposition, obtain that Z ⋆ r = Λ r Λ t r ( P R ( G I ) ⊥ G ) † P R ( G I ) ⊥ (75) is the least-squares solution of (73) such that ∥ Z ⋆ r ∥ 2 F is the smallest among all least-squares solutions of (73). W e proceed now to sho w that Z ⋆ r is the minimum-norm solution of (73). T o this end, we note first that the second constraint in (73) is satisfied by ro ws of Z ⋆ r (columns of ( Z ⋆ r ) t ). Thus, it suffices to show that ( P R ( G I ) ⊥ G ) is of full-column rank l , which will ensure that Z ⋆ r G = Λ r Λ t r , as in such a case ( P R ( G I ) ⊥ G ) † ( P R ( G I ) ⊥ G ) = I l [31]. T o this end, we note that due to linear independence of columns of G c = [ G G I ] , one has in particular that R ( G ) ∩ R ( G I ) = { 0 } . Based on this fact, we will prov e no w that if x ∈ R ( G ) then P R ( G I ) ⊥ x = 0 ⇐ ⇒ x = 0 . (76) ( ⇒ ) Let us express x ∈ R ( G ) as x = P R ( G I ) x + P R ( G I ) ⊥ x. Then, by our assumption we have x = P R ( G I ) x , hence x ∈ R ( G I ) . Thus, x ∈ R ( G ) ∩ R ( G I ) and, from the fact that R ( G ) ∩ R ( G I ) = { 0 } , we conclude that it must be x = 0 . ( ⇐ ) This is ob vious. Based on (76), it is easy to prove that P R ( G I ) ⊥ is injec- tiv e on R ( G ) as follows: suppose that x 1 ∈ R ( G ) , x 2 ∈ R ( G ) , x 1 = x 2 , and that P R ( G I ) ⊥ x 1 = P R ( G I ) ⊥ x 2 . Thus, P R ( G I ) ⊥ ( x 1 − x 2 ) = 0 , hence from (76) it must be x 1 = x 2 , which contradicts our assumption x 1 = x 2 . Consider now the linearly independent set { g 1 , g 2 , . . . , g l } of columns of G . Since P R ( G I ) ⊥ is injectiv e on R ( G ) , the set { P R ( G I ) ⊥ g 1 , P R ( G I ) ⊥ g 2 , . . . , P R ( G I ) ⊥ g l } of columns of ( P R ( G I ) ⊥ G ) is also linearly independent. This im- plies that r k ( P R ( G I ) ⊥ G ) = l , and consequently , that ( P R ( G I ) ⊥ G ) † ( P R ( G I ) ⊥ G ) = I l . Thus, Z ⋆ r in (75) is the (minimum-norm) solution of Z r G = Λ r Λ t r such that z t r i ∈ R ( G I ) ⊥ for i = 1 , . . . , l . d) Selection of subspace: It follows from the previous considerations that we have narrowed the set of possible solutions of the optimization problem (70) to matrices of the form (75), parameterized by P r := Λ r Λ t r , (77) which we recognize as an orthogonal projection matrix onto arbitrary subspace of R l of dimension r. Therefore, we need to determine now the form of P r minimizing the cost function in (70). T aking into account (71) and using the f act that P r and P R ( G I ) ⊥ are both symmetric and idempotent [31], insertion of (75) into cost function in (70) rev eals the follo wing: ∥ Z ⋆ r ∥ 2 F − 2 tr Z ⋆ r GQ = tr P r ( P R ( G I ) ⊥ G ) † P R ( G I ) ⊥ × P r ( P R ( G I ) ⊥ G ) † P R ( G I ) ⊥ t − 2 tr ( P r Q ) = tr ( P r K (1) ) , (78) where K (1) = ( P R ( G I ) ⊥ G ) † P R ( G I ) ⊥ ( P R ( G I ) ⊥ G ) † t − 2 Q . (79) Clearly , K (1) is a symmetric matrix, and so is P r . Thus, by applying Fact 3 in the Appendix A along with (69) we obtain, upon setting C = P r and D = − K (1) therein that tr ( P r K (1) ) is minimized for P r = P K (1) r := Λ K (1) r Λ t K (1) r , where Λ K (1) r ∈ R l × r contains as its columns the eigen vectors corresponding to δ (1) 1 ≤ · · · ≤ δ (1) r - the r smallest eigen v alues of K (1) . Then, the form of solution in (20) is obtained by in verting the change of variables to W ⋆ r = Z ⋆ r R − 1 / 2 , where Z ⋆ r = P K (1) r ( P R ( G I ) ⊥ G ) † P R ( G I ) ⊥ . Moreov er, from (11), (70), (71) and (78) one has J I ( W ⋆ r ) = ∥ Z ⋆ r ∥ 2 F − 2 tr Z ⋆ r G c E [ q c q t ] + c = tr ( P K (1) r K (1) ) + c = r X i =1 δ (1) i + c, (80) where c = tr ( E [ q q t ]) = tr ( Q ) . A P P E N D I X C P RO O F O F T H E O R E M 2 The proof proceeds analogously to the proof of Theorem 1, where in place of optimization problem (70) we consider now , in view of (18), the following: minimize ∥ Z r ∥ 2 F subject to Z r ∈ \ ι ∈ I arg min Z r ∈ X l × m r ∥ Z r G − I l ∥ 2 ι Z r G I = 0 l × k . (81) Then, for Z ⋆ r of the form (75), with P r := Λ r Λ t r as in (77), we hav e now that ∥ Z ⋆ r ∥ 2 F = tr P r ( P R ( G I ) ⊥ G ) † P R ( G I ) ⊥ P r ( P R ( G I ) ⊥ G ) † P R ( G I ) ⊥ t = tr ( P r K (2) ) , (82) where K (2) = ( P R ( G I ) ⊥ G ) † P R ( G I ) ⊥ ( P R ( G I ) ⊥ G ) † t = K (1) + 2 Q . (83) Clearly , K (2) is a symmetric matrix. Thus, in order to find P r minimizing tr ( P r K (2) ) , we apply Fact 3 in the Appendix A along with (69) in exactly the same way as was done below (79) in the proof of Theorem 1. Based on that, we obtain P r = P K (2) r := Λ K (2) r Λ t K (2) r , where Λ K (2) r ∈ R l × r contains as its columns the eigen vectors corresponding to δ (2) 1 ≤ · · · ≤ δ (2) r - the r smallest eigenv alues of K (2) . Similarly as abov e, the form of solution in (23) is obtained by in verting the change of variables to W ⋆ r = Z ⋆ r R − 1 / 2 , 14 where Z ⋆ r = P K (2) r ( P R ( G I ) ⊥ G ) † P R ( G I ) ⊥ . Moreover , just as in (80), we obtain J I ( W ⋆ r ) = ∥ Z ⋆ r ∥ 2 F − 2 tr Z ⋆ r G c E [ q c q t ] + c = tr ( P K (2) r K (1) ) + c = tr P K (2) r ( K (2) − 2 Q ) + c = r X i =1 δ (2) i − 2 tr ( P K (2) r Q ) + c, (84) where c = tr ( E [ q q t ]) = tr ( Q ) . A P P E N D I X D P RO O F O F T H E O R E M 3 The closed algebraic form (26) of the solution of the optimization problem (19) is obtained in exactly the same way as in the proof of Theorem 2, with G replaced by F , G I replaced by F I , and G c replaced by F c , respectively . Thus, to complete the proof of Theorem 3, we only need to compute J I ( W ⋆ r ) , where W ⋆ r = Z ⋆ r N − 1 / 2 with Z ⋆ r = P K (3) r P R ( F I ) ⊥ F † P R ( F I ) ⊥ . Namely , using (71) for F and F I , we have: J I ( W ⋆ r ) = tr ( W ⋆ r R ( W ⋆ r ) t ) − 2 tr W ⋆ r H c E [ q c q t ] + c = tr ( W ⋆ r ( H c Q c H t c + N ) | {z } R ( W ⋆ r ) t ) − 2 tr W ⋆ r H c E [ q c q t ] + c = tr ( Z ⋆ r F c Q c F t c ( Z ⋆ r ) t )+ tr ( Z ⋆ r ( Z ⋆ r ) t ) − 2 tr Z ⋆ r F c E [ q c q t ] + c = tr ( Z ⋆ r F QF t ( Z ⋆ r ) t ) + tr ( Z ⋆ r ( Z ⋆ r ) t ) − 2 tr Z ⋆ r F Q + c = tr P K (3) r Q ( P K (3) r ) t + ∥ Z ⋆ r ∥ 2 F − 2 tr P K (3) r Q + c = ∥ Z ⋆ r ∥ 2 F − tr ( P K (3) r Q ) + c = tr ( P K (3) r K (4) ) + c, (85) where c = tr ( E [ q q t ]) = tr ( Q ) and K (4) = ( P R ( F I ) ⊥ F ) † P R ( F I ) ⊥ ( P R ( F I ) ⊥ F ) † t − Q = K (3) − Q . (86) Finally , J I ( W ⋆ r ) = tr P K (3) r ( K (3) − Q ) + c = r X i =1 δ (3) i − tr ( P K (3) r Q ) + c. (87) A C K N O W L E D G M E N T The authors are grateful to anonymous revie wers for their constructiv e comments which surely promoted the readability of the revised manuscript. R E F E R E N C E S [1] O. L. Frost, “ An algorithm for linearly constrained adaptive array processing, ” Proc. IEEE , vol. 60, no. 8, pp. 926–935, 1972. [2] B. D. V an V een, W . V an Drongelen, M. Y uchtman, and A. Suzuki, “Lo- calization of brain electrical activity via linearly constrained minimum variance spatial filtering, ” IEEE T rans. Biomed. Eng. , vol. 44, no. 9, pp. 867–880, Sep. 1997. [3] J. Gross, J. Kujala, M. H ¨ am ¨ al ¨ ainen, L. T immermann, A. Schnitzler, and R. Salmelin, “Dynamic imaging of coherent sources: Studying neural interactions in the human brain, ” Pr oceedings of the National Academy of Sciences , vol. 98, no. 2, pp. 694–699, 2001. [4] K. Sekihara, S. S. Nagarajan, D. Poeppel, A. Marantz, and Y . Miyashita, “Reconstructing spatio-temporal activities of neural sources using an MEG vector beamformer technique, ” IEEE T rans. Biomed. Eng. , vol. 48, no. 7, pp. 760–771, 2001. [5] K. Sekihara and S. S. Nagarajan, Adaptive Spatial Filters for Electro- magnetic Brain Imaging . Berlin: Springer, 2008. [6] A. Pezeshki, B. D. V an V een, L. L. Scharf, H. Cox, and M. Lundber g, “Eigen value beamforming using a multi-rank MVDR beamformer and subspace selection, ” IEEE T rans. Signal Pr ocess. , vol. 56, no. 5, pp. 1954–1967, May 2008. [7] A. Moiseev , J. M. Gaspar, J. A. Schneider , and A. T . Herdman, “ Applica- tion of multi-source minimum variance beamformers for reconstruction of correlated neural activity , ” Neur oImage , vol. 58, no. 2, pp. 481–496, Sep. 2011. [8] M. Diwakar , M.-X. Huang, R. Sriniv asan, D. L. Harrington, A. Robb, A. Angeles, L. Muzzatti, R. Pakdaman, T . Song, R. J. Theilmann, and R. R. Lee, “Dual-core beamformer for obtaining highly correlated neuronal networks in MEG, ” Neur oImage , vol. 54, no. 1, pp. 253–263, Jan. 2011. [9] R. Oosten veld, P . Fries, E. Maris, and J.-M. Schoffelen, “FieldTrip: Open source software for advanced analysis of MEG, EEG, and in vasi ve elec- trophysiological data, ” Computational Intelligence and Neur oscience , no. 156869, 2011. [10] F . T adel, S. Baillet, J. Mosher , D. Pantazis, and R. M. Leahy , “Brain- storm: A user-friendly application for MEG/EEG analysis, ” Computa- tional Intelligence and Neuroscience , no. 879716, 2011. [11] A. Gramfort, M. Luessi, E. Larson, D. Engemann, D. Strohmeier, C. Brodbeck, L. Parkkonen, and M. H ¨ am ¨ al ¨ ainen, “MNE software for processing MEG and EEG data, ” Neur oImage , vol. 86, pp. 446–460, 2014. [12] A. Keitel and J. Gross, “Indi vidual human brain areas can be identified from their characteristic spectral activation fingerprints, ” PLoS Biol. , vol. 14, no. 6, p. e1002498, 2016. [13] M. Siems, A.-A. Pape, J. F . Hipp, and M. Siegel, “Measuring the cortical correlation structure of spontaneous oscillatory activity with EEG and MEG, ” NeuroIma ge , vol. 129, pp. 345–355, 2016. [14] I. Y amada and J. Elbadraoui, “Minimum-variance pseudo-unbiased low- rank estimator for ill-conditioned in verse problems, ” in Proc. ICASSP , T oulouse, France, May 2006, pp. 325–328. [15] T . Piotrowski and I. Y amada, “MV -PURE estimator: Minimum-variance pseudo-unbiased reduced-rank estimator for linearly constrained ill- conditioned in verse problems, ” IEEE T rans. Signal Pr ocess. , vol. 56, no. 8, pp. 3408–3423, Aug. 2008. [16] T . Piotro wski, R. L. G. Ca valcante, and I. Y amada, “Stochastic MV - PURE estimator: Robust reduced-rank estimator for stochastic linear model, ” IEEE T rans. Signal Pr ocess. , vol. 57, no. 4, pp. 1293–1303, Apr . 2009. [17] M. Y amagishi and I. Y amada, “ A rank selection of MV -PURE with an unbiased predicted-MSE criterion and its ef ficient implementation in image restoration, ” in Pr oc. IEEE ICASSP , V ancouver, Canada, May 2013, May 2013, pp. 1573–1577. [18] T . Piotrowski and I. Y amada, “Performance of the stochastic MV -PURE estimator in highly noisy settings, ” J. of The F ranklin Institute , vol. 351, no. 6, pp. 3339–3350, Jun. 2014. [19] D. R. Brillinger , T ime Series: Data Analysis and Theory . New Y ork: Holt, Rinehart and W inston, 1975. [20] L. L. Scharf, “The SVD and reduced rank signal processing, ” Signal Pr ocess. , v ol. 25, pp. 113–133, 1991. [21] P . Stoica and M. Viber g, “Maximum likelihood parameter and rank estimation in reduced-rank multivariate linear regressions, ” IEEE T rans. Signal Pr ocess. , vol. 44, no. 12, pp. 3069–3078, Dec. 1996. [22] Y . Y amashita and H. Ogawa, “Relativ e Karhunen-Loeve transform, ” IEEE T rans. Signal Pr ocess. , vol. 44, no. 2, pp. 371–378, Feb . 1996. [23] L. L. Scharf and J. K. Thomas, “W iener filters in canonical coordinates for transform coding, filtering, and quantizing, ” IEEE T rans. Signal Pr ocessing , v ol. 46, pp. 647–654, Mar . 1998. [24] T . Piotrowski and I. Y amada, “Reduced-rank estimation for ill- conditioned stochastic linear model with high signal-to-noise ratio, ” J. of The Fr anklin Institute , vol. 353, no. 13, pp. 2898–2928, Sep. 2016. [25] S. S. Dalal, K. Sekihara, and S. S. Nagarajan, “Modified beamformers for coherent source region suppression, ” IEEE T rans. Biomed. Eng. , vol. 53, no. 7, pp. 1357–1363, Jul. 2006. [26] H. B. Hui, D. Pantazis, S. L. Bressler , and R. M. Leahy , “Identifying true cortical interactions in MEG using the nulling beamformer, ” Neu- r oImage , vol. 49, no. 4, pp. 3161–3174, 2010. 15 [27] L. A. Baccala and K. Sameshima, “Partial directed coherence: a new concept in neural structure determination, ” Biol. Cybern. , vol. 84, no. 6, pp. 463–474, 2001. [28] R. K u ´ s, M. Kami ´ nski, and K. Blinowska, “Determination of eeg activ- ity propagation: pair-wise versus multichannel estimate, ” IEEE T rans. Biomed. Eng. , vol. 51, no. 9, pp. 1501–1510, 2004. [29] T . Piotrowski, C. Zaragoza-Martinez, D. Gutierrez, and I. Y amada, “MV - PURE estimator of dipole source signals in EEG, ” in Pr oc. ICASSP , V ancouver , Canada, May 2013, May 2013, pp. 968–972. [30] T . Piotrowski, J. Nikadon, and D. Gutierrez, “Reconstruction of brain activity in EEG/MEG using reduced-rank nulling spatial filter , ” in Proc. SSP , Palma de Mallorca, Spain, June 2016, Jun. 2016, pp. 1–5. [31] R. A. Horn and C. R. Johnson, Matrix Analysis . Ne w Y ork: Cambridge Univ ersity Press, 1985. [32] R. D. Pascual-Marqui, “Review of methods for solving the EEG in verse problem, ” International Journal of Bioelectr omagnetism , vol. 1, no. 1, pp. 75–86, 1999. [33] T . Piotrowski, D. Gutierrez, I. Y amada, and J. Zygierewicz, “Reduced- rank neural activity index for EEG/MEG multi-source localization, ” in Pr oc. IEEE ICASSP , Florence, Italy , May 2014, pp. 4708–4712. [34] ——, “ A family of reduced-rank neural activity indices for EEG/MEG source localization, ” LNCS , v ol. 8609, pp. 447–458, Aug. 2014. [35] J. C. Mosher , R. M. Leahy , and P . S. Lewis, “EEG and MEG: forward solutions for in verse methods, ” IEEE T rans. Biomed. Eng . , vol. 46, no. 3, pp. 245–259, Mar. 1999. [36] A. Moiseev , S. M. Doesburg, R. E. Grunau, and U. Ribary , “Minimum variance beamformer weights revisited, ” Neur oImage , vol. 120, pp. 201– 213, 2015. [37] Y . Hua, M. Nikpour, and P . Stoica, “Optimal reduced-rank estimation and filtering, ” IEEE T rans. Signal Pr ocess. , vol. 49, no. 3, pp. 457–469, Mar . 2001. [38] D. Guti ´ errez, A. Nehorai, and A. Dogand ˇ zi ´ c, “Performance analysis of reduced-rank beamformers for estimating dipole source signals using EEG/MEG, ” IEEE T rans. Biomed. Eng. , vol. 53, no. 5, pp. 840–844, 2006. [39] G. H. Golub and C. F . V an Loan, Matrix Computations . Baltimore and London: The Johns Hopkins Univ ersity Press, 1996. [40] S. Haufe, V . V . Nikulin, K.-R. M ¨ uller , and G. Nolte, “ A critical assessment of connectivity measures for EEG data: A simulation study , ” Neur oImage , vol. 64, pp. 120 – 133, 2013. [41] T . Chen, S.-I. Amari, and Q. Lin, “ A unified algorithm for principal and minor components extraction, ” Neural Networks , v ol. 11, no. 3, pp. 385 – 390, 1998. [42] T . Piotrowski and I. Y amada, “Efficient parallel computation of the stochastic MV -PURE estimator by the hybrid steepest descent method, ” Lectur e Notes in Computer Science , vol. 7268, pp. 404–412, 2012. [43] G. Gomez-Herrero, M. Atienza, K. Egiazarian, and J. L. Cantero, “Measuring directional coupling between EEG sources, ” Neur oImage , vol. 43, no. 3, pp. 497 – 508, 2008. [44] T . Schneider and A. Neumaier , “ Algorithm 808: Arfit-a MA TLAB package for the estimation of parameters and eigenmodes of multiv ariate autoregressi ve models, ” ACM T rans. Math. Softw . , vol. 27, no. 1, pp. 58– 65, Mar . 2001. [45] T . Oostendorp and A. V an Oosterom, “Source parameter estimation in inhomogeneous volume conductors of arbitrary shape, ” IEEE Tr ans. Biomed. Eng. , vol. 36, no. 3, pp. 382–391, March 1989. [46] B. Fischl, A. v an der K ouwe, C. Destrieux, E. Halgren, F . S ´ egonne, D. H. Salat, E. Busa, L. J. Seidman, J. Goldstein, D. Kennedy , V . Caviness, N. Makris, B. Rosen, and A. M. Dale, “ Automatically parcellating the human cerebral corte x, ” Cer ebral Cortex , v ol. 14, no. 1, pp. 11–22, 2004. [47] R. S. Desikan, F . S ´ egonne, B. Fischl, B. T . Quinn, B. C. Dickerson, D. Blacker , R. L. Buckner, A. M. Dale, R. P . Maguire, B. T . Hyman, M. S. Albert, and R. J. Killiany , “ An automated labeling system for subdividing the human cerebral cortex on MRI scans into gyral based regions of interest, ” NeuroImag e , vol. 31, no. 3, pp. 968–980, 2006. [48] A. M. Dale, B. Fischl, and M. I. Sereno, “Cortical surface-based anal- ysis: I. segmentation and surface reconstruction, ” NeuroIma ge , vol. 9, no. 2, pp. 179–194, 1999. [49] M. Fuchs, J. Kastner, M. W agner, S. Hawes, and J. S. Ebersole, “ A standardized boundary element method volume conductor model, ” Clin Neur ophysiol. , v ol. 113, no. 5, pp. 702 – 712, May 2002. [50] O. David, J. M. Kilner, and K. J. Friston, “Mechanisms of ev oked and induced responses in MEG / EEG, ” Neur oImage , vol. 31, no. 4, pp. 1580–1591, 2006. [51] M. Y ukaw a, Y . Sung, and G. Lee, “Dual-domain adaptive beamformer under linearly and quadratically constrained minimum variance, ” IEEE T rans. Signal Process. , vol. 61, no. 11, pp. 2874–2886, June 2013. [52] O. Ledoit and M. W olf, “ A well-conditioned estimator for large- dimensional cov ariance matrices, ” Journal of Multivariate Analysis , vol. 88, no. 2, pp. 365 – 411, 2004. [53] L. Mirsky , “Symmetric gauge functions and unitarily in variant norms, ” The Quarterly Journal of Mathematics , v ol. 11, no. 1, pp. 50–59, 1960. [54] A. Ben-Israel and T . N. E. Greville, Generalized Inver ses : Theory and Applications, Second Edition . New Y ork: Springer V erlag, 2003. [55] C. M. Theobald, “ An inequality for the trace of the product of two symmetric matrices, ” Math. Proc. Camb . Phil. Soc. , vol. 77, pp. 265– 267, 1975.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment