Historical Ink: 19th Century Latin American Spanish Newspaper Corpus with LLM OCR Correction

This paper presents two significant contributions: First, it introduces a novel dataset of 19th-century Latin American newspaper texts, addressing a critical gap in specialized corpora for historical and linguistic analysis in this region. Second, it…

Authors: Laura Manrique-Gómez, Tony Montes, Arturo Rodríguez-Herrera

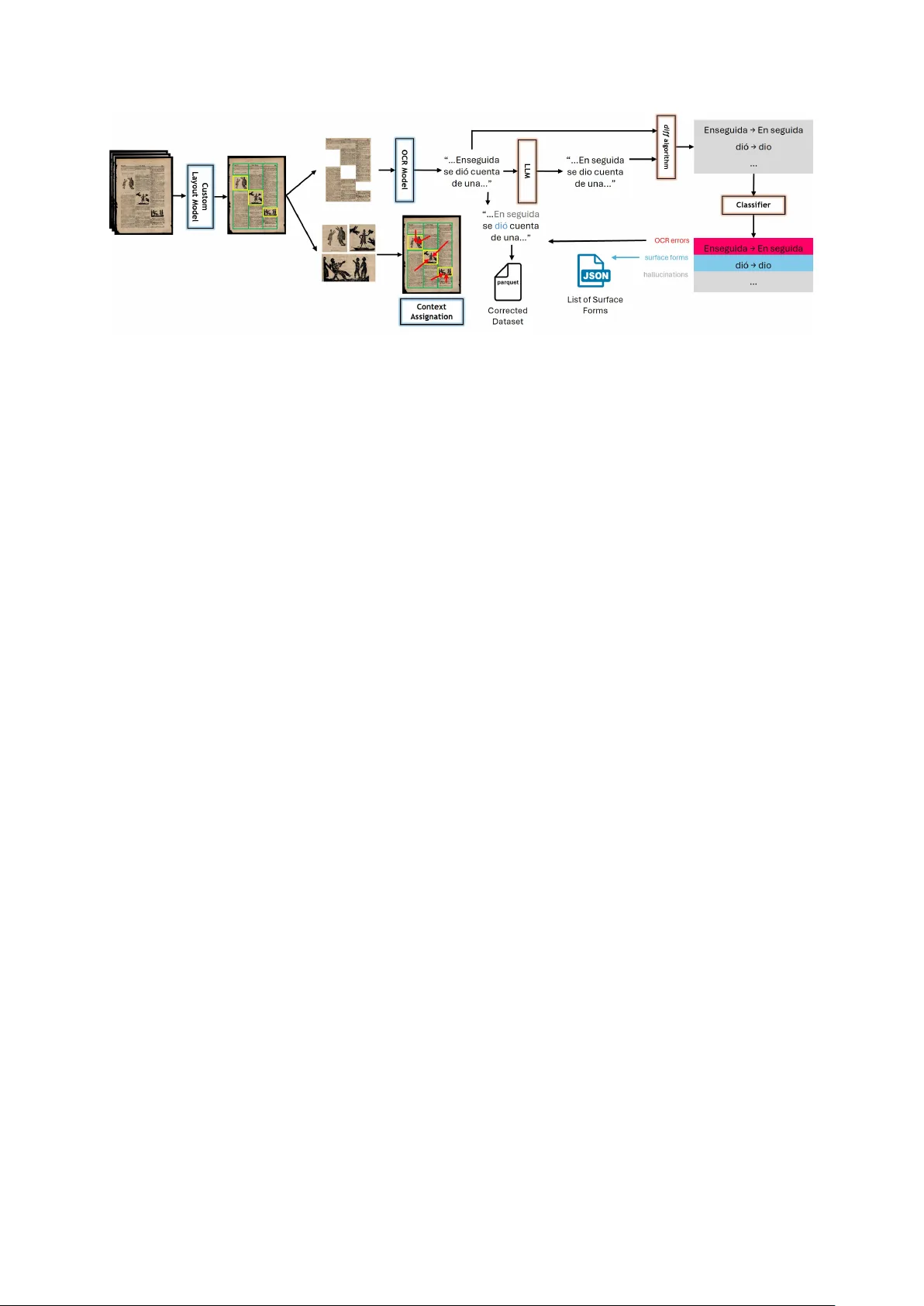

Historical Ink: 19th Century Latin American Spanish Newspaper Corpus with LLM OCR Corr ection Laura Manrique-Gómez 1 T ony Montes 2 Arturo Rodríguez-Herr era 3 Rubén Manrique 2 1 History and Geography Department, Uni versidad de los Andes, Bogotá D.C. 2 Systems and Computing Engineering Department, Uni versidad de los Andes, Bogotá D.C. 3 Ci vil and En vironmental Engineering Department, Rice Uni versity , Houston TX {l.manriqueg, t.montes, rf.manrique}@uniandes.edu.co da.rodriguezh@rice.edu Abstract This paper presents two significant contrib u- tions: First, it introduces a nov el dataset of 19th- century Latin American newspaper texts, ad- dressing a critical gap in specialized corpora for historical and linguistic analysis in this re gion. Second, it de velops a flexible framework that utilizes a Large Language Model for OCR er- ror correction and linguistic surface form detec- tion in digitized corpora. This semi-automated framew ork is adaptable to various contexts and datasets and is applied to the newly created dataset. 1 Introduction The computational processing of historical news- paper texts is crucial due to the v aluable informa- tion these texts contain about political, economic, and cultural history . Over the past three decades, Digital Humanities has driv en extensi ve digitiza- tion e ff orts, resulting in numerous curated digi- tal collections ( Berry and Fagerjord , 2017 ; Dob- son , 2019 ). Howe v er , con verting these images into machine-readable texts remains challenging, par- ticularly in achieving accurate transcription. A pri- mary challenge is the accurac y of OCR technology , especially with the extremely di verse newspaper layouts, materially de graded documents, and non- standardized fonts typical of historical texts. T ra- ditional OCR methods often produce errors that complicate subsequent analysis. T o address these challenges, we employed GPT - 4o-mini ( OpenAI , 2024 ), a Large Language Model (LLM), within a pipeline for OCR error correction. While the LLM is capable of fixing OCR-related errors that traditional systems often miss ( Langlais , 2024 ), our pipeline also detects and classifies po- tential hallucinations to av oid further issues and streamline the process. Additionally , it contributes by identifying surface forms—specific word occur- rences—within the dataset. 1.1 Related W ork The "Chronicling America" initiati ve marks a sig- nificant advancement in the digitization of histor- ical newspaper materials ( Humanities ). Another major e ff ort, is the "Atlas - Oceanic Exchanges" collection, which traces global information net- works in 19th-century newspaper materials ( Ex- changes ). Similarly , “V iral T exts: Mapping Net- works of Reprinting in 19th-Century Ne wspapers and Magazines” ( Cordell and Smith ) e xplores the culture of reprinting in the U.S. before the Ci vil W ar, while the European “Project Impresso: Media Monitoring the Past” ( SNSF and FNR , 2023 ) ad- dresses the OCR challenges specific to English and Germanic languages. Despite these adv ancements, historical newspa- pers are scarcely digitized in the Global South ( LeBlanc , 2024 ). Consequently , a gap remains in specialized corpora for 19th-century Latin Ameri- can ne wspapers, limiting the study of the region’ s unique historical and linguistic features. Our re- search addresses this gap by introducing a new dataset of Latin American newspaper te xts in old Spanish. This dataset was post-processed with LLM models for addressing OCR errors and dis- tinguishing them from historical linguistic surface forms 1 . ICD AR post-OCR correction competitions in 2017 and 2019 ( Chiron et al. , 2017 ; Rigaud et al. , 2019 ) presented interesting solutions to error detec- tion and correction in 10 European languages, such as Clova AI model based on multi-lingual BER T . Similarly , Nguyen et al. ( 2020 ) achieved compara- ble results by initializing embeddings with popu- lar static embeddings such as GloV e ( Pennington et al. , 2014 ). In another approach, V eninga ( 2024 ) examined the fine-tuning of ByT5, a character- 1 The dataset is available at https://huggingface.co/ datasets/Flaglab/latam- xix in its three v ersions: "origi- nal", "cleaned", and "corrected" le vel LLM, emphasizing the importance of pre- processing and context length optimization. This results aligns with earlier studies on character -lev el models, such as Amrhein and Clematide ( 2018 ), which demonstrated the potential of character- based sequence-to-sequence models in improving OCR correction. The application of LLMs for post-OCR correc- tion has gained traction, especially in improving the accuracy of digitized historical texts. Early w ork by Nguyen et al. ( 2021 ) laid the foundation by catego- rizing post-OCR correction methods, highlighting the challenges associated with isolated-word and context-dependent approaches. As discussed by Thomas et al. ( 2024 ), the introduction of T rans- formers’ architecture leads to state-of-the-art per- formance in v arious text correction tasks and also presents a ne w baseline for post-OCR correction. Langlais ( 2024 ) builds on this foundation by addressing the persistent issue of OCR quality in cultural heritage texts. They propose that LLMs can significantly enhance correction accu- racy through context-aware processing, although challenges like hallucinations and language switch- ing remain. More recent work by Thomas et al. ( 2024 ) demonstrates the superiority of a prompt- based approach using Llama 2 ov er traditional models like B AR T ( Soper et al. , 2021a ), reducing character error rates (CER) by ov er 54%. These findings are consistent with those of Soper et al. ( 2021b ), who reported comparable improv ements using fine-tuned BAR T models. These studies high- light the e volution from traditional correction meth- ods to LLM-based approaches. Nev ertheless, fur- ther studies are needed to test correction methods in historical documents containing linguistic and regional v ariants. 2 Sourcing The dataset was initially compiled from Colom- bian digital newspaper archi ves. The primary focus was on publications that included cartoons or il- lustrations, which were intended for subsequent multimodal modeling. This revie w also extended to the physical collections on-site, as only approx- imately 50% of the physical collection had been digitized. Through this process, 64 newspaper ti- tles were identified, representing 7% of the total 1,655 publications in the collections. This first iter- ation resulted in a dataset consisting of 4,032 pages of scanned pages of ne wspapers, primarily from Figure 1: El Oso, Peru. An example of a scanned news- paper image. The corresponding OCR-extracted text and the corrected version can be found in Appendix A , for reference. Nue va Granada—a former country encompassing Colombia, Panama, V enezuela, and Ecuador—. A second iteration completed the revision of 3,038 digitized newspapers of 58 digital collec- tions across Mexico, Argentina, Colombia, Peru, Chile, P anama, V enezuela, Uruguay , Boli via, Cuba, and Ecuador as shown in T able C1 . Some coun- tries, such as Bolivia, Cuba, and V enezuela have very limited or no web collections, resulting in their underrepresentation or absence from the fi- nal dataset. Additionally , some newspapers were printed in Europe due to lo wer costs; in some cases, printing outsourcing was utilized. The final dataset comprises 197 ne wspaper titles and 23,522 pages of scanned images, primarily from Mexico City (Mexico is the only country that has digitized its entire collection), but also includes publications from other Latin American cities, such as Buenos Aires, Lima, Bogota, and Santiago de Chile. An example of a ne wspaper image can be observed in Figure 1 . Originally , the Latin American 19-century news- paper dataset consists of scanned images. These images were processed using a layout model, fol- lo wed by an OCR service. The layout model was specifically trained using data from annotated news- papers av ailable in Roboflo w OCR ( 2022 ); Al- pha ( 2023 ); RSCOE ( 2023 ); GrabadosXIX ( 2023 ). These datasets were merged into a single dataset (CD) consisting of 1368 images of newspapers an- notated for binary layout classification: images and texts. The CD dataset includes 10% of images from our ne wspapers dataset, labeled by hand, and it was enriched with data augmentation for shear and rota- tion. These techniques help to increase the model’ s performance in images with scanning errors. The CD dataset was used to train an image recognition model from Azure Cogniti ve Services 2 , which can extract the images in the ne wspaper page and extract the text through the OCR. The model’ s performance scored MAP@75 of 87.0%, result- ing in a collection of annotations and coordinates for both te xt and images. These coordinates were used to crop the original image, and then process it with the OCR model. Once the OCR results were obtained, we merged the processed text with the im- ages, creating a dataset that contains the ne wspaper images and their associated text. From a sample of 2,500 transcribed texts, each containing 1,000 characters, manual supervision re vealed that 8.5% were unreadable. The remaining texts contained multiple transcription errors, primarily due to the artisanal printing techniques and the grammatical and lexical variations of the era. These errors signif- icantly impacted readability , introducing bias when using the texts as input for NLP-LLM models. 3 Processing The dataset includes samples of ne wspapers that were either handwritten or produced using early carving machines. Over time, these machines would wear out, leading to text features that were easily confused with backward accent marks, un- wanted punctuations, or misplaced characters be- tween words. Such misreadings disrupted the con- tinuity of the text without adding any semantic meaning. Detecting these errors automatically poses a chal- lenge due to the linguistic shifts between modern and 19th-century Spanish. OCR models trained on such historical texts are lacking, especially consid- ering the semantic and orthographic changes o ver time. For instance, what might appear as an OCR error could instead be a historical surface form of a word; for e xample, the conjunction "y" (and) w as often written as "I". Additionally , some texts were completely unin- telligible for OCR, and challenging for humans to interpret, due to the fonts used in certain newspa- pers. The varied layouts of these ne wspapers also resulted in texts filled with scores or numbers, or in some cases, samples containing only chapter titles or numbering (e.g., "III IV V"), which added noise to the dataset. A general ov erview of the pipeline from the source until the final post-processed, is observed in Figure 2 . 2 Model av ailable through Azure cloud services at https://learn.microsoft.com/en- us/azure/ ai- services/computer- vision/ 3.1 Cleaning and filtering Some of the most common cleaning steps for text data include removing duplicates and noisy data, which are particularly crucial for subsequent analy- sis. In this case, 3 . 08% of rows were remo ved due to duplicates or empty texts. Additionally , 1 . 74% of rows were filtered out where over 50% of the characters were non-alphabetic, as these ro ws are more likely to be noise than useful content. Rows with four or fewer tokens were also removed, ac- counting for 0 . 61% of the data; this w as achie ved by training a ne w tokenizer with a vocab ulary size of 52,000, deri ved from the BET O (Spanish BER T) pre-trained tokenizer ( Cañete et al. , 2020 ). 3.2 Post-OCR LLM Corr ection As previously discussed, LLMs hav e established a baseline for correcting OCR errors in historical texts ( Thomas et al. , 2024 ; Langlais , 2024 ). De- tecting and fixing OCR errors from ne wspapers is challenging because these errors are often subtle and numerous. This problem is especially pro- nounced with 19th-century newspapers, where the quality of the paper and the outdated printing meth- ods contribute to a high frequency of errors. These errors create significant noise and complicate the text correction process ( Lopresti , 2008 ). In this paper , we use a technique for detecting OCR errors and correcting them using GPT -4o- mini and taking advantage of the fact that LLMs were trained mostly in modern language. This way , manually checked rules can classify corrections between errors, word surface forms, or none of both (hallucinations). These rules, e xplained in the following section, were re vised and selected by a field expert who served as well as an ev aluator for these corrections testing their precision for this case. W e emplo yed a di ff algorithm to detect the di ff er- ences between the original and corrected texts. This approach allowed us to fully le verage the LLM’ s ability to correct the te xt while ensuring a reliable and structured output. The di ff algorithm identi- fies added, removed, and changed parts between the two texts, similar to the functionality seen in GitHub’ s blame feature. By doing so, we can spec- ify the exact changes made during the correction process, enabling us to classify these alterations e ff ecti vely . This method proved more e ff ecti ve than instruct- ing the LLM to return corrections in a specific Figure 2: Overview of the full methodology pipeline. The blue components correspond to the Layout + OCR stage to get to digitized te xt, and the orange components correspond to the Post-OCR LLM Correction stage. The two outputs of the pipeline are the LatamXIX Corrected Dataset and the List of Surface Forms . The Custom Layout Model also extracts the images of the newspaper which are then assigned to the related texts (context). The final version of the te xt has the OCR errors corrected but not the surface forms, as the y are part of the language. format, such as JSON, as the di ff algorithm pro- duced shorter , more consistent, and less variable outputs. Additionally , this di ff erentiation allows us to ignore an y additions or deletions that result from LLM hallucinations, focusing instead on meaning- ful changes. An example of the original text, the corrected version, and the detected di ff erences can be found in Appendix A , as well as the parameters chosen for this step. 3.3 Corrections Classification Once the corrections are detected and isolated through the di ff algorithm, the last step is to clas- sify them. Still, first, it is important to state the main di ff erences between the possible labels for each correction: • Surface form: In linguistics, the term sur- face form (or word form) denotes the specific appearance of a word in a gi ven conte xt, con- trasting with its le xical form, which pertains to its meaning ( Sarveswaran et al. , 2019 ). Dur- ing the 19th century in Latin America, certain words were documented with variant spellings reflecting language shifts over time. It’ s im- portant to note that changes in surf ace forms do not necessarily alter the semantic content of the word, b ut rather represent orthographic modifications. • OCR err or: An OCR error, on the other hand, refers to e very possible misread text from the real ne wspaper text. The OCR errors must be corrected but must be carefully separated ne wspaper linguistic "errors" that contribute to the linguistics of the time. • Hallucinations: If none of the above is the case, the correction is an LLM hallucination or a translation to modern Spanish, which would be wrong, so these corrections must be omitted. T o enhance classification rule analysis, correc- tions were noted along with their frequency across the dataset to assess relev ance. All corrections were con verted to lo wercase for e ff ectiv e grouping. Many corrections were re vie wed and consolidated into a set of linguistic rules for categorization. This frame work can be used to identify and analyze similar changes and classification rules in other languages and contexts. This paper presents a vali- dated set of standardized rules and e xceptions for classifying corrections in the LatamXIX dataset. 3.3.1 Accent changes Corrections inv olving only accent changes (addi- tion or remo val) between the original and corrected texts refer mostly to surface forms , giv en the di ff er - ences between 19th-century Spanish accent rules and modern ones ( Montgomery , 1966 ). This in- cludes v aried accent expressions for the same word, such as "antes" sometimes written as "ántes". Sur- face forms pose problems for NLP tasks because, in Spanish, words without accents can hav e dif- ferent meanings, such as "acepto" (present) and "aceptó" (past). Thus, for some NLP tasks, focus- ing on surface forms without accent changes may be preferable, which is another outcome presented in this paper . F eature V alue Size ∼ 128 M B Ro ws 64 , 077 W ords ∼ 22 M T okens ∼ 28 . 7 M Ne wspapers 197 Y ears Range 1806 - 1899 T otal Corrections 830,951 Surface F orms 37,492 Non-Accent Surface F orms 7,466 % of OCR Error Corrections 12.33% % of Hallucinations Detected 77.96% T able 1: Final Historical Ink: LatamXIX LLM Post- OCR corrected dataset 3.3.2 Specific changes A set of letter-to-letter changes was extracted to represent key surface words and common OCR errors . For surface words, common changes in- clude "y" for "i" or "g" for "j", e.g., "mui" for "muy" and "jeneral" for "general"; in fact, the connector "y" used to be written as "i" in most of the early 19th-century te xts ( Bouzouita and Gutiérrez , 2015 ). Common OCR errors include accent misreading or number confusion, such as "ó" read as "6" or "i" as "1". Appendix B sho ws a list of surface form changes. 3.3.3 Other letter -to-letter changes When the number of letters in the original and cor- rected texts matches, changes generally refer to OCR errors , e.g., "la" misread as "In" or "señor" as "sefor". 3.3.4 Remaining changes Corrections not fitting the preceding categories are challenging to classify as OCR errors or hallucina- tions, particularly with multiword corrections. A text similarity ratio was computed based on posi- tional character matches between the original and corrected texts. This ratio, combined with the num- ber of words in the corrected text and correction frequency , helped categorize corrections. F or in- stance, "ascripeión" to "suscripción" had a ratio of 0.76, while "que" to "como" had a ratio of 0.0, e ff ecti vely distinguishing most cases. 4 Results Follo wing the outlined steps, we produced the LatamXIX dataset, as shown in T able 1 and de- tailed in Appendix C , alongside a flexible LLM OCR correction framew ork. This frame work al- lo ws for easy interchange between datasets or LLMs, facilitating further research. W e also com- piled a list of 19th-century Latin American Spanish surface forms from newspapers and dev eloped a general frame work for detecting these forms in di- verse conte xts. Old Spanish surface forms are particularly useful for semantic change detection, capturing meaning v ariations of specific words and aiding comparisons of their historical e volution across di ff erent periods and Spanish-speaking regions. In terms of LLM post-OCR corrections, the sys- tem generated 830,951 corrections. Howe ver , a no- table 78% of these were classified as hallucinations, indicating the model’ s tendency to generate incor- rect or fabricated content when uncertain. Only 12% addressed actual OCR errors, reflecting the core objectiv e of the framework. This gap high- lights a ke y limitation of current LLM models in historical OCR correction, where distinguishing between genuine errors and hallucinations remains a challenge, especially in specialized datasets. Moreov er , due to Azure OpenAI’ s API content policy for the chosen LLM (GPT -4o-mini), 2,899 ro ws (4.52%) were excluded from processing be- cause they contained content flagged as harmful, violent, or sexual. This limitation underscores the challenges content moderation policies pose when applying LLMs to historical texts. The percent- age of flagged content provides insight into the pre valence of such material in 19th-century Span- ish, o ff ering valuable perspecti ves for comparati ve analysis with modern Spanish 3 . 5 Future W ork While the OCR correction using LLMs has pro- gressed to wards a more automated pipeline, a substantial portion of rule definition within the presented frame work still requires manual profes- sional input. T o advance this process, future work should aim to enhance the automation of the rule- defining procedures. By reducing the reliance on human expertise, we can improve both the e ffi - ciency and accurac y of the OCR correction frame- work. 3 The dataset, surface forms, and processing steps are a vail- able in https://github.com/historicalink/LatamXIX 6 Limitations A significant limitation of this work is the reliance on manual e valuations for assessing OCR accurac y , as most e valuations and rule definitions were per- formed by e xperts. This manual process introduces subjecti vity and limits scalability . The absence of a comprehensi ve automated e v aluation method pre- vents more consistent accuracy assessments and re- stricts the ability to refine the framew ork based on objecti ve metrics like Character Error Rate (CER). 7 Acknowledgements W e would like to thank the two anonymous re view- ers from the EMNLP NLP4DH conference for their helpful feedback and suggestions. References Alpha. 2023. Newspaperbox Dataset . Chantal Amrhein and Simon Clematide. 2018. Super- vised OCR error detection and correction using statis- tical and neural machine translation methods . Jour- nal for Languag e T echnology and Computational Linguistics , 33(1):49–76. David M. Berry and Anders Fagerjord. 2017. Digital Humanities: Knowledge and critique in a Digital age . Polity Press. Miriam Bouzouita and Mara Fuertes Gutiérrez. 2015. Spanish studies: Language and linguistics . The Y ear’s W ork in Modern Language Studies , 75:171– 185. José Cañete, Gabriel Chaperon, Rodrigo Fuentes, Jou- Hui Ho, Hojin Kang, and Jor ge Pérez. 2020. Spanish pre-trained BER T model and e valuation data . In PML4DC at ICLR 2020 . Guillaume Chiron, Antoine Doucet, Mickaël Coustaty , and Jean-Philippe Moreux. 2017. Icdar2017 competi- tion on post-OCR te xt correction . In 2017 14th IAPR International Confer ence on Document Analysis and Recognition (ICD AR) , volume 01, pages 1423–1428. Ryan Cordell and David Smith. The viral texts project . V iral T exts: Mapping Networks of Reprinting in 19th- Century Newspapers and Magazines (2022) . James E. Dobson. 2019. Critical Digital Humanities: The sear ch for a methodology . Uni versity of Illinois Press. Oceanic Exchanges. The atlas . Mapping the Histories and Metadata of Digitised Ne wspapers Collections Ar ound the W orld. (2021) . GrabadosXIX. 2023. Grabados_Sample Dataset . National Endo wment for the Humanities. Chronicling america: Library of congress . News about Chroni- cling America RSS . Pierre-Carl Langlais. 2024. Post-OCR-correction: 1 billion words dataset of automated OCR correction by llm . Hugging F ace . Zoe LeBlanc. 2024. More than ke ywords . The Ameri- can Historical Revie w , 129(1):164–168. Daniel Lopresti. 2008. Optical character recognition er- rors and their e ff ects on natural language processing . In Pr oceedings of the Second W orkshop on Analytics for Noisy Unstructur ed T ext Data , page 9–16. Asso- ciation for Computing Machinery . Thomas Montgomery . 1966. On the dev elopment of spanish y from "et" . Romance Notes , 8(1):137–142. Thi T uyet Hai Nguyen, Adam Jatowt, Mickael Coustaty , and Antoine Doucet. 2021. Surve y of post-OCR processing approaches . ACM Comput. Surv . , 54(6). Thi T uyet Hai Nguyen, Adam Jatowt, Nhu-V an Nguyen, Mickael Coustaty , and Antoine Doucet. 2020. Neu- ral machine translation with BER T for post-OCR error detection and correction . In Pr oceedings of the A CM / IEEE Joint Confer ence on Digital Libraries in 2020 , JCDL ’20, page 333–336, Ne w Y ork, NY , USA. Association for Computing Machinery . OCR. 2022. OCR_project dataset . OpenAI. 2024. GPT-4o mini: advancing cost-e ffi cient intelligence . Je ff rey Pennington, Richard Socher, and Christopher Manning. 2014. GloVe: Global vectors for word representation . In Pr oceedings of the 2014 Confer- ence on Empirical Methods in Natural Language Pr o- cessing (EMNLP) , pages 1532–1543, Doha, Qatar . Association for Computational Linguistics. Christophe Rigaud, Antoine Doucet, Mickaël Coustaty , and Jean-Philippe Moreux. 2019. ICD AR 2019 com- petition on post-OCR text correction . In 2019 In- ternational Confer ence on Document Analysis and Recognition (ICD AR) , pages 1588–1593. RSCOE. 2023. Newspaper Dataset . Keng atharaiyer Sarveswaran, Gihan Dias, and Miriam Butt. 2019. Using meta-morph rules to de velop mor- phological analysers: A case study concerning Tamil . In Pr oceedings of the 14th International Confer ence on F inite-State Methods and Natural Langua ge Pr o- cessing , pages 76–86, Dresden, Germany . Associa- tion for Computational Linguistics. SNSF and FNR. 2023. Impresso - Media Monitoring of the Past II. Beyond Borders: Connecting Historical Newspapers and Radio . Elizabeth Soper , Stanley Fujimoto, and Y en-Y un Y u. 2021a. B AR T for post-correction of OCR newspa- per text . In Pr oceedings of the Seventh W orkshop on Noisy User-gener ated T ext (W -NUT 2021) , pages 284–290, Online. Association for Computational Lin- guistics. Elizabeth Soper , Stanley Fujimoto, and Y en-Y un Y u. 2021b. B AR T for post-correction of OCR newspa- per text . In Pr oceedings of the Seventh W orkshop on Noisy User-gener ated T ext (W -NUT 2021) , pages 284–290, Online. Association for Computational Lin- guistics. Alan Thomas, Robert Gaizauskas, and Haiping Lu. 2024. Lev eraging LLMs for post-OCR correction of historical ne wspapers . In Pr oceedings of the Thir d W orkshop on Languag e T echnologies for Histori- cal and Ancient Languages (LT4HALA) @ LREC- COLING-2024 , pages 116–121, T orino, Italia. ELRA and ICCL. M.E.B. V eninga. 2024. LLMs for OCR post-correction . A LLM Correction A.1 Prompt Belo w is the prompt used to request the LLM to correct the historical text extracted by the OCR model. This prompt remained unchanged for the correction of the entire dataset and w as generated through manual trial and error , ensuring it was con- cise enough to accommodate the potential length of the text. Dado el texto del siglo XIX entre ˋˋˋ, retorna únicamente el texto corrigiendo los errores ortográficos sin cambiar la gramática. No corrijas la ortografía de nombres: ˋˋˋ {text} ˋˋˋ Equi valent to the follo wing prompt in English: Given the 19th-century text between ˋˋˋ, return only the text with spelling errors corrected without changing the grammar. Do not correct the spelling of names: ˋˋˋ {text} ˋˋˋ A.2 Example The LLM response was successful for most of the texts e xcept for some cases where Azure’ s Content Policy was triggered due to text content, and for very long te xts where the model started to halluci- nate the whole te xt. An example of an original text, its retriev ed LLM correction, and all the changes detected by the di ff algorithm is the following ( sur - face forms and OCR errors ) is: • Original: La publicacion del Oso se harà dos veces cada se mana, y constará de un pliego en cuarto ; ofreciendo à mas sus redactores, dar los grav ados oportunos, siempre que loex- ija el asuntode que trate. Redactado por un Num. 8. TEMA del Periodico. POLITICA MILIT AR. OCT A V A SESION. Abierta la se- sion á las dore y un minuto de la noche , 25 de Febrero de 1845 , con asistencia de todos los Señores Representantes, se leyó y aprobó la acta de la Asamblea anterior , ménos en lo tocante à la torre del Con vento de Santo Domingo, punto que quedó para ventilarse en mejor ocasion. En seguida se dió cuenta de una nota del Ejecuti v o , referente à que urjía la necesidad de organizar un Ejército ; pues decia el Excmo. Decano: - "Un poder sin bayonetas v ale tanto como un cero puesto á la izquierda." • Corrected: La publicación del Oso se hará dos veces cada semana , y constará de un pliego en cuarto; ofreciendo además sus redac- tores, dar los grabados oportunos, siempre que lo exija el asunto de que trate. Redac- tado por un Num. 8. TEMA del Periódico . POLÍTICA MILIT AR. OCT A V A SESIÓN . Abierta la sesión a las dos y un minuto de la noche, 25 de Febrero de 1845, con asistencia de todos los Señores Representantes, se leyó y aprobó la acta de la Asamblea anterior , menos en lo tocante a la torre del Con vento de Santo Domingo, punto que quedó para ventilarse en mejor ocasión . Enseguida se dio cuenta de una nota del Ejecuti vo, referente a que ur gía la necesidad de organizar un Ejército; pues decía el Excmo. Decano: - "Un poder sin bayonetas v ale tanto como un cero puesto a la izquierda." B Specific Surface F orm Changes For the surf ace form extraction from the te xts and its di ff erentiation from OCR errors and LLM hallu- cinations, a set of surface form changes was con- structed for 19th-century Latin American Spanish. The complete set of known changes with an e xam- ple for each case is presented in T able B1 . Change Example á ↔ a hara → hará é ↔ e fué → fue í ↔ i decia → decía ó ↔ o ocasion → ocasión ú ↔ u ningun → ningún i ↔ y mui → muy j ↔ g jente → gente v ↔ b grav ado → grabado s ↔ x espiró → e xpiró j ↔ x méjico → méxico c ↔ s faces → fases s ↔ z dies → diez z → c doze → doce q → c quatro → cuatro n → ñ senor → señor ni → ñ senior → señor k → qu nikel → níquel k → c kiosko → quiosco ou → u boule var → bule v ar s → bs suscriciones → subscripciones c → pc suscriciones → subscripciones s → ns trasportar → transportar t → pt setiembre → septiembre rt → r libertar → liberar r ↔ rr vireinato → virreinato ...lo → lo ... cambiólo → lo cambió ...se → se ... acercóse → se acercó T able B1: Set of Surface F orm change rules to extract them from the LatamXIX dataset C Dataset Overview A more specific ov erview of the dataset is described in Figure C1 and T able C1 . Country Presence (%) Mexico 49.59% Argentina 21.23% Colombia 12.53% Peru 8.43% Chile 6.39% Panama 0.83% V enezuela 0.52% Uruguay 0.17% France 0.16% Ecuador 0.09% Spain 0.06% T able C1: LatamXIX dataset presence distribution grouped by country Figure C1: LatamXIX dataset decade distribution

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment