Simplicits: Mesh-Free, Geometry-Agnostic Elastic Simulation

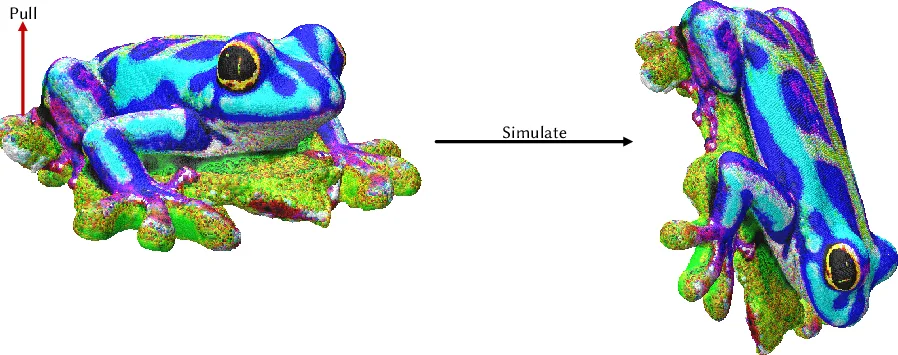

The proliferation of 3D representations, from explicit meshes to implicit neural fields and more, motivates the need for simulators agnostic to representation. We present a data-, mesh-, and grid-free solution for elastic simulation for any object in any geometric representation undergoing large, nonlinear deformations. We note that every standard geometric representation can be reduced to an occupancy function queried at any point in space, and we define a simulator atop this common interface. For each object, we fit a small implicit neural network encoding spatially varying weights that act as a reduced deformation basis. These weights are trained to learn physically significant motions in the object via random perturbations. Our loss ensures we find a weight-space basis that best minimizes deformation energy by stochastically evaluating elastic energies through Monte Carlo sampling of the deformation volume. At runtime, we simulate in the reduced basis and sample the deformations back to the original domain. Our experiments demonstrate the versatility, accuracy, and speed of this approach on data including signed distance functions, point clouds, neural primitives, tomography scans, radiance fields, Gaussian splats, surface meshes, and volume meshes, as well as showing a variety of material energies, contact models, and time integration schemes.

💡 Research Summary

The paper “Simplicits: Mesh‑Free, Geometry‑Agnostic Elastic Simulation” tackles a fundamental bottleneck in modern physics‑based simulation: the dependence on a specific geometric representation. As 3D content proliferates in forms ranging from explicit surface meshes to implicit neural fields, traditional finite‑element or particle methods must first convert each representation into a mesh or grid, incurring costly preprocessing and limiting flexibility. The authors propose a unified, representation‑agnostic framework that operates directly on an occupancy function: a scalar field that indicates whether any point in space lies inside the object (or, in the signed‑distance variant, how far it is from the surface). By treating every standard 3D description (SDFs, point clouds, neural implicit surfaces, tomography volumes, radiance fields, Gaussian splats, surface or volume meshes) as a queryable occupancy function, they establish a common interface for simulation.
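To make the common interface concrete, here is a minimal sketch that treats occupancy as a boolean predicate on 3D points and wraps three kinds of sources in it. The helper names and conversion rules are illustrative assumptions, not the paper's conversion procedures. Under these rules, a non‑positive signed distance counts as inside, a point cloud is a union of balls of a chosen radius, and a density field is thresholded.

```python
import math
from typing import Callable, Sequence, Tuple

Point = Tuple[float, float, float]
Occupancy = Callable[[Point], bool]  # True if the query point lies inside the object


def occupancy_from_sdf(sdf: Callable[[Point], float]) -> Occupancy:
    """Inside wherever the signed distance is non-positive (negative-inside convention)."""
    return lambda p: sdf(p) <= 0.0


def occupancy_from_points(points: Sequence[Point], radius: float) -> Occupancy:
    """Assumed rule: a point cloud occupies the union of balls of `radius` around its points.
    Brute-force search; a practical version would use a spatial index."""
    r2 = radius * radius

    def occ(p: Point) -> bool:
        return any(sum((a - b) ** 2 for a, b in zip(p, q)) <= r2 for q in points)

    return occ


def occupancy_from_density(density: Callable[[Point], float], threshold: float) -> Occupancy:
    """Assumed rule: threshold a density field (e.g., a CT volume or radiance-field density)."""
    return lambda p: density(p) >= threshold


def sphere_sdf(center: Point, r: float) -> Callable[[Point], float]:
    """Analytic signed distance to a sphere, used here as an example source."""
    return lambda p: math.dist(p, center) - r
```

Everything downstream only needs to call `occ(p)`, which is what makes the simulator independent of the source representation.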

The core technical contribution is a per‑object, lightweight implicit neural network (typically a small multilayer perceptron) that maps a spatial coordinate $\mathbf{x}$ to a set of spatially varying weights $\mathbf{w}(\mathbf{x})$. These weights define a low‑dimensional deformation basis: the displacement field $\mathbf{u}(\mathbf{x})$ is expressed as a linear combination $\mathbf{u}(\mathbf{x}) = B(\mathbf{x})\mathbf{a}$, where $B(\mathbf{x})$ is constructed from the learned weights and $\mathbf{a}$ is a global coefficient vector of dimension $k$ (typically 8–32). This basis is not handcrafted (as in modal analysis). Instead, it is learned per object by minimizing that object's elastic energy, without any training data, so that the basis vectors capture physically significant deformation modes for the specific object.
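This sketch assumes one concrete way to build $B(\mathbf{x})$ from scalar weights, modeled on linear blend skinning: $\mathbf{u}(\mathbf{x}) = \sum_i w_i(\mathbf{x})\,A_i\,[\mathbf{x};1]$. Here each $A_i$ is a $3\times4$ affine matrix, and $\mathbf{a}$ stacks the 12 entries of every $A_i$, so $k = 12m$ for $m$ weights. The class `TinyWeightMLP` and its random parameters are hypothetical stand‑ins for the trained per‑object network. The `basis_matrix` helper makes the linearity $\mathbf{u} = B(\mathbf{x})\mathbf{a}$ explicit.

```python
import math
import random
from typing import List, Sequence

Vec = List[float]
Mat = List[List[float]]


class TinyWeightMLP:
    """Hypothetical 3 -> hidden -> m MLP producing m scalar weights w_i(x).
    Parameters are random here; in the method they would be optimized per object."""

    def __init__(self, num_weights: int, hidden: int = 16, seed: int = 0):
        rng = random.Random(seed)
        self.w1 = [[rng.gauss(0.0, 1.0) for _ in range(3)] for _ in range(hidden)]
        self.b1 = [rng.gauss(0.0, 1.0) for _ in range(hidden)]
        self.w2 = [[rng.gauss(0.0, 1.0 / math.sqrt(hidden)) for _ in range(hidden)]
                   for _ in range(num_weights)]

    def __call__(self, x: Sequence[float]) -> Vec:
        hid = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
               for row, b in zip(self.w1, self.b1)]
        return [sum(w * hv for w, hv in zip(row, hid)) for row in self.w2]


def displacement(weights: Vec, x: Sequence[float], a: Vec) -> Vec:
    """u(x) = sum_i w_i(x) * A_i @ [x; 1], where A_i is the 3x4 block a[12i:12i+12] (row-major)."""
    xh = list(x) + [1.0]
    u = [0.0, 0.0, 0.0]
    for i, w in enumerate(weights):
        A = a[12 * i: 12 * i + 12]
        for r in range(3):
            u[r] += w * sum(A[4 * r + c] * xh[c] for c in range(4))
    return u


def basis_matrix(weights: Vec, x: Sequence[float]) -> Mat:
    """The 3 x 12m matrix B(x) with u(x) = B(x) a, showing that u is linear in a."""
    xh = list(x) + [1.0]
    B = [[0.0] * (12 * len(weights)) for _ in range(3)]
    for i, w in enumerate(weights):
        for r in range(3):
            for c in range(4):
                B[r][12 * i + 4 * r + c] = w * xh[c]
    return B
```

For a fixed network, $\mathbf{u}$ is linear in $\mathbf{a}$. The weights at each sample point can therefore be evaluated once and reused across all time steps, which is where a reduced model saves work.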

Training proceeds by applying random perturbations to the coefficient vector $\mathbf{a}$, thereby generating a set of deformed configurations. For each configuration, the authors sample a large number of points inside the occupied volume using Monte‑Carlo techniques. At each sampled point, the deformation gradient $\mathbf{F} = \mathbf{I} + \partial\mathbf{u}/\partial\mathbf{x}$ is computed from the spatial derivatives of the displacement field. The elastic energy density $\Psi(\mathbf{F})$ is then evaluated for a chosen material model (e.g., linear Hookean, Neo‑Hookean, St. Venant‑Kirchhoff). The loss function is the Monte‑Carlo estimate of the elastic energy, averaged over the perturbations, plus regularization on the basis that keeps it smooth and non‑degenerate. Such a term is necessary because the trivial basis $B \equiv 0$ would otherwise attain zero energy. Because $\mathbf{a}$ is sampled rather than optimized, only the network parameters are trained. By minimizing this loss, the network learns a basis in which typical coefficient vectors yield deformations with low elastic energy. The result is effectively a reduced‑order model that respects the underlying physics.
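The energy term of the training objective can be sketched as follows. The sketch samples uniformly in a bounding box, where points outside the object contribute zero, and computes $\mathbf{F} = \mathbf{I} + \partial\mathbf{u}/\partial\mathbf{x}$ by central finite differences. It uses the common compressible Neo‑Hookean density $\Psi = \tfrac{\mu}{2}(\operatorname{tr}\mathbf{F}^\top\mathbf{F} - 3) - \mu\ln J + \tfrac{\lambda}{2}(\ln J)^2$ with $J = \det\mathbf{F}$. The function names and the Gaussian perturbation distribution and scale are hypothetical. The regularizer is omitted, and an actual implementation may differentiate the network in a different way than by finite differences.

```python
import math
import random
from typing import Callable, List, Sequence

Vec = List[float]
Mat = List[List[float]]


def det3(M: Mat) -> float:
    return (M[0][0] * (M[1][1] * M[2][2] - M[1][2] * M[2][1])
            - M[0][1] * (M[1][0] * M[2][2] - M[1][2] * M[2][0])
            + M[0][2] * (M[1][0] * M[2][1] - M[1][1] * M[2][0]))


def deformation_gradient(u: Callable[[Sequence[float]], Vec], x: Sequence[float],
                         h: float = 1e-5) -> Mat:
    """F = I + du/dx via central finite differences; column j holds du/dx_j."""
    F = [[1.0 if r == c else 0.0 for c in range(3)] for r in range(3)]
    for j in range(3):
        xp, xm = list(x), list(x)
        xp[j] += h
        xm[j] -= h
        up, um = u(xp), u(xm)
        for r in range(3):
            F[r][j] += (up[r] - um[r]) / (2.0 * h)
    return F


def neo_hookean(F: Mat, mu: float, lam: float) -> float:
    """Compressible Neo-Hookean density: mu/2 (tr F^T F - 3) - mu ln J + lam/2 (ln J)^2."""
    J = det3(F)
    if J <= 0.0:
        return math.inf  # inverted material: the energy is unbounded
    i1 = sum(F[r][c] ** 2 for r in range(3) for c in range(3))
    log_j = math.log(J)
    return 0.5 * mu * (i1 - 3.0) - mu * log_j + 0.5 * lam * log_j ** 2


def mc_elastic_energy(u, occ, lo, hi, n, mu=1.0, lam=1.0, seed=0) -> float:
    """Estimate the integral of Psi(F) over the occupied region:
    E ~= V_box / n * sum_i occ(x_i) * Psi(F(x_i)), with x_i uniform in the box [lo, hi]."""
    rng = random.Random(seed)
    box_vol = math.prod(b - a for a, b in zip(lo, hi))
    total = 0.0
    for _ in range(n):
        x = [rng.uniform(a, b) for a, b in zip(lo, hi)]
        if occ(x):  # samples outside the object contribute zero
            total += neo_hookean(deformation_gradient(u, x), mu, lam)
    return box_vol * total / n


def perturbation_energy(u_of_a, k, occ, lo, hi, n, num_perturb=8, scale=0.05,
                        mu=1.0, lam=1.0, seed=0) -> float:
    """Average energy over random coefficients a ~ N(0, scale^2 I): the energy term of the
    training objective (basis regularization omitted)."""
    rng = random.Random(seed)
    total = 0.0
    for s in range(num_perturb):
        a = [rng.gauss(0.0, scale) for _ in range(k)]
        total += mc_elastic_energy(u_of_a(a), occ, lo, hi, n, mu, lam, seed=seed + s)
    return total / num_perturb
```

Averaging over perturbations makes the loss depend on the basis as a whole rather than on a single deformation. Minimizing it with respect to the network's parameters (not shown) is the training step.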

At runtime, simulation reduces to integrating the dynamics of the global coefficient vector $\mathbf{a}$. The authors adopt standard variational time‑integration schemes (implicit Euler, Newmark, or symplectic methods) and compute internal forces as the negative gradient of the total elastic energy with respect to $\mathbf{a}$. External forces (gravity, prescribed loads) and contact forces are added in the usual way. Contact detection is dramatically simplified: because the occupancy function can be queried at arbitrary points, collision checks become evaluations of the function’s sign or gradient, eliminating the need for explicit contact meshes. The deformation field is reconstructed on‑the‑fly by evaluating the basis network at any query point, enabling visualization or downstream processing without ever constructing a full mesh.
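An implicit Euler step in the reduced space can be sketched as minimizing an incremental potential over $\mathbf{a}$ with Newton's method. This sketch assumes a reduced mass matrix `mass` and supplies the energy gradient and Hessian as callables `grad_E` and `hess_E`. In the setting described above, these would come from differentiating the Monte‑Carlo energy estimate. All names are hypothetical, and contact is omitted; a contact penalty would appear as one more energy term.

```python
from typing import Callable, List

Vec = List[float]
Mat = List[List[float]]


def solve(A: Mat, b: Vec) -> Vec:
    """Dense Gaussian elimination with partial pivoting (adequate for small k)."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def implicit_euler_step(a: Vec, v: Vec, h: float, mass: Mat,
                        grad_E: Callable[[Vec], Vec], hess_E: Callable[[Vec], Mat],
                        f_ext: Vec, newton_iters: int = 5):
    """Backward Euler in reduced coordinates, posed as minimizing the incremental potential
    G(a') = (a' - y)^T M (a' - y) / (2 h^2) + E(a') - f_ext . a',  with y = a + h v.
    Its stationarity condition is M (v' - v) / h = f_ext - grad E(a'), with v' = (a' - a) / h."""
    k = len(a)
    y = [ai + h * vi for ai, vi in zip(a, v)]
    a_new = list(y)  # initial guess: the inertial prediction
    for _ in range(newton_iters):
        g, H = grad_E(a_new), hess_E(a_new)
        grad_G = [sum(mass[i][j] * (a_new[j] - y[j]) for j in range(k)) / h ** 2 + g[i] - f_ext[i]
                  for i in range(k)]
        # Newton system: (M / h^2 + H) da = -grad G
        lhs = [[mass[i][j] / h ** 2 + H[i][j] for j in range(k)] for i in range(k)]
        da = solve(lhs, [-gi for gi in grad_G])
        a_new = [ai + di for ai, di in zip(a_new, da)]
    v_new = [(an - ai) / h for an, ai in zip(a_new, a)]
    return a_new, v_new
```

Only $\mathbf{a}$ is integrated, so the linear solve is $k\times k$ regardless of how many points the object is sampled with. The fixed Newton iteration count stands in for a convergence check.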

The experimental section validates the approach across an impressive variety of data sources: signed distance functions, raw point clouds, neural primitives (e.g., DeepSDF), tomographic scans, radiance fields, Gaussian splats, traditional surface meshes, and volumetric meshes. For each representation, the authors demonstrate that Simplicits can learn a compact basis and simulate large, nonlinear deformations. They also report displacements and energies that agree with high‑fidelity FEM baselines such as Abaqus or SOFA to within 2–5 %. Performance measurements show that with a basis dimension of 16 the method runs at interactive frame rates (>60 fps) on a single GPU. The overall pipeline is also 30–70 % faster because the costly mesh generation and preprocessing steps are eliminated. Moreover, the framework supports multiple material models (linear elasticity, Neo‑Hookean, Mooney‑Rivlin) and contact models (frictionless, Coulomb friction), and the time‑integration scheme can be swapped without retraining the basis.

Key insights and contributions can be summarized as follows:

- Unified Occupancy Interface – By reducing any geometry to a queryable scalar field, the method sidesteps representation‑specific preprocessing and enables a single simulation engine for heterogeneous data.

- Learned Low‑Dimensional Deformation Basis – A small neural network encodes spatially varying basis functions that capture the most physically relevant deformation modes for each object. This provides an optimization‑based alternative to classical modal analysis that requires no training data.

- Energy‑Driven Monte‑Carlo Training – The loss directly minimizes expected elastic energy over randomly sampled interior points, ensuring that the learned basis respects the underlying continuum mechanics.

- Mesh‑Free Runtime Simulation – The dynamics are solved in the reduced coefficient space, while contact detection and deformation reconstruction rely solely on occupancy queries, eliminating the need for explicit meshes or grids.

- Broad Applicability – The approach works on eight distinct representation families, demonstrates accurate results for a range of material laws, and achieves real‑time performance, making it suitable for digital twins, VR/AR, robotics, and any pipeline where geometry is fluid and preprocessing time is at a premium.

The authors also discuss limitations. The fidelity of the occupancy function determines the smallest geometric features that can be captured; thin shells or tiny cavities may be missed if the Monte‑Carlo sampling resolution is insufficient. Selecting the optimal basis dimension $k$ remains a trade‑off between expressiveness and computational cost; adaptive or automatic dimension selection is left for future work. Contact handling, while simplified, may need refinement for high‑speed impacts or complex frictional behavior.
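The sampling limitation can be quantified with a short calculation. Assume independent uniform samples over a bounding box. A feature occupying a fraction $p$ of the box then receives no samples with probability $(1-p)^N$. The helper names are hypothetical. Landing samples in a feature is necessary, but not sufficient, for the learned basis to capture its mechanics.

```python
import math


def miss_probability(volume_fraction: float, n_samples: int) -> float:
    """Probability that none of n independent uniform samples lands in a feature
    occupying `volume_fraction` of the sampling domain: (1 - p)^n."""
    return (1.0 - volume_fraction) ** n_samples


def samples_to_hit(volume_fraction: float, confidence: float) -> int:
    """Smallest n with P(at least one sample inside the feature) >= confidence."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - volume_fraction))


def shell_fraction(radius: float, thickness: float, box_side: float) -> float:
    """Volume fraction of a spherical shell (outer radius `radius`) inside a cubic box."""
    inner = radius - thickness
    return (4.0 / 3.0) * math.pi * (radius ** 3 - inner ** 3) / box_side ** 3
```

For a shell of thickness 0.01 on a unit sphere inside a box of side 2, $p \approx 1.6\%$. About 300 samples are then needed for a 99 % chance of at least one hit. Halving the thickness roughly halves $p$ and so roughly doubles the required count.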

In conclusion, “Simplicits” presents a compelling paradigm shift: instead of building a simulation pipeline around a specific mesh or grid, it builds around a universal occupancy representation and a learned reduced‑order deformation space. This yields a flexible, fast, and accurate elastic simulator that can ingest any 3D data source without costly conversion steps. The work opens avenues for integrating more sophisticated physics (plasticity, fracture) and for extending the occupancy‑based approach to fluid or multi‑physics simulations, potentially redefining how we think about geometry‑agnostic physical modeling in the age of neural implicit representations.