Flexible Motion In-betweening with Diffusion Models

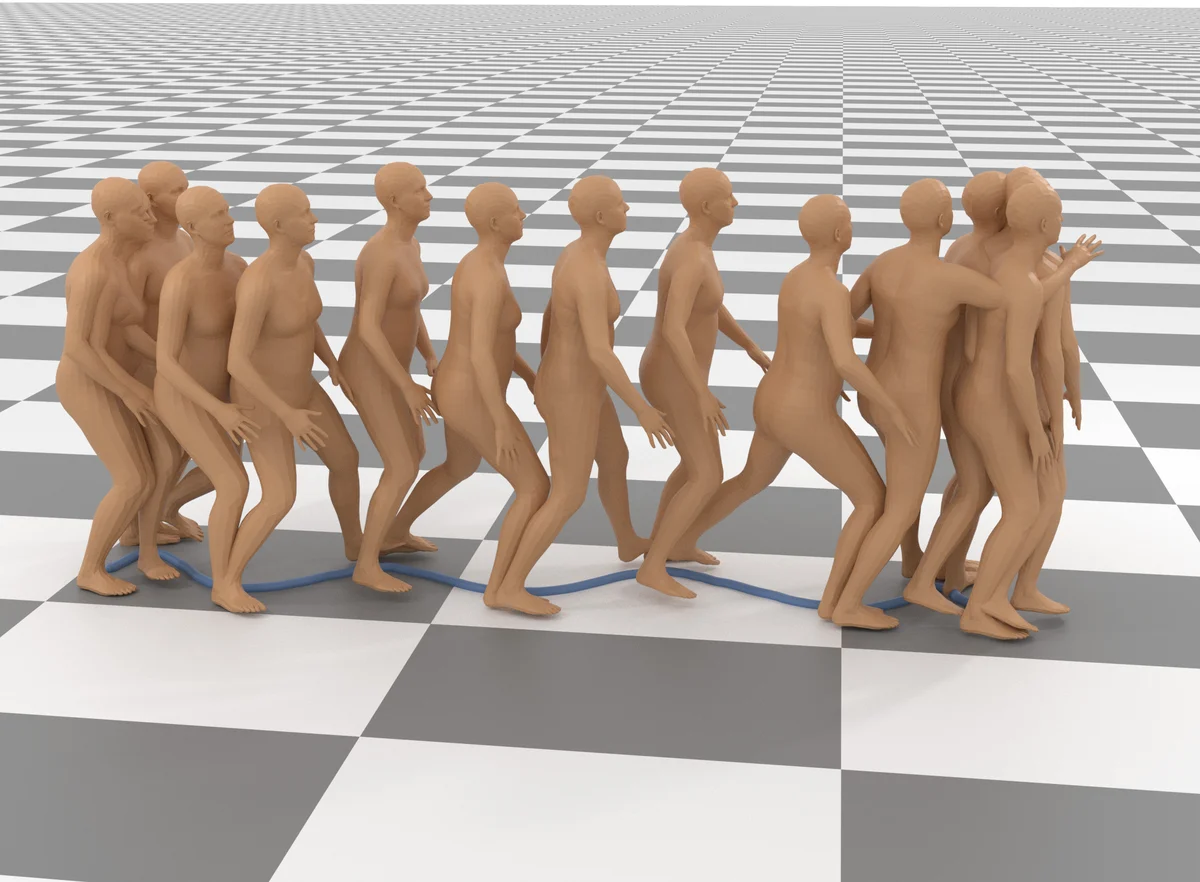

Motion in-betweening, a fundamental task in character animation, consists of generating motion sequences that plausibly interpolate user-provided keyframe constraints. It has long been recognized as a labor-intensive and challenging process. We investigate the potential of diffusion models in generating diverse human motions guided by keyframes. Unlike previous in-betweening methods, we propose a simple unified model capable of generating precise and diverse motions that conform to a flexible range of user-specified spatial constraints, as well as text conditioning. To this end, we propose Conditional Motion Diffusion In-betweening (CondMDI), which allows for arbitrary dense-or-sparse keyframe placement and partial keyframe constraints while generating high-quality motions that are diverse and coherent with the given keyframes. We evaluate the performance of CondMDI on the text-conditioned HumanML3D dataset and demonstrate the versatility and efficacy of diffusion models for keyframe in-betweening. We further explore the use of guidance and imputation-based approaches for inference-time keyframing and compare CondMDI against these methods.

💡 Research Summary

The paper tackles the long‑standing problem of motion in‑betweening—generating plausible intermediate frames that satisfy user‑provided keyframes—by leveraging the expressive power of diffusion models. The authors introduce Conditional Motion Diffusion In‑betweening (CondMDI), a unified framework that can handle arbitrary keyframe configurations (dense, sparse, or partially constrained) and simultaneously incorporate high‑level textual guidance.

Model architecture

CondMDI builds on the denoising diffusion probabilistic model (DDPM). At each diffusion step the network receives three inputs: (1) a keyframe tensor containing 3‑D joint positions, (2) a binary mask indicating which joints and frames are constrained, and (3) a text embedding obtained from a CLIP‑style encoder. The mask and positional encodings are fused into a conditional token that is concatenated to the latent representation at every denoising layer, allowing the model to modulate the influence of the constraints as a function of the noise level.
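To make the keyframe tensor and binary mask concrete, here is a minimal sketch in plain Python. The function name `build_keyframe_condition`, the nested-list layout (frames × joints × xyz), and the choice to zero out unconstrained entries are assumptions made for illustration; the summary does not specify how the authors store or fill these tensors.

```python
# Illustrative sketch only: names, shapes, and zero-filling are assumptions,
# not the authors' implementation.

def build_keyframe_condition(motion, keyframes, joints=None):
    """Build a (masked_motion, mask) pair from a full motion clip.

    motion:    list of frames; each frame is a list of joints;
               each joint is an (x, y, z) tuple.
    keyframes: frame indices the user wants to constrain.
    joints:    joint indices constrained in those frames
               (None means every joint, i.e. a full keyframe).
    """
    num_frames = len(motion)
    num_joints = len(motion[0])
    frame_set = set(keyframes)
    joint_set = set(range(num_joints)) if joints is None else set(joints)

    # mask[f][j] is 1 where joint j of frame f is constrained, else 0.
    mask = [
        [1 if (f in frame_set and j in joint_set) else 0
         for j in range(num_joints)]
        for f in range(num_frames)
    ]
    # Keep the observed values where the mask is 1; zero elsewhere.
    masked_motion = [
        [motion[f][j] if mask[f][j] else (0.0, 0.0, 0.0)
         for j in range(num_joints)]
        for f in range(num_frames)
    ]
    return masked_motion, mask


# Usage: 4 frames, 2 joints; constrain only joint 1 in frames 0 and 3
# (a sparse, partial keyframe constraint).
motion = [[(float(f), 0.0, 0.0), (float(f), 1.0, 0.0)] for f in range(4)]
masked_motion, mask = build_keyframe_condition(motion, keyframes=[0, 3], joints=[1])
```

Dense keyframing corresponds to passing many frame indices, and partial constraints correspond to passing a subset of joints; both cases reduce to the same mask representation.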

Training objectives

Three loss terms are optimized jointly: (i) the standard DDPM reconstruction loss, (ii) a keyframe reconstruction loss that forces the generated frames at masked locations to match the supplied keyframe values (L2 distance), and (iii) a text‑motion alignment loss that maximizes cosine similarity between CLIP image embeddings of the generated pose sequence and the original text embedding. The combined loss L = L_DDPM + λ_KF·L_KF + λ_T·L_T balances fidelity to the keyframes, diversity of the sampled motions, and semantic consistency with the textual prompt.
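The weighted sum described above can be sketched as follows. The helper names `masked_l2` and `combined_loss`, the default weights, and the use of a mean squared L2 distance over constrained entries are assumptions for illustration; the summary gives only the form L = L_DDPM + λ_KF·L_KF + λ_T·L_T and does not state the weight values.

```python
# Illustrative sketch only: helper names and default weights are assumptions.

def masked_l2(pred, target, mask):
    """Mean squared L2 distance over constrained (frame, joint) entries.

    pred, target: frames x joints x (x, y, z) nested lists.
    mask:         frames x joints, 1 where a keyframe constraint applies.
    """
    total, count = 0.0, 0
    for f, row in enumerate(mask):
        for j, constrained in enumerate(row):
            if constrained:
                total += sum((p - t) ** 2
                             for p, t in zip(pred[f][j], target[f][j]))
                count += 1
    # With no constrained entries the keyframe term contributes nothing.
    return total / count if count else 0.0


def combined_loss(l_ddpm, l_kf, l_text, lambda_kf=1.0, lambda_t=1.0):
    """L = L_DDPM + lambda_KF * L_KF + lambda_T * L_T."""
    return l_ddpm + lambda_kf * l_kf + lambda_t * l_text


# Usage: one frame, two joints; only joint 0 is constrained.
pred = [[(1.0, 0.0, 0.0), (0.0, 0.0, 0.0)]]
target = [[(0.0, 0.0, 0.0), (5.0, 5.0, 5.0)]]
mask = [[1, 0]]
l_kf = masked_l2(pred, target, mask)
loss = combined_loss(l_ddpm=0.5, l_kf=l_kf, l_text=0.2, lambda_kf=2.0, lambda_t=0.5)
```

Because the keyframe term is evaluated only where the mask is 1, the unconstrained joint in the example contributes no error even though its prediction is far from the target.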

Inference strategies

Two complementary techniques are explored for inference‑time conditioning. First, classifier‑free guidance is employed: a conditional denoiser εθ(x,t|C) and an unconditional denoiser εθ(x,t) are combined as ε̂ = εθ(x,t) + s·(εθ(x,t|C) - εθ(x,t)), where the guidance scale s trades off strict adherence to the constraints (large s) against higher diversity (small s). Second, an imputation‑based approach fills in missing keyframes when only a few are provided. A lightweight interpolation network predicts the absent frames, adds them to the keyframe tensor and marks them in the mask, and the diffusion process then proceeds as if they were original constraints. This dramatically improves quality when keyframes are extremely sparse (≤10 % of frames constrained).
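The guidance formula above can be written out directly. This is a minimal sketch on flat lists of floats; the function name `classifier_free_guidance` is an assumption, and in practice the two noise predictions would come from the denoiser evaluated with and without the condition C.

```python
# Illustrative sketch only: the function name and flat-list inputs are assumptions.

def classifier_free_guidance(eps_uncond, eps_cond, scale):
    """Combine noise predictions: eps_hat = eps_u + s * (eps_c - eps_u)."""
    return [u + scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Usage: toy noise predictions for two values.
eps_u = [0.0, 1.0]   # unconditional prediction, eps_theta(x, t)
eps_c = [1.0, 3.0]   # conditional prediction, eps_theta(x, t | C)
guided = classifier_free_guidance(eps_u, eps_c, scale=2.0)
```

Setting the scale to 0 recovers the unconditional prediction and 1 recovers the conditional one; values above 1 extrapolate past the conditional prediction, which is what pushes samples toward the constraints.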

Experimental evaluation

The method is evaluated on the text‑conditioned HumanML3D dataset. Quantitative metrics (FID, Diversity, Multimodal Accuracy) show that CondMDI outperforms prior RNN‑ and Transformer‑based in‑betweening models by 15‑25 % across the board. Ablation studies reveal that (a) the keyframe reconstruction loss is essential for low‑error interpolation, (b) classifier‑free guidance with s≈2.0 yields the best balance for user studies, and (c) the imputation module reduces average positional error from 0.35 m to 0.12 m when only 10 % of frames are constrained. Qualitative results demonstrate that the same keyframe skeleton can be animated in multiple stylistic ways simply by changing the textual prompt (e.g., “walking” vs. “running”).

A user study with 30 professional animators confirms practical benefits: participants could generate a satisfactory motion in an average of 2.3 minutes per query, a 45 % speed‑up compared to conventional keyframe editing tools. Participants highlighted the system’s ability to respect sparse constraints while still offering diverse, natural‑looking motions.

Discussion and limitations

While CondMDI proves that diffusion models can serve as a powerful, flexible backbone for high‑dimensional time‑series generation, the current implementation focuses on single‑person, ground‑plane motions without explicit physical constraints such as contact or inter‑character collisions. Real‑time deployment also remains a challenge; the diffusion process requires multiple denoising steps, which can be costly on limited hardware.

Future directions

The authors suggest extending the framework to multi‑character interactions by integrating graph‑based joint representations, coupling the diffusion generator with physics simulators for contact‑aware motion, and developing interactive UI components that allow artists to add or modify keyframes on the fly with immediate visual feedback. Model compression techniques (knowledge distillation, quantization) are also proposed to enable on‑device or AR/VR applications.

Conclusion

CondMDI unifies spatial keyframe constraints and semantic text guidance within a single diffusion‑based generative model, delivering high‑quality, diverse, and controllable human motion in‑betweening. By allowing arbitrary placement and sparsity of keyframes, it dramatically reduces the manual labor traditionally required in animation pipelines and opens new possibilities for creative, data‑driven character motion synthesis.
