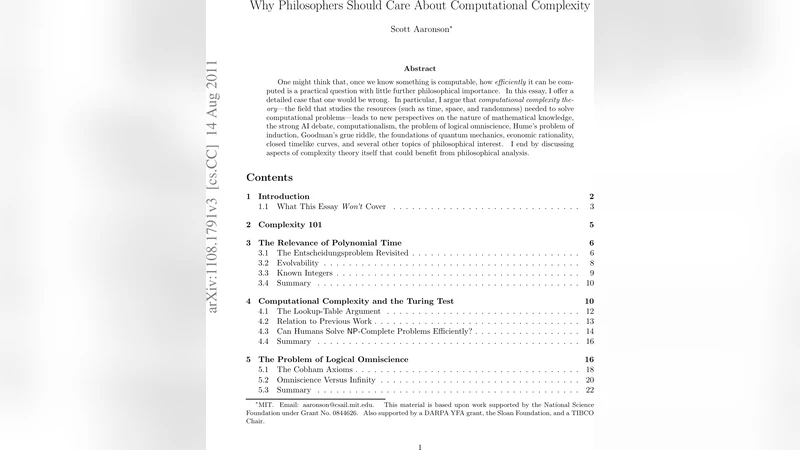

Why Philosophers Should Care About Computational Complexity

One might think that, once we know something is computable, how efficiently it can be computed is a practical question with little further philosophical importance. In this essay, I offer a detailed case that one would be wrong. In particular, I argue that computational complexity theory – the field that studies the resources (such as time, space, and randomness) needed to solve computational problems – leads to new perspectives on the nature of mathematical knowledge, the strong AI debate, computationalism, the problem of logical omniscience, Hume’s problem of induction, Goodman’s grue riddle, the foundations of quantum mechanics, economic rationality, closed timelike curves, and several other topics of philosophical interest. I end by discussing aspects of complexity theory itself that could benefit from philosophical analysis.

💡 Research Summary

Scott Aaronson’s essay makes a compelling case that computational complexity theory is not merely a technical subfield concerned with algorithmic efficiency, but a rich source of philosophical insight. He begins by distinguishing computability (the Turing‑machine notion that a problem is solvable in principle) from computational complexity (the quantitative study of the resources, such as time, space, and randomness, required to solve a problem). Even when a problem of philosophical interest is computable, the real question is often whether it can be solved within reasonable resource bounds, typically polynomial time, or whether it requires exponential or even greater resources.
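To make the polynomial‑versus‑exponential distinction concrete, here is a minimal Python sketch that compares a hypothetical cubic step count n^3 with an exponential one 2^n. Both cost models are illustrative assumptions chosen for the arithmetic, not figures from the essay.

```python
def step_counts(n: int) -> tuple[int, int]:
    """Return (n**3, 2**n): a hypothetical cubic step count versus an exponential one."""
    return n ** 3, 2 ** n


# Tabulate both counts for a few input sizes.
for n in (10, 20, 40, 80):
    poly, expo = step_counts(n)
    print(f"n={n:>3}  n^3={poly:>8,}  2^n={expo:,}")
```

At n = 10 the two counts are nearly equal (1,000 versus 1,024); at n = 80 the cubic count is 512,000 while 2^80 exceeds 10^24, which is why polynomial time is the usual stand‑in for “efficient.”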

The paper then surveys a wide array of philosophical topics, showing how each is illuminated by complexity considerations. First, the problem of logical omniscience, which standard epistemic logic builds in, is reframed: agents cannot instantly know all logical consequences of what they know, because deciding entailment is believed to be intractable. Propositional entailment is coNP‑complete (showing that premises entail a conclusion amounts to showing that a certain formula is unsatisfiable), and entailment in richer logics, such as some modal logics, is PSPACE‑complete. This provides a natural, resource‑bounded account of knowledge that avoids the unrealistic “all‑knowing” ideal.
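The cost of logical omniscience shows up in the most direct entailment checker: enumerate every truth assignment and look for a counterexample. A minimal sketch, assuming formulas are encoded as Python predicates over a tuple of booleans (the `entails` helper and this encoding are hypothetical). It runs through up to 2^n assignments for n variables; no polynomial‑time algorithm for propositional entailment is known, and finding one would imply P = NP.

```python
from itertools import product
from typing import Callable, Sequence

# Hypothetical encoding: a formula is a predicate over a tuple of n booleans.
Assignment = Sequence[bool]
Formula = Callable[[Assignment], bool]


def entails(premises: Sequence[Formula], conclusion: Formula, n_vars: int) -> bool:
    """Decide premises |= conclusion by trying all 2**n_vars truth assignments.

    Returns False as soon as some assignment makes every premise true and the
    conclusion false (a counterexample); otherwise returns True.
    """
    for assignment in product((False, True), repeat=n_vars):
        if all(p(assignment) for p in premises) and not conclusion(assignment):
            return False
    return True


# Modus ponens: {x0, x0 -> x1} entails x1.
modus_ponens = [lambda v: v[0], lambda v: (not v[0]) or v[1]]
print(entails(modus_ponens, lambda v: v[1], n_vars=2))  # True

# {x0 or x1} does not entail x0 (counterexample: x0 = False, x1 = True).
print(entails([lambda v: v[0] or v[1]], lambda v: v[0], n_vars=2))  # False
```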

Second, the strong AI debate is revisited. Aaronson argues that the question “Can a machine think like a human?” is not only about whether a suitable architecture exists but also about whether the cognitive tasks humans perform (pattern recognition, planning, language understanding) can be carried out by algorithms that run in polynomial time. If key cognitive functions corresponded to NP‑complete or PSPACE‑complete problems, then any machine that truly replicated human cognition would face the same complexity barriers that limit human reasoning.

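Third, the classic problem of induction and Goodman’s “grue” riddle are examined through the lens of PAC learning and pseudorandomness. PAC theory shows that generalizing from a polynomial number of examples is possible when the hypothesis class has bounded complexity (for instance, bounded VC dimension, or a finite size whose logarithm grows only polynomially); without such a restriction, induction from finitely many observations carries no guarantee. Cryptographic pseudorandomness shows the other side of the coin: under standard cryptographic assumptions, there are hypothesis classes that can be learned from few examples in principle, yet no polynomial‑time learner can find a good hypothesis, because the target functions look random to any efficient observer. This suggests that the problem of induction is partly a complexity issue.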
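For a finite hypothesis class H, the textbook realizable‑case PAC bound says that m ≥ (ln|H| + ln(1/δ))/ε examples suffice for every hypothesis consistent with the data to have error at most ε, with probability at least 1 − δ. A minimal sketch, with a hypothetical helper that takes ln|H| directly so that huge classes never have to be materialized:

```python
import math


def pac_sample_bound(log_hypothesis_count: float, epsilon: float, delta: float) -> int:
    """Samples sufficient for a consistent learner over a finite hypothesis class H.

    Realizable-case bound: m >= (ln|H| + ln(1/delta)) / epsilon. With probability
    at least 1 - delta, every hypothesis in H that agrees with all m samples has
    true error at most epsilon. `log_hypothesis_count` is ln|H|.
    """
    if log_hypothesis_count < 0 or not (0 < epsilon < 1) or not (0 < delta < 1):
        raise ValueError("need ln|H| >= 0 and 0 < epsilon, delta < 1")
    return math.ceil((log_hypothesis_count + math.log(1 / delta)) / epsilon)


n = 20
# Conjunctions of literals: each variable appears positive, negated, or not at all.
conjunctions = pac_sample_bound(n * math.log(3), epsilon=0.1, delta=0.05)
# All Boolean functions on n variables: |H| = 2**(2**n).
all_functions = pac_sample_bound(2 ** n * math.log(2), epsilon=0.1, delta=0.05)
print(conjunctions)  # 250
print(all_functions)
```

Conjunctions over n features form a class of size 3^n, so ln|H| grows linearly in n and about 250 samples suffice at n = 20. The class of all Boolean functions on 20 variables has 2^(2^20) members, and the same bound asks for roughly 7.3 million samples. This bounds sample complexity only; the pseudorandomness argument concerns running time.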

Fourth, Aaronson connects quantum mechanics to complexity. The class BQP (problems efficiently solvable on a quantum computer) contains P and is contained in PSPACE, but its relationship to NP is unknown, and BQP is not believed to contain the NP‑complete problems. This bears on the many‑worlds interpretation: quantum superpositions are often described as parallel computations across exponentially many branches, but a measurement yields only one outcome, so any quantum speedup has to come from interference that concentrates amplitude on the desired answer. This connects metaphysical interpretations of quantum theory to concrete resource constraints.
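Interference is the ingredient that a naive “parallel branches” picture leaves out. A minimal classical simulation, using the Deutsch‑Jozsa algorithm as an illustration (our choice; the summary names no specific algorithm): every input x gets an amplitude, a phase oracle flips the sign of the branches where f(x) = 1, and a second layer of Hadamard gates makes the branches interfere. The helper names are hypothetical, and the simulation stores all 2^n amplitudes, so it is only practical for small n.

```python
import math
from typing import Callable, List


def walsh_hadamard(state: List[float]) -> List[float]:
    """Apply a Hadamard gate to every qubit of a real-amplitude state vector."""
    out = list(state)
    h = 1
    while h < len(out):
        for start in range(0, len(out), 2 * h):
            for j in range(start, start + h):
                a, b = out[j], out[j + h]
                out[j] = (a + b) / math.sqrt(2)
                out[j + h] = (a - b) / math.sqrt(2)
        h *= 2
    return out


def deutsch_jozsa_all_zero_probability(f: Callable[[int], int], n: int) -> float:
    """Probability of measuring |0...0> after H^n, phase oracle (-1)^f(x), H^n.

    Equals |2^-n * sum_x (-1)^f(x)|^2: 1 if f is constant, 0 if f is balanced.
    """
    state = [0.0] * (2 ** n)
    state[0] = 1.0
    state = walsh_hadamard(state)  # equal amplitude on all 2^n inputs
    state = [amp * (-1) ** f(x) for x, amp in enumerate(state)]  # phase oracle
    state = walsh_hadamard(state)  # branches interfere
    return state[0] ** 2


print(deutsch_jozsa_all_zero_probability(lambda x: 0, 3))      # close to 1.0 (constant f)
print(deutsch_jozsa_all_zero_probability(lambda x: x & 1, 3))  # close to 0.0 (balanced f)
```

For a constant f the branches interfere constructively and |0...0⟩ is measured with probability 1; for a balanced f they cancel and the probability is 0. A single measurement thus answers a global question about f, yet it never reveals the individual values f(x) computed in each branch.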

Fifth, economic rationality is reinterpreted. Bounded rationality is modeled as agents solving optimization problems within polynomial time. Finding a Nash equilibrium is PPAD‑complete, even for two‑player games, so computing equilibria is believed to be intractable in general; this may help explain why real markets often settle on approximate or heuristic outcomes.
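A minimal sketch of what an equilibrium check involves, for two‑player games given as payoff matrices (the helper names are hypothetical). Checking every cell for a pure‑strategy equilibrium takes time polynomial in the size of the matrices, but such an equilibrium may not exist; Nash’s theorem guarantees only a mixed one. For 2×2 games a fully mixed equilibrium follows in closed form from the indifference conditions, whereas the PPAD‑completeness result concerns computing mixed equilibria of general games.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def pure_nash_equilibria(A: Matrix, B: Matrix) -> List[Tuple[int, int]]:
    """All pure-strategy equilibria of a bimatrix game.

    A[i][j] and B[i][j] are the row and column player's payoffs when the row
    player picks i and the column player picks j.
    """
    rows, cols = len(A), len(A[0])
    equilibria = []
    for i in range(rows):
        for j in range(cols):
            row_best = all(A[i][j] >= A[k][j] for k in range(rows))
            col_best = all(B[i][j] >= B[i][l] for l in range(cols))
            if row_best and col_best:
                equilibria.append((i, j))
    return equilibria


def mixed_equilibrium_2x2(A: Matrix, B: Matrix) -> Tuple[float, float]:
    """Fully mixed equilibrium of a 2x2 game via the indifference conditions.

    Returns (p, q): the probability that the row player plays row 0 and that
    the column player plays column 0. Assumes a fully mixed equilibrium exists
    (nonzero denominators, results in [0, 1]).
    """
    # p makes the column player indifferent between columns 0 and 1.
    p = (B[1][1] - B[1][0]) / (B[0][0] - B[1][0] - B[0][1] + B[1][1])
    # q makes the row player indifferent between rows 0 and 1.
    q = (A[1][1] - A[0][1]) / (A[0][0] - A[0][1] - A[1][0] + A[1][1])
    return p, q


# Prisoner's dilemma (strategy 0 = cooperate, 1 = defect).
pd_A = [[-1, -3], [0, -2]]
pd_B = [[-1, 0], [-3, -2]]
print(pure_nash_equilibria(pd_A, pd_B))  # [(1, 1)]

# Matching pennies: no pure equilibrium, one mixed equilibrium.
mp_A = [[1, -1], [-1, 1]]
mp_B = [[-1, 1], [1, -1]]
print(pure_nash_equilibria(mp_A, mp_B))   # []
print(mixed_equilibrium_2x2(mp_A, mp_B))  # (0.5, 0.5)
```

The prisoner’s dilemma has the single pure equilibrium (defect, defect), while matching pennies has none, and its only equilibrium has both players mixing 50/50.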

Finally, Aaronson turns the lens back on complexity theory itself. Open problems such as P versus NP have deep philosophical ramifications for the nature of mathematical proof, the limits of knowledge, and the demarcation between what is provable in principle and what is provable in practice. He argues that philosophers can contribute by scrutinizing the conceptual foundations of complexity (e.g., the meaning of “efficient,” the role of randomness, the interpretation of reductions), while complexity theorists can offer philosophers precise tools to formalize long‑standing debates.
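The gap between checking a proof and finding one has a standard computational analogue in subset sum, an NP‑complete problem. A minimal sketch with hypothetical `verify` and `search` helpers: verifying a proposed certificate (a set of indices) takes time linear in its size, while the brute‑force search may examine all 2^n subsets. Faster exact algorithms exist (for example, meet‑in‑the‑middle or pseudo‑polynomial dynamic programming), but none is known to run in time polynomial in the input length, and a polynomial‑time algorithm would imply P = NP.

```python
from itertools import combinations
from typing import Optional, Sequence, Tuple


def verify(numbers: Sequence[int], target: int, certificate: Sequence[int]) -> bool:
    """Fast check: are the certificate's indices distinct, in range, and summing to target?"""
    return (
        len(set(certificate)) == len(certificate)
        and all(0 <= i < len(numbers) for i in certificate)
        and sum(numbers[i] for i in certificate) == target
    )


def search(numbers: Sequence[int], target: int) -> Optional[Tuple[int, ...]]:
    """Brute-force search over all 2**len(numbers) index subsets, smallest first."""
    for size in range(len(numbers) + 1):
        for subset in combinations(range(len(numbers)), size):
            if sum(numbers[i] for i in subset) == target:
                return subset
    return None


nums = [3, 34, 4, 12, 5, 2]
print(search(nums, 9))             # (2, 4), since 4 + 5 = 9
print(verify(nums, 9, (2, 4)))     # True
print(verify(nums, 9, (0, 1)))     # False, since 3 + 34 = 37
```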

In conclusion, the essay demonstrates that computational complexity provides a unifying framework for addressing a host of philosophical questions, from epistemology and philosophy of mind to metaphysics and economics. By recognizing resource constraints as a fundamental aspect of rational thought, Aaronson invites a fruitful dialogue between philosophers and complexity theorists, suggesting that many traditional philosophical puzzles become clearer, or at least more sharply defined, once viewed through the prism of computational complexity.
