VR Isle Academy: A VR Digital Twin Approach for Robotic Surgical Skill Development

Recent progress in robotics, marked by improved efficiency and stability, has paved the way for the global adoption of surgical robotic systems (SRS). While these systems enhance surgeons’ skills by offering a more accurate and less invasive approach to operations, they come at a considerable cost. Moreover, SRS components often involve heavy machinery, which makes training challenging because access to such equipment is limited. In this paper we introduce a cost-effective way to facilitate training on a simulator of an SRS via a portable, device-agnostic, ultra-realistic simulation with hand-tracking and foot-tracking support. Error assessment is available both in real time and offline, which enables monitoring and tracking of users’ performance. The VR application has been objectively evaluated by several untrained testers, who showed a significant reduction in error metrics as the number of training sessions increased. This indicates that the proposed VR application, denoted VR Isle Academy, operates efficiently, improving the robot-controlling skills of the testers in an intuitive and immersive way and reducing the learning curve at minimal cost.

💡 Research Summary

The paper presents “VR Isle Academy,” a portable, device‑agnostic virtual‑reality (VR) digital twin designed to train surgeons on robotic surgical systems (SRS) such as the da Vinci platform. Recognizing that current SRS training is hampered by the high cost, large footprint, and limited availability of physical simulators, the authors propose a low‑cost solution that replicates the full master‑slave architecture of a modern surgical robot within a VR environment.

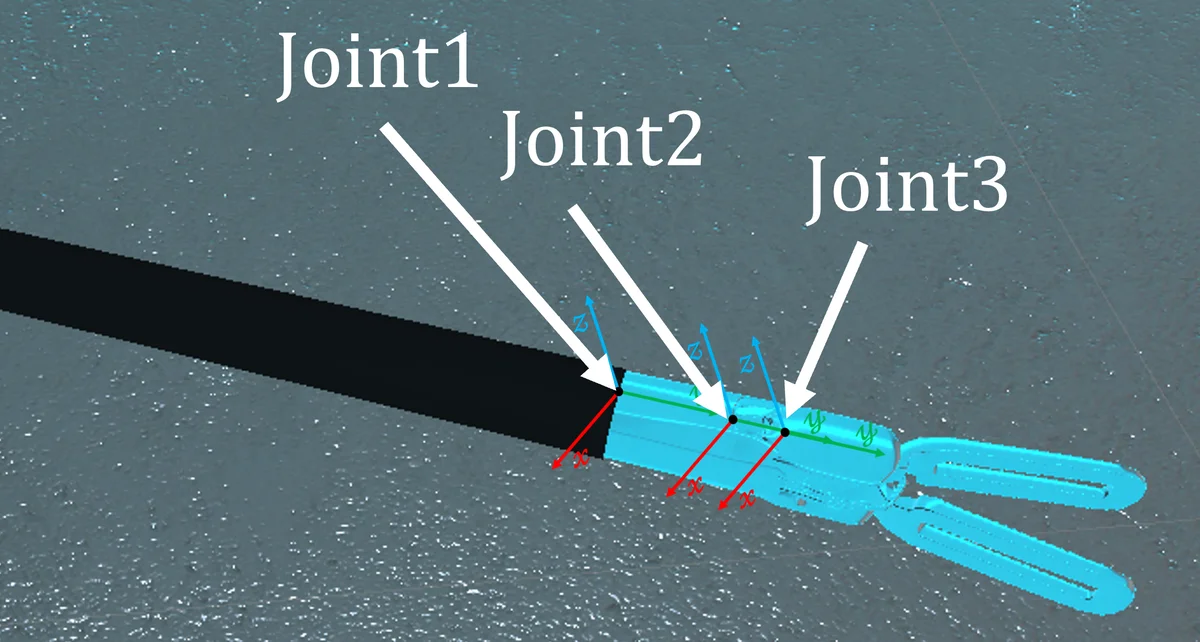

The technical implementation relies on the Unity game engine combined with the MAGES SDK, which provides realistic soft‑tissue deformation, cutting physics, and an analytics engine for event logging. The digital twin models both the master console (two joystick‑like controllers, three pedals, and a visual tablet) and the slave robotic arms (two 6‑DoF arms with 1‑DoF grippers). Hand tracking is achieved through standard VR controllers or hand‑tracking‑capable headsets, while foot tracking is added via external trackers to emulate the three pedals (camera, clutch, and 30‑degree view). This “hands‑and‑feet” interaction reproduces the multimodal control scheme of the real da Vinci system, a feature rarely found in existing VR surgical simulators.
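To make the role of the clutch pedal concrete, the following is a minimal Python sketch of clutch-gated teleoperation, not the paper's Unity/MAGES implementation. It assumes the usual clutch behaviour on surgical consoles: while the clutch is held, the master controller can be repositioned without moving the arm. The names `Pedal`, `ArmTeleop`, and `update` are hypothetical, positions are reduced to 3-D translation only, and the camera and 30-degree view pedals are named but not modelled.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional, Set, Tuple

Vec3 = Tuple[float, float, float]


class Pedal(Enum):
    """The three pedals named in the summary (behaviour below is assumed)."""
    CAMERA = auto()
    CLUTCH = auto()
    VIEW_30_DEG = auto()


@dataclass
class ArmTeleop:
    """Hypothetical translation-only mapping from one master controller to one arm."""
    arm_position: Vec3 = (0.0, 0.0, 0.0)
    _last_master: Optional[Vec3] = None

    def update(self, master_position: Vec3, pressed: Set[Pedal]) -> Vec3:
        """Apply the master's motion since the last frame unless the clutch is held."""
        if self._last_master is not None and Pedal.CLUTCH not in pressed:
            # Incremental mapping: only the frame-to-frame delta drives the arm.
            delta = tuple(m - p for m, p in zip(master_position, self._last_master))
            self.arm_position = tuple(a + d for a, d in zip(self.arm_position, delta))
        # Always remember the latest master pose so releasing the clutch
        # does not make the arm jump to the repositioned hand.
        self._last_master = master_position
        return self.arm_position
```

Because only frame-to-frame deltas are applied, a user can hold the clutch, move the hand back to a comfortable pose, release, and continue from where the arm was left.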

The authors designed twelve training scenarios that progressively introduce basic arm manipulation, camera control, clutch usage, and combined wrist‑articulation tasks. Each scenario includes step‑by‑step textual instructions, visual cues, and clearly defined success/failure conditions (e.g., “Ring Tower Transfer” requires precise force application without destabilizing the tower). Real‑time error metrics—such as positional deviation, task completion time, pedal usage frequency, and collision events—are captured by the SDK’s analytics engine and stored for offline analysis.
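The metrics listed above could be organised per attempt and aggregated per session roughly as follows. This is an illustrative Python sketch and does not reflect the MAGES analytics API: the class and field names (`AttemptRecord`, `SessionLog`, `summary`) are hypothetical, and the units (millimetres, seconds) are assumptions, since the summary does not state them.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from statistics import mean


@dataclass
class AttemptRecord:
    """One attempt at one scenario; all field names and units are assumed."""
    scenario: str
    positional_errors_mm: list[float]  # sampled deviations from the target
    completion_time_s: float
    pedal_presses: int
    collisions: int
    succeeded: bool

    @property
    def mean_positional_error_mm(self) -> float:
        return mean(self.positional_errors_mm)


@dataclass
class SessionLog:
    """All attempts made during one training session."""
    session_index: int
    attempts: list[AttemptRecord] = field(default_factory=list)

    def record(self, attempt: AttemptRecord) -> None:
        self.attempts.append(attempt)

    def summary(self) -> dict[str, float]:
        """Aggregate the per-attempt metrics for offline analysis."""
        if not self.attempts:
            raise ValueError("session has no recorded attempts")
        n = len(self.attempts)
        return {
            "mean_positional_error_mm": mean(
                a.mean_positional_error_mm for a in self.attempts
            ),
            "mean_completion_time_s": mean(a.completion_time_s for a in self.attempts),
            "total_pedal_presses": float(sum(a.pedal_presses for a in self.attempts)),
            "total_collisions": float(sum(a.collisions for a in self.attempts)),
            "success_rate": sum(a.succeeded for a in self.attempts) / n,
        }
```

Keeping raw per-attempt records and deriving summaries on demand supports both uses mentioned in the text: the latest record can be shown in real time, while the stored log remains available for offline comparison across sessions.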

A user study involved ten participants with no prior robotic‑surgery experience. Each participant completed at least five training sessions across the scenario set. Quantitative results showed a statistically significant reduction in average positional error (≈35 % decrease) and task completion time (≈28 % decrease) across sessions. Success rates in complex, multimodal tasks rose from 20 % in the first session to 75 % by the final session, demonstrating that repeated exposure in the VR environment effectively builds the psychomotor skills required for SRS operation.
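The percentages above are relative reductions between early and late sessions, whereas the success-rate change is best read in percentage points. A minimal Python sketch of both calculations follows. The function names are hypothetical, and the numeric inputs in the usage note are placeholders chosen for illustration, not data from the study.

```python
def relative_reduction(first: float, last: float) -> float:
    """Fractional decrease from `first` to `last`, e.g. 0.35 for a 35 % drop."""
    if first <= 0:
        raise ValueError("first-session value must be positive")
    return (first - last) / first


def percentage_point_change(first_rate: float, last_rate: float) -> float:
    """Change between two rates given as fractions in [0, 1], in percentage points."""
    for rate in (first_rate, last_rate):
        if not 0.0 <= rate <= 1.0:
            raise ValueError("rates must lie in [0, 1]")
    return (last_rate - first_rate) * 100.0
```

For example, a placeholder error that falls from 10.0 to 6.5 units gives `relative_reduction(10.0, 6.5)` of about 0.35, matching the form of the reported ≈35 % figure. The reported rise in success rate from 20 % to 75 % corresponds to `percentage_point_change(0.20, 0.75)`, which is about 55 percentage points.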

The paper discusses several limitations. First, the system lacks haptic feedback; while visual and kinematic cues are realistic, the absence of force feedback may limit transferability to high‑precision clinical tasks. Second, the evaluation metrics focus on geometric accuracy and speed, omitting measures of higher‑order clinical decision‑making and situational awareness. Third, the current implementation is tied to Unity and the MAGES SDK, which may constrain portability to other development ecosystems.

Future work is outlined to address these gaps: integration of low‑cost haptic devices, incorporation of AI‑driven adaptive feedback, expansion of scenario complexity to include tissue‑specific properties and emergency situations, and validation with experienced surgeons to assess skill transfer to real‑world robotic platforms.

In conclusion, VR Isle Academy demonstrates that a fully immersive, low‑cost VR digital twin can effectively replicate the essential control dynamics of a surgical robot, provide objective performance analytics, and accelerate skill acquisition for novice users. By removing the financial and logistical barriers associated with traditional SRS simulators, the platform holds promise for democratizing robotic surgery education worldwide.
