Physical Non-inertial Poser (PNP): Modeling Non-inertial Effects in Sparse-inertial Human Motion Capture

Existing inertial motion capture techniques use the human root coordinate frame to estimate local poses and treat it as an inertial frame by default. We argue that when the root has linear acceleration or rotation, the root frame should, in theory, be considered non-inertial. In this paper, we model the fictitious forces that are non-negligible in a non-inertial frame with an auto-regressive estimator carefully designed to follow physics. With the fictitious forces, the force-related IMU measurements (accelerations) can be correctly compensated in the non-inertial frame, so that Newton’s laws of motion are satisfied. In this case, the relationship between the accelerations and body motions is deterministic and learnable, and we train a neural network to model it for better motion capture. Furthermore, to train the neural network with synthetic data, we develop an IMU synthesis-by-simulation strategy that better models the noise of IMU hardware and allows parameter tuning to fit different hardware. This strategy not only enables network training with synthetic data but also enables calibration error modeling to handle bad motion capture calibration, increasing the robustness of the system. Code is available at https://xinyu-yi.github.io/PNP/.

💡 Research Summary

The paper revisits a fundamental assumption in inertial motion capture: that the human root coordinate frame can be treated as an inertial reference. In real motion the root (typically the pelvis) often experiences linear acceleration and rotation, which makes the root frame non-inertial. In such a frame, the accelerations measured by the IMUs no longer relate to local body motion through Newton's second law alone: fictitious forces induced by the root's own linear acceleration and rotation (including the Coriolis, centrifugal, and Euler forces) must also be accounted for. Ignoring these forces violates Newton's second law in the root frame and leads to large pose-estimation errors, especially during rapid movements or when calibration is imperfect.
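As a reference point, the textbook kinematics of an accelerating, rotating frame can be written as below. This is the standard classical-mechanics identity, not the paper's own notation, and every symbol is an assumption introduced here: $\mathbf{a}$ is the gravity-free acceleration of a body point in the world (inertial) frame, $\mathbf{a}_0$ is the linear acceleration of the root origin, $\boldsymbol{\omega}$ and $\dot{\boldsymbol{\omega}}$ are the root's angular velocity and angular acceleration, and $\mathbf{r}'$, $\mathbf{v}'$, $\mathbf{a}'$ are the point's position, velocity, and acceleration relative to the root frame, with all vectors expressed in the same coordinates.

$$
\mathbf{a}' \;=\; \mathbf{a}
\;-\; \underbrace{\mathbf{a}_0}_{\text{root translation}}
\;-\; \underbrace{2\,\boldsymbol{\omega}\times\mathbf{v}'}_{\text{Coriolis}}
\;-\; \underbrace{\boldsymbol{\omega}\times\left(\boldsymbol{\omega}\times\mathbf{r}'\right)}_{\text{centrifugal}}
\;-\; \underbrace{\dot{\boldsymbol{\omega}}\times\mathbf{r}'}_{\text{Euler}}
$$

When the root neither accelerates nor rotates, all four correction terms vanish and $\mathbf{a}' = \mathbf{a}$, which is the case implicitly assumed when the root frame is treated as inertial.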

To address this, the authors design a physics-informed auto-regressive (AR) estimator that predicts the fictitious force at each time step from past IMU readings and the current root state (position, velocity, orientation). The AR model respects physical constraints such as mass-inertia consistency, gravity, and temporal continuity, allowing it to generate a deterministic correction term. Compensating the raw accelerometer signal with the acceleration induced by the estimated fictitious force yields a “pure” acceleration that obeys Newtonian dynamics in the root frame. This corrected acceleration becomes a reliable input for a neural network that learns the mapping from IMU data to joint angles and positions.
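The compensation step can be illustrated with a minimal closed-form sketch. It is not the authors' learned AR estimator: it assumes the root's linear acceleration, angular velocity, and angular acceleration are already known, and it applies the textbook rotating-frame terms directly. The function names (`cross`, `frame_induced_acceleration`, `compensate`) and all argument names are hypothetical, and accelerations are assumed to be gravity-free and expressed in one common coordinate system.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def cross(a: Vec3, b: Vec3) -> Vec3:
    """Right-handed cross product a x b."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def frame_induced_acceleration(
    root_acc: Vec3,   # linear acceleration of the root origin
    omega: Vec3,      # angular velocity of the root frame
    omega_dot: Vec3,  # angular acceleration of the root frame
    r_rel: Vec3,      # sensor position relative to the root
    v_rel: Vec3,      # sensor velocity relative to the root
) -> Vec3:
    """Acceleration caused purely by the motion of the root frame.

    Sum of the translational, Coriolis, centrifugal, and Euler terms.
    """
    coriolis = cross(omega, v_rel)  # multiplied by 2 in the sum below
    centrifugal = cross(omega, cross(omega, r_rel))
    euler = cross(omega_dot, r_rel)
    total = [
        root_acc[i] + 2.0 * coriolis[i] + centrifugal[i] + euler[i]
        for i in range(3)
    ]
    return (total[0], total[1], total[2])


def compensate(
    world_acc: Vec3,
    root_acc: Vec3,
    omega: Vec3,
    omega_dot: Vec3,
    r_rel: Vec3,
    v_rel: Vec3,
) -> Vec3:
    """Acceleration of the sensor as seen from the (non-inertial) root frame."""
    induced = frame_induced_acceleration(root_acc, omega, omega_dot, r_rel, v_rel)
    return (
        world_acc[0] - induced[0],
        world_acc[1] - induced[1],
        world_acc[2] - induced[2],
    )


if __name__ == "__main__":
    # A sensor fixed 1 m from the root while the root spins at 2 rad/s about z:
    # in the world frame it feels a centripetal acceleration of 4 m/s^2 inward.
    print(compensate(
        world_acc=(-4.0, 0.0, 0.0),
        root_acc=(0.0, 0.0, 0.0),
        omega=(0.0, 0.0, 2.0),
        omega_dot=(0.0, 0.0, 0.0),
        r_rel=(1.0, 0.0, 0.0),
        v_rel=(0.0, 0.0, 0.0),
    ))
```

In the usage example the sensor does not move relative to the root, so the compensated acceleration should vanish once the centrifugal term is removed. Without compensation, a pose estimator working in the root frame would see a 4 m/s² signal with no corresponding local motion.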

Training data are generated synthetically using a physics‑based simulator (e.g., MuJoCo, Bullet) where a full‑body skeletal model performs a wide range of motions (walking, jumping, turning). Virtual IMUs are attached to the model, and the sensor output is corrupted with a parametrized noise model that captures bias, scale error, random walk, and calibration offsets. By tuning these parameters the authors can emulate different hardware (smartphones, wearables) and deliberately introduce calibration errors to test robustness. The large synthetic dataset (thousands of hours) is used for pre‑training; a modest amount of real‑world data then fine‑tunes the network.
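A parametrized noise model of this kind can be sketched for a single accelerometer axis as follows. This is an illustrative assumption, not the paper's simulator: the class `ImuNoiseParams`, the function `corrupt_acceleration`, and all default values are hypothetical, and the model only covers scale error, a constant bias, a random-walk bias drift, and white noise. Calibration offsets, which would typically enter as a small rotation between sensor and bone frames, are left out to keep the sketch short.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class ImuNoiseParams:
    """Hypothetical per-axis accelerometer noise parameters (illustrative values)."""
    scale_error: float = 0.01      # multiplicative gain error, unitless
    constant_bias: float = 0.05    # fixed offset, m/s^2
    bias_walk_std: float = 0.001   # std of the per-sample bias drift step, m/s^2
    white_noise_std: float = 0.02  # std of per-sample white noise, m/s^2


def corrupt_acceleration(
    clean: List[float], params: ImuNoiseParams, seed: int = 0
) -> List[float]:
    """Apply scale error, bias, random-walk drift, and white noise to one axis."""
    rng = random.Random(seed)  # seeded so that synthetic data is reproducible
    drift = 0.0
    noisy = []
    for a in clean:
        # The drifting part of the bias accumulates as a random walk.
        drift += rng.gauss(0.0, params.bias_walk_std)
        white = rng.gauss(0.0, params.white_noise_std)
        noisy.append(
            (1.0 + params.scale_error) * a + params.constant_bias + drift + white
        )
    return noisy


if __name__ == "__main__":
    clean_signal = [0.0, 0.5, 1.0, 0.5, 0.0]
    print(corrupt_acceleration(clean_signal, ImuNoiseParams(), seed=42))
```

Changing the fields of `ImuNoiseParams` is the analogue of tuning the simulation to a particular sensor: larger `white_noise_std` and `bias_walk_std` values emulate noisier hardware, while the fixed seed keeps each synthetic sequence reproducible.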

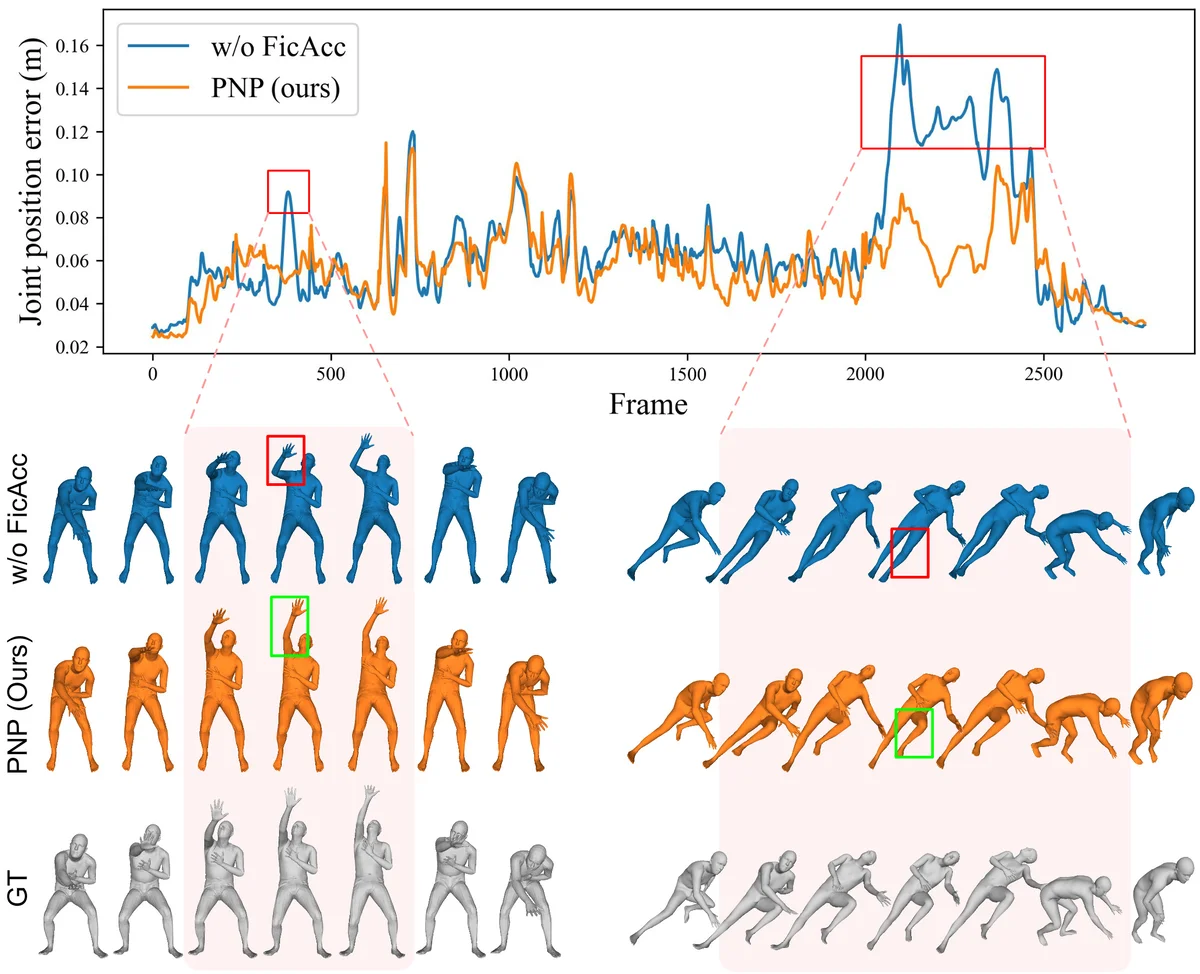

Experimental evaluation shows that the proposed “Physical Non-inertial Poser” (PNP) reduces mean joint-angle error by more than 30% compared with conventional pipelines that treat the root frame as inertial. The improvement is most pronounced in highly dynamic actions where the root experiences strong accelerations; there, the error drops to roughly half of the baseline. Moreover, when synthetic calibration errors are injected, PNP maintains stable performance, demonstrating resilience to sensor misalignment. The method also generalizes across different IMU specifications simply by adjusting the noise-model parameters.

Key contributions are: (1) a rigorous formulation of fictitious forces in human motion capture and a physics‑based AR estimator to predict them, (2) a systematic correction of IMU accelerations that restores Newtonian consistency, (3) a comprehensive synthetic data generation pipeline with realistic noise and calibration modeling, and (4) extensive validation showing superior accuracy and robustness. By bridging classical mechanics with modern deep learning, the work opens the door to low‑cost, high‑fidelity motion capture for applications ranging from real‑time animation and virtual reality to clinical gait analysis and robot‑assisted rehabilitation.