Ultra Inertial Poser: Scalable Motion Capture and Tracking from Sparse Inertial Sensors and Ultra-Wideband Ranging

While camera-based capture systems remain the gold standard for recording human motion, learning-based tracking systems based on sparse wearable sensors are gaining popularity. Most commonly, they use inertial sensors, whose propensity for drift and jitter has so far limited tracking accuracy. In this paper, we propose Ultra Inertial Poser, a novel 3D full body pose estimation method that constrains drift and jitter in inertial tracking via inter-sensor distances. We estimate these distances across sparse sensor setups using a lightweight embedded tracker that augments inexpensive off-the-shelf 6D inertial measurement units with ultra-wideband radio-based ranging, dynamically and without the need for stationary reference anchors. Our method then fuses these inter-sensor distances with the 3D states estimated from each sensor. Our graph-based machine learning model processes the 3D states and distances to estimate a person’s 3D full body pose and translation. To train our model, we synthesize inertial measurements and distance estimates from the motion capture database AMASS. For evaluation, we contribute a novel motion dataset of 10 participants who performed 25 motion types, captured by 6 wearable IMU+UWB trackers and an optical motion capture system, totaling 200 minutes of synchronized sensor data (UIP-DB). Our extensive experiments show state-of-the-art performance for our method over PIP and TIP, reducing position error from 13.62 to 10.65 cm (22% better) and lowering jitter from 1.56 to 0.055 km/s³ (a reduction of 97%). UIP code, UIP-DB dataset, and hardware design: https://github.com/eth-siplab/UltraInertialPoser

💡 Research Summary

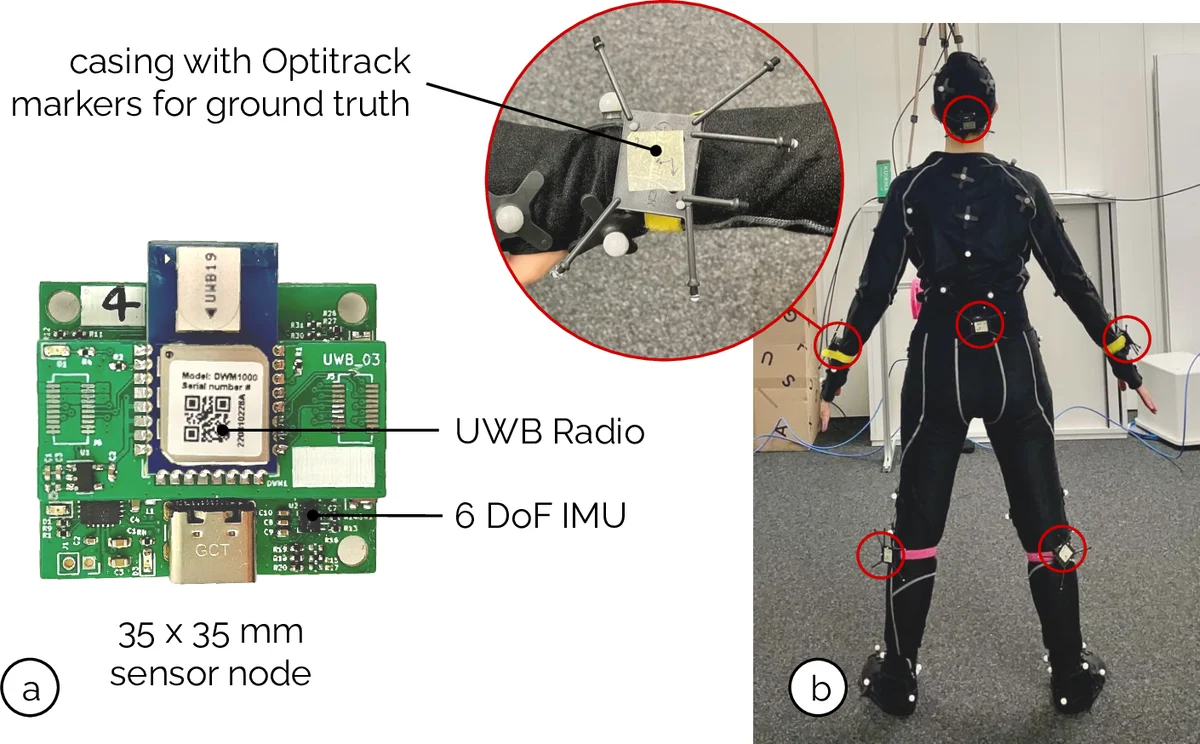

The paper introduces Ultra Inertial Poser (UIP), a novel full‑body motion capture system that fuses inertial measurement unit (IMU) data with ultra‑wideband (UWB) ranging to overcome the long‑standing drift and jitter problems of pure inertial tracking. The authors design a compact “IMU+UWB tracker” that integrates a commercial 6‑DoF IMU and a UWB radio on a single PCB, powered by a small battery and communicating via BLE. Unlike traditional UWB setups that rely on fixed anchors, each tracker performs two‑way ranging with every other tracker, providing real‑time inter‑sensor distances without any external infrastructure.
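The summary does not say which ranging protocol the trackers run. A common anchor-free choice for UWB radios is asymmetric double-sided two-way ranging (DS-TWR), in which a pair exchanges three messages (POLL, RESPONSE, FINAL) so that most of the clock-offset error between the two devices cancels. The following is a minimal sketch assuming DS-TWR; `ds_twr_distance` and `simulate_exchange` are hypothetical helpers, not functions from the UIP code.

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def ds_twr_distance(round_a: float, reply_a: float, round_b: float, reply_b: float) -> float:
    """Distance in metres from one asymmetric DS-TWR exchange (all intervals in seconds).

    round_a: initiator clock, POLL sent -> RESPONSE received
    reply_b: responder clock, POLL received -> RESPONSE sent
    round_b: responder clock, RESPONSE sent -> FINAL received
    reply_a: initiator clock, RESPONSE received -> FINAL sent
    """
    tof = (round_a * round_b - reply_a * reply_b) / (round_a + round_b + reply_a + reply_b)
    return SPEED_OF_LIGHT * tof


def simulate_exchange(distance_m: float, reply_a: float, reply_b: float, drift_b: float = 0.0):
    """Ideal intervals for one exchange; the responder's clock runs fast by drift_b (20e-6 = 20 ppm)."""
    tof = distance_m / SPEED_OF_LIGHT
    round_a = 2 * tof + reply_b                      # timed by the initiator (reference clock)
    round_b = (2 * tof + reply_a) * (1 + drift_b)    # timed by the drifting responder
    return round_a, reply_a, round_b, reply_b * (1 + drift_b)


# Usage: two trackers 1.5 m apart, responder clock off by 20 ppm.
d = ds_twr_distance(*simulate_exchange(1.5, reply_a=250e-6, reply_b=300e-6, drift_b=20e-6))
print(f"{d:.4f} m")  # 1.5000 m
```

Even with a 20 ppm drift on the responder's clock, the estimate is off by only about 15 µm at 1.5 m, which is why double-sided schemes are attractive when the devices share no common clock.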

To exploit these distances, the authors construct a graph where each node represents a sensor’s 3‑D state (position, velocity, acceleration, orientation) and each edge encodes the measured pairwise distance. A graph neural network (GNN) performs message passing to enforce distance consistency, while a Temporal Convolutional Network (TCN) captures the temporal dynamics of human motion. The combined GNN‑TCN model outputs the 3‑D positions of 24 body joints together with the global translation of the subject.
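To make the graph formulation concrete, here is a minimal NumPy sketch of one distance-conditioned message-passing step over a fully connected six-sensor graph. It is a generic layer with random, untrained weights, assuming concatenation-based messages and mean aggregation; it is not the published UIP architecture, and the temporal (TCN) stage is omitted. `NODE_DIM`, `HIDDEN`, and `message_passing_step` are hypothetical names.

```python
import numpy as np

NUM_SENSORS = 6
NODE_DIM = 12  # hypothetical: e.g. flattened 3x3 orientation + 3-D acceleration per sensor
HIDDEN = 32    # hypothetical hidden width


def message_passing_step(node_feats, dist, w_msg, w_upd):
    """One round of distance-conditioned message passing on a fully connected sensor graph.

    node_feats: (N, F) per-sensor states
    dist:       (N, N) symmetric inter-sensor distances in metres
    w_msg:      (F + 1, H) message weights; w_upd: (F + H, H) update weights
    """
    n, f = node_feats.shape
    # Row i, column j holds sender j's state plus the edge feature d_ij.
    senders = np.broadcast_to(node_feats[None, :, :], (n, n, f))
    edge_in = np.concatenate([senders, dist[:, :, None]], axis=-1)  # (N, N, F + 1)
    messages = np.maximum(edge_in @ w_msg, 0.0)                      # ReLU, (N, N, H)
    mask = 1.0 - np.eye(n)                                           # ignore self-messages
    agg = (messages * mask[:, :, None]).sum(axis=1) / (n - 1)        # mean over neighbours
    # Update each node from its own state and the aggregated messages.
    return np.maximum(np.concatenate([node_feats, agg], axis=-1) @ w_upd, 0.0)


# Usage with random stand-in data and untrained weights.
rng = np.random.default_rng(0)
positions = rng.normal(size=(NUM_SENSORS, 3))
dist = np.linalg.norm(positions[:, None, :] - positions[None, :, :], axis=-1)
feats = rng.normal(size=(NUM_SENSORS, NODE_DIM))
w_msg = rng.normal(scale=0.1, size=(NODE_DIM + 1, HIDDEN))
w_upd = rng.normal(scale=0.1, size=(NODE_DIM + HIDDEN, HIDDEN))
h = message_passing_step(feats, dist, w_msg, w_upd)
print(h.shape)  # (6, 32)
```

Feeding d_ij into every message lets a trained layer condition each neighbour's contribution on how far away that neighbour is measured to be; with the random weights used here, the output is only a shape-correct placeholder.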

Training data are generated synthetically from the AMASS motion‑capture database. For each AMASS sequence, a physics‑based simulator produces realistic IMU signals and UWB distance measurements, including sensor noise and radio‑interference models. This large‑scale synthetic dataset is used to train the network, which is then evaluated on a newly collected real‑world dataset (UIP‑DB). UIP‑DB comprises 10 participants performing 25 distinct motion types (walking, running, jumping, turning, etc.) while wearing six IMU+UWB trackers placed on the torso, shoulders, and ankles. The sessions are simultaneously recorded by an optical motion capture system, yielding 200 minutes of synchronized ground‑truth data.
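The summary describes the synthesis only at a high level. A simpler kinematic approach, used for accelerations in earlier sparse-IMU work, is to take virtual sensor positions on the body and differentiate them twice; distances can be computed directly between the same virtual sensors and corrupted with noise. The sketch below assumes 60 fps input and a hypothetical noise model (Gaussian noise plus an occasional positive non-line-of-sight bias); `synth_accelerations` and `synth_distances` are illustrative helpers, not the paper's simulator.

```python
import numpy as np


def synth_accelerations(pos, fps=60.0):
    """Accelerations (m/s^2) of virtual sensors from positions pos: (T, N, 3) in metres.

    Central second difference a_t = (p_{t-1} - 2 p_t + p_{t+1}) * fps^2;
    the first and last frames are left at zero.
    """
    acc = np.zeros_like(pos)
    acc[1:-1] = (pos[:-2] - 2.0 * pos[1:-1] + pos[2:]) * fps**2
    return acc


def synth_distances(pos, rng, noise_std=0.05, nlos_prob=0.02, nlos_bias=0.3):
    """Noisy pairwise distances (T, N, N) in metres, symmetric with a zero diagonal.

    Hypothetical noise model: Gaussian ranging noise (noise_std) plus, with probability
    nlos_prob, a positive bias (nlos_bias) mimicking body-blocked non-line-of-sight paths.
    """
    true = np.linalg.norm(pos[:, :, None, :] - pos[:, None, :, :], axis=-1)
    noise = rng.normal(0.0, noise_std, size=true.shape)
    nlos = rng.random(true.shape) < nlos_prob
    noisy = np.clip(true + noise + nlos * nlos_bias, 0.0, None)
    upper = np.triu(noisy, k=1)              # keep one measurement per pair
    return upper + np.swapaxes(upper, 1, 2)  # mirror it to make the matrix symmetric


# Usage: stand-in straight-line trajectories (a real pipeline would read SMPL vertices from AMASS).
rng = np.random.default_rng(0)
T, N, FPS = 120, 6, 60.0
t = np.arange(T)[:, None, None] / FPS
pos = rng.normal(size=(1, N, 3)) + rng.normal(scale=0.5, size=(1, N, 3)) * t
acc = synth_accelerations(pos, FPS)
dist = synth_distances(pos, rng)
print(acc.shape, dist.shape)  # (120, 6, 3) (120, 6, 6)
```

Keeping only the upper triangle before mirroring models one ranging measurement per pair, so d_ij and d_ji carry the same noise, as they would from a single two-way exchange.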

Extensive evaluation shows that UIP outperforms the state‑of‑the‑art inertial‑only methods PIP (Physical Inertial Poser) and TIP (Transformer Inertial Poser). The position error drops from 13.62 cm to 10.65 cm (a 22% improvement), and jitter, measured as the mean magnitude of jerk (the third time derivative of joint position), decreases from 1.56 km/s³ to 0.055 km/s³, a 97% reduction. Joint‑level errors also improve, especially for distal limbs where distance constraints are most beneficial. Importantly, the system maintains low drift over long recordings (30+ minutes), demonstrating suitability for real‑time streaming, AR/VR, and sports analytics.
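The summary quotes both metrics without formulas. Under the definitions commonly used in sparse-IMU pose estimation, position error is the mean Euclidean distance between predicted and ground-truth joints, and jitter is the mean jerk magnitude computed by finite differences. The sketch below assumes those definitions and a 60 fps frame rate; the paper's exact joint set and alignment protocol may differ, and the function names are hypothetical. It also checks the quoted percentages.

```python
import numpy as np


def mean_position_error_cm(pred, gt):
    """Mean Euclidean joint error in cm; pred and gt are (T, J, 3) arrays in metres."""
    return 100.0 * float(np.linalg.norm(pred - gt, axis=-1).mean())


def jitter_km_s3(pos, fps=60.0):
    """Mean jerk magnitude in km/s^3 for joint positions (T, J, 3) in metres.

    Jerk (third time derivative of position) is approximated by the third forward
    difference along time, scaled by fps^3, then converted from m/s^3 to km/s^3.
    """
    jerk = np.diff(pos, n=3, axis=0) * fps**3
    return float(np.linalg.norm(jerk, axis=-1).mean()) / 1000.0


def relative_reduction(before, after):
    """Fractional improvement of a lower-is-better metric."""
    return (before - after) / before


# Usage: check the percentages quoted for position error and jitter.
print(f"{relative_reduction(13.62, 10.65):.1%}")  # 21.8%
print(f"{relative_reduction(1.56, 0.055):.1%}")   # 96.5%
```

The ratios come out at about 21.8% and 96.5%, which the text rounds to 22% and 97%. Because jerk scales with fps³, jitter values are only comparable between methods evaluated at the same frame rate.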

The authors acknowledge limitations: UWB ranging can degrade in metal‑rich or multipath environments, and the current six‑sensor configuration may not scale trivially due to increased graph complexity. Future work will explore robust UWB models for outdoor settings, efficient sub‑graph sampling, and unsupervised domain adaptation to bridge the synthetic‑real gap.
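The scaling concern can be made concrete by counting edges. With all-pairs ranging, n trackers form n(n-1)/2 pairs, so both the graph's edge count and the radio airtime per ranging cycle grow quadratically. The sketch below assumes a simple round-robin schedule in which each exchange occupies a fixed, hypothetical 2 ms slot; real UWB schedulers may overlap or skip pairs, and the helper names are illustrative.

```python
from itertools import combinations


def ranging_schedule(num_trackers):
    """All unordered tracker pairs, ranged one after another in a round-robin cycle."""
    return list(combinations(range(num_trackers), 2))


def per_pair_rate_hz(num_trackers, slot_s=0.002):
    """Refresh rate of each pairwise distance when every exchange occupies one slot.

    slot_s (2 ms) is a hypothetical airtime per ranging exchange, not a value from UIP.
    """
    return 1.0 / (len(ranging_schedule(num_trackers)) * slot_s)


for n in (6, 10, 17):
    print(n, len(ranging_schedule(n)), round(per_pair_rate_hz(n), 1))
# 6 15 33.3
# 10 45 11.1
# 17 136 3.7
```

Going from 6 to 17 trackers multiplies the number of pairs by about nine, which either lowers the per-pair update rate or calls for smarter scheduling such as the sub‑graph sampling mentioned above.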

In summary, Ultra Inertial Poser delivers a low‑cost, anchor‑free, wearable motion capture solution that combines the ubiquity of IMUs with the absolute distance information of UWB. By integrating these modalities through a graph‑based deep learning framework, the system achieves state‑of‑the‑art accuracy and stability, and the authors release all code, hardware designs, and the UIP‑DB dataset to foster further research and commercial adoption.
