LeGO: Leveraging a Surface Deformation Network for Animatable Stylized Face Generation with One Example

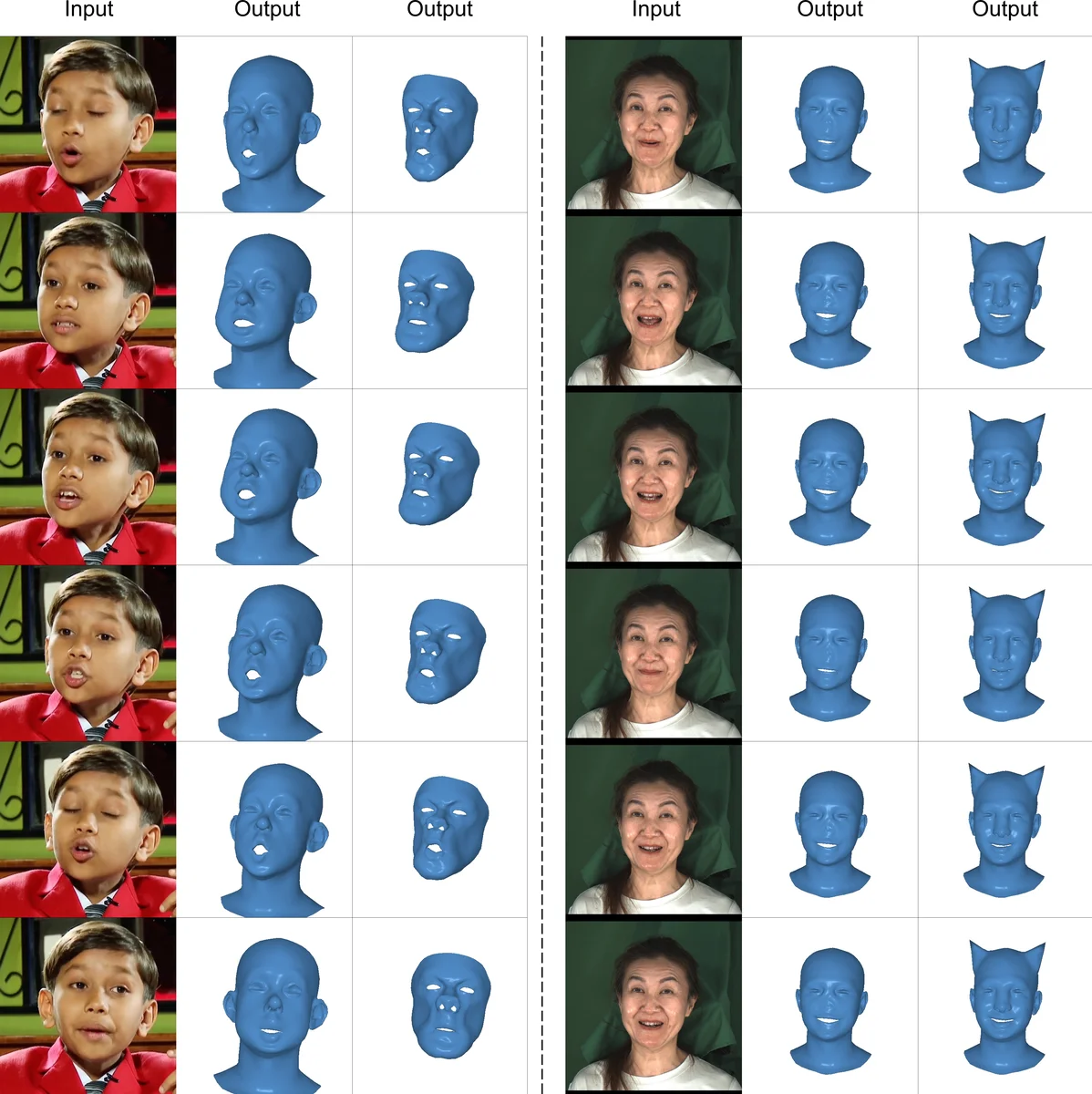

Recent advances in 3D face stylization have made significant strides in few to zero-shot settings. However, the degree of stylization achieved by existing methods is often not sufficient for practical applications because they are mostly based on statistical 3D Morphable Models (3DMM) with limited variations. To this end, we propose a method that can produce a highly stylized 3D face model with desired topology. Our methods train a surface deformation network with 3DMM and translate its domain to the target style using a paired exemplar. The network achieves stylization of the 3D face mesh by mimicking the style of the target using a differentiable renderer and directional CLIP losses. Additionally, during the inference process, we utilize a Mesh Agnostic Encoder (MAGE) that takes deformation target, a mesh of diverse topologies as input to the stylization process and encodes its shape into our latent space. The resulting stylized face model can be animated by commonly used 3DMM blend shapes. A set of quantitative and qualitative evaluations demonstrate that our method can produce highly stylized face meshes according to a given style and output them in a desired topology. We also demonstrate example applications of our method including image-based stylized avatar generation, linear interpolation of geometric styles, and facial animation of stylized avatars.

💡 Research Summary

The paper introduces LeGO, a novel framework for generating highly stylized 3D face models that can be animated with conventional 3D Morphable Model (3DMM) blend‑shapes, using only a single example of the target style. The authors identify a key limitation of prior work: most methods rely on statistical 3DMMs, which constrain the range of geometric variation and consequently produce only modest stylization. To overcome this, LeGO combines two complementary components: a Surface Deformation Network (SDN) and a Mesh‑Agnostic Encoder (MAGE).

The SDN is trained to map a canonical 3DMM mesh onto a stylized domain. Rather than learning a direct vertex‑to‑vertex correspondence, the network is supervised with a combination of geometric and perceptual losses. A differentiable renderer projects the deformed mesh into an image, which is then fed to CLIP. By employing a directional CLIP loss, the rendered image is encouraged to move in the embedding space toward the textual description of the target style, ensuring that high‑frequency details, shading cues, and overall artistic intent are captured. This perceptual supervision complements a traditional L2 vertex loss, allowing the network to produce non‑linear, high‑frequency deformations that go far beyond the linear subspace of a 3DMM.

MAGE addresses the second major obstacle: the need for a fixed mesh topology. Existing deformation pipelines typically require the same vertex ordering and resolution for both training and inference, limiting their applicability to real‑world assets that come in diverse resolutions and topologies. MAGE is a hybrid encoder that processes arbitrary meshes using graph neural network layers together with point‑cloud feature aggregators. It learns a topology‑invariant latent representation z, which can be extracted from any input mesh—whether it is a low‑poly game asset, a sculpted high‑resolution model, or even a noisy scan. The latent code z is then fed to the SDN as a conditional input, enabling the same style transformation to be applied to any mesh geometry.

Training proceeds with paired data (canonical 3DMM mesh, stylized exemplar). Crucially, only a single exemplar per style is required; the method automatically establishes a coarse correspondence between the exemplar and the 3DMM mesh using a self‑supervised alignment step. The loss function combines (i) vertex‑wise L2 distance, (ii) the directional CLIP loss on rendered images, and (iii) a regularization term that enforces consistency with the original 3DMM blend‑shape space. By preserving the blend‑shape coefficients during deformation, the final stylized mesh remains fully compatible with existing animation pipelines—any blend‑shape parameter that drives expression on the original 3DMM can be applied unchanged to the stylized output.

During inference, a user supplies an arbitrary mesh and selects a desired style. MAGE encodes the mesh into z, the style code is concatenated, and the SDN produces a stylized mesh that inherits the input topology. The resulting mesh can be animated in real time using the standard 3DMM blend‑shape set, enabling expressive facial motions on highly artistic avatars.

The authors evaluate LeGO on several fronts. Quantitatively, they report lower Fréchet Inception Distance (FID) and LPIPS scores compared with state‑of‑the‑art 3DMM‑based stylization methods, indicating both higher visual fidelity to the target style and better preservation of identity. Qualitatively, user studies show a strong preference for LeGO’s outputs, especially when the input topology is low‑resolution; the method retains stylistic details without excessive smoothing. Additional experiments demonstrate linear interpolation between different style codes, producing smooth transitions (e.g., from cartoon to sculptural style) and confirming that the latent space is well‑behaved. Finally, the paper showcases practical applications: (a) image‑to‑avatar pipelines where a single portrait is used to generate a stylized 3D avatar, (b) real‑time animation of stylized characters in AR/VR environments, and (c) rapid prototyping for game developers who need stylized faces on meshes of varying polygon counts.

In summary, LeGO makes three major contributions: (1) a domain‑translation framework that requires only one exemplar to achieve high‑quality stylization, (2) a topology‑agnostic encoder that decouples the deformation process from mesh resolution, and (3) seamless integration with existing 3DMM blend‑shape animation, preserving both expressive control and artistic style. These advances collectively push 3D face stylization toward practical, real‑world deployment in interactive media, virtual production, and digital‑human creation.

Comments & Academic Discussion

Loading comments...

Leave a Comment