Perceptual Thresholds for Radial Optic Flow Distortion in Near-Eye Stereoscopic Displays

We provide the first perceptual quantification of users’ sensitivity to radial optic flow artifacts and demonstrate a promising approach for masking this optic flow artifact via blink suppression. Near-eye HMDs allow users to feel immersed in virtual environments by providing visual cues, like motion parallax and stereoscopy, that mimic how we view the physical world. However, these systems exhibit a variety of perceptual artifacts that can limit their usability and the user’s sense of presence in VR. One well-known artifact is the vergence-accommodation conflict (VAC). Varifocal displays can mitigate VAC, but bring with them other artifacts such as a change in virtual image size (radial optic flow) when the focal plane changes. We conducted a set of psychophysical studies to measure users’ ability to perceive this radial flow artifact before, during, and after self-initiated blinks. Our results showed that visual sensitivity was reduced by a factor of 10 at the start and for ~70 ms after a blink was detected. Pre- and post-blink sensitivity was, on average, ~0.15% image size change during normal viewing and increased to ~1.5-2.0% during blinks. Our results imply that a rapid (under 70 ms) radial optic flow distortion can go unnoticed during a blink. Furthermore, our results provide empirical data that can be used to inform engineering requirements for both hardware design and software-based graphical correction algorithms for future varifocal near-eye displays. Our project website is available at https://gamma.umd.edu/ROF/.

💡 Research Summary

This paper presents the first quantitative assessment of human sensitivity to radial optic flow (ROF) artifacts that arise when the focal plane of a varifocal near‑eye display changes. While varifocal optics are a promising solution to the vergence‑accommodation conflict (VAC) that affects conventional stereoscopic head‑mounted displays, the change in virtual image size that accompanies focal adjustments can break the illusion of a stable, coherent world. The authors therefore set out to measure the smallest detectable image‑size change under normal viewing conditions and to determine how this threshold is modulated by the brief period of visual suppression that occurs around self‑initiated blinks.

Methodology

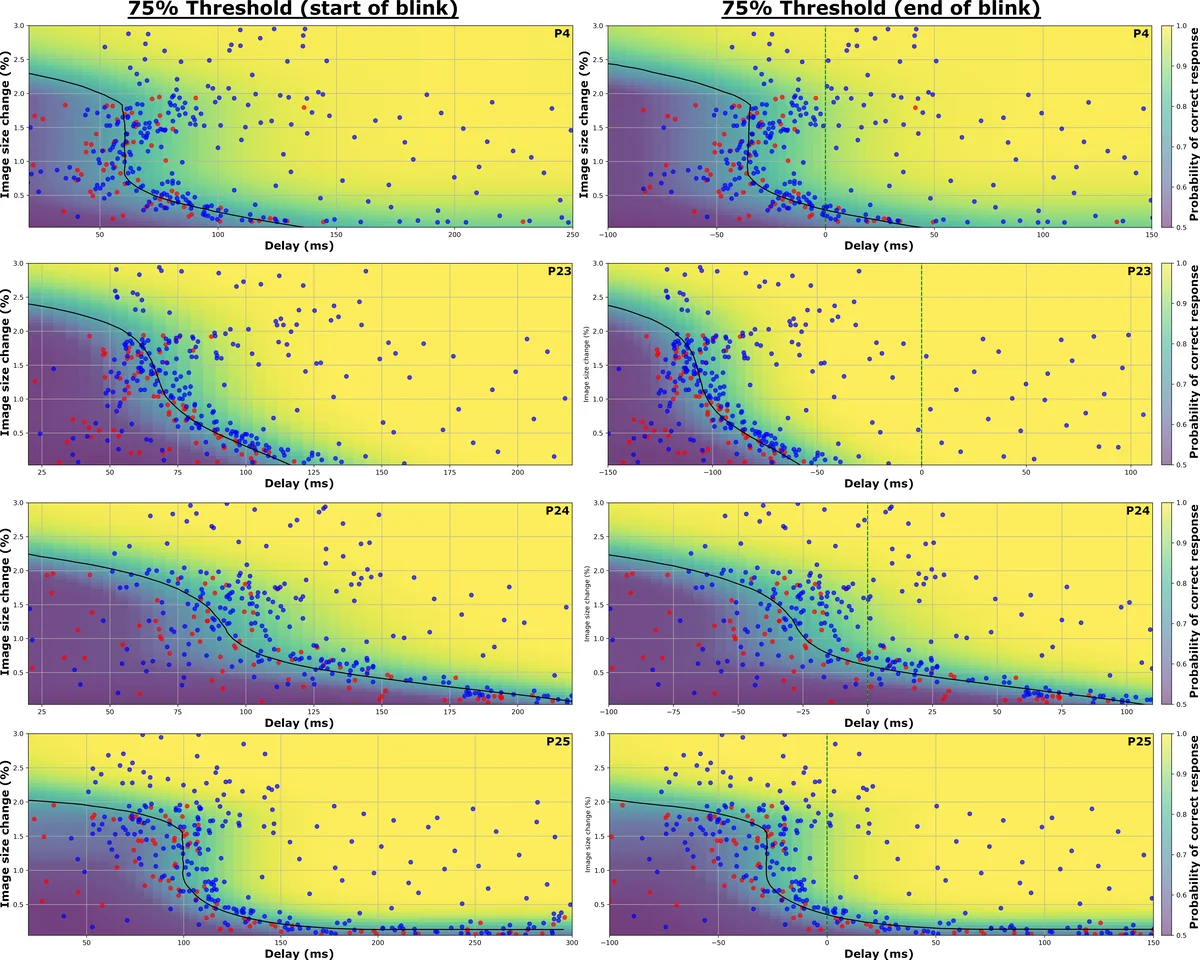

A psychophysical two‑alternative forced‑choice (2AFC) paradigm was employed with 24 participants who had normal or corrected‑to‑normal vision. On each trial a circular stimulus was presented on a varifocal prototype; its diameter was either unchanged or altered by a small percentage (ranging from 0.05 % to 3 %). Participants indicated whether the stimulus appeared larger or smaller. An infrared eye‑tracking module monitored spontaneous blinks in real time. When a blink was detected, the system logged the exact onset and offset timestamps and flagged all stimulus presentations occurring within a 70 ms window before or after the blink as “blink‑masked” trials. All other trials were classified as “normal‑view” trials.
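The trial labelling described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the `Blink` record, the `classify_trial` function, and the inclusive handling of the window edges are all assumptions; only the 70 ms window and the two labels come from the text.

```python
from dataclasses import dataclass
from typing import Iterable

MASK_WINDOW_MS = 70.0  # window size quoted in the summary


@dataclass(frozen=True)
class Blink:
    """Hypothetical blink record: onset and offset timestamps in milliseconds."""
    onset_ms: float
    offset_ms: float


def classify_trial(stimulus_ms: float,
                   blinks: Iterable[Blink],
                   window_ms: float = MASK_WINDOW_MS) -> str:
    """Label a stimulus presentation by its timing relative to logged blinks.

    Assumption: a presentation counts as blink-masked if it falls between
    (onset - window) and (offset + window), edges included.
    """
    for blink in blinks:
        if blink.onset_ms - window_ms <= stimulus_ms <= blink.offset_ms + window_ms:
            return "blink-masked"
    return "normal-view"


if __name__ == "__main__":
    logged = [Blink(onset_ms=1000.0, offset_ms=1150.0)]
    for t in (500.0, 950.0, 1100.0, 1220.0, 1300.0):
        print(t, classify_trial(t, logged))
```

Treating the window as symmetric around the blink follows the summary's wording; the abstract describes the suppression as running from blink start to about 70 ms after detection, so a stricter variant would drop the pre-onset margin.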

Results

Under normal viewing, the mean just‑noticeable difference (JND) for image‑size change was 0.15 % ± 0.04 %, confirming that the human visual system can detect extremely subtle scaling cues. In stark contrast, during the blink‑masked interval the JND rose dramatically to between 1.5 % and 2.0 %, representing roughly a ten‑fold reduction in sensitivity. The sensitivity drop was most pronounced from the instant a blink began until approximately 70 ms after the blink was detected; after this brief period, performance returned to baseline levels.
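The "roughly ten‑fold" figure follows directly from the two thresholds, and the percentages are easier to picture once converted to pixels. The sketch below works through both; the function names and the 2000‑pixel image width are illustrative assumptions, not values from the paper.

```python
def sensitivity_ratio(baseline_jnd_pct: float, masked_jnd_pct: float) -> float:
    """How many times larger the masked threshold is than the baseline one."""
    if baseline_jnd_pct <= 0:
        raise ValueError("baseline JND must be positive")
    return masked_jnd_pct / baseline_jnd_pct


def size_change_px(image_width_px: float, change_pct: float) -> float:
    """Convert a percentage image-size change into pixels of width."""
    return image_width_px * change_pct / 100.0


if __name__ == "__main__":
    baseline = 0.15           # % image-size change, normal viewing (from the text)
    masked_range = (1.5, 2.0)  # % image-size change, blink-masked (from the text)
    width_px = 2000.0          # hypothetical image width, for illustration only

    for masked in masked_range:
        ratio = sensitivity_ratio(baseline, masked)
        print(f"{masked}% / {baseline}% = {ratio:.1f}x less sensitive")

    print(f"baseline JND on a {width_px:.0f} px image: "
          f"{size_change_px(width_px, baseline):.1f} px")
    print(f"masked JND on a {width_px:.0f} px image: "
          f"{size_change_px(width_px, masked_range[0]):.1f} to "
          f"{size_change_px(width_px, masked_range[1]):.1f} px")
```

The lower end of the masked range gives a ratio of about 10 and the upper end about 13, which is consistent with the factor of 10 reported in the abstract.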

Interpretation and Engineering Implications

These findings indicate that sensitivity to image scaling is reduced roughly ten‑fold from the start of a blink until about 70 ms after the blink is detected, so that relatively large scaling distortions (below the 1.5–2 % blink‑masked threshold) can go unnoticed during that period. For designers of varifocal near‑eye displays, this creates a practical masking window. If the focal‑plane transition can be completed within this 70 ms interval and its size change stays below the blink‑masked threshold, the resulting ROF artifact is unlikely to be perceived. Consequently, hardware specifications such as lens actuation speed, positional accuracy, and control latency can be tied directly to the 70 ms window. On the software side, real‑time graphics pipelines can schedule corrective scaling operations (e.g., shader‑based warping) to coincide with detected or predicted blink periods, further reducing the likelihood of perceptible artifacts.
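One way to turn these numbers into an engineering check is a simple predicate that asks whether a planned focal‑plane transition stays under the relevant threshold and finishes inside the masking window. The sketch below is a hypothetical decision rule, not a method from the paper: the function name, its parameters, and the choice of the conservative 1.5 % end of the masked range are assumptions. A JND is also a statistical threshold, so staying below it lowers the chance of detection rather than guaranteeing invisibility.

```python
from typing import Optional

NORMAL_JND_PCT = 0.15   # baseline threshold from the text
BLINK_JND_PCT = 1.5     # conservative lower end of the 1.5-2.0 % masked range
MASK_WINDOW_MS = 70.0   # masking window after blink detection, from the text


def is_transition_masked(size_change_pct: float,
                         duration_ms: float,
                         ms_since_blink_detected: Optional[float] = None) -> bool:
    """Estimate whether a radial size change is likely to go unnoticed.

    size_change_pct: image-size change caused by the focal-plane transition.
    duration_ms: time the transition needs to complete.
    ms_since_blink_detected: elapsed time since a blink was detected,
        or None if no blink is in progress.
    """
    magnitude = abs(size_change_pct)

    # Changes below the normal-viewing threshold need no blink at all.
    if magnitude < NORMAL_JND_PCT:
        return True

    # Larger changes need a blink to hide behind.
    if ms_since_blink_detected is None:
        return False

    # The transition must finish before the masking window closes.
    finishes_in_window = ms_since_blink_detected + duration_ms <= MASK_WINDOW_MS
    return finishes_in_window and magnitude < BLINK_JND_PCT


if __name__ == "__main__":
    print(is_transition_masked(0.10, 200.0))       # tiny change, no blink needed
    print(is_transition_masked(1.0, 50.0, 0.0))    # starts at blink detection
    print(is_transition_masked(1.0, 50.0, 30.0))   # would finish after the window
    print(is_transition_masked(1.8, 50.0, 0.0))    # above the conservative threshold
```

A controller built on such a rule would defer large focal adjustments until a blink is detected and split any change above the masked threshold across several blinks.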

Limitations

The study’s participant pool is modest, and the reliance on spontaneous blinks introduces inter‑subject variability in blink frequency and duration that was not explicitly controlled. Moreover, the experimental environment was a static, well‑lit laboratory setting; the interaction of ROF with dynamic lighting, rapid head movements, or complex 3D scenes typical of immersive VR remains to be explored.

Future Directions

The authors propose several avenues for follow‑up work: (1) extending the psychophysical protocol to a broader range of visual content (text, textured 3D objects) and motion speeds; (2) investigating active blink‑induction techniques (e.g., electrical stimulation) to create a deterministic masking signal; (3) integrating eye‑tracking data into a closed‑loop varifocal control algorithm that predicts blink onset and dynamically schedules focal adjustments and graphical corrections.

Conclusion

By quantifying the perceptual thresholds for ROF both in ordinary viewing and during the brief visual suppression associated with blinks, the paper provides concrete, empirically grounded design criteria for next‑generation varifocal near‑eye displays. The 0.15 % baseline JND and the 1.5–2 % blink‑masked JND, together with the 70 ms effective masking window, give hardware engineers and graphics programmers a clear target for timing, speed, and correction accuracy. The publicly available project website (https://gamma.umd.edu/ROF/) supplies the full dataset and code, inviting the broader community to build upon these results and move toward truly seamless, artifact‑free virtual reality experiences.
