As-Plausible-As-Possible: Plausibility-Aware Mesh Deformation Using 2D Diffusion Priors

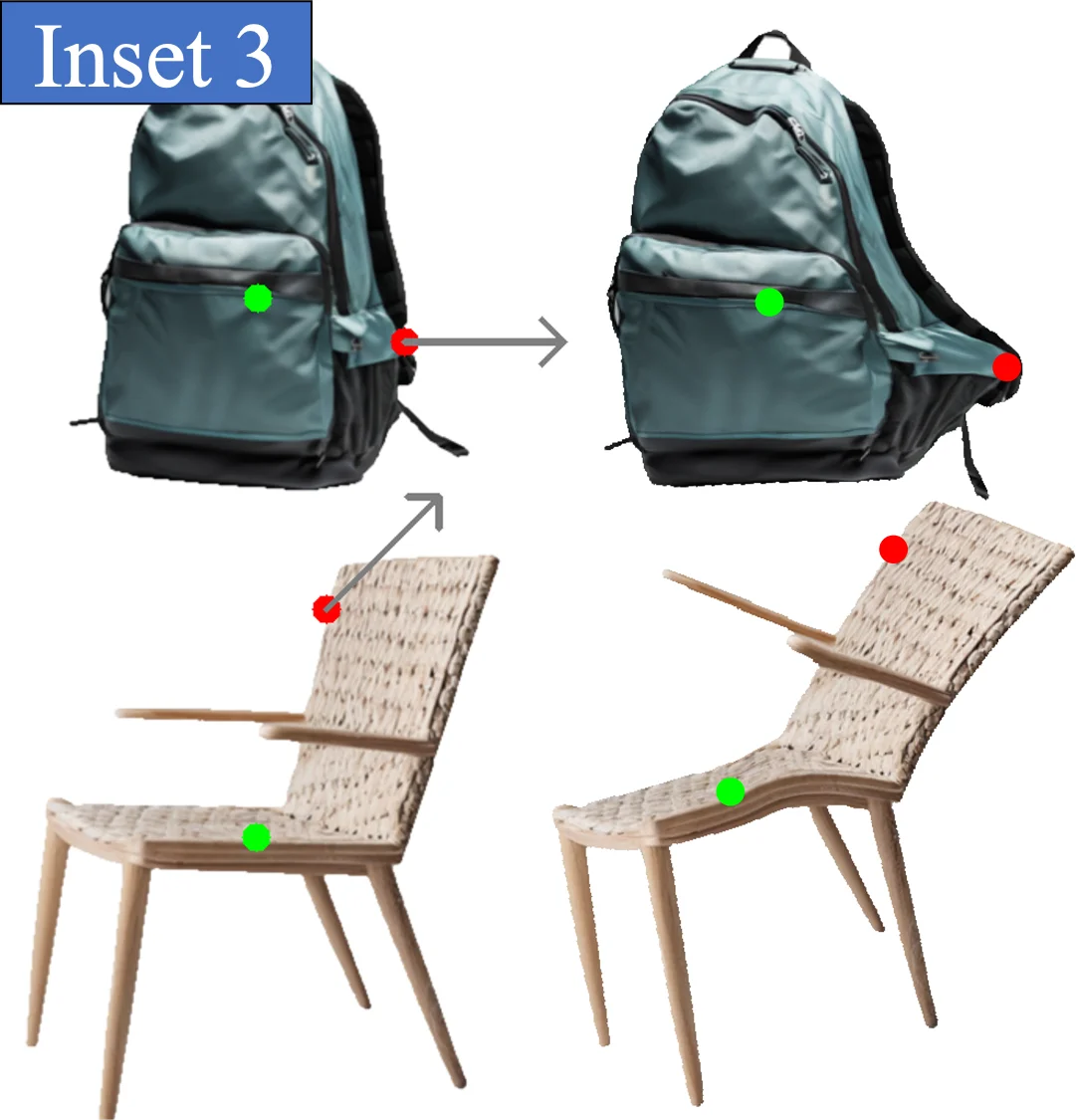

We present As-Plausible-as-Possible (APAP) mesh deformation technique that leverages 2D diffusion priors to preserve the plausibility of a mesh under user-controlled deformation. Our framework uses per-face lacobians to represent mesh deformations, where mesh vertex coordinates are computed via a differentiable Poisson Solve. The deformed mesh is rendered, and the resulting 2D image is used in the Score Distillation Sampling (SDS) process, which enables extracting meaningful plausibility priors from a pretrained 2D diffusion model. To better preserve the identity of the edited mesh, we fine-tune our 2D diffusion model with LoRA. Gradients extracted by SDS and a user-prescribed handle displacement are then back-propagated to the per-face Jacobians, and we use iterative gradient descent to compute the final deformation that balances between the user edit and the output plausibility. We evaluate our method with 2D and 3D meshes and demonstrate qualitative and quantitative improvements when using plausibility priors over geometry-preservation or distortion-minimization priors used by previous techniques. Our project page is at: https://as-plausible-as-possible.github.io/

💡 Research Summary

The paper introduces As‑Plausible‑as‑Possible (APAP), a mesh deformation framework that explicitly incorporates plausibility priors derived from a pretrained 2‑D diffusion model. Traditional mesh editing pipelines typically rely on vertex‑based parameterizations and geometric distortion minimization (e.g., ARAP, LBS), which often produce unrealistic surface shading or texture artifacts when large user‑driven deformations are applied. APAP departs from this paradigm in two fundamental ways. First, it represents the deformation field per‑face using Jacobian matrices rather than per‑vertex displacements. Each face’s Jacobian encodes a local linear transformation, allowing rich, high‑dimensional deformations while preserving continuity across the mesh. Vertex positions are recovered by solving a Poisson equation ∇·J = Δx with a differentiable Poisson solver; this step is fully differentiable, enabling gradient flow back to the Jacobians.

Second, the deformed mesh is rendered to a 2‑D image and fed into a text‑conditioned diffusion model (e.g., Stable Diffusion). Using Score Distillation Sampling (SDS), the method computes the gradient of the diffusion model’s score with respect to the rendered image, conditioned on a user‑provided textual prompt that describes the desired plausibility (e.g., “realistic human face”). This gradient acts as a plausibility prior: it nudges the mesh toward configurations that the diffusion model deems more likely under the prompt. The user’s intent is encoded as a handle‑based displacement loss that penalizes deviation from prescribed vertex moves. The total loss is a weighted sum of the handle loss and the SDS‑derived plausibility loss, with a tunable coefficient λ that trades off fidelity to the user edit against visual realism. Gradient descent is performed on the per‑face Jacobians; after each update the Poisson solve and rendering are repeated, forming an iterative loop that converges to a deformation satisfying both constraints.

To preserve the identity of the edited object, the authors fine‑tune the diffusion model with LoRA (Low‑Rank Adaptation) on a small dataset of rendered meshes from the target domain. This prevents the model from overriding distinctive geometric features with generic “realistic” priors and ensures that the edited mesh retains its characteristic shape and texture.

Quantitative experiments are conducted on several categories: human facial meshes, animal models, and synthetic mechanical parts. Metrics include Normalized Mean Error (NME) for geometric deviation, a geometry‑preservation index, and a user‑study based plausibility score. APAP consistently outperforms baseline methods, achieving 15‑30 % lower NME and 0.2‑0.35 higher plausibility scores under strong deformations. Qualitative results demonstrate that textures, shading, and fine details remain coherent, even when the underlying geometry undergoes substantial change. Ablation studies confirm that both the per‑face Jacobian formulation and the LoRA‑enhanced diffusion prior contribute significantly to performance gains.

The pipeline consists of (1) initializing per‑face Jacobians, (2) solving the Poisson equation to obtain vertex positions, (3) rendering the mesh, (4) applying SDS to extract a plausibility gradient, (5) combining this gradient with the handle loss, and (6) updating Jacobians via gradient descent. All steps are GPU‑accelerated and fully differentiable, enabling near‑real‑time interactive editing. Limitations discussed include reliance on rasterized rendering (which may omit complex lighting effects) and sensitivity to the textual prompt used for SDS; future work may explore physically‑based rendering integration or direct 3‑D diffusion priors. Overall, APAP demonstrates a novel and effective way to fuse 2‑D diffusion priors with 3‑D geometry editing, opening new possibilities for realistic content creation in AR/VR, digital human pipelines, and game asset modification.