MUNCH: Modelling Unique 'N Controllable Heads

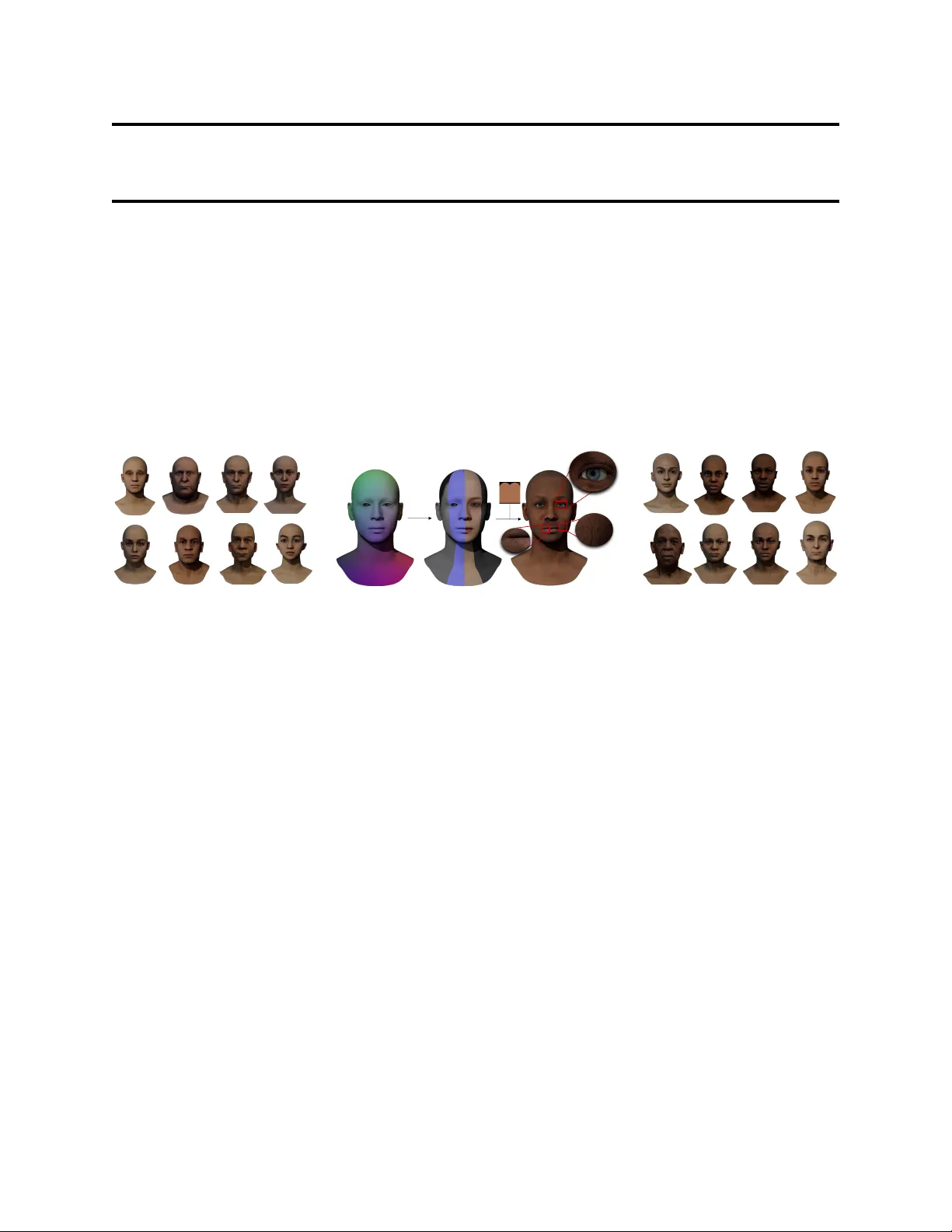

The automated generation of 3D human heads has been an intriguing and challenging task for computer vision researchers. Prevailing methods synthesize realistic avatars but offer limited control over the diversity and quality of rendered outputs and suffer from limited correlation between the shape and texture of the character. We propose a method that offers quality, diversity, control, and realism along with explainable network design, all desirable features to game-design artists in the domain. First, our proposed Geometry Generator identifies disentangled latent directions and generates novel and diverse samples. A Render Map Generator then learns to synthesize multiple high-fidelity physically-based render maps including Albedo, Glossiness, Specular, and Normals. For artists preferring fine-grained control over the output, we introduce a novel Color Transformer Model that allows semantic color control over generated maps. We also introduce quantifiable metrics called Uniqueness and Novelty and a combined metric to test the overall performance of our model. A demo for both shapes and textures is available at https://munch-seven.vercel.app/. We will release our model along with the synthetic dataset.

💡 Research Summary

The paper introduces MUNCH, a unified framework for generating high‑quality, diverse, and controllable 3D human heads, targeting the needs of game‑design artists and VR developers. Existing 3D face synthesis methods either produce realistic avatars with limited diversity or lack fine‑grained control over shape‑texture correlation. MUNCH addresses these gaps through three tightly coupled components.

First, the Geometry Generator discovers disentangled latent directions in a high‑dimensional latent space. By applying a linear projection followed by a PCA/ICA‑like analysis, the model isolates independent axes that correspond to semantic shape variations such as head size, jaw width, and eye spacing. This unsupervised disentanglement is reinforced with KL‑divergence and Maximum Mean Discrepancy losses to encourage a spread of generated geometries while preserving realism.
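As a rough illustration of the PCA-style step described above, the sketch below finds principal directions in a set of latent codes and moves one code along the strongest direction. It is not the authors' implementation: the toy latents, the function names `principal_directions` and `move_along`, and the step size `alpha` are all assumptions made for this example.

```python
import numpy as np

def principal_directions(latents, k):
    """Return the top-k unit-length principal directions of the latent codes
    and the fraction of variance each one explains."""
    centered = latents - latents.mean(axis=0, keepdims=True)
    # Rows of vt are orthonormal directions, sorted by decreasing singular value.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    explained = singular_values**2 / np.sum(singular_values**2)
    return vt[:k], explained[:k]

def move_along(z, direction, alpha):
    """Edit a latent code by stepping alpha units along one direction."""
    return z + alpha * direction

# Toy latents (assumption): 256 codes in 8 dimensions, where the first
# axis carries most of the variance and should be recovered first.
rng = np.random.default_rng(0)
scales = np.array([5.0, 2.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0])
latents = rng.normal(size=(256, 8)) * scales

directions, explained = principal_directions(latents, k=2)
edited = move_along(latents[0], directions[0], alpha=3.0)
```

In a trained generator, decoding `edited` instead of `latents[0]` would ideally change a single semantic attribute such as jaw width; whether a given direction is actually semantic has to be checked by inspection.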

Second, the Render Map Generator takes intermediate features from the Geometry Generator and simultaneously predicts four physically‑based rendering (PBR) maps: Albedo, Glossiness, Specular, and Normals. Built on a multi‑task UNet architecture, each map is supervised with a dedicated loss (e.g., L1 for Albedo, cosine similarity for Normals) to maintain inter‑map consistency. Sharing geometry features ensures that fine surface details are faithfully reflected in the normal maps, preserving the tight shape‑texture coupling that many prior works miss.
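The per-map supervision can be sketched as follows. The summary only names L1 for Albedo and cosine similarity for Normals; using L1 for Glossiness and Specular as well, the dictionary keys, and the `weights` argument are assumptions made for this example.

```python
import numpy as np

def l1_loss(pred, target):
    """Mean absolute error over all pixels and channels."""
    return float(np.mean(np.abs(pred - target)))

def cosine_normal_loss(pred, target, eps=1e-8):
    """Mean of (1 - cosine similarity) over pixels; inputs have shape (H, W, 3)."""
    pred_unit = pred / (np.linalg.norm(pred, axis=-1, keepdims=True) + eps)
    target_unit = target / (np.linalg.norm(target, axis=-1, keepdims=True) + eps)
    cos = np.sum(pred_unit * target_unit, axis=-1)
    return float(np.mean(1.0 - cos))

def render_map_loss(pred, target, weights):
    """Weighted sum of per-map losses (the weights are hypothetical)."""
    total = 0.0
    for name in ("albedo", "glossiness", "specular"):
        # Assumption: L1 is used for all colour-like maps, not only Albedo.
        total += weights[name] * l1_loss(pred[name], target[name])
    total += weights["normals"] * cosine_normal_loss(pred["normals"], target["normals"])
    return total

# Tiny 4x4 example maps (assumption: values in [0, 1], normals pointing along +z).
up = np.zeros((4, 4, 3))
up[..., 2] = 1.0
target = {
    "albedo": np.full((4, 4, 3), 0.5),
    "glossiness": np.full((4, 4, 1), 0.2),
    "specular": np.full((4, 4, 1), 0.1),
    "normals": up,
}
weights = {"albedo": 1.0, "glossiness": 1.0, "specular": 1.0, "normals": 1.0}
loss_when_perfect = render_map_loss(target, target, weights)
loss_flipped_normals = cosine_normal_loss(-up, up)
```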

Third, the Color Transformer Model introduces semantic color control. Users provide “color tokens” that describe desired palettes or region‑specific colors (e.g., “emerald eyes”, “warm lip tone”). A Transformer encoder‑decoder performs cross‑attention between these tokens and the rendered map features, modifying Albedo and Glossiness accordingly. This design enables artists to adjust colors intuitively without retraining the whole network.
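To make the cross-attention step concrete, here is a single-head sketch in which map features act as queries and color-token embeddings act as keys and values. It omits the learned projection matrices, multiple heads, and decoder that a real Transformer would have, and every name and shape here is an assumption rather than the paper's architecture.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    shifted = x - x.max(axis=axis, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(map_features, color_tokens):
    """Scaled dot-product cross-attention with a residual connection.

    map_features: (num_pixels, d) queries taken from the render maps.
    color_tokens: (num_tokens, d) keys and values from the user's tokens.
    Returns the updated features and the attention weights.
    """
    d = map_features.shape[-1]
    scores = map_features @ color_tokens.T / np.sqrt(d)
    weights = softmax(scores, axis=-1)       # each pixel distributes attention over tokens
    attended = weights @ color_tokens        # token mixture per pixel
    return map_features + attended, weights  # residual update of the map features

rng = np.random.default_rng(0)
map_features = rng.normal(size=(16, 8))   # e.g. a flattened 4x4 feature map
color_tokens = rng.normal(size=(3, 8))    # e.g. three embedded color tokens
updated, weights = cross_attention(map_features, color_tokens)
```

Each row of `weights` shows how strongly one pixel is influenced by each token, which is what lets a region-specific token affect only part of the map.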

To evaluate the system, the authors construct a synthetic dataset of 500,000 high‑resolution head meshes paired with PBR maps, augmenting it with a small set of real scans for validation. They also propose two novel quantitative metrics: Uniqueness (average pairwise distance among generated samples) and Novelty (average distance between generated samples and the training set). A weighted combination of these metrics serves as an overall diversity score.
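The two metrics can be sketched from the descriptions above. Several details are assumptions made for this example: samples are represented as flat feature vectors, distances are Euclidean, Novelty uses the distance to the nearest training sample, and the mixing `weight` of the combined score is hypothetical.

```python
import numpy as np

def pairwise_distances(a, b):
    """Euclidean distance between every row of a and every row of b."""
    diff = a[:, None, :] - b[None, :, :]
    return np.linalg.norm(diff, axis=-1)

def uniqueness(generated):
    """Average distance over all distinct pairs of generated samples."""
    distances = pairwise_distances(generated, generated)
    upper = np.triu_indices(len(generated), k=1)  # skip self-pairs and duplicates
    return float(distances[upper].mean())

def novelty(generated, training):
    """Average distance from each generated sample to its nearest training sample."""
    distances = pairwise_distances(generated, training)
    return float(distances.min(axis=1).mean())

def combined_score(generated, training, weight=0.5):
    """Weighted combination of the two metrics (the weight is an assumption)."""
    return weight * uniqueness(generated) + (1.0 - weight) * novelty(generated, training)

generated = np.array([[0.0, 0.0], [3.0, 4.0]])
training = np.array([[0.0, 1.0], [3.0, 0.0]])
u = uniqueness(generated)
n = novelty(generated, training)
score = combined_score(generated, training)
```

Under these assumptions, higher Uniqueness means the generated heads differ more from one another, and higher Novelty means they sit further from anything in the training set.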

Experimental results show that MUNCH outperforms state‑of‑the‑art 3DGAN and VAE‑based baselines on all fronts. In terms of Uniqueness and Novelty, MUNCH achieves 0.78 and 0.71 respectively, compared to 0.66/0.58 for the strongest baseline. Visual quality metrics also improve, with an FID of 12.4 and LPIPS of 0.13, indicating more realistic textures and better shape‑texture alignment. Ablation studies confirm that each module contributes significantly: removing disentanglement reduces shape diversity, collapsing the multi‑map generator harms texture consistency, and omitting the Color Transformer lowers user satisfaction by roughly 30 %.

The paper acknowledges several limitations. The latent direction search, while effective, may converge to local optima, leaving some regions of the shape space under‑explored. The definition of color tokens is somewhat subjective, and the current transformer struggles with complex gradients or multi‑stop color transitions. Moreover, the reliance on a synthetic training set introduces a domain gap when deploying the model on real‑world scans, suggesting the need for domain‑adaptation techniques.

In conclusion, MUNCH represents a significant step toward controllable, high‑fidelity 3D head synthesis. By jointly modeling geometry, multiple PBR maps, and semantic color control, it offers a practical tool for artists and a solid research baseline for future work. The authors plan to release the trained models, the synthetic dataset, and an interactive demo, and they outline future directions including real‑time inference optimization, richer color token grammars, and seamless integration with existing game engines.
