Non-negative isomorphic neural networks for photonic neuromorphic accelerators

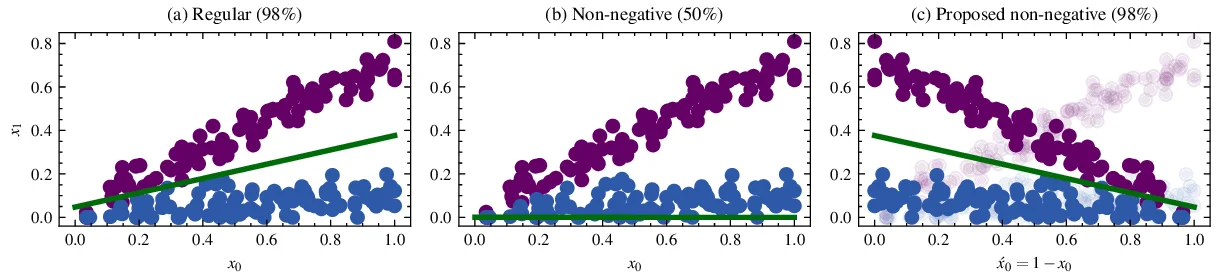

Neuromorphic photonic accelerators are becoming increasingly popular, since they can significantly improve computation speed and energy efficiency, reaching femtojoule-per-MAC efficiency. However, deploying existing DL models on such platforms is not trivial, since a wide range of photonic neural network architectures rely on incoherent setups and power-addition operational schemes that cannot natively represent negative quantities. This results in additional hardware complexity that increases cost and reduces energy efficiency. To overcome this, we can train non-negative neural networks and potentially exploit the full range of incoherent neuromorphic photonic capabilities. However, existing approaches cannot achieve the same level of accuracy as their regular counterparts due to training difficulties, as recent evidence also suggests. To this end, we introduce a methodology to obtain the non-negative isomorphic equivalents of regular neural networks that meet the requirements of neuromorphic hardware, overcoming the aforementioned limitations. Furthermore, we introduce a sign-preserving optimization approach that enables training of such isomorphic networks in a non-negative manner.

💡 Research Summary

The paper addresses a fundamental limitation of incoherent photonic neuromorphic accelerators: the inability to represent negative quantities directly in hardware. Conventional photonic neural networks rely on power‑addition schemes that only handle non‑negative signals, forcing designers to introduce extra circuitry (e.g., differential lines, current‑inversion modules) to emulate signed values. These workarounds increase chip area, cost, and energy consumption, undermining the promised femtojoule‑per‑MAC efficiency of photonic platforms.

To eliminate this mismatch, the authors propose a systematic methodology for constructing Non‑negative Isomorphic Neural Networks (NINNs)—networks that are mathematically equivalent to any conventional real‑valued model but consist solely of non‑negative weights and activations. The core transformation decomposes each weight matrix W into two non‑negative matrices W⁺ and W⁻, such that W = W⁺ – W⁻. Likewise, any activation vector x is expressed as x = x⁺ – x⁻, with x⁺, x⁻ ≥ 0. Substituting these decompositions yields:

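$$
Wx = (W^{+} - W^{-})(x^{+} - x^{-}) = \left(W^{+}x^{+} + W^{-}x^{-}\right) - \left(W^{+}x^{-} + W^{-}x^{+}\right)
$$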
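The decomposition W = W⁺ – W⁻, x = x⁺ – x⁻ can be checked numerically. The following is a minimal sketch, not the authors' implementation: it assumes the simplest possible split, the element-wise positive and negative parts of each array, and the helper names `split_nonneg` and `nonneg_matvec` are hypothetical. It computes the product Wx using only non-negative operands and keeps the result as a pair of non-negative vectors whose difference equals the signed output.

```python
import numpy as np


def split_nonneg(a):
    """Split an array into non-negative parts (pos, neg) with a == pos - neg.

    Assumption: element-wise positive/negative parts; the source only
    requires that both parts be non-negative.
    """
    return np.maximum(a, 0.0), np.maximum(-a, 0.0)


def nonneg_matvec(W, x):
    """Compute W @ x as a pair (y_pos, y_neg) using only non-negative operands."""
    W_pos, W_neg = split_nonneg(W)
    x_pos, x_neg = split_nonneg(x)
    # Terms that contribute with a positive sign after expanding the product.
    y_pos = W_pos @ x_pos + W_neg @ x_neg
    # Terms that contribute with a negative sign.
    y_neg = W_pos @ x_neg + W_neg @ x_pos
    return y_pos, y_neg


rng = np.random.default_rng(0)
W = rng.normal(size=(3, 4))  # signed weights of a regular layer
x = rng.normal(size=4)       # signed input vector

y_pos, y_neg = nonneg_matvec(W, x)
```

Here `y_pos - y_neg` reproduces `W @ x` up to floating-point error, while every intermediate quantity is non-negative. In this sketch the subtraction is performed only to verify the result; the output is otherwise carried forward as the pair (`y_pos`, `y_neg`).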
