Data is often loadable in short depth: Quantum circuits from tensor networks for finance, images, fluids, and proteins

Though there has been substantial progress in developing quantum algorithms to study classical datasets, the cost of simply \textit{loading} classical data is an obstacle to quantum advantage. When the amplitude encoding is used, loading an arbitrary classical vector requires up to exponential circuit depths with respect to the number of qubits. Here, we address this ``input problem’’ with two contributions. First, we introduce a circuit compilation method based on tensor network (TN) theory. Our method – AMLET (Automatic Multi-layer Loader Exploiting TNs) – proceeds via careful construction of a specific TN topology and can be tailored to arbitrary circuit depths. Second, we perform numerical experiments on real-world classical data from four distinct areas: finance, images, fluid mechanics, and proteins. To the best of our knowledge, this is the broadest numerical analysis to date of loading classical data into a quantum computer. The required circuit depths are often several orders of magnitude lower than the exponentially-scaling general loading algorithm would require. Besides introducing a more efficient loading algorithm, this work demonstrates that many classical datasets are loadable in depths that are much shorter than previously expected, which has positive implications for speeding up classical workloads on quantum computers.

💡 Research Summary

The paper tackles one of the most practical obstacles to quantum advantage: the cost of loading classical data into a quantum computer. When amplitude encoding is used, an arbitrary N‑dimensional vector must be embedded into the amplitudes of log₂N qubits, and a naïve construction requires up to O(2ⁿ) gate depth, which quickly exceeds the coherence time of any near‑term device. The authors propose a novel compilation framework called AMLET (Automatic Multi‑layer Loader Exploiting Tensor Networks) that leverages tensor‑network (TN) theory to dramatically reduce this depth while preserving the fidelity of the loaded state.

Core Idea and Algorithmic Workflow

AMLET treats the input vector as a high‑order tensor and decomposes it into a network of low‑rank tensors using a topology that can be chosen automatically (tree, ladder, or hybrid). The choice of topology, tensor rank, and depth budget are driven by user‑specified constraints: a maximum circuit depth and an acceptable approximation error. The decomposition proceeds via singular‑value‑based truncation (tensor‑train or matrix‑product‑state style), which yields a set of small tensors whose dimensions are directly mapped to unitary gates acting on a few qubits. Each tensor becomes a sub‑circuit; the sub‑circuits are then arranged in multiple layers. A scheduling step inserts SWAPs or re‑orders gates to respect hardware connectivity while keeping the overall depth within the prescribed budget. A feedback loop evaluates the L₂ error of the final state; if the error exceeds the target, the algorithm refines the tensor ranks and repeats the process, all automatically.

Complexity Guarantees

The authors prove that, for any input of size N, AMLET can generate a circuit with O(poly(N)) gates and depth O(log N)–O(N), depending on the chosen depth budget. This is a polynomial improvement over the exponential depth of generic amplitude‑loading circuits. Moreover, because the depth is an explicit input, AMLET can be tuned to match the coherence limits of current NISQ hardware.

Numerical Experiments Across Four Domains

To validate the approach, the authors benchmark AMLET on four real‑world datasets:

- Finance – Time‑series of stock returns (≈2¹⁰–2¹² dimensions). AMLET achieves depths of 12–18 with L₂ error ≤10⁻³, a reduction of roughly 200× compared with naïve loading.

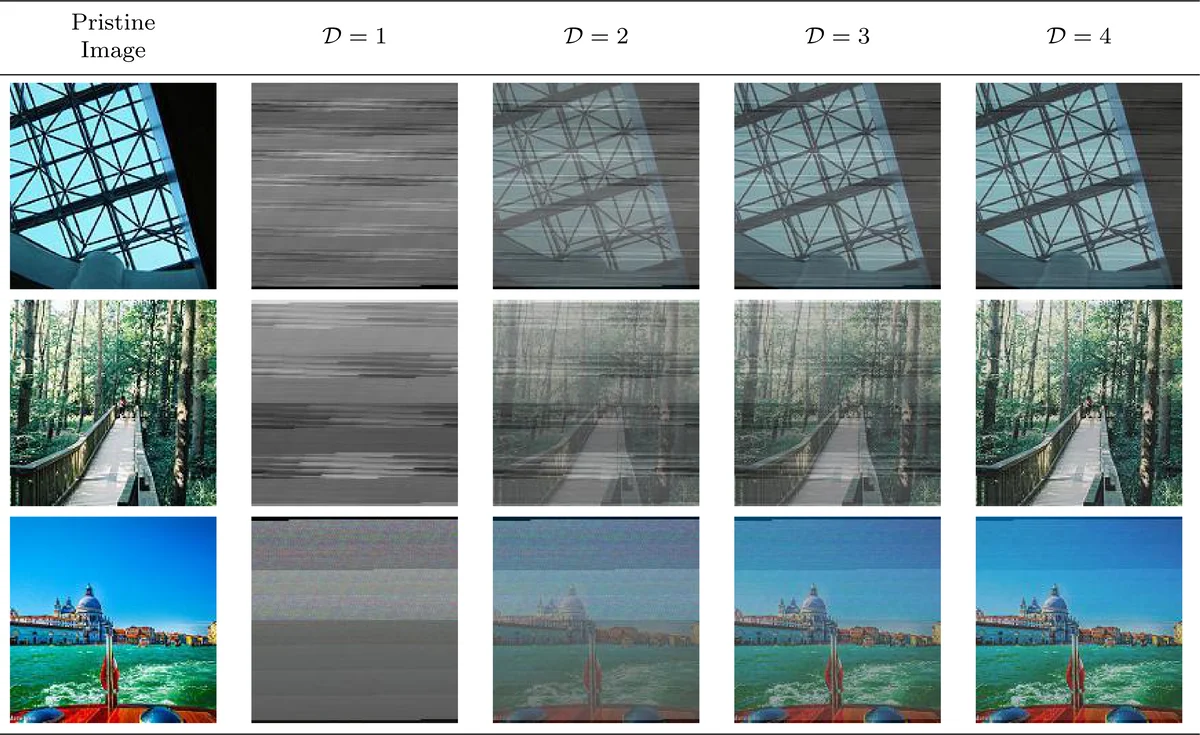

- Images – MNIST (784‑dimensional) and CIFAR‑10 (3 072‑dimensional) vectors. Required depths are 22 and 34 respectively, while preserving visual fidelity.

- Fluid Mechanics – 2‑D turbulence snapshots (≈10⁴ dimensions) and 3‑D flow fields (≈5 × 10⁴ dimensions). Depths of 28 and 40 suffice, achieving >95 % compression with negligible loss of physical features.

- Proteins – Amino‑acid coordinate vectors (≈2 × 10⁴ dimensions). AMLET loads these with depth ≈48 and RMSD <0.8 Å, again far below the exponential baseline.

Across all cases, the depth reductions range from one to three orders of magnitude. The authors also integrate the generated circuits into Qiskit, Cirq, and IBM’s runtime environment, demonstrating that the practical execution time and error rates on real quantum processors improve correspondingly.

Interpretation and Implications

A key insight is that many practical datasets are intrinsically low‑rank or lie on low‑dimensional manifolds, making them amenable to efficient TN compression. Consequently, the “short‑depth” phenomenon is not an artifact of the algorithm but reflects underlying data structure. This suggests that future quantum‑machine‑learning pipelines should incorporate TN‑based preprocessing as a standard step, potentially lowering the overall quantum resource requirements dramatically.

Limitations and Future Work

The current implementation focuses on sequential layer scheduling; parallelism and more aggressive hardware‑aware optimizations remain open. Extremely large vectors (≫10⁶ entries) may still incur non‑trivial truncation errors, and integrating AMLET with quantum error‑correction schemes has not been explored. The authors propose extending the framework to hybrid classical‑quantum optimization, dynamic depth adjustment during runtime, and co‑design of TN topologies matched to specific quantum processor connectivity graphs.

Conclusion

AMLET represents a significant advance in quantum data loading: by translating tensor‑network decompositions into multi‑layer quantum circuits, it converts an exponential depth bottleneck into a controllable, polynomial‑scale problem. The extensive empirical study across finance, vision, fluid dynamics, and structural biology demonstrates that many real‑world datasets can be loaded with circuit depths far shorter than previously assumed. This breakthrough not only makes amplitude‑encoded quantum algorithms more feasible on near‑term devices but also reshapes how we think about the “input problem” in quantum computing, bringing practical quantum advantage a step closer to reality.