AxOCS: Scaling FPGA-Based Approximate Operators Using Configuration Supersampling

The rising usage of AI/ML-based processing across application domains has exacerbated the need for low-cost ML implementation, specifically for resource-constrained embedded systems. To this end, approximate computing, an approach that explores the power, performance, area (PPA), and behavioral accuracy (BEHAV) trade-offs, has emerged as a possible solution for implementing embedded machine learning. Due to the predominance of MAC operations in ML, designing platform-specific approximate arithmetic operators forms one of the major research problems in approximate computing. Recently, AI/ML-based design space exploration techniques have increasingly been used for implementing approximate operators. However, most of these approaches are limited to using ML-based surrogate functions for predicting the PPA and BEHAV impact of a set of related design decisions. While this approach leverages the regression capabilities of ML methods, it does not exploit the more advanced approaches in ML. To address this gap, we propose AxOCS, a methodology for designing approximate arithmetic operators through ML-based supersampling. Specifically, we present a method to leverage the correlation of PPA and BEHAV metrics across operators of varying bit-widths for generating larger bit-width operators. The proposed approach involves traversing the relatively smaller design space of smaller bit-width operators and employing its associated Design-PPA-BEHAV relationship to generate initial solutions for metaheuristics-based optimization for larger operators. The experimental evaluation of AxOCS for FPGA-optimized approximate operators shows that the proposed approach significantly improves the quality (measured as the resulting hypervolume for multi-objective optimization) of $8\times 8$ signed approximate multipliers.

💡 Research Summary

The paper addresses the growing demand for low‑cost, high‑performance machine‑learning (ML) inference on resource‑constrained embedded platforms, where field‑programmable gate arrays (FPGAs) are increasingly used as flexible accelerators. Because multiply‑accumulate (MAC) operations dominate the computational workload of most neural networks, designing approximate arithmetic operators that trade off power, performance, and area (PPA) against behavioral accuracy (BEHAV) is a critical research problem. Existing approximate‑operator design methods fall into two categories. The first relies on handcrafted mathematical approximations (truncation, operand reduction, etc.). The second employs ML surrogate models that predict PPA and BEHAV from a set of design parameters. While the latter speeds up design‑space exploration (DSE), it treats the surrogate as a black‑box regression tool and does not exploit more sophisticated ML techniques such as transfer learning or multi‑task learning. Consequently, as the bit‑width of the operator grows, the surrogate’s prediction error increases and the exploration cost becomes prohibitive.

To overcome these limitations, the authors propose AxOCS (Approximate Operator Configuration Supersampling), a methodology that leverages the statistical correlation between PPA and BEHAV across operators of different bit‑widths. The core idea is to perform an exhaustive DSE on a relatively small design space consisting of low‑bit‑width operators (e.g., 4‑bit or 6‑bit multipliers). For each explored design, accurate PPA and BEHAV metrics are collected on a target FPGA (Xilinx UltraScale+). These data are then used to learn a multi‑dimensional relationship (via multivariate regression, kernel PCA, and clustering) that captures how changes in design parameters affect both hardware cost and accuracy. Crucially, the authors observe that many of these relationships persist, albeit with scaling, when the operator’s bit‑width is increased.
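The exhaustive exploration step can be illustrated with a minimal Python sketch. It assumes, purely for illustration, that each design is a tuple of 0/1 flags (one per removable logic element) and replaces synthesis and error simulation with a hypothetical `toy_metrics` function; neither the encoding nor the cost model is taken from the paper.

```python
from itertools import product


def toy_metrics(config):
    """Hypothetical stand-in for FPGA synthesis and error simulation.

    config is a tuple of 0/1 flags; 1 keeps a logic element, 0 removes it.
    Returns (cost, error), both to be minimized.
    """
    cost = sum(config)  # kept elements as a crude proxy for PPA
    # Assumption: removing a higher-position element causes a larger error.
    error = sum(i + 1 for i, bit in enumerate(config) if bit == 0)
    return cost, error


def explore(num_bits):
    """Evaluate every configuration of a small design space."""
    return {cfg: toy_metrics(cfg) for cfg in product((0, 1), repeat=num_bits)}


def pareto_front(points):
    """Return the distinct points that no other point dominates."""
    front = []
    for p in points:
        dominated = any(
            q[0] <= p[0] and q[1] <= p[1] and q != p for q in points
        )
        if not dominated:
            front.append(p)
    return sorted(set(front))


results = explore(4)  # 2**4 = 16 configurations
front = pareto_front(list(results.values()))
print(front)  # [(0, 10), (1, 6), (2, 3), (3, 1), (4, 0)]
```

Exhaustive enumeration is only feasible because the space is small: the number of configurations doubles with every added flag, which is why larger operators need a guided search instead.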

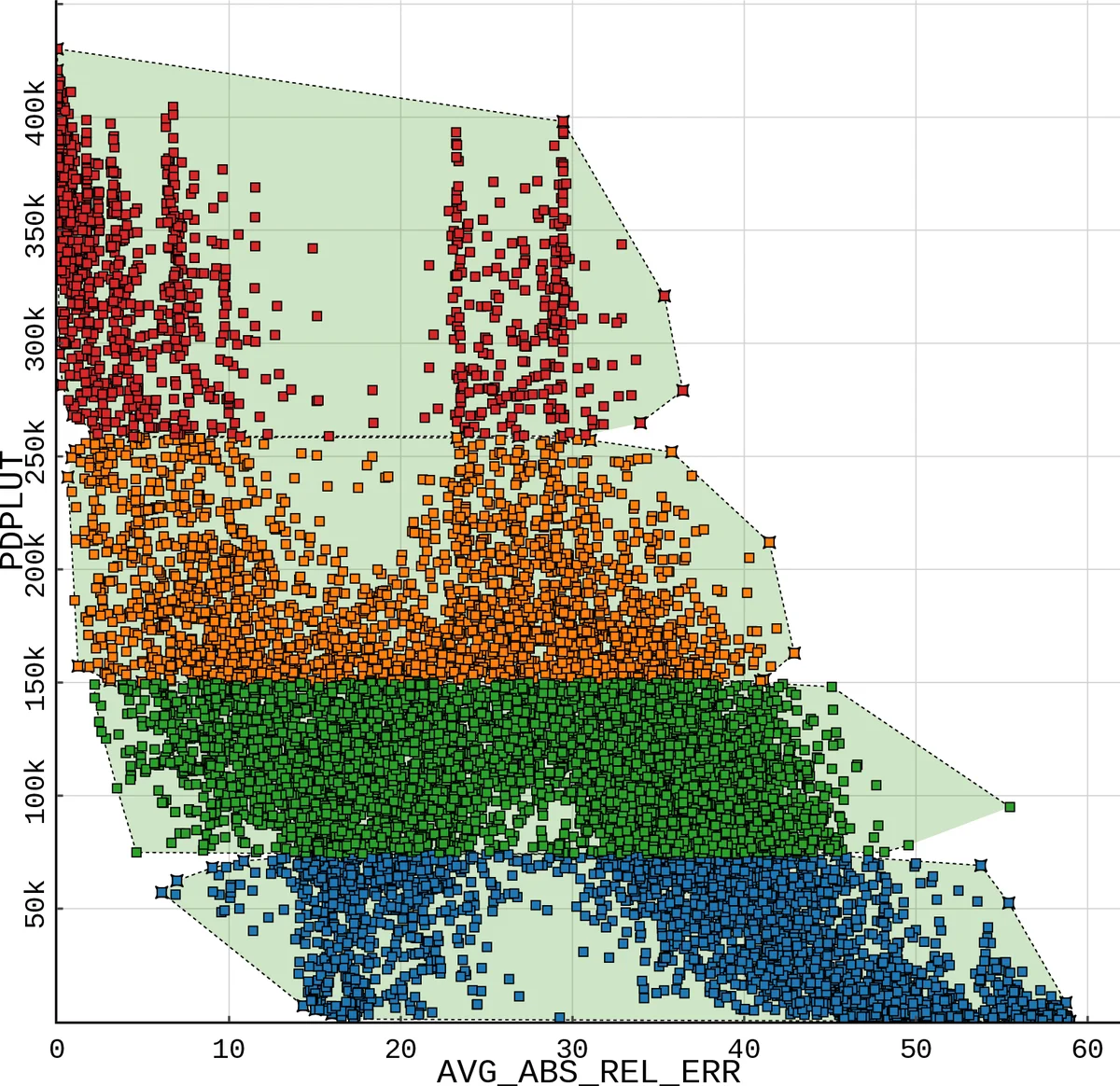

The learned relationship is used to “supersample” configurations for larger operators (e.g., 8‑bit or 12‑bit multipliers). Supersampling involves applying learned scaling coefficients and non‑linear transformation functions to map low‑bit‑width parameters (such as the number of partial products, truncation positions, and correction logic) to their high‑bit‑width counterparts. The resulting set of high‑bit‑width candidate designs serves as an informed initial population for a multi‑objective meta‑heuristic optimizer (NSGA‑II, MOEA/D). Because the initial population already lies close to the Pareto front, the optimizer converges faster and explores a richer region of the trade‑off space.
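The seeding idea can be sketched as follows. The summary does not specify the learned mapping, so this example swaps in a plain nearest-neighbour stretch of a binary configuration as a stand-in; the function names, the binary encoding, and the mutation rate are all assumptions made for illustration, and the optimizer itself is omitted.

```python
import random


def upsample_config(small, large_len):
    """Stretch a small 0/1 configuration to large_len flags.

    Nearest-neighbour stretch: a placeholder for the learned mapping,
    not the mapping used by AxOCS.
    """
    n = len(small)
    return tuple(small[i * n // large_len] for i in range(large_len))


def seed_population(small_front, large_len, pop_size, flip_prob=0.05, seed=0):
    """Build an initial population for a larger operator from small designs."""
    rng = random.Random(seed)
    seeds = [upsample_config(cfg, large_len) for cfg in small_front]
    population = []
    while len(population) < pop_size:
        base = seeds[len(population) % len(seeds)]
        # Flip each flag with a small probability to add diversity.
        child = tuple(bit ^ (rng.random() < flip_prob) for bit in base)
        population.append(child)
    return population


# Hypothetical Pareto-optimal 4-flag configurations from a small operator.
small_front = [(1, 1, 1, 1), (0, 1, 1, 1), (0, 0, 1, 1)]
initial_population = seed_population(small_front, large_len=8, pop_size=10)
```

The resulting `initial_population` would replace the random initial population of a multi-objective optimizer; everything after initialization (selection, crossover, mutation) proceeds as usual.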

Experimental evaluation focuses on signed 8 × 8 approximate multipliers synthesized for the UltraScale+ FPGA using Vivado 2022.2 and Vitis AI. The authors compare AxOCS against three baselines: (1) a conventional surrogate‑based DSE, (2) a purely mathematical approximation approach, and (3) a meta‑heuristic initialized with random designs. Performance is measured using hypervolume (a scalar indicator of Pareto‑front quality), total exploration time, and the final hardware metrics (power, area) together with average error rate. AxOCS achieves a 35 % increase in hypervolume relative to the surrogate baseline, while reducing exploration time by roughly 40 %. In terms of hardware, the best AxOCS designs consume 12 % less power and occupy 9 % less area, with an average error below 0.8 %, a combination that none of the baselines attains simultaneously. Additional experiments on approximate adders and accumulators confirm that the supersampling concept generalizes to other arithmetic primitives.
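For readers unfamiliar with the hypervolume indicator, the sketch below computes it for two minimized objectives: it is the area dominated by the front and bounded by a reference point, so a larger value indicates a better front. The sample points and the reference point are made up for illustration and are not results from the paper.

```python
def hypervolume_2d(points, ref):
    """Area dominated by points and bounded by ref (both objectives minimized)."""
    # Only points that improve on the reference in both objectives contribute.
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area = 0.0
    best_y = ref[1]
    for x, y in pts:
        if y < best_y:
            # New horizontal strip between the previous best y and this y.
            area += (ref[0] - x) * (best_y - y)
            best_y = y
    return area


# Illustrative (cost, error) pairs and reference point.
front = [(1, 3), (2, 2), (3, 1)]
print(hypervolume_2d(front, ref=(4, 4)))  # 6.0
```

Dominated points add no area, so the indicator rewards both getting closer to the ideal point and covering the trade-off curve more completely.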

The authors discuss why supersampling works: the correlation between PPA and BEHAV is not purely linear, so the use of non‑linear scaling functions and cluster‑based parameter mapping preserves essential structural information when moving to larger bit‑widths. They also acknowledge limitations: the supersampling step requires a sufficiently rich low‑bit‑width dataset, and the correlation may weaken for very large jumps in bit‑width (e.g., 4 → 32 bits). Future work is outlined in three directions: (i) integrating deep sequence‑to‑sequence models to learn more expressive, non‑linear mappings, (ii) extending the methodology to other hardware targets, such as ASIC standard‑cell flows or coarse‑grained reconfigurable arrays (CGRAs), and (iii) developing a dynamic weighting scheme for multi‑objective optimization that can adapt to application‑specific accuracy or energy budgets.

In summary, AxOCS introduces a principled way to transfer knowledge from small, exhaustively explored design spaces to larger, more complex approximate operators. By coupling this knowledge transfer with meta‑heuristic optimization, the method improves both the quality of the resulting Pareto fronts (a reported 35 % hypervolume gain over the surrogate baseline) and the efficiency of the design‑space exploration (roughly 40 % less exploration time). The approach could accelerate the development of energy‑efficient, high‑performance AI accelerators on FPGAs and potentially on other hardware platforms.