Breaking down the relationship between academic impact and scientific disruption

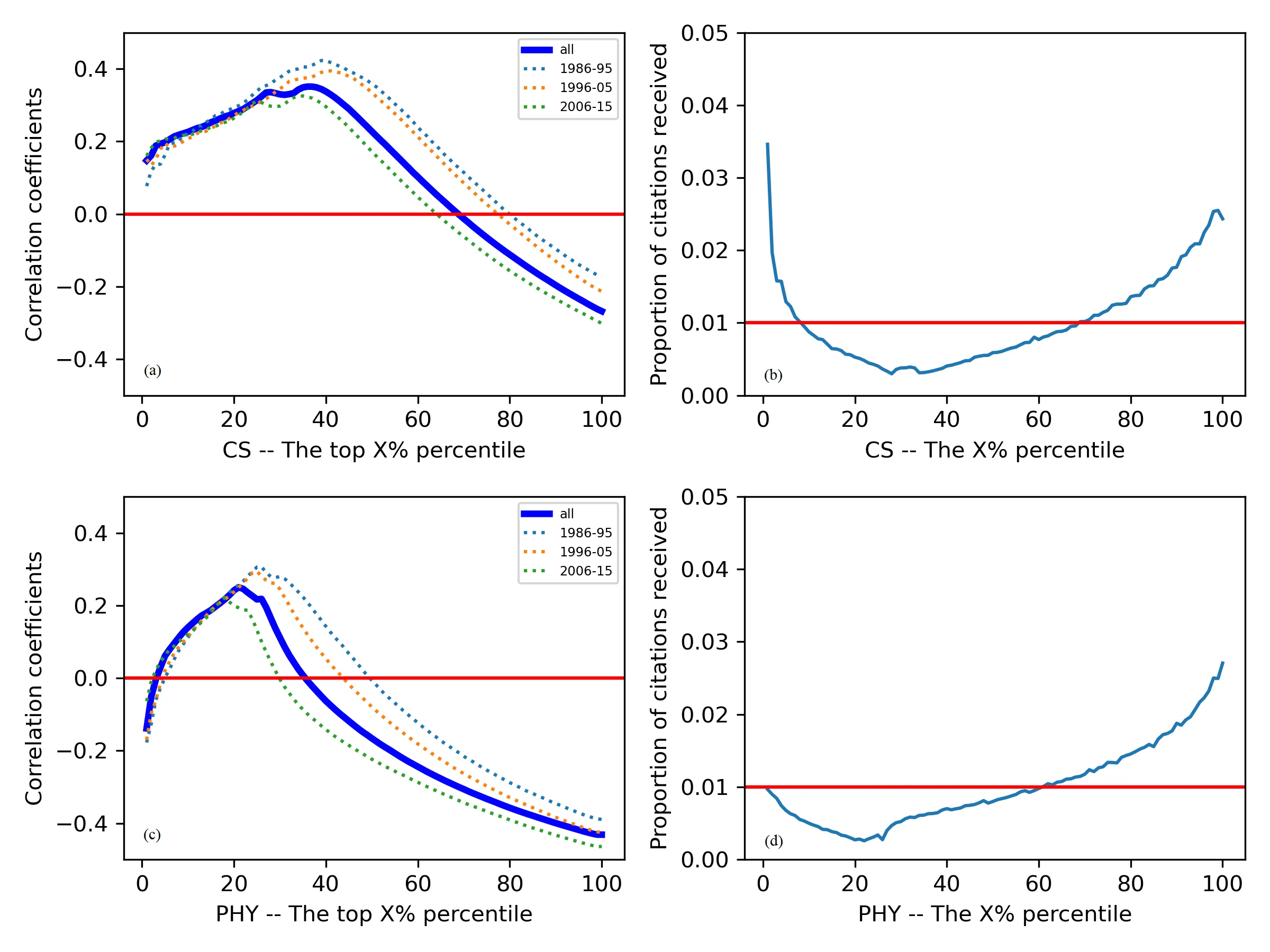

We examine the tension between academic impact - the volume of citations received by publications - and scientific disruption. Intuitively, one would expect disruptive scientific work to be rewarded by high volumes of citations and, symmetrically, impactful work to also be disruptive. A number of recent studies have instead shown that such intuition is often at odds with reality. In this paper, we break down the relationship between impact and disruption with a detailed correlation analysis in two large data sets of publications in Computer Science and Physics. We find that highly disruptive papers tend to be cited at higher rates than average. The converse does not hold: we do not find highly impactful papers to be particularly disruptive. Notably, these results hold qualitatively even within individual scientific careers, as we find that - on average - an author’s most disruptive work tends to be well cited, whereas their most cited work does not tend to be disruptive. We discuss the implications of our findings in the context of academic evaluation systems, and show how they can help reconcile seemingly contradictory results in the literature.

💡 Research Summary

This paper investigates the relationship between academic impact, measured by citation counts, and scientific disruption, quantified using the Disruption Score. The authors use two large bibliometric datasets: over 1.2 million computer‑science papers (2000‑2020) drawn from ACM, IEEE, and DBLP, and more than 850,000 physics papers (1995‑2020) from arXiv and Web of Science. For each article they compute (1) total citations, (2) citation trajectory over time, (3) number of co‑authors, (4) venue impact factor, and (5) the disruption metric as defined by Wang et al. (2017).
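To make the disruption metric concrete, here is a minimal Python sketch. It assumes the widely used citation-network formulation, D = (n_i - n_j) / (n_i + n_j + n_k), where n_i counts later papers citing the focal paper but none of its references, n_j counts papers citing both, and n_k counts papers citing only the references. The summary does not spell out the exact variant used, so the function name `disruption_score` and the toy citation graph are illustrative assumptions rather than the authors' implementation.

```python
def disruption_score(focal, citations):
    """Return a disruption score in [-1, 1] for `focal`.

    `citations` maps each paper id to the set of paper ids it cites.
    Assumed formulation (not confirmed by the summary):
        D = (n_i - n_j) / (n_i + n_j + n_k)
    """
    refs = citations.get(focal, set())
    n_i = n_j = n_k = 0
    for paper, cited in citations.items():
        if paper == focal:
            continue
        cites_focal = focal in cited
        cites_refs = bool(cited & refs)
        if cites_focal and not cites_refs:
            n_i += 1  # builds on the focal paper only
        elif cites_focal and cites_refs:
            n_j += 1  # builds on the focal paper and its predecessors
        elif cites_refs:
            n_k += 1  # bypasses the focal paper
    total = n_i + n_j + n_k
    return (n_i - n_j) / total if total else 0.0


# Hypothetical toy graph: F cites R1 and R2; A-D are later papers.
toy = {
    "R1": set(),
    "R2": set(),
    "F": {"R1", "R2"},
    "A": {"F"},
    "B": {"F"},
    "C": {"F", "R1"},
    "D": {"R2"},
}
score = disruption_score("F", toy)
```

In this toy graph n_i = 2, n_j = 1 and n_k = 1, so the score is (2 - 1) / 4 = 0.25. Positive values indicate that later work tends to cite the focal paper without its references.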

The analysis proceeds in three stages. First, papers are split into the top‑10 % by citations and the top‑10 % by disruption; mean differences are tested with t‑tests and bootstrap resampling. Second, Pearson and Spearman correlations between citations and disruption are calculated across years, disciplines, and career stages (early, mid, late). Third, multivariate regression models are built to estimate (i) the effect of disruption on future citations and (ii) the reverse effect, controlling for publication year, venue impact, co‑author count, and author‑level h‑index.
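The first two stages can be sketched in plain Python under some simplifying assumptions. The helper names (`spearman`, `top_fraction`, `mean_percentile_of_top`) and the toy numbers are hypothetical. Because two equally sized top groups overlap by the same share in both directions, this sketch instead compares percentile ranks to expose a possible asymmetry; that is an illustrative choice, not necessarily the test the authors ran.

```python
def average_ranks(values):
    """Ascending ranks starting at 1, with ties given their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))


def top_fraction(values, frac=0.10):
    """Indices of the top `frac` share of items by value (at least one)."""
    k = max(1, int(len(values) * frac))
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    return set(order[:k])


def mean_percentile_of_top(select_by, measure, frac=0.10):
    """Mean percentile (0-1) in `measure` of the items that are top by `select_by`."""
    ranks = average_ranks(measure)
    top = top_fraction(select_by, frac)
    return sum(ranks[i] / len(measure) for i in top) / len(top)


# Hypothetical toy data: one entry per paper.
citations = [100, 90, 50, 40, 30, 20, 10, 5, 3, 1]
disruption = [-0.1, 0.0, 0.8, 0.9, -0.2, 0.1, 0.05, -0.3, 0.2, -0.05]

rho = spearman(citations, disruption)
cites_of_disruptive = mean_percentile_of_top(disruption, citations, frac=0.2)
disruption_of_cited = mean_percentile_of_top(citations, disruption, frac=0.2)
```

On this toy data the most disruptive papers sit at a mean citation percentile of 0.75, while the most cited papers sit at a mean disruption percentile of 0.40, which is the kind of asymmetry the study reports. The significance tests and regression models of the full analysis are not reproduced here.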

Key findings are: (1) Disruptive papers receive substantially more citations than average (approximately 1.8–2.1 times more in both fields), whereas highly cited papers do not exhibit elevated disruption scores; only about 15 % of top‑cited papers fall into the disruptive top‑10 %. (2) Across the full sample, the citation‑disruption correlation is modestly positive (r ≈ 0.30), but regression coefficients reveal an asymmetry: disruption predicts future citations (β ≈ 0.45–0.52, p < 0.001) while citations do not predict disruption (β ≈ 0.02, not significant). (3) At the individual‑researcher level, for 85 % of scholars the most disruptive paper is also among their better‑cited outputs, yet only about 30 % of their most‑cited work is highly disruptive. This pattern holds across career phases, suggesting that the “disruption → citation” pathway is robust, whereas the reverse pathway is weak.
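The career-level comparison in finding (3) can be illustrated with a short sketch. The summary does not say how "better-cited" or "highly disruptive" are defined within a career, so this example assumes a simple rule: a paper qualifies if it lies strictly above the author's own median. The function name `career_asymmetry` and the sample careers are hypothetical.

```python
from statistics import median


def career_asymmetry(careers):
    """Return two shares over authors with at least two papers.

    `careers` maps an author to a list of (citations, disruption) tuples.
    First share: authors whose most disruptive paper is above their median citations.
    Second share: authors whose most cited paper is above their median disruption.
    The median thresholds are an assumption made for illustration.
    """
    disruptive_well_cited = 0
    cited_is_disruptive = 0
    counted = 0
    for papers in careers.values():
        if len(papers) < 2:
            continue  # a single paper cannot be compared with a career median
        counted += 1
        median_cites = median(c for c, _ in papers)
        median_disruption = median(d for _, d in papers)
        most_disruptive = max(papers, key=lambda p: p[1])
        most_cited = max(papers, key=lambda p: p[0])
        if most_disruptive[0] > median_cites:
            disruptive_well_cited += 1
        if most_cited[1] > median_disruption:
            cited_is_disruptive += 1
    if counted == 0:
        return (0.0, 0.0)
    return (disruptive_well_cited / counted, cited_is_disruptive / counted)


# Hypothetical careers: (citations, disruption) per paper.
careers = {
    "alice": [(120, -0.1), (80, 0.7), (10, 0.0), (5, 0.1)],
    "bob": [(50, 0.5), (20, 0.1), (2, -0.2)],
    "carol": [(7, 0.3)],  # skipped: only one paper
}
shares = career_asymmetry(careers)
```

Here both counted authors have a well-cited most disruptive paper, but only one of the two has a most cited paper that is also disruptive, giving shares of 1.0 and 0.5.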

Temporal analysis shows that disruptive papers may start with low citation rates but gain momentum as they reshape citation networks, confirming a delayed‑recognition effect. The authors argue that reliance on citation‑centric metrics alone underestimates the contribution of truly innovative work, especially for early‑career researchers whose disruptive ideas have not yet accrued citations.

Policy implications are discussed in depth. The authors recommend augmenting evaluation frameworks with disruption scores to identify “potential innovators” early, designing funding streams that reward long‑term impact rather than immediate citation counts, and encouraging journals to incorporate disruption metrics into peer‑review criteria. By treating impact and disruption as complementary but distinct dimensions, institutions can better align incentives with the goal of fostering scientific breakthroughs.

In summary, the study provides robust empirical evidence that (i) highly disruptive papers tend to be well‑cited, whereas (ii) highly cited papers are not necessarily disruptive. This asymmetry clarifies contradictory findings in the literature and underscores the need for multi‑dimensional assessment of scholarly contributions.
