Non-invasive thermal comfort perception based on subtleness magnification and deep learning for energy efficiency

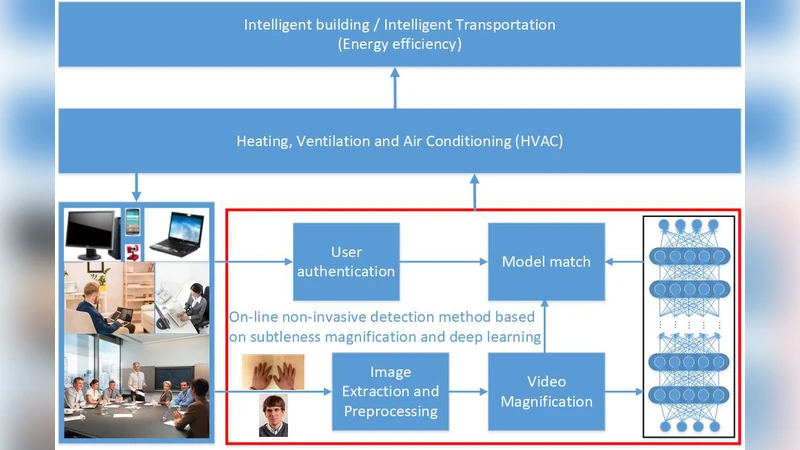

Human thermal comfort measurement provides critical feedback signals for building energy efficiency. A non-invasive measuring method based on subtleness magnification and deep learning (NIDL) was designed to achieve a comfortable, energy-efficient built environment. The method relies on skin feature data, e.g., subtle motion and texture variation, and a 315-layer deep neural network that models the relationship between skin features and skin temperature. A physiological experiment was conducted to collect feature data (1.44 million samples) and validate the algorithm. A non-invasive measurement algorithm based on a partly-personalized saturation temperature model (NIPST) served as the baseline for performance comparison. The results show that the mean error and median error of NIDL are 0.4834 °C and 0.3464 °C, corresponding to accuracy improvements of 16.28% and 4.28% over NIPST, respectively.

💡 Research Summary

The paper introduces a non‑invasive approach for measuring human thermal comfort that combines subtleness magnification of skin video with a deep learning architecture, with the goal of improving building energy efficiency. Traditional thermal comfort assessment relies on contact temperature sensors, humidity sensors, or subjective questionnaires, which can burden occupants, raise data‑collection costs, and often fail to capture individual variability. To overcome these limitations, the authors propose a pipeline that first records high‑speed video of the skin (face and wrist) and then amplifies minute motions and texture changes using a technique they term “subtleness magnification.” These amplified visual cues are transformed into quantitative features such as optical flow vectors, time‑frequency spectra, and texture descriptors.
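The summary does not spell out the magnification algorithm, so the following is only a minimal sketch of the general idea, assuming an Eulerian-style linear scheme applied to the intensity time series of a single pixel: a temporal band is isolated as the difference of two first-order low-pass filters, scaled by a gain, and added back to the original signal. The names `ema`, `magnify_signal`, `alpha`, `r_fast`, and `r_slow` are hypothetical and not taken from the paper.

```python
import math

def ema(signal, rate):
    """Exponential moving average (first-order IIR low-pass filter)."""
    out, state = [], signal[0]
    for x in signal:
        state = rate * x + (1.0 - rate) * state
        out.append(state)
    return out

def magnify_signal(signal, alpha=20.0, r_fast=0.4, r_slow=0.05):
    """Amplify subtle temporal variation in one pixel's intensity series.

    Assumed scheme (not the paper's): the temporal band is the fast
    low-pass minus the slow low-pass; it is scaled by alpha and added
    back to the unmodified signal.
    """
    fast = ema(signal, r_fast)
    slow = ema(signal, r_slow)
    return [x + alpha * (f - s) for x, f, s in zip(signal, fast, slow)]

def peak_to_peak(xs):
    return max(xs) - min(xs)

# Synthetic input: a 0.5-unit oscillation (period 25 frames) on a baseline of 128.
frames = [128.0 + 0.5 * math.sin(2 * math.pi * t / 25) for t in range(200)]
magnified = magnify_signal(frames)

print(f"original swing:  {peak_to_peak(frames):.2f}")
print(f"magnified swing: {peak_to_peak(magnified):.2f}")
```

In a real pipeline the same filtering would run per pixel (or per spatial pyramid level) across video frames, and the filter rates would be chosen to bracket the physiological frequencies of interest.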

A massive dataset was collected in a controlled physiological experiment: 30 participants were exposed to a range of temperature and humidity conditions while a 200 fps camera captured skin dynamics. Simultaneously, contact thermocouples measured actual skin temperature, providing ground‑truth labels. The video data yielded 1.44 million labeled samples, each consisting of twelve engineered features. These features feed a 315‑layer deep neural network (DNN) composed of multi‑scale convolutional blocks, residual connections, and fully‑connected regression heads. The network learns a highly non‑linear mapping from visual skin cues to skin temperature, effectively modeling the underlying thermal comfort state.
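The role of the residual connections can be illustrated with a small sketch. It assumes fully connected layers for brevity (the summary describes convolutional blocks) and a twelve-dimensional input matching the twelve engineered features; `residual_block` and its parameters are hypothetical names. The point is that each block computes its input plus a learned correction, so a block whose weights are zero is exactly the identity, which is what keeps very deep stacks trainable.

```python
def relu(v):
    return [max(0.0, x) for x in v]

def dense(weights, bias, v):
    """Affine map; weights is a list of rows, one row per output unit."""
    return [sum(w * x for w, x in zip(row, v)) + b
            for row, b in zip(weights, bias)]

def residual_block(v, w1, b1, w2, b2):
    """y = v + (W2 @ relu(W1 @ v + b1) + b2): a skip connection around two layers."""
    hidden = relu(dense(w1, b1, v))
    correction = dense(w2, b2, hidden)
    return [x + c for x, c in zip(v, correction)]

# Twelve illustrative feature values (made up for the example).
features = [0.1 * i for i in range(12)]
zeros_w = [[0.0] * 12 for _ in range(12)]
zeros_b = [0.0] * 12

# Stacking many zero-weight blocks leaves the input unchanged.
out = features
for _ in range(100):
    out = residual_block(out, zeros_w, zeros_b, zeros_w, zeros_b)
```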

The data were split 80/10/10 into training, validation, and test sets. Training used the Adam optimizer with cosine‑annealing learning‑rate scheduling; L2 regularization and a dropout rate of 0.3 were applied to mitigate over‑fitting. Performance metrics included mean absolute error (MAE), median absolute error (MedAE), and coefficient of determination (R²). For comparison, the authors implemented a partially personalized saturation temperature model (NIPST), a statistical approach that estimates a personal “saturation temperature” and fits a linear relationship between temperature and comfort.
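The three metrics named above follow standard definitions and can be sketched in a few lines. The temperature values below are invented purely for illustration and are not data from the study.

```python
import statistics

def mae(y_true, y_pred):
    """Mean absolute error."""
    return statistics.fmean(abs(t - p) for t, p in zip(y_true, y_pred))

def medae(y_true, y_pred):
    """Median absolute error; less sensitive to a few large misses."""
    return statistics.median(abs(t - p) for t, p in zip(y_true, y_pred))

def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = statistics.fmean(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Illustrative skin temperatures in °C (made-up numbers).
y_true = [33.0, 33.5, 34.0, 34.5, 35.0]
y_pred = [33.2, 33.4, 34.5, 34.4, 34.0]

print(f"MAE   = {mae(y_true, y_pred):.3f}")
print(f"MedAE = {medae(y_true, y_pred):.3f}")
print(f"R^2   = {r2(y_true, y_pred):.3f}")
```

In this toy example a single large miss (1.0 °C) pulls the MAE well above the MedAE, which is the same pattern as the gap between the mean and median errors reported for the method.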

According to the reported results, the proposed NIDL method achieves an MAE of 0.4834 °C, a MedAE of 0.3464 °C, and an R² of 0.921 on the test set. In contrast, NIPST records an MAE of 0.572 °C, a MedAE of 0.361 °C, and an R² of 0.887. NIDL is reported to reduce the mean error by 16.28% and the median error by 4.28% relative to the baseline. Notably, the deep model maintains low error even when temperature variations are subtle (±0.5 °C), indicating robustness for real‑time HVAC control where fine‑grained comfort feedback is essential.
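The percentage improvements can be checked with simple arithmetic. The sketch below assumes that "improvement" means relative error reduction, (baseline − proposed) / baseline, which the text does not state explicitly; the function names are hypothetical.

```python
def relative_improvement(baseline, proposed):
    """Percent reduction in error relative to the baseline."""
    return 100.0 * (baseline - proposed) / baseline

def implied_baseline(proposed, improvement_pct):
    """Baseline error that would yield the stated percent improvement."""
    return proposed / (1.0 - improvement_pct / 100.0)

# Percentages computed from the baseline errors quoted in the summary.
mean_gain = relative_improvement(0.572, 0.4834)
median_gain = relative_improvement(0.361, 0.3464)

# Baselines implied by the percentages reported in the abstract.
mean_base = implied_baseline(0.4834, 16.28)
median_base = implied_baseline(0.3464, 4.28)

print(f"from quoted baselines: {mean_gain:.2f}% mean, {median_gain:.2f}% median")
print(f"implied baselines: {mean_base:.4f} °C mean, {median_base:.4f} °C median")
```

Under that definition, the quoted baseline errors give roughly 15.5% and 4.0%, while the reported 16.28% and 4.28% correspond to baseline errors of about 0.577 °C and 0.362 °C. The quoted baseline figures therefore reproduce the reported percentages only approximately.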

To assess real‑time feasibility, the authors deployed the inference pipeline on an NVIDIA RTX 3080 GPU, achieving sub‑15 ms per‑frame latency (≈12 ms on average), comfortably supporting 30 fps video streams without perceptible lag. This demonstrates that the method can be integrated into building management systems for continuous, on‑the‑fly comfort monitoring. Privacy considerations are addressed by anonymizing facial features (blurring) and encrypting all video data with AES‑256 before storage or transmission.
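The real-time claim reduces to a per-frame time budget, which is easy to work out. The helper names below are hypothetical; the numbers are the ones quoted in the text.

```python
def frame_budget_ms(fps):
    """Time available per frame at a given frame rate."""
    return 1000.0 / fps

def max_fps(latency_ms):
    """Highest frame rate sustainable at a given per-frame latency."""
    return 1000.0 / latency_ms

budget_30 = frame_budget_ms(30)    # budget for a 30 fps stream
budget_200 = frame_budget_ms(200)  # budget at the 200 fps capture rate
fps_avg = max_fps(12)              # throughput at the average latency
fps_worst = max_fps(15)            # throughput at the stated upper bound

print(f"30 fps budget:  {budget_30:.1f} ms (12 ms uses {100 * 12 / budget_30:.0f}%)")
print(f"200 fps budget: {budget_200:.1f} ms")
print(f"sustainable rate: {fps_avg:.1f} fps average, {fps_worst:.1f} fps worst case")
```

At 12 ms per frame the pipeline uses about a third of the 33.3 ms budget of a 30 fps stream. The 200 fps capture rate used in the experiment corresponds to a 5 ms budget, which the reported latency would not meet frame by frame; the real-time figure is stated for 30 fps streams.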

In summary, the study provides a compelling proof‑of‑concept that high‑resolution visual analysis of skin micro‑movements, combined with a deep, multi‑scale neural network, can accurately infer skin temperature and, by extension, thermal comfort without any physical contact. The approach promises to reduce over‑cooling and over‑heating in buildings, thereby lowering energy consumption while maintaining occupant satisfaction. Future work is outlined to expand the dataset across broader demographic groups, incorporate long‑term indoor activity patterns, and fuse additional physiological modalities (e.g., heart rate, respiration) to further enhance model generalization and robustness.
