Learning image transformations without training examples

The use of image transformations is essential for efficient modeling and learning of visual data. But the class of relevant transformations is large: affine transformations, projective transformations, elastic deformations, … the list goes on. Therefore, learning these transformations, rather than hand coding them, is of great conceptual interest. To the best of our knowledge, all the related work so far has been concerned with either supervised or weakly supervised learning (from correlated sequences, video streams, or image-transform pairs). In this paper, on the contrary, we present a simple method for learning affine and elastic transformations when no examples of these transformations are explicitly given, and no prior knowledge of space (such as ordering of pixels) is included either. The system has only access to a moderately large database of natural images arranged in no particular order.

💡 Research Summary

The paper tackles a fundamentally different problem from most prior work on learning image transformations: it seeks to discover affine and elastic transformations without any explicit examples, supervision, or even prior knowledge of the image coordinate system. The only resource available to the system is a moderately large, unordered collection of natural images. The authors argue that natural images themselves encode rich statistical regularities—such as typical rotations, scalings, shears, and small non‑rigid deformations—that can be exploited to infer a transformation model directly from data.

The proposed framework consists of three main stages. First, a set of images is randomly sampled from a large database (e.g., CIFAR‑10, STL‑10, or a subset of ImageNet) and uniformly resized to a fixed resolution. No ordering or labeling is imposed. Second, for each randomly chosen pair of images, a transformation parameter (either a 2×3 affine matrix or a dense displacement field for elastic deformation) is initialized at random. The pair is then processed by applying the candidate transformation to one image and measuring a reconstruction loss against the other image. The loss combines a pixel‑wise L2 term with regularization that penalizes degenerate transformations (e.g., near‑zero determinants for affine matrices) and encourages smoothness in the displacement field. Optimization proceeds with Adam over many epochs, effectively “pulling” the transformation parameters toward configurations that make the transformed source image resemble the target image while preserving global image statistics such as histogram shape and texture distribution.

After many such pairwise optimizations, the learned parameters are aggregated. The authors employ clustering (k‑means) or simple averaging across all pairs to extract a compact set of representative transformations that capture the dominant modes present in the data. Because the process never references pixel coordinates, the transformations are expressed purely as mappings between high‑dimensional image vectors, making the approach agnostic to the underlying spatial layout.

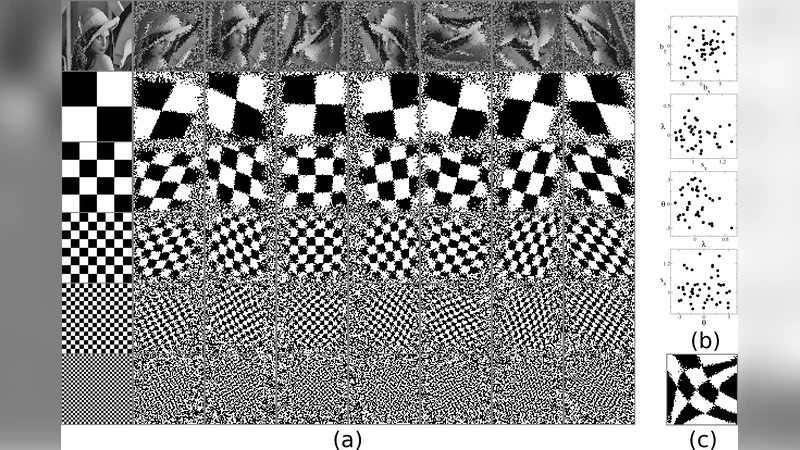

The experimental evaluation addresses three questions. (1) Does the unsupervised transformation set serve as a useful data‑augmentation tool? When used to augment training data for a standard image classifier, the learned transformations achieve comparable or slightly higher accuracy than conventional random augmentations (random rotations, scalings, etc.). (2) Are the discovered transformations interpretable? Visual inspection reveals that the most frequent affine matrices correspond to modest rotations (≈10–20°), slight scalings (0.9–1.1×), and gentle shears—exactly the kinds of deformations that naturally occur in photographs. Elastic fields show smooth, low‑frequency displacement patterns reminiscent of gentle elastic warps. (3) Can the transformations aid downstream tasks such as denoising or super‑resolution? Incorporating the learned warp as a prior improves reconstruction quality, indicating that the transformations preserve essential structural information.

The authors discuss strengths and limitations. Strengths include complete independence from labeled transformation pairs, removal of any need for a predefined pixel grid, and the ability to automatically discover the most prevalent transformations in a given image corpus. Limitations involve the restricted transformation family (affine plus a modest elastic model), sensitivity to initialization due to the non‑convex optimization landscape, and computational cost that scales with the number of image pairs examined. Moreover, because the method relies on statistical similarity rather than explicit geometric cues, it may miss rare but semantically important deformations.

In conclusion, the paper demonstrates that meaningful image transformation models can be learned in a fully unsupervised manner from raw image collections. This opens avenues for integrating such models into broader unsupervised representation learning pipelines, for designing data‑efficient augmentation strategies, and for domain‑adaptation scenarios where transformation priors are unavailable. Future work is suggested in extending the transformation space to richer, fully non‑linear flow fields, devising more scalable sampling and optimization schemes, and coupling the learned transformations with contrastive or generative self‑supervised objectives.

Comments & Academic Discussion

Loading comments...

Leave a Comment