Momentum-Net: Fast and convergent iterative neural network for inverse problems

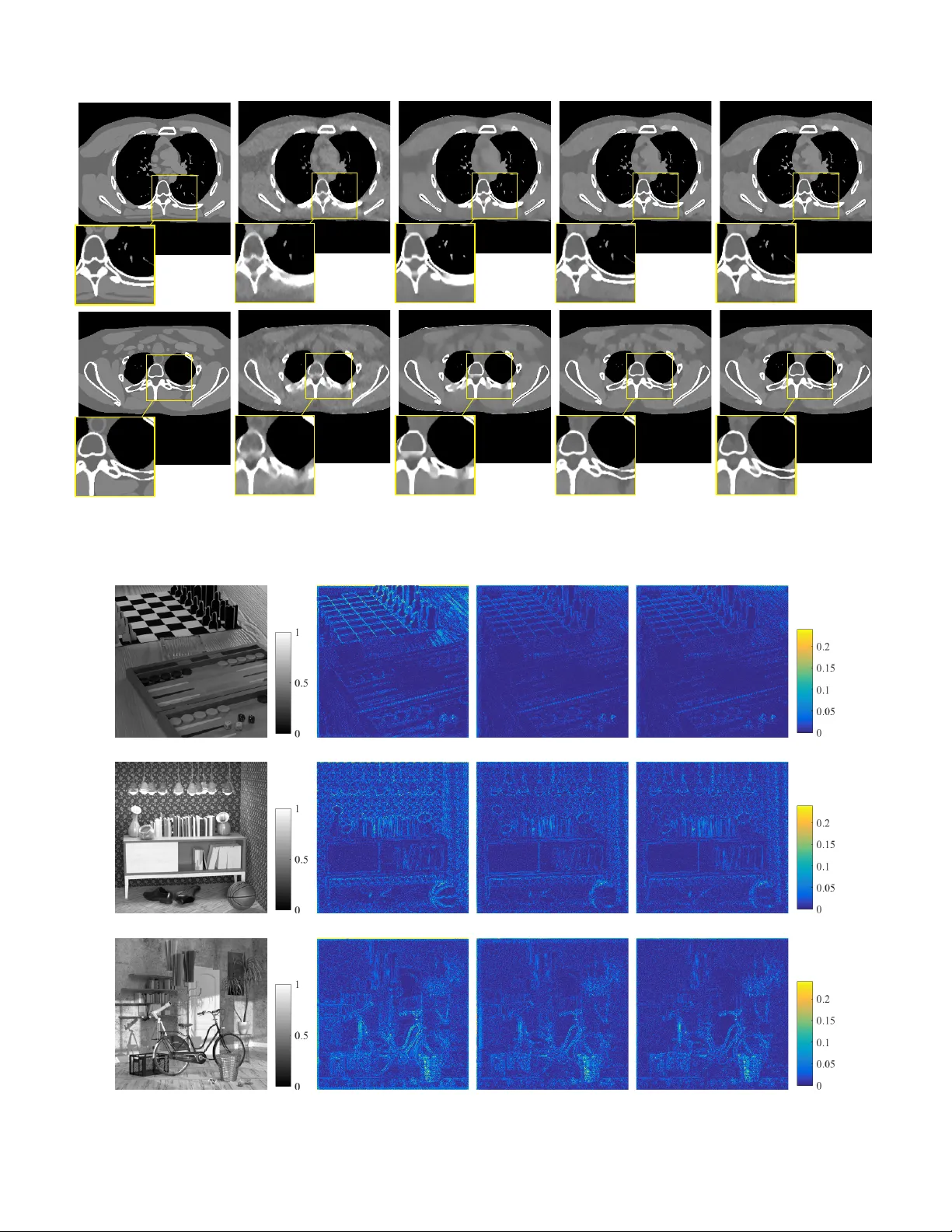

Iterative neural networks (INNs) are rapidly gaining attention for solving inverse problems in imaging, image processing, and computer vision. INNs combine regression NNs and an iterative model-based image reconstruction (MBIR) algorithm, often leadin…

Authors: Il Yong Chun, Zhengyu Huang, Hongki Lim