Closing the loop on multisensory interactions: A neural architecture for multisensory causal inference and recalibration

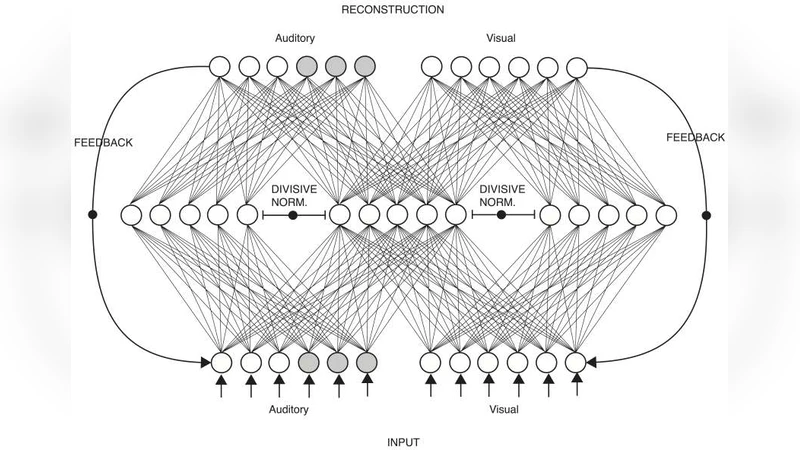

When the brain receives input from multiple sensory systems, it is faced with the question of whether it is appropriate to process the inputs in combination, as if they originated from the same event, or separately, as if they originated from distinct events. Furthermore, it must also have a mechanism through which it can keep sensory inputs calibrated to maintain the accuracy of its internal representations. We have developed a neural network architecture capable of i) approximating optimal multisensory spatial integration, based on Bayesian causal inference, and ii) recalibrating the spatial encoding of sensory systems. The architecture is based on features of the dorsal processing hierarchy, including the spatial tuning properties of unisensory neurons and the convergence of different sensory inputs onto multisensory neurons. Furthermore, we propose that these unisensory and multisensory neurons play dual roles in i) encoding spatial location as separate or integrated estimates and ii) accumulating evidence for the independence or relatedness of multisensory stimuli. We further propose that top-down feedback connections spanning the dorsal pathway play a key role in recalibrating spatial encoding at the level of early unisensory cortices. Our proposed architecture provides possible explanations for a number of human electrophysiological and neuroimaging results and generates testable predictions linking neurophysiology with behaviour.

💡 Research Summary

The paper addresses two fundamental challenges that the brain must solve when confronted with simultaneous inputs from multiple sensory modalities: (1) deciding whether the signals should be combined as arising from a common cause or treated as independent events, and (2) maintaining calibrated representations of each modality over time. To this end, the authors propose a biologically plausible neural network architecture that approximates optimal multisensory spatial integration, grounded in Bayesian causal inference, and also implements a mechanism for sensory recalibration.

The architecture mirrors key features of the dorsal processing hierarchy. At the lowest level, modality-specific unisensory neurons encode spatial location with narrow receptive fields and high signal-to-noise ratios. Their outputs converge onto a population of multisensory neurons that perform two intertwined functions. First, they generate integrated location estimates by weighting the posterior distributions of each modality according to the inferred probability that the cues share a common cause (the Bayesian “causal” term). Second, they accumulate evidence about the causal structure itself: the pattern of activity across multisensory neurons encodes the posterior probability of a common versus an independent cause. This causal evidence is fed back through top-down connections that reach the early unisensory cortices. The feedback modulates the tuning of unisensory neurons, shifting their spatial encoding to reduce systematic discrepancies between modalities; the authors refer to this feedback as a “recalibration signal.”

The model reproduces classic psychophysical findings: when cue reliability is high and spatial disparity is low, the system behaves as an optimal integrator; when disparity grows or reliability drops, the inferred common-cause probability declines and the system reverts to near-independent processing.
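The dependence on disparity and reliability described above can be illustrated with the standard normative formulation of Bayesian causal inference for two spatial cues. The sketch below is not the authors' neural network, which only approximates this computation; it is the textbook Gaussian model with model averaging. The function name, the default prior parameters (`sigma_p`, `mu_p`, `p_common`), and the example values are assumptions chosen for illustration.

```python
import math


def causal_inference(x_v, x_a, sigma_v, sigma_a,
                     sigma_p=20.0, mu_p=0.0, p_common=0.5):
    """Normative causal inference for one visual and one auditory measurement.

    Returns (posterior probability of a common cause, fused estimate,
    segregated auditory estimate, model-averaged auditory estimate).
    All default parameter values are illustrative assumptions.
    """
    var_v, var_a, var_p = sigma_v ** 2, sigma_a ** 2, sigma_p ** 2

    # Likelihood of both measurements if a single source produced them (C = 1).
    denom = var_v * var_a + var_v * var_p + var_a * var_p
    quad = ((x_v - x_a) ** 2 * var_p
            + (x_v - mu_p) ** 2 * var_a
            + (x_a - mu_p) ** 2 * var_v) / denom
    like_common = math.exp(-0.5 * quad) / (2 * math.pi * math.sqrt(denom))

    # Likelihood if two independent sources produced them (C = 2).
    quad_v = (x_v - mu_p) ** 2 / (var_v + var_p)
    quad_a = (x_a - mu_p) ** 2 / (var_a + var_p)
    like_indep = math.exp(-0.5 * (quad_v + quad_a)) / (
        2 * math.pi * math.sqrt((var_v + var_p) * (var_a + var_p)))

    # Bayes' rule over the two causal structures.
    post_common = (like_common * p_common) / (
        like_common * p_common + like_indep * (1.0 - p_common))

    # Reliability-weighted estimates under each causal structure.
    fused = (x_v / var_v + x_a / var_a + mu_p / var_p) / (
        1 / var_v + 1 / var_a + 1 / var_p)
    segregated_a = (x_a / var_a + mu_p / var_p) / (1 / var_a + 1 / var_p)

    # Model averaging: weight each estimate by its structure's posterior.
    averaged_a = post_common * fused + (1.0 - post_common) * segregated_a
    return post_common, fused, segregated_a, averaged_a


if __name__ == "__main__":
    for disparity in (2.0, 10.0, 30.0):
        p, _, _, est = causal_inference(0.0, disparity, sigma_v=2.0, sigma_a=8.0)
        print(f"disparity={disparity:5.1f}  P(common)={p:.3f}  auditory estimate={est:.2f}")
```

With a small audiovisual disparity the common-cause posterior is high and the auditory estimate is pulled toward the more reliable visual cue; with a large disparity the posterior collapses and the estimate stays close to the auditory measurement.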
Moreover, simulated neural activity aligns with human electrophysiological and fMRI data showing multisensory convergence in the intraparietal sulcus, superior temporal sulcus, and other dorsal areas, as well as feedback-related modulations in early visual and auditory cortices. The authors also explore how temporal delays, varying reliabilities, and sustained cue conflicts affect both the integration weight and the speed of recalibration, providing quantitative predictions that can be tested with neurophysiological recordings or non-invasive brain stimulation. In the discussion, they argue that the dual role of multisensory neurons (encoding both integrated estimates and causal evidence) offers a parsimonious solution to the “binding problem” while also furnishing a substrate for long-term calibration. The paper concludes by highlighting the broader implications for computational neuroscience, suggesting that similar architectures could be employed in artificial systems that need to fuse uncertain sensory data and adapt to drift over time. Overall, the work bridges the gap between abstract Bayesian models of multisensory perception and concrete neural circuitry, delivering a testable, unified framework for both perception and learning.
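The idea that causal evidence gates recalibration under a sustained cue conflict can be caricatured with a scalar update rule. This is a hypothetical sketch, not the feedback mechanism of the paper: it replaces tuning changes in unisensory neurons with a single auditory offset `shift_a`, uses a simplified common-cause posterior that assumes a flat spatial prior over a range `span`, and ignores sensory noise. The function names, learning rate `lr`, and all numeric values are assumptions.

```python
import math


def p_common_flat_prior(disparity, sigma_v, sigma_a, span=90.0, prior_common=0.5):
    """Posterior probability of a common cause, assuming a flat spatial prior.

    With a flat prior over a range `span`, the evidence for a common cause
    reduces to a Gaussian in the disparity, compared against 1 / span.
    """
    var = sigma_v ** 2 + sigma_a ** 2
    like_common = math.exp(-0.5 * disparity ** 2 / var) / math.sqrt(2 * math.pi * var)
    like_indep = 1.0 / span
    num = prior_common * like_common
    return num / (num + (1.0 - prior_common) * like_indep)


def recalibrate(x_v, x_a_raw, sigma_v=2.0, sigma_a=8.0, lr=0.05, n_trials=200):
    """Repeatedly present the same conflict and return the learned auditory shift.

    On each trial the auditory encoding is nudged toward the visual location,
    scaled by the inferred common-cause probability (hypothetical delta rule).
    """
    shift_a = 0.0
    for _ in range(n_trials):
        x_a = x_a_raw + shift_a            # current (recalibrated) auditory encoding
        disparity = x_v - x_a              # remaining audiovisual discrepancy
        gate = p_common_flat_prior(disparity, sigma_v, sigma_a)
        shift_a += lr * gate * disparity   # feedback-gated correction
    return shift_a


if __name__ == "__main__":
    for raw in (10.0, 40.0):
        print(f"conflict={raw:4.1f}  learned shift={recalibrate(0.0, raw):.3f}")
```

Under these assumptions a moderate conflict is recalibrated almost completely, while a very large conflict is attributed to independent causes and leaves the auditory encoding nearly unchanged, which is the qualitative link between causal inference and recalibration speed that the summary describes.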
