(e.g. habundanter for abundanter, or ebdomada for hebdomada), strengthened aspiration or fortition (e.g. michi for mihi or nichil for nihil), the intrusion of p after an m (e.g. hiemps for hiems, or dampnum for damnum), etc. Naturally, orthographical artifacts and homography pose challenging problems from a computational perspective [Piotrowski 2012]. Consider the surface form poetae, to which three different analyses might already be applicable: 'nominative masculine plural', 'genitive masculine singular' and 'dative masculine singular'. In medieval texts, the form poetae could easily be spelled as poete, a spelling which in its turn causes confusion with other declensions, such as dux, duc-e. Thus, a good model of the local context in which ambiguous word forms appear is crucial to their disambiguation. Nevertheless, Latin is a highly synthetic language which generally lacks a strict word order: it is therefore far from trivial to extract syntactic patterns from Latin sentences (e.g. an adjective modifying a noun does not necessarily immediately precede or follow it). This lack of a strict word order on the one hand and the concordance of morphological features on the other can cause Latin to display a number of amphibologies or so-called "crash blossoms". These are sentences which allow for different syntactic readings (e.g. nautae poetae mensas dant).¹

¹ This specific example could be translated as "the seafarers give food to the poet", "the poets give food to the seafarer", "the seafarers give the food of the poet", etc. Grammatically, each of these translations can be considered correct, although it is likely that the best option can be inferred either from context or from the language's patterns of word order (which exhibit themselves more often in prose than in poetry) [Devine and Stephens, 2006]. The statement that Latin does not have a structuralized sentential order has to be nuanced.

Journal of Data Mining and Digital Humanities, http://jdmdh.episciences.org, ISSN 2416-5999, an open-access journal

1.2 Survey of resources and related research

While basic sequence tagging tasks such as PoS-tagging are typically considered 'solved' for many modern languages such as English, these problems remain more challenging for so-called 'resource-scarce' languages such as Latin, for which fewer or smaller resources, such as annotated training corpora, are generally available. In this section, we review some of the main corpora which are currently available, with a short characterization of each and a brief description of the type of annotations they include.

1.2.1 Index Thomisticus

From early onwards, Latin was an important research topic in the emerging community of Digital Humanities, more specifically with the undertaking of the Index Thomisticus by Roberto Busa, s.j. in the second half of the 1940s [Passarotti 2013]. The corpus contains all 118 texts of the 13th century author Thomas Aquinas as well as 61 texts which are related to him, amounting to ±11,000,000 words which can be searched online. The website additionally allows users to compare and sort words, phrases, quotations, similitudes, correlations and statistical information. In 2006, the Index Thomisticus team started a treebank² project in close collaboration with the Latin Dependency Treebank [Passarotti et al, 2010; 2014]. Their annotation style was inspired by that of the Prague Dependency Treebank and the Latin grammar of Pinkster [Bamman et al, 2007]. The IT-TB training sets, taken from Thomas Aquinas' Scriptum super Sententiis Magistri Petri Lombardi, are available for download in the CoNLL-format and comprise ±175,000 tokens. The Index Thomisticus, with its present treebank venture, is a seminal project that until this very day proves to be of considerable value to the progress of Latin automatic annotation.

² A syntactically and/or semantically parsed corpus of text.

1.2.2 Latin Dependency Treebank

A second project occupied with Latin treebanking is the Latin Dependency Treebank (LDT), which was developed as a part of the Perseus Project at Tufts University in 2006. Classical texts from Caesar, Cicero, Jerome, Vergil, Ovid, Petronius, Phaedrus, Sallust and Suetonius were manually annotated by adopting the Guidelines for the Syntactic Annotation of Latin Treebanks (cfr. supra), resulting in a corpus of ±53,000 words which was made available online. Treebanking implies full parsing information (syntactic and semantic annotation), whereas for us only the morphological information included in the PoS-tag is relevant.

1.2.3 PROIEL

Another noteworthy treebank project is PROIEL (Pragmatic Resources of Old Indo-European Languages) [Haug and Jøhndal, 2008]. Its goal is to find information structure systems cross-linguistically over the different translations of the Bible (Latin, Greek, Gothic, Armenian and Church Slavonic). In a first phase, these texts were automatically PoS-tagged and manually corrected. A rule-based 'guesser' subsequently suggested the most likely dependency relation to the annotator. Their annotation scheme for syntactic dependencies was based on that of the LDT, but with a more fine-grained treatment of verbal arguments and adnominal functions [Haug and Jøhndal, 2008]. This training data has been made available online (in the CoNLL standard), and includes roughly 179,000 Latin words from Jerome's New Testament, Cicero's Letters to Atticus, Caesar's De Bello Gallico and the Peregrinatio Egeriae.

1.2.4 LASLA

A fourth project worth mentioning is LASLA (Laboratoire d'Analyse Statistique des Langues Anciennes). This project has developed a lemmatized corpus, comprising classical texts such as those of Caesar, Catullus, Horace, Ovid and Virgil, which can be searched online by registered users, but is not publicly available for download. Their lemmatization method for Latin is, however, semi-automatic. Firstly, the word is automatically analyzed on the basis of its stem and case ending, which results in a list of possible lemmas. At this stage, the choice of the correct lemma and its correct morphological analysis occurs manually, which is a rather time-consuming undertaking [Mellet and Purnelle, 2002]. As a reference dictionary in producing the lemmas, LASLA has used Forcellini's Lexicon totius latinitatis, with the reasonable argument that it is the least incoherent [Manuel de lemmatisation, LASLA, 2013].

1.2.5 (CHLT) LemLat

CHLT LemLat is a Neo-Latin morphological analyzer, the first version of which appeared in 1992. It was "statistically able to lemmatize ±1,300,000 wordforms from the origins to the fifth/sixth century after Christ" [Bozzi et al, 2002]. CHLT LemLat adopts a rule-based approach which first splits the token into three parts in order to perform morphological tagging, namely the invariable part of the wordform (LES, e.g. antiqu-), the paradigmatic suffix (SM, e.g. -issim-) and the ending (SF, e.g. -orum) [Passarotti, 2007].
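The three-way split just described can be illustrated with a minimal sketch. The ending and suffix inventories below are tiny, hypothetical lists chosen only to cover the example antiquissimorum; they are not LemLat's actual rule tables, and the function name `split_wordform` is an assumption made for illustration.

```python
# Toy illustration of a LES / SM / SF split (not LemLat's real rule tables).
ENDINGS = ["orum", "arum", "us", "um", "is", "a", "i"]  # hypothetical SF inventory
SUFFIXES = ["issim"]                                    # hypothetical SM inventory


def split_wordform(form):
    """Return (LES, SM, SF) for a word form, trying the longest endings first."""
    form = form.lower()
    for ending in sorted(ENDINGS, key=len, reverse=True):
        if form.endswith(ending) and len(form) > len(ending):
            rest = form[: -len(ending)]
            for suffix in SUFFIXES:
                if rest.endswith(suffix) and len(rest) > len(suffix):
                    return rest[: -len(suffix)], suffix, ending
            return rest, "", ending
    # No known ending: treat the whole form as invariable.
    return form, "", ""


print(split_wordform("antiquissimorum"))
print(split_wordform("antiquus"))
```

A real analyzer would look the resulting stem up in a lexicon to retrieve the lemma, which is why such a system cannot resolve forms that are ambiguous in context.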
Like LASLA, LemLat is unable to contextually disambiguate ambiguous forms in running text, since it is lexicon-based; more specifically, it makes use of the dictionaries of Georges and Gradenwitz and of the Oxford Latin Dictionary.

1.2.6 LatinISE

The LatinISE corpus, which comprises a total of ±13,000,000 Latin words covering a time span from the 2nd century B.C. to the 21st century A.D., was annotated through a combination of two pre-existing methods. Firstly, the PROIEL Project's morphological analyser and Quick Latin were used for lemmatization and PoS-tagging [McGillivray and Kilgarriff, 2013]. This analyser generated various candidate analyses for a word. Secondly, the output from the analyser was the input to a TreeTagger model trained on the Index Thomisticus dataset, which would take context into account and choose the most likely lemma and PoS-tag. LatinISE can be accessed online on the Sketch Engine, but is not freely available.

1.2.7 CompHistSem

A more recent promising project is CompHistSem (Computational Historical Semantics). The team has applied network theory to detect semantic changes in diachronic Latin corpora. Recently, they have released a composite lexicon called the Frankfurt Latin Lexicon, also referred to as the Collex.LA, which brings together lemmas from various web-based resources (such as the Latin Wiktionary) [Mehler et al, 2014].³ Additionally, the TTLab Latin Tagger was released, which has the objective to automatically tag large corpora such as the Patrologia Latina. Both these resources, Collex.LA and the TTLab Latin Tagger, are available for trial online. The TTLab Latin Tagger is hybrid, in that it combines a linguistic rule-based approach with a statistical one, avoiding the huge effort rule-based taggers require for every target language separately on the one hand, and avoiding the overfitting characteristic of statistical taggers on the other [Mehler et al, 2014]. They have trained and tested the TTLab Latin Tagger on the Carolingian Capitularia, i.e. ordinances in Latin decreed by Carolingian rulers, split up in several sections or chapters. In a recent publication, the team has contributed to the field with a comprehensive survey paper in which they have employed these Capitularia as training data to produce a comparative study of six taggers and two lemmatization methods [Eger et al, 2015]. These results arguably offer the best discussion of the state of the art at present. Out of the six taggers which they compared, more specifically TreeTagger, TnT, Lapos, Mate, OpenNLPTagger and Stanford Tagger, the best tagger was reported to be Lapos. When it comes to lemmatization, the team concluded that a trained lemmatizer (as opposed to a lexicon-based lemmatizer) provides better results (from 93-94% to 94-95%) and moreover deals better with lemmatizing words which are out-of-vocabulary (OOV) or which occur in several variants (honos and honor) [Eger et al, 2015]. They used LemmaGen for this specific purpose, which is a lemmatizer dependent on induced rule conditions (RDR, ripple-down-rules) [Juršič et al, 2010]. This suggests that lexicon-based approaches to lemmatization are not always favourable. In an upcoming article [Eger et al, forthcoming], they have further developed this idea, by showing that the lemmatizer LAT, which relies on statistical inference and treats lemmatization as a sequence labeling problem (involving context), provides better results than LemmaGen. Both lemmatizers were based on prefix and suffix transformations. Moreover, CompHistSem has shown how the 'joint learning' of a lemmatizer with a tagger (as opposed to 'pipeline learning', which is the independent training and testing on each subcategory such as PoS, case, gender etc.) can also improve the overall accuracy of the lemmatization / PoS-tagging task, especially in the case of the MarMoT tagger which, once additional resources such as word embeddings⁴ and an underlying lexicon such as Collex.LA are provided, obtains the highest results. The CompHistSem team was generous enough to provide us with the annotated Capitularia corpus (and their exact train-test splits), which facilitates the comparison of our results to theirs.

³ "AGFL Grammar Work Lab, the Latin morphological analyzer LemLat, the Perseus Digital Library, William Whitaker's Words, the Index Thomisticum (sic), Ramminger's Neulateinische Wortliste, the Latin Wiktionary, Latin training data of the Treetagger, the Najock Thesaurus [...] and several other resources. Beyond that, the FLL is continuously manually checked, corrected and updated by historians and other researchers from the humanities" (90). In the meantime, they report to have 8,347,062 word forms, 119,595 lemmas and 104,905 superlemmas (these are lemmas which cover a certain word in its different varieties) in their Collex.LA [Eger et al, 2015].

⁴ A technique which enables a representation of words as vectors of real numbers in a space whose dimensionality is low relative to the vocabulary size.

1.3. General trends, remaining problems

The preceding survey demonstrates that for lemmatization and part-of-speech tagging we have the following annotated data at our disposal: the Index Thomisticus Treebank, the Latin Dependency Treebank, the PROIEL data and the Capitularia corpus. All of these annotated corpora offer at least a lemma, coarse PoS tags and a fine-grained morphological analysis. Interestingly, three trends appear from the state-of-the-art survey.

(1) Firstly, the automatic annotation of Latin texts has been moving away from semi-automated, rule-based approaches (e.g. PROIEL, LASLA, CHLT LemLat) to data-driven machine learning techniques (e.g. the TreeTagger in LatinISE and CompHistSem). In general, older approaches were strongly dependent on static lexica, which for each word form would exhaustively list all potential morphological analyses, e.g. in the form of tag-lemma pairs. In the case of ambiguity, a statistically trained part-of-speech tagger would be used to later single out the best option. First of all, such a lexicon-based lemmatization approach has the disadvantage that it is in principle unable to correctly lemmatize out-of-vocabulary words, which are not covered in the available lexica. In the case of medieval texts, orthographic variation renders this problem even more acute. Moreover, lexicon-based strategies are very susceptible to the problem of error percolation: if the trained tagger predicts the wrong PoS tag, it becomes less likely that the correct lemma will be selected. The CompHistSem project leads the way in this respect, showing that statistical lemmatization techniques offer an interesting, and perhaps even more robust, alternative to traditional lexicon-based approaches.
(2) Secondly, CompHistSem's latest results demonstrate that taggers which include distributed word representations (so-called "embeddings", see infra) are generally superior to previous approaches. This observation will prove relevant in the next section, since our architecture makes use of a similar representation strategy.

(3) Very few systems have attempted to learn the tasks of lemmatization and PoS-tagging in an integrated fashion.⁵ Most systems continue to learn both tasks independently, although some systems make use of cascaded taggers, where the output of e.g. the PoS-tagger is subsequently fed as input to the lemmatizer. Nevertheless, previous research has clearly demonstrated that both tasks might mutually inform each other [Toutanova et al, 2009].

II AN INTEGRATED ARCHITECTURE

2.1. Introduction to architectural set-up

In this section, we describe our attempt at an integrated architecture which can be used for the automated sequence tagging of medieval Latin at several levels, e.g. combined lemmatization and PoS-tagging. This architecture is comparable in nature to other sequence taggers, such as Morfette [Chrupała et al, 2008; Chrupała 2008]. Our architecture is in principle language-independent and could easily be applied to other languages and corpora. While this section is restricted to a complete, but high-level description, minor details of the architecture and training procedure can be consulted in the code repository which is associated with this paper.⁶ A graphical depiction of our complete model is given in Fig. 1. The overall idea behind the architecture is simple. We first create two 'subnets' that act as encoders: one subnet is used to model a particular focus token (the token which we would like to tag), and a second subnet serves to model the lexical context surrounding the focus token.
The result of these two 'encoding' subnets is joined into a single representation which is then fed to two other 'decoding' networks: one which will generate the lemma, and another which will predict the PoS tag.

⁵ Note that our notion of the integration of these tasks differs from what others have called the 'joint' learning of complex part-of-speech tags.

⁶ https://github.com/mikekestemont/pandora

2.2 One-hot word representation

Latin is a highly inflected language. In order to arrive at a good model for individual words, it is vital to take into account morphemic information at the subword level. We make use of recent advances in the field of "deep" representation learning [LeCun et al, 2015; Bengio et al, 2013], where it has been demonstrated recently that (even longer) pieces of text can be efficiently modeled from the raw character level upwards [Chrupała 2014; Zhang et al, 2015; Bagnall 2015; Kim et al, 2016]. We therefore present individual words to the network using a simple matrix representation as follows: each row represents a character, and each column represents the respective character position in the word (cf. [Zhang et al, 2015]). We set the number of columns to be the length of the longest word in the training material: longer words at test time are truncated to this fixed length, and shorter words are padded with all-zero columns. The cells are populated with binary 'one hot' values, indicating the presence or absence of a character in a specific position in the word. A simplified example of this representation is offered below in Table 1 (for the word aliquis). All tokens are lowercased before this conversion, in order to limit the size of the character vocabulary.
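The character matrix described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the code from the associated repository: the function name `encode_token`, the six-letter toy alphabet and the choice to leave characters outside the alphabet as all-zero columns are assumptions.

```python
def encode_token(token, alphabet, max_len):
    """Return a len(alphabet) x max_len matrix of 0/1 values for one token."""
    token = token.lower()[:max_len]            # lowercase, truncate long words
    row_of = {ch: row for row, ch in enumerate(alphabet)}
    matrix = [[0] * max_len for _ in alphabet]
    for pos, ch in enumerate(token):
        if ch in row_of:                       # unknown characters stay all-zero (assumption)
            matrix[row_of[ch]][pos] = 1
    return matrix                              # short words keep trailing all-zero columns


alphabet = ["a", "l", "i", "q", "u", "s"]      # toy alphabet covering 'aliquis'
matrix = encode_token("Aliquis", alphabet, max_len=10)
for ch, row in zip(alphabet, matrix):
    print(ch, row)
```

Each printed row corresponds to one character of the alphabet and each column to one position in the word.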
Char/position  1 (a)  2 (l)  3 (i)  4 (q)  5 (u)  6 (i)  7 (s)  8 (-)  9 (-)  10 (-)
a              1      0      0      0      0      0      0      0      0      0
l              0      1      0      0      0      0      0      0      0      0
i              0      0      1      0      0      1      0      0      0      0
q              0      0      0      1      0      0      0      0      0      0
u              0      0      0      0      1      0      0      0      0      0
s              0      0      0      0      0      0      1      0      0      0

Table 1: Example of the character-level representation of an individual focus token (aliquis): this representation encodes the presence of characters (one per row) in successive positions of the word (the columns). Shorter words are padded with all-zero columns.

2.3 Long Short-Term Memory (LSTM)

2.3.1 Advantages to LSTM

Next, we model these matrix representations of words using so-called 'Long Short-Term Memory' (LSTM) layers [Hochreiter et al, 1997; Graves et al, 2013]. LSTM is a powerful type of sequence modeler which currently receives a great deal of attention in the field of representation learning, in the context of natural language processing in particular [LeCun et al, 2015]. This sort of 'recurrent' modeler will iteratively work its way through the successive positions in a time series, such as the character positions in our matrix. At the end of the series, the LSTM is able to output a single, dense vector representation of the entire sequence. LSTMs are interesting sequence modelers, because they arguably capture longer-term dependencies between the information at different positions in the time series. From the point of view of the present task, one could for instance expect the LSTM to develop a sensitivity to the presence of specific morphemes in words, such as word stems or inflectional endings. LSTM layers can be stacked on top of each other, to obtain deeper levels of abstraction. In our experiments, we use stacks of two LSTM layers throughout.
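To make the idea of "reading" the character positions one by one more concrete, the following sketch implements the forward pass of a single, untrained LSTM layer in plain Python. It uses the standard LSTM gate equations; the weight initialisation, the hidden size of 4 and the helper names are assumptions for illustration, and the sketch uses one layer rather than the stack of two used in the experiments.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def make_lstm(n_in, n_hidden, seed=0):
    """Randomly initialise weights for the input, forget, output and cell gates."""
    rng = random.Random(seed)

    def mat(rows, cols):
        return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

    # Per gate: input weights W, recurrent weights U, bias b.
    return {gate: (mat(n_hidden, n_in), mat(n_hidden, n_hidden), [0.0] * n_hidden)
            for gate in "ifog"}


def lstm_encode(columns, params, n_hidden):
    """Iterate over the input vectors and return the final hidden state."""
    h = [0.0] * n_hidden   # hidden state
    c = [0.0] * n_hidden   # cell state (the 'memory')
    for x in columns:
        pre = {}
        for gate, (W, U, b) in params.items():
            pre[gate] = [
                sum(w * xi for w, xi in zip(W[j], x))
                + sum(u * hj for u, hj in zip(U[j], h))
                + b[j]
                for j in range(n_hidden)
            ]
        i = [sigmoid(v) for v in pre["i"]]
        f = [sigmoid(v) for v in pre["f"]]
        o = [sigmoid(v) for v in pre["o"]]
        g = [math.tanh(v) for v in pre["g"]]
        c = [f[j] * c[j] + i[j] * g[j] for j in range(n_hidden)]
        h = [o[j] * math.tanh(c[j]) for j in range(n_hidden)]
    return h


alphabet = "aliqus"
word = "aliquis"
# One one-hot input vector per character position of the word.
columns = [[1.0 if ch == a else 0.0 for a in alphabet] for ch in word]
params = make_lstm(n_in=len(alphabet), n_hidden=4)
vector = lstm_encode(columns, params, n_hidden=4)
print(len(vector), vector)
```

Whatever the length of the word, the result is a single fixed-size vector, which is what allows it to be concatenated with the context representation later on.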
2.3.2 Word embeddings

Apart from this character-level representation, our network architecture has a separate subnet which we use to model the lexical neighbourhood surrounding a focus token for the purpose of contextual disambiguation. We used the series of tokens starting from two words before the focus token up to and including the token following the focus token (the focus token itself included), which is a common contextual parametrization in this sort of sequence tagging [cf. Zavrel et al, 1999]. This part of the network is based on the concept of so-called 'word embeddings' [Baroni et al, 2010; Mikolov et al, 2013; Manning, 2015]. In traditional machine learning approaches, words are represented using their index in a vocabulary: in the case of a vocabulary consisting of 10,000 words, each token would get represented by a vector of 10,000 binary values, one of which would be set to 1, and all others to zero (hence the alternative name 'one-hot encoding'). Such a representation has the disadvantage that it requires word vectors of a considerable dimensionality (e.g. 10,000). Moreover, it is a categorical word representation that judges all words to be equidistant, which is of course a less useful approximation in the case of synonyms or spelling variants. In the case of 'embeddings', tokens are represented by vectors of a much lower dimensionality (e.g. 150), which offer word representations in which the available information is distributed much more evenly over the available units. The general idea is that such word embeddings not only come at a much lower computational cost, but also offer a smoother representation of words, because they are able to reflect, for instance, the closer semantic distance between synonyms.
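Both points made above, the equidistance of one-hot vectors and the four-token context window, can be illustrated with a small sketch. The toy vocabulary, the `_PAD_` symbol used at sentence boundaries and for unknown words, the embedding size of 5 and the function names are all assumptions; the embedding matrix is random and untrained.

```python
import math
import random

vocab = ["_PAD_", "nautae", "poetae", "mensas", "dant", "oratio", "oracio"]
index = {word: i for i, word in enumerate(vocab)}


def one_hot(word):
    vec = [0.0] * len(vocab)
    vec[index[word]] = 1.0
    return vec


def distance(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


# Any two distinct one-hot vectors are equally far apart, so the spelling
# variant 'oracio' is no closer to 'oratio' than an unrelated word is.
d_variant = distance(one_hot("oratio"), one_hot("oracio"))
d_unrelated = distance(one_hot("oratio"), one_hot("dant"))

# A randomly initialised embedding matrix: one dense row per vocabulary item.
rng = random.Random(0)
EMB_DIM = 5  # toy size for readability
embeddings = [[rng.uniform(-1, 1) for _ in range(EMB_DIM)] for _ in vocab]


def context_vector(tokens, position):
    """Concatenate the embeddings at offsets -2, -1, 0 (focus) and +1."""
    vec = []
    for offset in (-2, -1, 0, 1):
        j = position + offset
        word = tokens[j] if 0 <= j < len(tokens) else "_PAD_"
        vec.extend(embeddings[index.get(word, index["_PAD_"])])
    return vec


sentence = ["nautae", "poetae", "mensas", "dant"]
ctx = context_vector(sentence, 0)
print(d_variant == d_unrelated, len(ctx))
```

During training, only the rows of the matrix that were looked up for a given window would receive updates.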
From a computational perspective, learning word embeddings typically involves optimizing a randomly initialized matrix [Levy et al, 2015], which for each vocabulary item holds a fixed-size vector of e.g. 150 dimensions. While such a matrix can quickly grow very large, word embeddings are still very efficient, because for each token only a single vector in the matrix has to be updated each time, leaving the rest of the matrix unaltered. In our network architecture, modelling the context surrounding a focus token thus involves selecting 4 vectors from our embeddings matrix and concatenating these into a single vector.⁷ This yields a model of the focus token, as well as the surrounding context. We can subsequently concatenate both 'encoding' representations into a single 'hidden representation'. The decoding parts of our network will produce the ultimate output for a focus token.

2.3.3 Training an LSTM

As to the lemmatization, we now feed our 'hidden representation' into a second stack of LSTMs, by repeating this representation n times, where n corresponds to the maximum lemma length encountered during training. The task of this 'decoding' LSTM is to produce the correct lemma by generating the required lemma character by character. This is an extremely challenging approach to the problem of lemmatization. Lemmatization was previously approached in a more conventional classification setting: either the lemma was considered an atomic class label [Kestemont et al, 2010] or the lemmatization was solved by predicting an 'edit script' as a class label [Chrupała 2008; Chrupała et al, 2008; Eger et al, 2015], which could be used to convert the input token to its lemma. Instead of having the LSTM stack output a single vector, as was the case for the encoder, we now output a probability distribution over the characters in our alphabet for each character slot in the lemma.
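The input and output side of such a character-level decoder can be sketched as follows. The decoder itself is not implemented here: the per-slot distributions are hand-made stand-ins for what a trained network would emit, and the explicit padding symbol `-`, the toy alphabet and the function names are assumptions for illustration.

```python
def decode_lemma(slot_distributions, alphabet, pad="-"):
    """Pick the most probable character in every slot and strip the padding."""
    chars = []
    for probs in slot_distributions:
        best = max(range(len(alphabet)), key=lambda k: probs[k])
        chars.append(alphabet[best])
    return "".join(chars).rstrip(pad)


alphabet = ["-", "a", "i", "o", "r", "t"]


def peaked(ch, mass=0.9):
    """A distribution that puts most of its probability mass on one character."""
    rest = (1.0 - mass) / (len(alphabet) - 1)
    return [mass if a == ch else rest for a in alphabet]


# Input side: the same hidden vector is presented at every time step.
hidden = [0.2, -0.1, 0.7]                  # stand-in for the encoder output
max_lemma_len = 8                          # n, the longest lemma seen in training
decoder_input = [hidden] * max_lemma_len

# Output side: one distribution over the alphabet per character slot.
slots = [peaked(ch) for ch in "oratio--"]
print(decode_lemma(slots, alphabet))
```

In contrast to an edit-script classifier, nothing in this set-up prevents the decoder from producing a string that was never observed as a lemma during training.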
The lemma is accordingly represented as a character matrix, using the exact same representation method as for the input tokens (cf. Table 1). We borrow the idea of an encoder-decoder LSTM architecture from a seminal paper in the field of Machine Translation which showed that stacks of encoding/decoding LSTMs can be used to transduce sentences from a source language into a target language [Sutskever et al, 2015; Cho et al, 2014]. Here, however, we do not learn to map a series of words in one language to a series of words in another language, but we use it to translate the series of characters in a token to a series of characters representing the corresponding lemma.

The proposed network architecture is 'multi-headed' [Bagnall 2015], in the sense that a single architecture is used to simultaneously solve multiple tasks in an integrated fashion. Apart from the 'lemma-head', we also add a second 'head' to the architecture which aims to predict a PoS tag for a focus token. We use the 'hidden representation' obtained from the encoder and feed it into a stack of two standard dense layers [LeCun et al, 2015]. As is increasingly common in representation learning, we apply dropout to these layers (p=.5), meaning that during training, each time randomly half of the available values in a vector are set to zero [Srivastava, 2014]. As a non-linearity, we use rectified linear units, which set all negative values to zero. Finally, we produce a probability for each PoS label; the resulting vector is normalized using a so-called softmax layer, ensuring that the probabilities sum to one.

⁷ In reality, this vector is then projected onto a more compressed layer, using a standard dense layer.
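The building blocks of the PoS 'head' described above (dense layer, rectified linear units, dropout and softmax) can be sketched in plain Python. This is a simplified, untrained illustration: the layer sizes, the three-label tagset and the random weights are assumptions, and only one hidden dense layer is shown before the output layer.

```python
import math
import random


def dense(x, weights, bias):
    """Fully connected layer: one weighted sum per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x):
    """Rectified linear unit: negative values become zero."""
    return [max(0.0, v) for v in x]


def dropout(x, p, rng):
    """Zero each value with probability p (training time only).

    Real implementations usually also rescale the surviving values;
    that step is left out of this sketch.
    """
    return [0.0 if rng.random() < p else v for v in x]


def softmax(x):
    """Normalise scores into probabilities that sum to one."""
    m = max(x)
    exps = [math.exp(v - m) for v in x]
    total = sum(exps)
    return [e / total for e in exps]


rng = random.Random(1)


def rand_matrix(rows, cols):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


tags = ["N", "V", "ADJ"]                   # hypothetical coarse tagset
hidden = [0.5, -1.2, 0.3, 0.8]             # stand-in for the joint hidden representation
W1, b1 = rand_matrix(6, 4), [0.0] * 6
W2, b2 = rand_matrix(len(tags), 6), [0.0] * len(tags)

h = dropout(relu(dense(hidden, W1, b1)), p=0.5, rng=rng)
probs = softmax(dense(h, W2, b2))
print(dict(zip(tags, probs)))
```

At prediction time the dropout step would be skipped and the tag with the highest probability selected.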
We train this network for a maximum of 15 epochs using an optimization method called 'minibatch gradient descent', with an initial batch size of 100. More specifically, we used the RMSprop update mechanism, which helps networks to converge faster because it keeps track of the recent gradient history of each parameter. After 10 epochs, we would decrease the current learning rate by a factor of three and reduce the initial batch size by a fixed factor, to obtain more fine-grained updates for each batch. Some more specific implementation details: we do not apply any dropout in the recurrent layers as it proved to be detrimental; the recurrent layers use the tanh non-linearity, and all other non-linearities which we tested failed to converge. All recurrent layers and dense layers have a dimensionality of 150, with the exception of the final output layer of the encoding LSTM, which we set to 450. We used a dimensionality of 100 for the embeddings matrix (below, we offer a visualization of one of its optimized versions). We implemented these models using the keras, sklearn, gensim and theano [Bastien et al, 2012] libraries and trained them on an NVIDIA Titan X. Depending on the model's complexity and current batch size, one epoch would take between 600 and 1000 seconds on average.

Figure 1: Graphical representation of the proposed model architecture. The model has two 'encoding' subnets, which model a focus token and the surrounding context: the result is concatenated into a single hidden representation. This representation is fed to two 'head nets': one which aims to generate the target lemma on a character-by-character basis; a second which predicts the PoS tag.

III DATASETS AND EVALUATION METRICS
                 Non-Classicized               Classicized
                 Train     Dev      Test       Train     Dev      Test
Tokens           389,304   43,256   49,018     389,304   43,256   49,018
Unique tokens    38,044    10,353   11,526     38,045    10,353   5.71
Prop. unseen     NA        5.14     5.71       NA        5.14     5.71
Unique lemmas    12,413    4,904    5,256      10,906    4,568    4,837

Table 2: Statistics on the two datasets used in terms of number of words etc: the test set in the non-classicized spelling is identical to the one used by Eger et al. [2015].

3.1 Evaluation specifications

In this paper we evaluate the performance of our models using the traditional accuracy score (i.e. the ratio of correct answers over all answers). As is common in linguistic sequence tagging studies, we make a distinction between known and unknown tokens in the development and test data. "Unknown" tokens are surface tokens which were not encountered verbatim in the training data (which does not say anything about whether the target lemma for that surface form was encountered during training or not). In the training of neural networks, it has become standard to differentiate between a training set, a development set and a test set. The general idea here is that algorithms can be trained on the training data during a number of iterations: after each epoch, the system will gain in performance and can be evaluated on the held-out development data. When the performance of the system on the development data is no longer increasing, this is a sign that the system is overfitting the training data and will not generalize or scale well to unseen data. At this point, one should halt the training procedure (a procedure also known as 'early stopping'). Finally, the system can be evaluated on the actual test data; this testing procedure should be postponed to the very end, to guarantee that researchers have not been optimizing a system in the light of a specific test set. We have used the exact same test data as Eger et al. [2015], whose dataset we will be focusing on. For development data, we have used the final 10% of instances of the remaining data; the first 90% were used as training data. Importantly, while this is a very objective approach to evaluating our system, this division of the data will put our architecture at a slight disadvantage in comparison to previous studies, in the sense that our system will only have been trained on 90% of all the available training data. Thus, our models can be expected to have a slightly worse lexical coverage, which might result in slightly lower scores.

One important aspect of PoS-tagging is the complexity or granularity of the tagset used, which of course has an important impact on the performance of a tagger. In this exploratory paper, we limit our experiments to the simple PoS tags in the dataset, which only distinguish very basic word classes (e.g. N for nouns, V for verbs, etc.).

3.2 Making an additional "classicized" annotation layer in the dataset

One important issue with the original annotation standard used for the Capitularia data can be illustrated using the following example: consider the spelling of the word oracio, which has shifted from the classical oratio as a result of the lenition of the /t/ in medieval times. The current lemmatization standard will map both tokens to two separate lemmas, whereas they might just as well be mapped to the same lemma. For many projects (e.g. semantic or literary analysis), we would like a lemmatizer to collapse both spellings and map both to the same "superlemma", preferably in a uniform, "classical" spelling (for the sake of simplicity).
We have therefore produced an alternative version of the Capitularia corpus, where all the training data’s lemmas were normalized towards the classicized orthography conventionally found in the reference dictionary by Lewis & Short [Lewis and Short, 1879]. Amongst many, some of the more important rules are that both <v> and <u> are retained in their respective distinctions as consonant and vowel (auus or avvs is normalized to avus), <j> disappears for <i> (conjunx is normalized to coniunx), the diphthong <ae> is corrected or recovered where this is necessary (aecclesia to ecclesia, but demon to daemon), <t> is recovered where <c> is inappropriate in classical spelling (rationem instead of racionem), assimilations, especially in the case of prepositional prefixes, are allowed (collabor instead of conlabor), etc. Since many of the tokens in the Capitularia data had not been normalized to a standard spelling, we had to manually correct all deviant lemmas to the Lewis & Short norm, thus creating a resource to train models with classical spellings and lemmas.

Regarding lemmatization conventions, the predominant principle is that all words are converted to their base form, which is the nominative singular for nouns, the nominative masculine singular for pronouns, adjectives and ordinal numbers, and the first person singular for verbs. Some choices are perhaps worth mentioning. For instance, comparatives and superlatives have been reduced to their positive base forms (e.g. maior to magnus), and gerunds and participles to their first person singular verb form. Adverbs retained their original form. Below, we will also report results using this dataset, which can be considered easier in the sense that the set of output lemmas is smaller (see Table 2 for an overview), but more difficult in the sense that the character transduction between tokens and lemmas potentially becomes more complicated in the corrected cases.
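The classicization itself was done by hand, but the flavour of the spelling rules listed above can be illustrated with a small sketch. The code below is not the procedure used for the Capitularia data: it is a hypothetical illustration that proposes one respelling per rule and accepts it only if the result is attested in a reference headword list. The names `REWRITE_RULES`, `LEXICON` and `classicize` are assumptions made for this example, and the toy `LEXICON` merely stands in for the Lewis & Short headwords.

```python
import re

# Hypothetical rewrite rules, one per spelling convention named in the text.
# Each entry: (compiled pattern, replacement, max substitutions; 0 = all).
REWRITE_RULES = [
    (re.compile(r"j"), "i", 0),             # conjunx   -> coniunx
    (re.compile(r"uu|vv"), "vu", 0),        # auus/avvs -> avus
    (re.compile(r"^ae"), "e", 1),           # aecclesia -> ecclesia
    (re.compile(r"e"), "ae", 1),            # demon     -> daemon
    (re.compile(r"ci(?=[aou])"), "ti", 0),  # oracio    -> oratio
    (re.compile(r"^conl"), "coll", 1),      # conlabor  -> collabor
]

# Toy stand-in for a list of classical headwords (assumption, not real data).
LEXICON = {"avus", "coniunx", "ecclesia", "daemon", "oratio", "collabor", "facio"}


def classicize(lemma, lexicon=LEXICON):
    """Return a classical spelling of `lemma` if a single rule yields an attested form."""
    lemma = lemma.lower()
    if lemma in lexicon:
        return lemma  # already classical, e.g. "facio" must not become "fatio"
    for pattern, replacement, count in REWRITE_RULES:
        candidate = pattern.sub(replacement, lemma, count=count)
        if candidate != lemma and candidate in lexicon:
            return candidate
    return lemma  # no attested respelling found: left for manual correction
```

The lexicon check is the important design choice here: rules such as <c> to <t> overgenerate badly when applied blindly, which is one reason why a manual pass against a reference dictionary remains necessary in practice.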
IV RESULTS AND DISCUSSION

4.1 Scores

As to the lemmatization results, our test scores are generally lower than the most successful scores reported by Eger et al. [2015], with a drop of around 1.5-2% in overall accuracy on the test set. This was partially to be expected, given the fact that our training set only represents 90% of theirs, and thus has a slightly worse lexical coverage. Also, the formulation of the lemmatization task as a character-per-character string generation task is more complex, and currently does not seem to outperform more conventional approaches, in particular that of dedicated tools such as LemmaGen. Interestingly, however, our model is not outperformed by the results which Eger et al. [2015] reported for lexicon-based approaches, indicating that a machine learning approach relaxes the overall need for large, corpus-external lexica. Surprisingly, the accuracy scores for all tasks remain relatively low for the training data too, and none of our models reached accuracies over 96% for a particular tagging task, indicating the relative difficulty of the modelling tasks under scrutiny. The results for the ‘classicized lemmas’ version of the data set are generally in the same ballpark as those for the non-classicized data. This is a valuable result, since the string transduction task does in fact become more complex (although the set of output lemmas does shrink).

In this respect, it is worth pointing out that the results for the PoS-tagging task are relatively high and mostly on par with the best corresponding results reported by Eger et al. [2015]. This is somewhat surprising, given the limited training data that was used, as well as the fact that the model is fairly generic and does not include any of the more task-specific bells and whistles which current PoS-taggers typically include.
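The scores discussed in this section rely on the known/unknown distinction defined in Section 3.1. As a minimal sketch of how such a breakdown can be computed (the function name `accuracy_report` and the toy data are assumptions made for illustration, not the evaluation script used for the paper):

```python
def accuracy_report(train_tokens, eval_tokens, gold, predicted):
    """Accuracy (in %) over all, known and unknown evaluation tokens.

    A token counts as 'known' if its surface form occurs verbatim in the
    training data, regardless of whether its target label was seen there.
    """
    known_vocab = set(train_tokens)
    # Each bucket holds [number correct, number of items].
    buckets = {"all": [0, 0], "known": [0, 0], "unknown": [0, 0]}
    for token, g, p in zip(eval_tokens, gold, predicted):
        kind = "known" if token in known_vocab else "unknown"
        for name in ("all", kind):
            buckets[name][0] += int(g == p)
            buckets[name][1] += 1
    return {
        name: (100.0 * correct / total if total else None)
        for name, (correct, total) in buckets.items()
    }


# Toy example: "oracio" does not occur in the training tokens.
train = ["in", "nomine", "domini"]
tokens = ["in", "nomine", "oracio", "domini"]
gold_lemmas = ["in", "nomen", "oratio", "dominus"]
pred_lemmas = ["in", "nomen", "oracio", "dominus"]
report = accuracy_report(train, tokens, gold_lemmas, pred_lemmas)
```

In this toy case the single unknown token is lemmatized wrongly, so the overall accuracy of 75% hides a perfect score on known tokens and a complete failure on unknown ones, which is the kind of gap the separate columns in the result tables make visible.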
Modern PoS-taggers often implement the recently predicted PoS tags of previous words as an additional feature to help disambiguate the current focus token. We did not include such features in our model, because they are not trivial to implement using a mini-batch training method. Nevertheless, our results suggest that our network produces excellent tagging results for the PoS labels. In all likelihood, this is due to the inclusion of distributed word embeddings, which have advanced the state of the art across multiple NLP tasks [Manning, 2015]. Note that in our implementation, we first ‘pretrain’ a conventional word embeddings model on the training data, using a popular implementation of word2vec’s skipgram algorithm [Mikolov et al, 2013]. The fact that this pretraining data set is much smaller in size than the one used by Eger et al. [2015], i.e. the whole Patrologia Latina, did not seem to pose a serious disadvantage. We used the resulting embeddings matrix to initialize the network, which is a cheap method to speed up convergence. Importantly, our word embeddings are dynamic: the corresponding weight matrix is further optimized during the training process in the light of a specific task. Arguably, this is why our word embeddings approach is still on par with the approach reported by Eger et al. [2015], where the word embeddings are added as a static feature, although they are trained on a much larger dataset. This creates interesting perspectives for future research. Below, we include a visualization of the word embeddings after training, using the popular t-SNE (t-distributed stochastic neighbor embedding) algorithm [Van der Maaten et al, 2008].
As the visualization shows, the model seems to learn useful representations of high-level word classes (e.g. prepositions form a tight cluster in light blue: ex, in, pro, …) but also collocational patterns (nostro tempore, in light green).

For the integrated learning experiment, the results are curiously mixed: in some respects, the tasks do seem to inform each other. The PoS results, for instance, are higher in the case of the integrated approach, which suggests that the PoS-tagging is helped by the information which is being backpropagated by the lemmatization-specific components. Surprisingly, however, this is not the case for the lemmatization scores, which are actually lower in the integrated experiments. This is especially true for the unknown word scores. We hypothesize that the successful lemmatization of unknown words makes use of the surplus capacity in the hidden representation, i.e. the capacity which is not strictly needed to predict the known word lemmas. In the integrated architecture, the PoS-tagger will require more information from the hidden representation, putting pressure on this surplus capacity. This suggests that both tasks are to some extent competing for resources in the network, and further research into the matter is required.

Figure 2: A typical visualization of the word embeddings for the set of the 500 most frequent tokens in the training data after 15 epochs of optimization. A conventional agglomerative cluster analysis was run on the data points in the scatterplot to identify 8 word clusters, which were coloured accordingly as a reading aid. These results are for the classicized corpus (integrated task of lemmatization and simple PoS tag prediction).

4.2 Output evaluation

As to an analysis of the errors output by the LSTM, one of the most recurrent was the intrusion of unwanted consonants or vowels. This is a predictable problem, since we generated the lemmas in a character-by-character fashion. Some false lemmatization results could perhaps best be described as a kind of “computational hypercorrection”: the tagger attempts to solve a problem (i.e. set straight an orthographical variation) where it is in fact unnecessary. This is true for the normalization of praesentaliter to praesintaliter, in which a correction of the <e> to the <i> is observed, which we would only have expected with a token such as quolebet. Sometimes the tagger seemed sensitive to an orthographic problem but drew wrong conclusions in solving it, which is the case for ymnus being normalized to omnis instead of hymnus. Another typical problem is that proper names are not recognized as such, but as a different PoS, and are consequently “normalized” to an unrecognisable form. In general, we have noticed that this intrusion of consonants and vowels sometimes causes the fabrication of a lemma which is still quite far off from the lemma we wanted to predict, such as the lemmatization of intromissi to intromittu, or lapidem to lapid.

Another interesting error was that pluribus was on some rare occasions lemmatized to multus, which indicates that the word embeddings in our model have struck a connection between two words that have semantic equivalence. This noise provides the pointers which we will need for solving this problem in future endeavours. Interestingly, a lot of the lemmatization errors which were eventually made involve only small differences at the character level near the end of the lemma, which was in some ways to be expected since we generated the lemma left-to-right.
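The observation that many errors sit near the end of the lemma can be quantified with a very simple diagnostic. The sketch below is an illustration under stated assumptions rather than the analysis actually carried out: the helper names `first_mismatch` and `share_of_late_errors` are hypothetical, and the gold lemmas intromitto, lapis and praesentaliter are assumed here, since the text only names the faulty outputs.

```python
def first_mismatch(predicted, gold):
    """Index of the first differing character, or None if the strings match."""
    if predicted == gold:
        return None
    for i, (p, g) in enumerate(zip(predicted, gold)):
        if p != g:
            return i
    # One string is a proper prefix of the other.
    return min(len(predicted), len(gold))


def share_of_late_errors(pairs, tail=2):
    """Fraction of wrong predictions whose first error falls within
    the last `tail` characters of the gold lemma."""
    positions = [(first_mismatch(p, g), len(g)) for p, g in pairs if p != g]
    if not positions:
        return 0.0
    late = sum(1 for index, length in positions if index >= length - tail)
    return late / len(positions)


# (predicted lemma, gold lemma); gold forms are assumptions, see lead-in.
ERRORS = [
    ("intromittu", "intromitto"),
    ("lapid", "lapis"),
    ("omnis", "hymnus"),
    ("praesintaliter", "praesentaliter"),
]
late_share = share_of_late_errors(ERRORS)
```

On these four examples, the first two errors start in the final two characters of the gold lemma, whereas the ymnus and praesentaliter cases go wrong much earlier; a diagnostic of this kind separates suffix-level slips from genuine orthographic confusion.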
Although some minor postprocessing of these lemma-final errors might already be very helpful, the left-to-right error pattern suggests that the application of ‘bidirectional’ recurrent networks might be a valuable direction for future research [Graves and Schmidhuber, 2005].

| Task | Train: All | Dev: All | Dev: Kno | Dev: Unk | Test: All | Test: Kno | Test: Unk |
|---|---|---|---|---|---|---|---|
| Lemma | 95.08 | 93.54 | 95.73 | 53.25 | 93.16 | 95.74 | 50.58 |
| PoS | 95.14 | 94.16 | 95.03 | 78.04 | 93.97 | 95.14 | 74.81 |
| Lemma / PoS | 92.09 / 95.59 | 91.03 / 94.50 | 93.53 / 95.34 | 44.85 / 78.98 | 90.54 / 94.44 | 93.57 / 40.37 | 40.37 / 75.63 |

Table 3: Results (in accuracy) for the original, non-classicized lemmas in the Capitularia dataset [Eger et al, 2015]. Results are shown for the train, development and test set, for all words, as well as for the known and unknown words separately.

| Task | Train: All | Dev: All | Dev: Kno | Dev: Unk | Test: All | Test: Kno | Test: Unk |
|---|---|---|---|---|---|---|---|
| Lemma | 95.40 | 93.91 | 96.22 | 51.27 | 93.57 | 96.27 | 48.91 |
| Lemma / PoS | 92.65 / 95.70 | 91.43 / 94.58 | 93.92 / 95.43 | 45.71 / 78.94 | 91.19 / 94.47 | 94.17 / 95.63 | 41.83 / 75.34 |

Table 4: Results (in accuracy) for the Capitularia dataset with ‘classicized’ lemmas [Eger et al, 2015]. Results are shown for the train, development and test set, for all words, as well as for the known and unknown words separately.

V CONCLUSION

This paper has presented an attempt to jointly learn two sequence tagging tasks for medieval Latin: lemmatization and PoS-tagging. These tasks are traditionally solved using a cascaded approach, which we bypassed by integrating both tasks in a single model. As a model, we have proposed a novel machine learning approach, based upon recent advances in deep representation learning using neural networks. When trained on both tasks separately, our model yields acceptable scores, which are on par with previously reported studies.
Interestingly, our approach too is lexicon-independent, which places our results in line with previous studies which have moved away from lexicon-based approaches. When learned jointly, we observed that the PoS-tagging accuracy increased, but lemmatization accuracy decreased. Further research is required to discover how this competition for resources in the network can be handled in an efficient way. An important novelty of the paper is that we produced an annotation layer in the Capitularia dataset in which we normalized the medieval orthography of the lemma labels used by “classicizing” them. In spite of the increased difficulty of the string transduction task, our model performed reasonably well on this novel data in terms of lemmatization.

Acknowledgements

Our sincerest gratitude goes out towards our colleagues Prof. Dr. Jeroen Deploige and Prof. Dr. Wim Verbaal from Ghent University, whose expertise and feedback were indispensable in the creation of this paper, which is a preliminary step in our joint project “Collaborative Authorship in Twelfth Century Latin Literature: A Stylometric Approach to Gender, Synergy and Authority”, funded by the BOF research fund in Ghent. Without their considerate guidance in respectively the historical and linguistic/literary field, this article would have been impossible. We would moreover like to express our gratitude to the CompHistSem-team that generously provided us with additional training data, and to the anonymous reviewer for his/her indispensable feedback in the process of revision.

References

Bagnall D. Author Identification Using Multi-Headed Recurrent Neural Networks. Conference and Labs of the Evaluation Forum (CLEF). Notebook for PAN. 2015.
Bamman D., Passarotti M., Busa R., Crane G. The Annotation Guidelines of the Latin Dependency Treebank and Index Thomisticus Treebank. The Treatment of Some Syntactic Constructions in Latin. Proceedings of the Sixth International Conference on Language Resources and Evaluation (LREC). 2008:71-76.
Bamman D., Passarotti M., Crane G., Raynaud S. Guidelines for the Syntactic Annotation of Latin Treebanks (v. 1.3.). 2007:1-48. http://nlp.perseus.tufts.edu/syntax/treebank/1.3/docs/guidelines.pdf.
Baroni M., Lenci A. Distributional Memory: A General Framework for Corpus-Based Semantics. Computational Linguistics. 2010;36(4):673-721.
Bastien F., Lamblin P., Pascanu R., Bergstra J., Goodfellow I.J., Bergeron A., Bouchard N., Bengio Y. Theano: New Features and Speed Improvements. Deep Learning and Unsupervised Feature Learning. Neural Information Processing Systems Workshop (NIPS). 2012:1-10.
Bengio Y., Courville A., Vincent P. Representation Learning: A Review and New Perspectives. IEEE Transactions on Pattern Analysis and Machine Intelligence. 2013;35(8):1798-1828.
Bozzi A., Cappelli G., Passarotti M., Ruffolo P. Periodic Progress Report: Workpackage 5. Neo-Latin Morphological Analyser. 2002:1-17. http://www.ilc.cnr.it/lemlat/
Cho K., van Merrienboer B., Bahdanau D., Bengio Y. On the Properties of Neural Machine Translation: Encoder-Decoder Approaches. Syntax, Semantics and Structure in Statistical Translation (SSST). 2014;8.
Chrupała G. Normalizing Tweets with Edit Scripts and Recurrent Neural Embeddings. Proceedings of the 52nd Annual Meeting of the Association for Computational Linguistics (Short Papers). 2014;2:680-686.
Chrupała G. Towards a Machine-Learning Architecture for Lexical Functional Grammar Parsing. Chapter 6. PhD Dissertation. Dublin City University, 2008.
Chrupała G., Dinu G., van Genabith J. Learning Morphology with Morfette. Proceedings of the International Conference on Language Resources and Evaluation (LREC). 2008.
Devine A.M., Stephens L.D. Latin Word Order: Structured Meaning and Information. Oxford University Press (Oxford), 2006.
Eger S., Gleim R., Mehler A. Lemmatization and Morphological Tagging in German and Latin: A Comparison and a Survey of the State-of-the-art. Proceedings of the 10th International Conference on Language Resources and Evaluation (LREC). 2016:1507-1513.
Eger S., vor der Brück T., Mehler A. Lexicon-assisted Tagging and Lemmatization in Latin: A Comparison of Six Taggers and Two Lemmatization Methods. Proceedings of the 9th Workshop on Language Technology for Cultural Heritage, Social Sciences, and Humanities (LaTeCH). 2015:105-113.
Franzini G., Franzini E., Büchler M. Historical Text Reuse: What Is It? http://etrap.gcdh.de/?page_id=332.
Graves A., Mohamed A., Hinton G. Speech Recognition with Deep Recurrent Neural Networks. Acoustics, Speech and Signal Processing (ICASSP), 2013 IEEE International Conference. 2013:6645-6649.
Graves A., Schmidhuber J. Framewise Phoneme Classification with Bidirectional LSTM and Other Neural Network Architectures. Neural Networks. 2005;18:602-610.
Haug D.T.T., Jøhndal M.L. Creating a Parallel Treebank of the Old Indo-European Bible Translations. ed. Sporleder C., Ribarov K. Proceedings of the Second Workshop on Language Technology for Cultural Heritage Data (LaTeCH). 2008:27-34.
Hochreiter S., Schmidhuber J. Long Short-Term Memory. Neural Computation. 1997;9(8):1735-1780.
http1 http://www.ilc.cnr.it/lemlat/
http2 http://ilk.uvt.nl/conll/
http3 http://itreebank.marginalia.it/view/download.php
http4 http://proiel.github.io
http5 http://www.quicklatin.com/
http6 http://sites.tufts.edu/perseusupdates/2013/01/17/querying-the-perseus-ancient-greek-and-latin-treebank-data-in-annis/
http7 https://perseusdl.github.io/treebank_data/
http8 http://web.philo.ulg.ac.be/lasla/
http9 http://www.comphistsem.org/home.html
http10 http://www.corpusthomisticum.org
http11 https://www.sketchengine.co.uk
Jurafsky D.S., Martin J.H. Speech and Language Processing. An Introduction to Natural Language Processing, Computational Linguistics and Speech Recognition. Englewood Cliffs, 2000:285-318.
Kestemont M., Daelemans W., De Pauw G. Weigh Your Words – Memory-based Lemmatization for Middle Dutch. Literary and Linguistic Computing (LLC). 2010;25(3):287-301.
Kim Y. et al. Character-Aware Neural Language Models. Proceedings of the Thirtieth AAAI Conference on Artificial Intelligence. 2016;16:2741-2749.
Knowles G., Mohd Don Z. The Notion of a “Lemma”. Headwords, Roots and Lexical Sets. International Journal of Corpus Linguistics. 2004;9:69-81.
LeCun Y., Bengio Y., Hinton G. Deep Learning. Nature. 2015;521:436-444.
Levy O., Goldberg Y., Dagan I. Improving Distributional Similarity with Lessons Learned from Word Embeddings. Transactions of the Association for Computational Linguistics (TACL). 2015;3:211-225.
Lewis C.T., Short C., Andrews E.A., Freund W. A Latin Dictionary, Founded on Andrews' Edition of Freund's Latin Dictionary (LLD). Oxford, Clarendon Press, 1879.
Manning C.D. Computational Linguistics and Deep Learning. Computational Linguistics. 2015;41(4):701-707.
Matjaž J., Mozetič I., Erjavec T., Lavrač N. LemmaGen: Multilingual Lemmatisation with Induced Ripple-Down Rules. Journal of Universal Computer Science. 2010;16:1190-1214.
McGillivray B., Kilgariff A. Tools for Historical Corpus Research, and a Corpus of Latin. New Methods in Historical Corpus Linguistics. ed. Durrell P., Scheible M., Whitt S., Bennett R.J. 2013;3:247-255.
Mehler A., Gleim R., Waltinger U., Diewald N. Time Series of Linguistic Networks in the Patrologia Latina. Gesellschaft für Informatik (GI). 2010;2:586-593.
Mehler A., vor der Brück T., Gleim R., Geelhaar T. Towards a Network Model of the Coreness of Texts: An Experiment in Classifying Latin Texts Using the TTLab Latin Tagger. From Ontology Learning to Automated Text Processing Applications. Text Mining. ed. Bieman C., Mehler A. Springer International Publishing (Switzerland). 2014:87-112.
Mellet S., Purnelle G. Les atouts multiples de la lemmatisation: l’exemple du latin. Journées internationales d’Analyse statistique des Données Textuelles (JADT). 2002;6:529-538.
Mikolov T., Sutskever I., Chen K., Corrado G.S., Dean J. Distributed Representations of Words and Phrases and their Compositionality. Neural Information Processing Systems (NIPS). 2013;26:3111-3119.
Passarotti M. From Syntax to Semantics. First Steps Towards Tectogrammatical Annotation of Latin. Proceedings of the 8th Workshop on Language Technology for Cultural Heritage, Social Sciences, and Humanities (LaTeCH). 2014:100-109.
Passarotti M. One Hundred Years Ago. In Memory of Father Roberto Busa SJ. Proceedings of the Third Workshop on Annotation of Corpora for Research in the Humanities. ed. Mambrini F. et al. Sofia, 2013:15-24.
Passarotti M. LEMLAT. Uno strumento per la lemmatizzazione morfologica automatica del latino. From Manuscript to Digital Text. Problems of Interpretation and Markup. Proceedings of the Colloquium (Bologna, June 12th 2003). ed. Citti F., Del Vecchio T. 2007:107-128.
Passarotti M., Dell’Orletta F. Improvements in Parsing the Index Thomisticus Treebank. Revision, Combination and a Feature Model for Medieval Latin. Proceedings of the International Conference on Language Resources and Evaluation (LREC). 2010;(17-23):1964-1971.
Piotrowski M. Natural Language Processing for Historical Texts. Morgan & Claypool Publishers, 2012.
Rigg A.G. Orthography and Pronunciation. Medieval Latin: An Introduction and Bibliographical Guide. ed. Mantello F.A.C., Rigg A.G. The Catholic University of America Press (Washington, D.C.), 1996:79-83.
Schinke R., Greengrass M., Robertson A.M., Willett P. A Stemming Algorithm for Latin Text Databases. Journal of Documentation. 1996;52:172-187.
Srivastava N., Hinton G., Krizhevsky A., Sutskever I., Salakhutdinov R. Dropout: A Simple Way to Prevent Neural Networks from Overfitting. Journal of Machine Learning Research. 2014;15:1929-1958.
Sutskever I., Vinyals O., Le V.Q. Sequence to Sequence Learning with Neural Networks. NIPS (Neural Information Processing Systems). 2014:3104-3112.
Toutanova K., Cherry C. A Global Model for Joint Lemmatization and Part-of-Speech Prediction. Proceedings of the Joint Conference of the 47th Annual Meeting of the ACL and the 4th International Joint Conference on Natural Language Processing of the AFNLP. 2009;1:486-494.
van der Maaten L.J.P., Hinton G.E. Visualizing High-Dimensional Data Using t-SNE. Journal of Machine Learning Research. 2008;9:2579-2605.
Zavrel J., Daelemans W. Recent Advances in Memory-Based Part-of-Speech Tagging. Actas del VI Simposio Internacional de Comunicacion Social. 1999:590-957.
Zhang X., Zhao J., Lecun Y. Character-level Convolutional Networks for Text Classification. Neural Information Processing Systems (NIPS). 2015;28:1-9.

Comments & Academic Discussion

Loading comments...

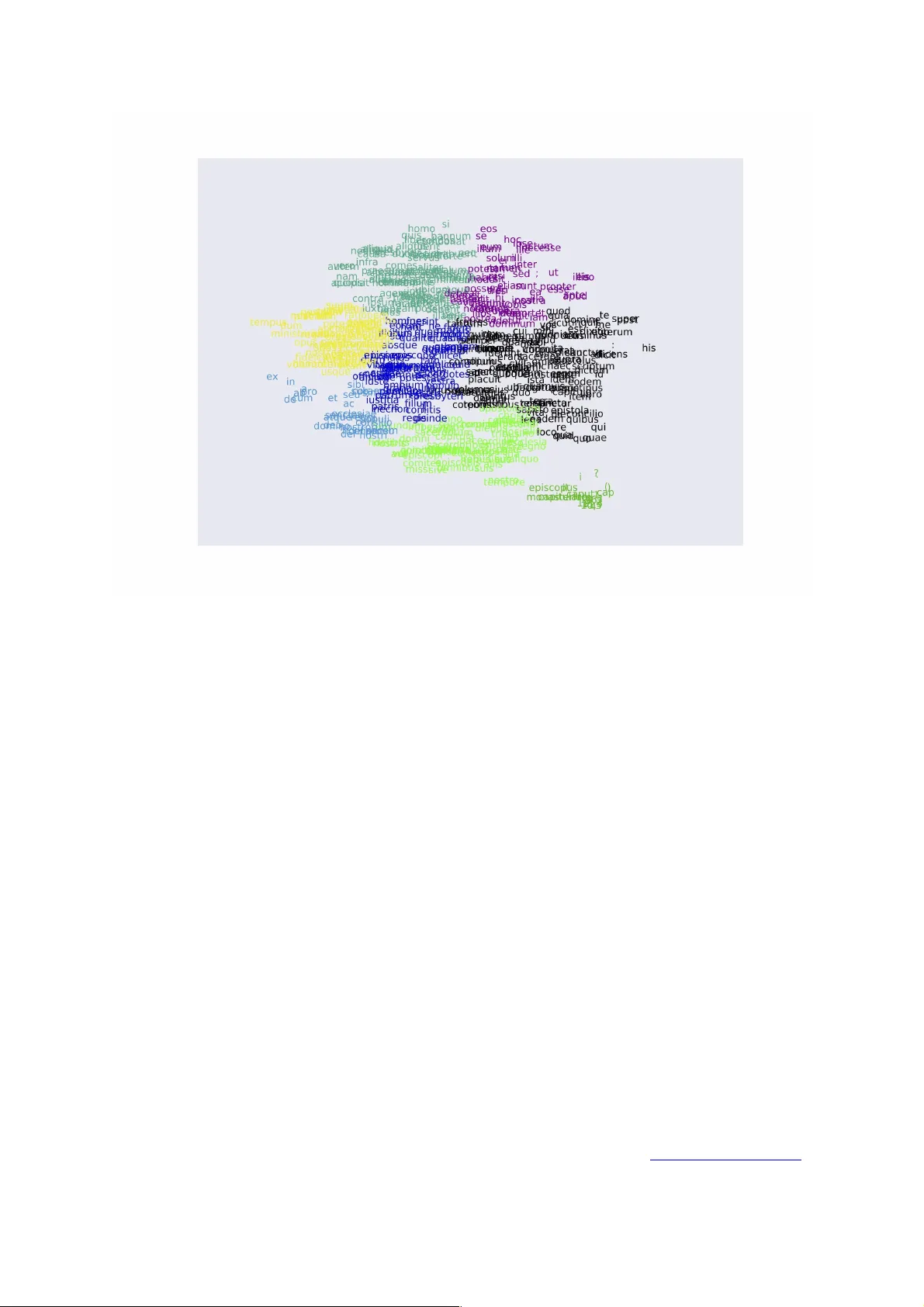

Leave a Comment