Enforcing public data archiving policies in academic publishing: A study of ecology journals

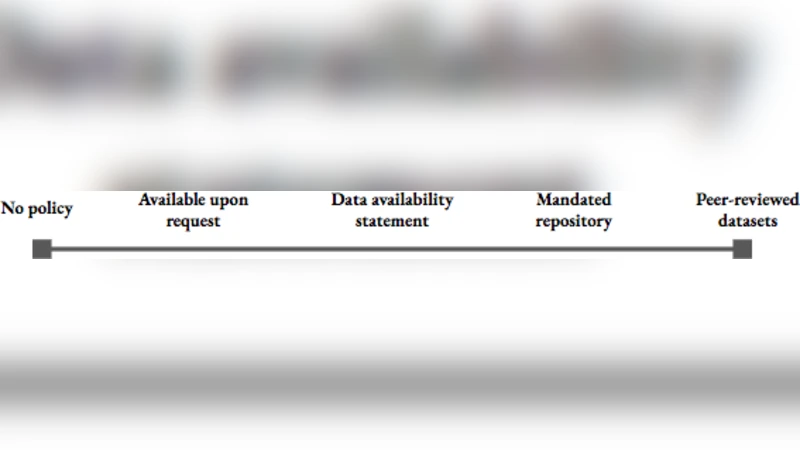

To improve the quality and efficiency of research, groups within the scientific community seek to exploit the value of data sharing. Funders, institutions, and specialist organizations are developing and implementing strategies to encourage or mandate data sharing within and across disciplines, with varying degrees of success. Academic journals in ecology and evolution have adopted several types of public data archiving policies requiring authors to make data underlying scholarly manuscripts freely available. Yet anecdotes from the community and studies evaluating data availability suggest that these policies have not achieved the desired effects, in terms of either the quantity or the quality of available datasets. We conducted a qualitative, interview-based study with journal editorial staff and other stakeholders in the academic publishing process to examine how journals enforce data archiving policies. We specifically sought to establish whom editors and other stakeholders perceive as responsible for ensuring data completeness and quality in the peer review process. Our analysis revealed little consensus on how data archiving policies should be enforced and who should hold authors accountable for dataset submissions. Themes in interviewee responses included hopefulness that reviewers would take the initiative to review datasets and trust in authors to ensure the completeness and quality of their datasets. We highlight problematic aspects of these thematic responses and offer potential starting points for improvement of the public data archiving process.

💡 Research Summary

The paper investigates how public data‑archiving policies adopted by ecology and evolution journals are actually enforced in practice. While funding agencies, institutions, and professional societies have promoted data sharing as a means to improve research quality and efficiency, anecdotal evidence and prior studies suggest that the mere existence of journal policies does not guarantee that datasets are deposited, are complete, or meet quality standards. To explore the enforcement mechanisms and the perceived responsibilities for data completeness and quality, the authors conducted a qualitative, interview‑based study with editorial staff and other stakeholders involved in the publishing process.

A purposive sample of twelve high‑impact ecology journals was selected based on their stated data‑archiving policies (mandatory, recommended, or absent). From these journals, 27 participants were recruited, including editors‑in‑chief, associate editors, data editors, publishing managers, and a few peer reviewers. Semi‑structured interviews lasting roughly 45 minutes each were transcribed and analyzed using thematic coding in NVivo. Five overarching themes emerged: (1) awareness and interpretation of the journal's data policy, (2) operational procedures for handling data submissions, (3) attribution of responsibility for data verification, (4) expectations of reviewer involvement, and (5) criteria used (or not used) to assess dataset completeness and quality.

The analysis revealed a striking lack of consensus across journals and roles. Some editors view the policy as a strong recommendation, leaving it to authors to decide what to share; others treat it as a strict condition for acceptance. Operationally, most journals rely on manual email exchanges; automated checks linking manuscript submission systems to repositories are rare. Responsibility for data verification is split: roughly 40% of interviewees expect reviewers to examine the data, but most acknowledge that reviewers lack the time, expertise, and incentives to do so. The majority of participants place the primary burden on authors, expecting them to self‑audit their datasets before submission, while editorial staff typically confirm only that a link to a repository exists. Because explicit quality‑assessment guidelines are absent, issues such as metadata completeness, file‑format standardization, and reproducibility checks are inconsistently addressed.

These findings point to two systemic problems: a “responsibility‑avoidance culture” where no party feels fully accountable, and a “trust‑based expectation” that authors will provide adequate data without verification. The lack of detailed procedural guidance in policy documents, combined with limited integration between journal platforms and data repositories, hampers consistent enforcement.

To move beyond these shortcomings, the authors propose several concrete interventions. First, journals should appoint dedicated data editors or data curators whose explicit role is to evaluate dataset completeness, metadata quality, and compliance with repository standards. Second, reviewer involvement with data should be made optional but incentivized—through training modules, recognition badges, or modest compensation—to ensure that those who have the expertise can contribute meaningfully. Third, a mandatory data‑submission checklist, aligned with community metadata standards such as the Ecological Metadata Language (EML), should be embedded in the manuscript submission workflow, with automated validation tools that flag missing fields or non‑standard file types. Fourth, policy statements must be revised to clearly delineate who is responsible (author, reviewer, editor, data curator) at each stage of the review process, what specific checks are required, and what consequences follow non‑compliance.
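As a rough illustration of the third intervention, the automated part of such a checklist could look like the minimal sketch below. Everything in it is an assumption made for illustration: the `Submission` type, the `validate_submission` function, the required field names (loosely inspired by common EML elements, not an official EML profile), and the allow-list of file extensions are all hypothetical and do not come from the paper or from any journal's actual workflow.

```python
from dataclasses import dataclass, field
from pathlib import PurePosixPath

# Hypothetical checklist fields, loosely inspired by common EML elements.
# This is NOT a complete or official EML profile.
REQUIRED_FIELDS = (
    "title",
    "creator",
    "contact",
    "abstract",
    "intellectual_rights",
    "methods",
)

# Hypothetical allow-list of open, non-proprietary file extensions.
ALLOWED_EXTENSIONS = {".csv", ".tsv", ".txt", ".json", ".xml"}


@dataclass
class Submission:
    """Illustrative stand-in for what a submission system might collect."""
    metadata: dict
    files: list = field(default_factory=list)


def validate_submission(sub):
    """Return a list of human-readable flags; an empty list means no flags."""
    problems = []

    # Flag metadata fields that are absent or contain only whitespace.
    for name in REQUIRED_FIELDS:
        value = sub.metadata.get(name)
        if value is None or not str(value).strip():
            problems.append(f"missing metadata field: {name}")

    # Flag submissions that list no data files at all.
    if not sub.files:
        problems.append("no data files listed")

    # Flag files whose extension is not on the allow-list.
    for filename in sub.files:
        ext = PurePosixPath(filename).suffix.lower()
        if ext not in ALLOWED_EXTENSIONS:
            problems.append(f"non-standard file type: {filename}")

    return problems


if __name__ == "__main__":
    example = Submission(
        metadata={"title": "Plot survey", "creator": "A. Author", "contact": ""},
        files=["counts.csv", "notes.docx"],
    )
    for flag in validate_submission(example):
        print(flag)
```

A check of this kind only confirms that fields are present and that file types are on a list; it says nothing about whether the data are complete or correct, which is the part the authors assign to a data editor or curator.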

By implementing these measures, the authors argue that journals can significantly improve both the quantity and the quality of publicly archived ecological data, thereby enhancing data reusability, facilitating reproducible research, and ultimately advancing the scientific enterprise.
