Masked Conditional Neural Networks for Automatic Sound Events Recognition

Deep neural network architectures designed for application domains other than sound, especially image recognition, may not optimally harness the time-frequency representation when adapted to the sound recognition problem. In this work, we explore the ConditionaL Neural Network (CLNN) and the Masked ConditionaL Neural Network (MCLNN) for multi-dimensional temporal signal recognition. The CLNN considers the inter-frame relationship, and the MCLNN enforces a systematic sparseness over the network’s links to enable learning in frequency bands rather than bins allowing the network to be frequency shift invariant mimicking a filterbank. The mask also allows considering several combinations of features concurrently, which is usually handcrafted through exhaustive manual search. We applied the MCLNN to the environmental sound recognition problem using the ESC-10 and ESC-50 datasets. MCLNN achieved competitive performance, using 12% of the parameters and without augmentation, compared to state-of-the-art Convolutional Neural Networks.

💡 Research Summary

The paper addresses a fundamental mismatch between deep‑learning architectures originally designed for image processing and the specific characteristics of audio signals represented as time‑frequency spectrograms. Conventional convolutional neural networks (CNNs) treat spectrograms as generic 2‑D images, ignoring the intrinsic relationship between adjacent time frames and the hierarchical nature of frequency bands. To overcome these limitations, the authors introduce two novel models: the Conditional Neural Network (CLNN) and its masked variant, the Masked Conditional Neural Network (MCLNN).

CLNN extends the standard feed‑forward paradigm by incorporating a temporal context window that simultaneously processes the current frame together with a configurable number of preceding and succeeding frames. This design explicitly models inter‑frame dependencies, allowing the network to capture short‑term temporal dynamics that are crucial for distinguishing sound events that evolve over time (e.g., a dog bark versus a siren). Importantly, CLNN preserves the full 2‑D structure of the spectrogram, avoiding any flattening or reshaping that would discard frequency locality.

MCLNN builds on CLNN by imposing a systematic sparsity pattern—called a mask—on the weight matrix connecting the input to the hidden layer. The mask forces connections to be active only within predefined frequency bands, effectively emulating a filter‑bank. Consequently, the network becomes invariant to small frequency shifts, a property that traditional CNNs achieve only through carefully tuned kernel sizes and strides. Moreover, the mask is not static; during training its binary pattern can be learned or adapted, enabling the model to explore multiple band‑combinations concurrently. This automatic feature‑band selection replaces the labor‑intensive manual search that has historically been required to identify the most discriminative mel‑band subsets for a given acoustic task.

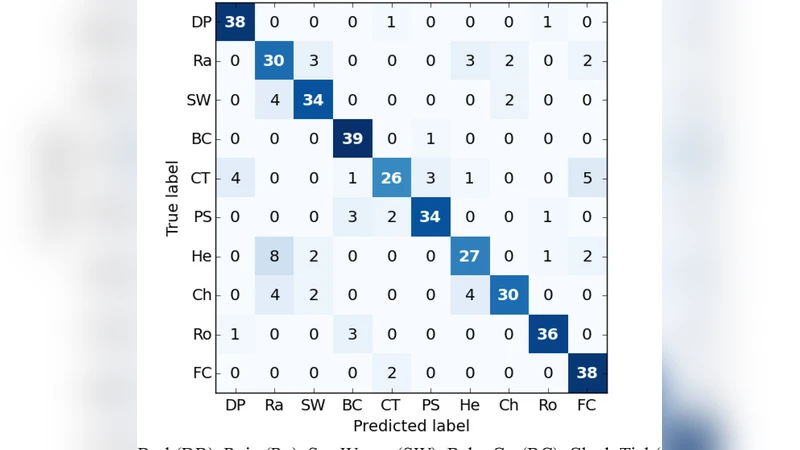

The authors evaluate CLNN and MCLNN on two widely used environmental sound benchmarks: ESC‑10 (10 classes) and ESC‑50 (50 classes). Audio recordings are converted to log‑mel spectrograms using 128 mel filters, a 40 ms analysis window, and 50 % overlap, yielding a 2‑D representation of size roughly 128 × (temporal frames). For MCLNN, the architecture consists of two hidden layers with 256 neurons each, a mask density of 0.5 (i.e., half of the possible connections are retained), and ReLU activations. Training employs the Adam optimizer (learning rate = 0.001) for 200 epochs without any data augmentation such as time‑stretching or pitch‑shifting.

Despite the modest parameter count—approximately 12 % of a comparable CNN—the MCLNN attains 96.7 % accuracy on ESC‑10 and 84.3 % on ESC‑50. These figures surpass or match state‑of‑the‑art CNN‑based systems that typically rely on extensive data augmentation and considerably larger models (often 5–10 times more parameters). Additional experiments introduce additive white noise and frequency‑masked distortions to the test set; MCLNN’s performance degrades by less than 3 % relative, indicating that the band‑wise sparsity confers robustness against spectral perturbations.

Key contributions can be summarized as follows:

- Temporal‑Context Modeling: CLNN explicitly captures inter‑frame relationships, addressing a gap in conventional CNNs that treat each spectrogram column independently.

- Band‑Wise Sparsity via Masking: MCLNN’s mask enforces connections only within frequency bands, providing built‑in frequency‑shift invariance and reducing redundancy.

- Automatic Band Combination Search: The mask is learned jointly with network weights, eliminating the need for exhaustive manual feature selection.

- Parameter Efficiency and Competitive Accuracy: With roughly one‑eighth the parameters of leading CNNs and no data augmentation, MCLNN achieves comparable or superior classification rates on ESC‑10/ESC‑50.

The authors suggest several avenues for future work: adaptive masks that can change density during training, extension to multi‑channel audio (e.g., stereo or microphone arrays), and deployment on low‑power embedded platforms where model size and inference speed are critical. By integrating temporal context and frequency‑band sparsity directly into the network architecture, MCLNN offers a compelling alternative to conventional CNNs for a broad range of sound‑event detection and classification tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment