Ensuring Data Integrity in Electronic Health Records: A Quality Health Care Implication

An Electronic Health Record (EHR) system must make meaningful, accurate and complete data efficiently available to support improved clinical administration through the development, implementation and optimisation of clinical pathways. Data integrity is therefore the driving force in EHR systems and an essential aspect of service delivery at all levels. However, preserving data integrity in EHR systems has become a major problem because of its consequences for maintaining high standards of patient care. In this paper, we review the impact of data integrity on the use of EHR systems and address the associated issues. We identify and analyse three phases of data integrity in an EHR system. Finally, we present a method to preserve integrity in EHR systems. To analyse and evaluate data integrity, we consider one of the major clinical systems in Australia, which demonstrates the impact on the quality and safety of patient care.

💡 Research Summary

The paper investigates the critical role of data integrity within Electronic Health Record (EHR) systems and its direct impact on patient safety and overall healthcare quality. It begins by defining data integrity not merely as the absence of errors, but as the continuous assurance of consistency, accuracy, and completeness throughout the entire data lifecycle—creation, modification, storage, and transmission. Recognizing that compromised integrity can lead to misdiagnoses, medication errors, and delayed treatment, the authors argue that safeguarding integrity must be a top priority for any health organization.

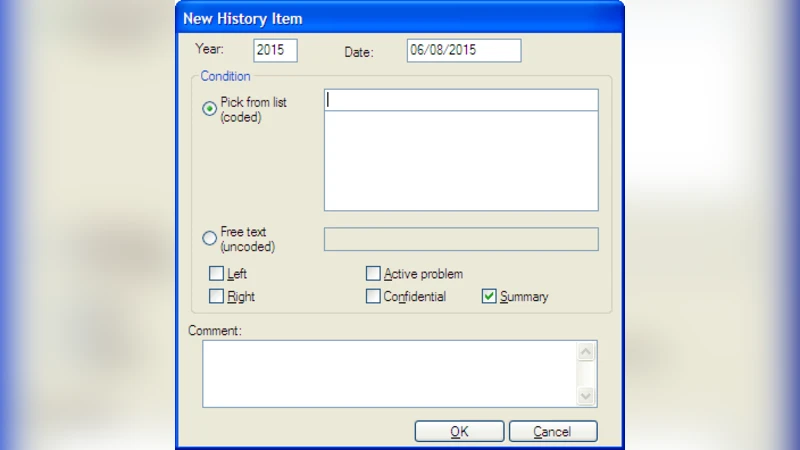

To structure the discussion, the authors divide integrity management into three distinct phases. The first phase—data entry—focuses on user‑interface design, real‑time validation, and the use of standardized clinical terminologies such as SNOMED CT and LOINC. Features like auto‑completion, drop‑down lists, and immediate feedback alerts are recommended to reduce human error, especially in high‑pressure clinical settings where shortcuts are tempting.
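
The entry-time checks described for the first phase can be sketched roughly as follows. This is a minimal illustration, not the paper's implementation: the `Observation` type, the `validate_entry` function, and the `DEMO-*` codes with their ranges are all hypothetical placeholders, not real SNOMED CT or LOINC identifiers. A production system would look codes up in a terminology service instead of a hard-coded table.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# Hypothetical code table: placeholder code -> plausible (low, high) value range.
# These are NOT real LOINC or SNOMED CT codes; they only stand in for one.
ALLOWED_CODES: Dict[str, Tuple[float, float]] = {
    "DEMO-HR": (0.0, 300.0),   # heart rate, beats per minute
    "DEMO-TEMP": (25.0, 45.0), # body temperature, degrees Celsius
}


@dataclass
class Observation:
    patient_id: str
    code: str
    value: Optional[float]


def validate_entry(obs: Observation) -> List[str]:
    """Return human-readable problems; an empty list means the entry passes."""
    errors: List[str] = []
    if not obs.patient_id.strip():
        errors.append("patient_id is required")
    if obs.code not in ALLOWED_CODES:
        # Unknown codes are rejected outright, mirroring a drop-down list
        # that only offers terms from the standard vocabulary.
        errors.append(f"unknown code: {obs.code!r}")
    elif obs.value is None:
        errors.append("value is required")
    else:
        low, high = ALLOWED_CODES[obs.code]
        if not low <= obs.value <= high:
            errors.append(f"value {obs.value} outside range {low}-{high}")
    return errors
```

Returning a list of messages rather than raising on the first problem lets a form show all feedback at once, which fits the immediate-feedback alerts the summary mentions.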

The second phase—data storage and management—emphasizes robust database design. Normalization, primary‑key and foreign‑key constraints, and check constraints are presented as foundational safeguards. Transaction management, rollback mechanisms, and comprehensive audit trails are advocated to handle concurrency issues and enable post‑hoc verification. The authors also stress the need for business‑rule validation at the application layer (e.g., ensuring patient age aligns with age‑specific diagnosis codes) to complement database constraints.
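
As a rough illustration of the storage-layer safeguards above, the sketch below uses Python's built-in `sqlite3` module to combine primary-key, foreign-key, and check constraints with a transaction that rolls back as a unit. The table layout, column names, value ranges, and the `open_store` and `record_batch` helpers are assumptions made for this example; the paper does not specify a schema.

```python
import sqlite3
from typing import Iterable, Tuple

# Hypothetical two-table schema, invented for illustration only.
SCHEMA = """
CREATE TABLE patient (
    patient_id INTEGER PRIMARY KEY,
    birth_year INTEGER NOT NULL CHECK (birth_year BETWEEN 1900 AND 2100)
);
CREATE TABLE encounter (
    encounter_id INTEGER PRIMARY KEY,
    patient_id   INTEGER NOT NULL REFERENCES patient(patient_id),
    systolic_bp  INTEGER CHECK (systolic_bp BETWEEN 30 AND 300)
);
"""


def open_store() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    # SQLite does not enforce foreign keys unless this pragma is enabled.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(SCHEMA)
    return conn


def record_batch(conn: sqlite3.Connection,
                 rows: Iterable[Tuple[int, int]]) -> bool:
    """Insert (patient_id, systolic_bp) rows atomically: all or none."""
    try:
        # The connection context manager commits on success and rolls back
        # the whole transaction if any statement raises.
        with conn:
            conn.executemany(
                "INSERT INTO encounter (patient_id, systolic_bp) VALUES (?, ?)",
                rows,
            )
        return True
    except sqlite3.IntegrityError:
        return False
```

If one row in a batch refers to a nonexistent patient or carries an out-of-range reading, the whole batch is rejected and no partial data remains. Cross-field business rules, such as the age-versus-diagnosis check mentioned above, would still need application-layer code.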

The third phase—data transmission and utilization—covers interoperability standards such as HL7 and FHIR. The paper recommends TLS encryption, digital signatures, and checksum/hash verification to protect data in transit from tampering. It also calls for continuous maintenance of terminology mapping tables and automated mapping validation tools to prevent schema mismatches when exchanging data with external systems.
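
The hash-verification idea for data in transit might look something like the sketch below. It uses a keyed hash (HMAC-SHA256) from Python's standard library as a stand-in: this is not a digital signature in the public-key sense and does not replace TLS. The function names, the shared `key`, and the message fields are hypothetical, and the message is a plain dictionary rather than a real HL7 or FHIR resource.

```python
import hashlib
import hmac
import json
from typing import Any, Dict


def compute_tag(payload: Dict[str, Any], key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding."""
    # Sorting keys and fixing separators gives sender and receiver the same
    # byte string for the same logical content.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hmac.new(key, canonical.encode("utf-8"), hashlib.sha256).hexdigest()


def verify_tag(payload: Dict[str, Any], key: bytes, tag: str) -> bool:
    """Check a received payload against the tag sent alongside it."""
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(compute_tag(payload, key), tag)
```

A receiver that recomputes the tag and finds a mismatch knows the payload changed after it was tagged, though not which field changed. A plain checksum would catch accidental corruption but not deliberate tampering, which is why a keyed hash is assumed here.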

A case study of a major clinical information system used in Australia provides empirical grounding. Log analysis revealed three primary integrity threats: (1) user entry errors (typos, incorrect code selection), (2) legacy data inconsistencies arising from schema changes, and (3) format conversion errors during interface exchanges with ancillary systems. Notably, the fast‑paced environment often led clinicians to bypass validation prompts, amplifying the risk of downstream errors.

To address these challenges, the authors propose an integrated technical and organizational strategy. Technologically, they suggest deploying a real‑time data validation engine that operates across all three phases, coupled with a data‑quality dashboard that visualizes integrity violations. They explore the use of blockchain‑based immutable audit logs to enhance transparency and traceability of data changes. Organizationally, they recommend establishing a data‑governance committee responsible for defining integrity policies, conducting regular staff training on data quality, and implementing systematic audit programs. Clear accountability structures and rapid remediation procedures are also outlined to ensure swift correction of any integrity breaches.
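
The tamper-evidence property behind an immutable audit log can be illustrated with a simple hash chain, sketched below. This is a deliberately simplified stand-in, not a blockchain: there is no distribution or consensus, and all names (`append_entry`, `verify_log`, the event fields) are hypothetical. It only shows how each entry can commit to the one before it so that later edits become detectable.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # assumed starting value for an empty log


def _entry_hash(prev_hash: str, event: Dict[str, Any]) -> str:
    body = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: List[Dict[str, Any]], event: Dict[str, Any]) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "event": event,
        "prev": prev_hash,
        "hash": _entry_hash(prev_hash, event),
    })


def verify_log(log: List[Dict[str, Any]]) -> bool:
    """Recompute every hash in order; any altered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each hash depends on its predecessor, changing an old entry invalidates it and every check that follows, unless the whole chain is recomputed. Guarding against that recomputation is the part a distributed ledger or an externally anchored hash would need to provide.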

In conclusion, the paper asserts that data integrity is the cornerstone of effective EHR systems. Maintaining it requires a holistic approach that intertwines technical safeguards with cultural and procedural reforms. The proposed framework aims to reduce error propagation, improve clinical decision‑making, and ultimately elevate patient outcomes. Future research directions include multi‑site validation of the integrity management model and the development of AI‑driven predictive tools for early detection of data quality issues.
